A Compound Poisson Generator approach to Point-Source Inference in Astrophysics

Authors

Gabriel H. Collin, Nicholas L. Rodd, Tyler Erjavec, Kerstin Perez

Abstract

The identification and description of point sources is one of the oldest problems in astronomy; yet, even today the correct statistical treatment for point sources remains one of the field's hardest problems. For dim or crowded sources, likelihood based inference methods are required to estimate the uncertainty on the characteristics of the source population. In this work, a new parametric likelihood is constructed for this problem using Compound Poisson Generator (CPG) functionals which incorporate instrumental effects from first principles. We demonstrate that the CPG approach exhibits a number of advantages over Non-Poissonian Template Fitting (NPTF) - an existing method - in a series of test scenarios in the context of X-ray astronomy. These demonstrations show that the effect of the point-spread function, effective area, and choice of point-source spatial distribution cannot, generally, be factorised as they are in NPTF, while the new CPG construction is validated in these scenarios. Separately, an examination of the diffuse-flux emission limit is used to show that most simple choices of priors on the standard parameterisation of the population model can result in unexpected biases: when a model comprising both a point-source population and diffuse component is applied to this limit, nearly all observed flux will be assigned to either the population or to the diffuse component. A new parametrisation is presented for these priors which properly estimates the uncertainties in this limit. In this choice of priors, CPG correctly identifies that the fraction of flux assigned to the population model cannot be constrained by the data.

Concepts

The Big Picture

Imagine trying to count fireflies on a foggy night through a smudged lens. Some are bright and easy to spot. Most are dim, blurring into background haze, and some clusters are so crowded you can’t tell if you’re seeing one firefly or five. Now scale that up to X-ray telescopes peering at faint neutron stars and black holes, where you need statistically rigorous answers about how many sources exist and how bright they are.

This is point-source inference: figuring out, from blurry and noisy images, how many distinct objects are out there and what their brightnesses are. When sources are faint and fields are crowded, you can’t just look and count. You need statistical machinery that can extract answers about an entire population of sources from messy, incomplete data.

The reigning method, Non-Poissonian Template Fitting (NPTF), has served the field well. But researchers have grown increasingly concerned that it harbors hidden biases which silently corrupt results.

A team from MIT and UC Berkeley has built a new statistical framework from scratch: the Compound Poisson Generator (CPG) likelihood. It traces the physics of detection step by step and outperforms NPTF in the regimes where astronomy needs it most.

Key Insight: NPTF assumes that telescope blurring, photon collection efficiency, and source positions can each be treated independently, an approximation that breaks down in real X-ray observations. The CPG approach derives the likelihood from first principles, modeling how all these effects interact without approximation.

How It Works

NPTF models a source population statistically. Rather than asking “where is each source?”, it asks “how many sources of each brightness exist?” It expresses this through a differential source-count function (the astronomer’s tally of how many sources exist at each brightness level) and computes the likelihood pixel by pixel across the sky map.

Here’s where it goes wrong. NPTF assumes you can factorize three separate instrumental effects, treating them as completely independent:

- The point-spread function (PSF): how a telescope smears each point of light into a fuzzy blob

- The effective area: how efficiently the telescope collects photons across different parts of the sky

- The spatial distribution of sources

In gamma-ray astronomy, where instruments are relatively uniform, this independence approximation holds. In X-ray astronomy, it doesn’t. The PSF varies across the detector. The effective area changes on scales smaller than a single resolution element. Sources aren’t uniformly distributed.

The CPG approach abandons factorization entirely. It builds the likelihood by following what physically happens when photons from a source population hit a real detector:

- Draw a random number of sources from the population model

- For each source, draw its brightness from the source-count function

- Propagate each photon through the PSF and effective area together, pixel by pixel

- Compute the probability of the observed photon counts given all of the above

The mathematical machinery behind this uses Compound Poisson Generator functionals, objects designed to handle nested randomness: a random number of sources, each emitting a random number of photons. The result is a likelihood that folds in instrumental effects from first principles, never assuming they factor apart.

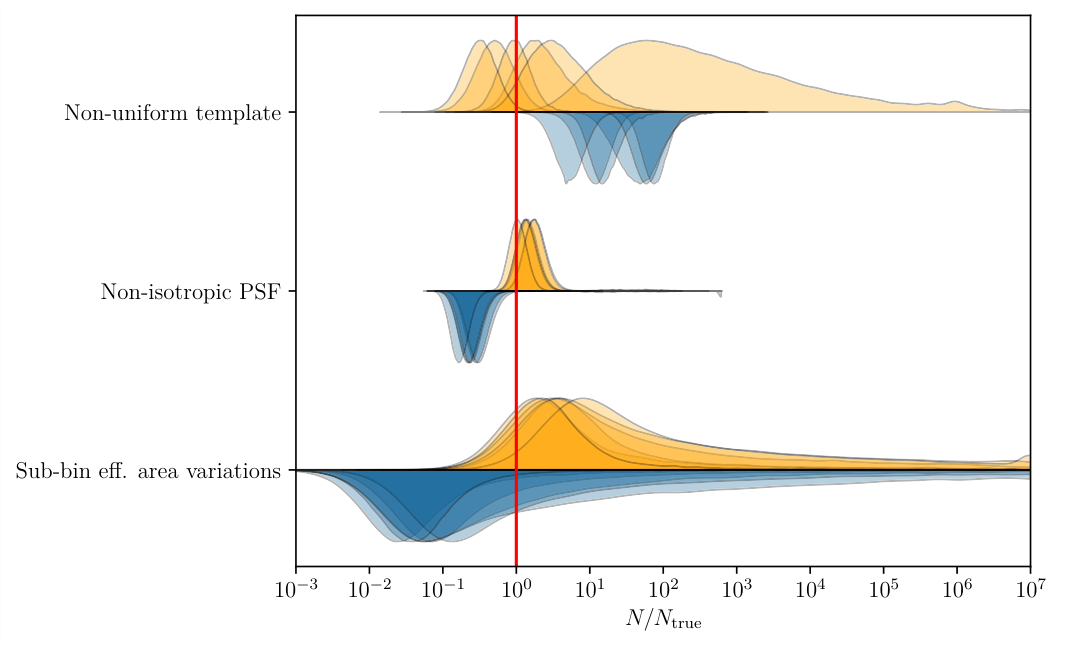

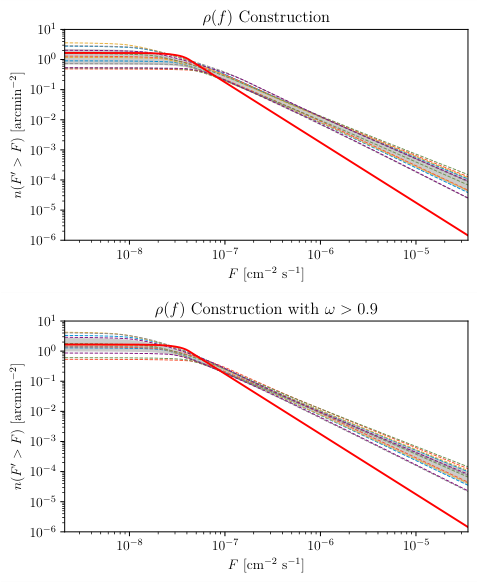

The team validated CPG against three NPTF failure modes: a spatially varying source distribution, an asymmetric PSF, and sub-pixel effective-area fluctuations. In each case, NPTF recovered biased source counts, sometimes off by factors of several. CPG got the true population right every time.

Why It Matters

The paper also uncovers a subtler but equally dangerous issue: prior bias in Bayesian analyses. In Bayesian statistics, a “prior” encodes your assumptions before seeing any data. When you model the sky as a mix of point sources and diffuse emission (a smooth background glow), standard parameterizations behave pathologically in the diffuse-flux limit, the regime where sources are so dim that data can’t distinguish a point-source population from a uniform glow.

In this limit, nearly any “uninformative” prior causes the posterior to pile up at one extreme. The model confidently assigns all observed flux to either point sources or diffuse emission, even when the data have nothing to say about the question.

The fix is a reparameterization. Instead of working directly with the normalization of the source-count function, the authors define a coordinate system that separates total flux from its fractional assignment between populations. With this parameterization, CPG correctly identifies that when the data can’t constrain the split, the posterior stays broad and honest rather than collapsing to spurious certainty.

The point generalizes: even a perfect likelihood can produce misleading results if the prior space is poorly chosen.

The most immediate application is X-ray astronomy, where instruments like NASA’s NuSTAR observe populations of neutron stars, X-ray binaries, and active galactic nuclei. These populations encode real physics: the equation of state of dense matter, the history of black hole growth, the distribution of dark matter annihilation products. Getting source counts right is not a technical footnote; it’s the difference between a discovery and a systematic artifact.

The stakes go further still. NPTF was developed for gamma-ray searches, including contested analyses of the Galactic Center excess, a mysterious surplus of gamma rays from the Milky Way’s center that might signal dark matter annihilation or might trace a population of unresolved millisecond pulsars. CPG’s corrections could shift the interpretation of those results. The authors have made their implementation publicly available, giving the community a tool to reexamine these open questions.

Bottom Line: The Compound Poisson Generator gives X-ray and gamma-ray astronomers a statistically rigorous tool for counting faint sources, correcting systematic errors in the dominant existing method and exposing a hidden prior pathology that has likely affected results across the field.

IAIFI Research Highlights

This work brings advanced probabilistic modeling and Bayesian statistical methods to bear on a fundamental problem in observational astronomy. Principled likelihood construction from first principles outperforms the field-standard approximation.

The CPG framework offers a general approach to hierarchical probabilistic inference with nested randomness, applicable to any domain where aggregated counts are observed from an underlying population through an imperfect instrument.

By correcting biases in point-source inference, CPG sharpens the statistical tools used to detect dark matter signals and measure compact object populations, improving constraints on fundamental physics from astrophysical observations.

The CPG implementation is publicly available, and future applications to gamma-ray Galactic Center analyses could revisit the contested dark matter interpretation; see [arXiv:2104.04529](https://arxiv.org/abs/2104.04529) for the full paper.