A machine-learning pipeline for real-time detection of gravitational waves from compact binary coalescences

Authors

Ethan Marx, William Benoit, Alec Gunny, Rafia Omer, Deep Chatterjee, Ricco C. Venterea, Lauren Wills, Muhammed Saleem, Eric Moreno, Ryan Raikman, Ekaterina Govorkova, Dylan Rankin, Michael W. Coughlin, Philip Harris, Erik Katsavounidis

Abstract

The promise of multi-messenger astronomy relies on the rapid detection of gravitational waves at very low latencies ($\mathcal{O}$(1\,s)) in order to maximize the amount of time available for follow-up observations. In recent years, neural-networks have demonstrated robust non-linear modeling capabilities and millisecond-scale inference at a comparatively small computational footprint, making them an attractive family of algorithms in this context. However, integration of these algorithms into the gravitational-wave astrophysics research ecosystem has proven non-trivial. Here, we present the first fully machine learning-based pipeline for the detection of gravitational waves from compact binary coalescences (CBCs) running in low-latency. We demonstrate this pipeline to have a fraction of the latency of traditional matched filtering search pipelines while achieving state-of-the-art sensitivity to higher-mass stellar binary black holes.

Concepts

The Big Picture

Imagine you’re a surgeon waiting for a transplant organ. Every second the heart is in transit matters. Now imagine the organ is a fleeting ripple in the fabric of spacetime, and your window to catch it before it vanishes is measured in seconds. That’s the challenge facing gravitational-wave astronomers every time two black holes collide somewhere in the universe.

When massive objects merge, they send out gravitational waves, distortions in spacetime that race outward at the speed of light. Detectors like LIGO and Virgo can pick them up, but the science payoff explodes when other telescopes can swing into action immediately afterward.

Some of the most dramatic cosmic collisions involve neutron stars, the dense, city-sized remnants left behind when massive stars explode. These mergers produce gravitational waves, gamma-ray bursts (intense flashes of high-energy radiation), visible light, and X-rays, all at once.

To catch the whole show, astronomers need alerts within about one second of detection. Traditional detection algorithms are too slow. They’re thorough, but they’re not fast.

A team at MIT, LIGO, the University of Minnesota, and several other institutions has now built the first fully machine-learning-based pipeline, called Aframe, capable of detecting gravitational waves from colliding black holes in real time. It runs at a fraction of the latency of conventional methods and matches the sensitivity of the best algorithms in the field.

Key Insight: Aframe is the first end-to-end ML pipeline for gravitational wave detection operating at low latency, achieving millisecond-scale inference while matching state-of-the-art sensitivity for higher-mass binary black hole signals.

How It Works

At its core, Aframe is a neural network that watches two data streams at once, one from LIGO’s Hanford, Washington detector and one from Livingston, Louisiana, and asks a single question: is there a gravitational wave signal hiding in this noise?

The architecture adapts ResNet34 (“Residual Network with 34 layers”), a deep learning design originally built for image recognition, to handle time-series data. Two modifications matter most:

- 1D convolutions replace standard 2D convolutions, processing data that unfolds over time (like a seismograph readout) rather than across a two-dimensional image.

- Group Normalization replaces Batch Normalization. Standard batch normalization tracks statistics across the full training batch, but training batches are loaded with simulated signals while live inference sees mostly noise. Group Normalization computes statistics within smaller channel groups, keeping the network’s behavior consistent between training and deployment.

The pipeline runs in a sliding-window fashion at 4 Hz, evaluating a new window every 0.25 seconds. Each raw output is smoothed with a one-second “top hat” filter (a moving average over a one-second window), converting noisy frame-by-frame scores into a stable detection statistic. Four Hz strikes the right balance: frequent enough to catch transient signals, light enough to keep compute costs manageable.

For live throughput, Aframe uses an inference-as-a-service (IaaS) architecture. The neural network runs as a dedicated, always-on service rather than being called ad hoc. Inference executes on a dedicated server via NVIDIA’s Triton Inference Server and TensorRT, while client processes handle data loading and preprocessing independently, so each component scales on its own. A technique called snapshotting caches overlapping input data server-side, avoiding redundant transfers for unchanged portions of each window.

Training relies on aggressive data augmentation: simulated binary black hole waveforms are injected at varying signal strengths into real LIGO noise, forcing the network to handle the non-stationary, glitchy character of real detector data rather than idealized Gaussian noise. The network trains with binary cross-entropy loss, an Adam optimizer, and a one-cycle learning rate schedule with cosine annealing.

Why It Matters

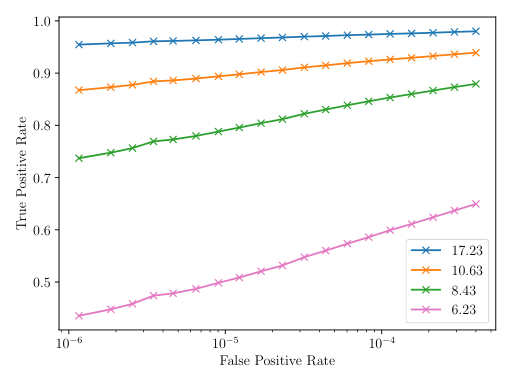

The immediate payoff is speed. Traditional matched filtering pipelines compare incoming data against a vast library of precomputed signal templates, like checking a fingerprint against every entry in a database. They’re exquisitely sensitive but carry significant computational overhead. Aframe delivers comparable sensitivity on higher-mass binary black holes while cutting time-to-alert dramatically.

In multi-messenger astronomy, where gravitational waves are combined with light and other signals to build a complete picture of cosmic events, that speed difference is decisive. Catching a kilonova (the luminous explosion following a neutron star merger) at peak brightness rather than already fading can make or break the science from a single event.

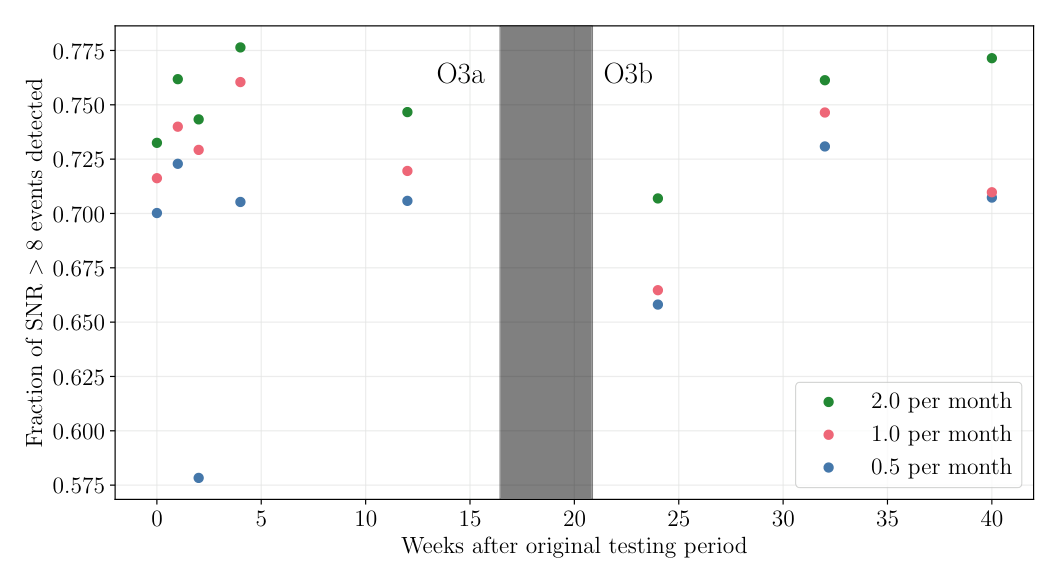

But Aframe’s deeper significance is infrastructural. The field has struggled for years to move ML proposals from papers into practice. By building a fully deployable pipeline and benchmarking it against the GWTC-3 catalog (the published record of confirmed detections from LIGO and Virgo’s third observing run), the team has produced something the community can actually build on, not just another proof-of-concept.

Binary neutron star mergers are the obvious next target: they spend far longer in the detector’s sensitive band and present harder detection challenges. The IaaS architecture, the normalization choices, and the augmentation strategies developed here all carry over directly.

Bottom Line: Aframe proves that a fully ML-based gravitational wave search pipeline can operate in real time with competitive sensitivity and a fraction of traditional latency. Faster multi-messenger alerts and AI-powered astrophysics infrastructure are now within reach.

IAIFI Research Highlights

This work connects deep learning infrastructure with gravitational-wave astrophysics, deploying a production-ready neural network pipeline inside the LIGO computing environment and benchmarking it against established physics search algorithms.

The team's use of Group Normalization to resolve the training-inference distribution mismatch, combined with an inference-as-a-service architecture for scalable deployment, provides practical lessons for any real-time ML system in scientific computing.

Aframe achieves state-of-the-art sensitivity to higher-mass binary black hole mergers at millisecond inference latency, enabling the sub-second gravitational-wave alerts that multi-messenger astronomy needs to maximize follow-up science.

Future work targets binary neutron star detection and adaptation to next-generation detectors; the full pipeline and methods are described in [arXiv:2403.18661](https://arxiv.org/abs/2403.18661) (Marx et al., 2024).