A Parameter-Masked Mock Data Challenge for Beyond-Two-Point Galaxy Clustering Statistics

Authors

Beyond-2pt Collaboration: Elisabeth Krause, Yosuke Kobayashi, Andrés N. Salcedo, Mikhail M. Ivanov, Tom Abel, Kazuyuki Akitsu, Raul E. Angulo, Giovanni Cabass, Sofia Contarini, Carolina Cuesta-Lazaro, ChangHoon Hahn, Nico Hamaus, Donghui Jeong, Chirag Modi, Nhat-Minh Nguyen, Takahiro Nishimichi, Enrique Paillas, Marcos Pellejero Ibañez, Oliver H. E. Philcox, Alice Pisani, Fabian Schmidt, Satoshi Tanaka, Giovanni Verza, Sihan Yuan, Matteo Zennaro

Abstract

The last few years have seen the emergence of a wide array of novel techniques for analyzing high-precision data from upcoming galaxy surveys, which aim to extend the statistical analysis of galaxy clustering data beyond the linear regime and the canonical two-point (2pt) statistics. We test and benchmark some of these new techniques in a community data challenge "Beyond-2pt", initiated during the Aspen 2022 Summer Program "Large-Scale Structure Cosmology beyond 2-Point Statistics," whose first round of results we present here. The challenge dataset consists of high-precision mock galaxy catalogs for clustering in real space, redshift space, and on a light cone. Participants in the challenge have developed end-to-end pipelines to analyze mock catalogs and extract unknown ("masked") cosmological parameters of the underlying $Λ$CDM models with their methods. The methods represented are density-split clustering, nearest neighbor statistics, BACCO power spectrum emulator, void statistics, LEFTfield field-level inference using effective field theory (EFT), and joint power spectrum and bispectrum analyses using both EFT and simulation-based inference. In this work, we review the results of the challenge, focusing on problems solved, lessons learned, and future research needed to perfect the emerging beyond-2pt approaches. The unbiased parameter recovery demonstrated in this challenge by multiple statistics and the associated modeling and inference frameworks supports the credibility of cosmology constraints from these methods. The challenge data set is publicly available and we welcome future submissions from methods that are not yet represented.

Concepts

The Big Picture

Imagine trying to reconstruct a symphony from only the bass line. You’d capture the rhythm and some structure, but miss the harmonics, the countermelodies, the rich texture that makes the music what it is.

For decades, cosmologists have been doing something analogous: mapping the universe’s large-scale structure using only its simplest pairwise measurements, essentially counting how often galaxies appear close together at various distances. It works well. But the universe has far more to say.

As galaxies cluster under gravity over billions of years, the initially near-random distribution of matter develops complex, irregular patterns. Filaments stretch across vast distances as bridges of galaxies. Voids, enormous empty regions, open up between them. Clusters and intricate web-like structures emerge. Simple pairwise measurements can’t capture this complexity. They’re blind to information encoded in triangles, voids, and higher-order arrangements.
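For readers who want the distinction between pairs and triangles in symbols, the standard textbook definitions are sketched here. The notation is an assumption of this summary rather than taken from the paper: $\delta$ is the density contrast, $\delta_D$ is the Dirac delta, $\xi$ is the two-point correlation function, $P$ is the power spectrum, and $B$ is the bispectrum.

$$
\begin{aligned}
\xi(r) &= \langle \delta(\mathbf{x})\,\delta(\mathbf{x}+\mathbf{r}) \rangle, \\
\langle \delta(\mathbf{k})\,\delta(\mathbf{k}') \rangle &= (2\pi)^3\,\delta_D(\mathbf{k}+\mathbf{k}')\,P(k), \\
\langle \delta(\mathbf{k}_1)\,\delta(\mathbf{k}_2)\,\delta(\mathbf{k}_3) \rangle &= (2\pi)^3\,\delta_D(\mathbf{k}_1+\mathbf{k}_2+\mathbf{k}_3)\,B(k_1,k_2,k_3).
\end{aligned}
$$

For a Gaussian random field the bispectrum vanishes and $P(k)$ carries all the statistical information. Gravitational evolution makes the field non-Gaussian, which is why statistics beyond the first two lines carry additional information.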

With next-generation surveys like DESI, Euclid, and the Rubin Observatory about to flood the field with unprecedented data, cosmologists need better statistical tools. Ones that can hear the full symphony.

A large international collaboration, the Beyond-2pt Collaboration, ran a rigorous community data challenge to test whether the new generation of “beyond two-point” analysis methods actually works, and whether they can reliably extract fundamental cosmological parameters (the handful of numbers describing the universe’s composition and history) from realistic mock data.

Key Insight: Multiple independent beyond-two-point methods successfully recovered hidden cosmological parameters from realistic mock galaxy catalogs, validating a new generation of statistical tools that should sharpen our maps of the universe.

How It Works

The challenge had a built-in safeguard: parameter masking. The true cosmological parameters were hidden from participants until after they submitted results, preventing analysts from unconsciously tuning their methods to match expected answers. Think of it as a double-blind clinical trial for cosmological statistics.
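The bookkeeping behind such a masked comparison can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Submission` format, the `unmask_and_score` helper, and all numerical values are hypothetical and are not taken from the challenge.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Submission:
    """A participant's constraint on one parameter (hypothetical format)."""
    name: str
    mean: float
    sigma: float


def unmask_and_score(submission, hidden_truth):
    """Return the offset from the hidden truth in units of the reported sigma."""
    truth = hidden_truth[submission.name]
    return (submission.mean - truth) / submission.sigma


# Held by the organizers only; these numbers are made up for illustration.
hidden_truth = {"Omega_m": 0.30, "sigma_8": 0.80}

# Submitted before unmasking; also made-up numbers.
submissions = [
    Submission("Omega_m", mean=0.31, sigma=0.02),
    Submission("sigma_8", mean=0.77, sigma=0.02),
]

scores = {s.name: unmask_and_score(s, hidden_truth) for s in submissions}
for name, z in scores.items():
    print(f"{name}: {z:+.2f} sigma from the masked truth")
```

The point of the design is ordering: the submissions are frozen before `hidden_truth` is ever consulted, so the score cannot feed back into the analysis choices.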

The mock data came from high-precision N-body simulations, massive computer calculations that evolve millions of simulated dark matter particles under gravity to produce realistic galaxy distributions. Three flavors of mock catalogs were included: galaxies in “real space” (without observational distortions), “redshift space” (with the distortions real telescopes see), and on a “light cone” (the most realistic scenario, mimicking how surveys observe the sky across cosmic time).

Six distinct analysis methods competed, each targeting different aspects of the non-Gaussian information in galaxy clustering:

- Density-split clustering divides the survey volume into regions of different galaxy density and measures clustering in each environment separately

- Nearest neighbor statistics characterize how isolated or crowded each galaxy is relative to its neighbors

- Void statistics extract information from the large empty regions between galaxy filaments

- BACCO power spectrum emulator uses machine learning to interpolate between simulations, extending power spectrum analysis to smaller, nonlinear scales

- LEFTfield performs field-level inference: rather than compressing observations into summary statistics, it analyzes the full three-dimensional galaxy density field directly using Effective Field Theory (EFT), a framework borrowed from particle physics that describes matter clustering at large scales without requiring a model of every small-scale detail

- Joint power spectrum and bispectrum analyses add the bispectrum (three-point correlations measuring triangular galaxy configurations) using both EFT and simulation-based inference (SBI)
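To make one of these ideas concrete, the splitting step of density-split clustering can be sketched with the Python standard library. This is a toy illustration, not any team's pipeline: the box size, smoothing radius, number of quantiles, and the helper names `counts_in_spheres` and `density_split` are all assumptions chosen for the example.

```python
import random


def counts_in_spheres(galaxies, queries, radius, box):
    """Count galaxies within `radius` of each query point in a periodic box."""
    r2 = radius * radius
    counts = []
    for q in queries:
        n = 0
        for g in galaxies:
            d2 = 0.0
            for a, b in zip(q, g):
                d = abs(a - b)
                d = min(d, box - d)  # periodic wrap-around
                d2 += d * d
            if d2 <= r2:
                n += 1
        counts.append(n)
    return counts


def density_split(counts, n_bins=5):
    """Label each query point by density quantile: 0 (emptiest) to n_bins - 1 (densest)."""
    order = sorted(range(len(counts)), key=lambda i: counts[i])
    labels = [0] * len(counts)
    for rank, i in enumerate(order):
        labels[i] = rank * n_bins // len(counts)
    return labels


rng = random.Random(42)
box = 100.0  # assumed box side length, arbitrary units

# Toy catalog: a uniform background plus one Gaussian clump near the box center.
galaxies = [tuple(rng.uniform(0, box) for _ in range(3)) for _ in range(300)]
galaxies += [tuple(rng.gauss(50.0, 3.0) % box for _ in range(3)) for _ in range(100)]

# Random query points sample the local density throughout the volume.
queries = [tuple(rng.uniform(0, box) for _ in range(3)) for _ in range(200)]

counts = counts_in_spheres(galaxies, queries, radius=10.0, box=box)
labels = density_split(counts, n_bins=5)

# Mean galaxy count per environment, from emptiest to densest quantile.
mean_count = [
    sum(c for c, lab in zip(counts, labels) if lab == q) / labels.count(q)
    for q in range(5)
]
print(mean_count)
```

In a real analysis the clustering would then be measured separately for the query points in each quantile. The brute-force pair loop here is only practical for toy catalogs.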

SBI trains neural networks on thousands of simulations to learn the statistical relationship between observations and parameters, bypassing the need for an analytic likelihood function (which becomes intractable for complex statistics). Field-level inference and SBI are among the most ambitious applications of modern statistical inference and machine learning to fundamental cosmology.
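The likelihood-free idea can be illustrated without a neural network. The sketch below swaps in rejection approximate Bayesian computation (ABC), a much simpler relative of neural SBI, and is not the method used by the challenge teams. The toy simulator, the flat prior range, the tolerance `eps`, and the "masked" value are all assumptions made for the example.

```python
import random


def simulate(theta, rng, n=50):
    """Toy simulator: return a summary statistic (the sample mean) of n noisy draws."""
    return sum(rng.gauss(theta, 1.0) for _ in range(n)) / n


def rejection_abc(observed, prior_low, prior_high, eps, n_draws, rng):
    """Keep prior draws whose simulated summary lands within eps of the observation."""
    accepted = []
    for _ in range(n_draws):
        theta = rng.uniform(prior_low, prior_high)  # draw from a flat prior
        if abs(simulate(theta, rng) - observed) < eps:
            accepted.append(theta)
    return accepted


rng = random.Random(0)
theta_true = 0.8  # plays the role of a masked parameter (made-up value)
observed = simulate(theta_true, rng)

posterior = rejection_abc(observed, 0.0, 2.0, eps=0.05, n_draws=5000, rng=rng)
posterior_mean = sum(posterior) / len(posterior)
print(f"accepted {len(posterior)} draws, posterior mean {posterior_mean:.3f}")
```

No likelihood is ever written down: the simulator alone defines the relationship between parameter and data. Neural SBI replaces the wasteful accept/reject step with a learned density estimator, which is what makes it feasible for high-dimensional statistics.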

Why It Matters

The validation is significant precisely because it’s hard. Each method involves a long chain of modeling choices: how to simulate galaxy formation, how to handle observational effects, how to build a statistical model, how to sample parameter space. Any link can introduce systematic bias. The fact that multiple independent methods, built on very different mathematical frameworks, recovered the masked parameters without bias is strong evidence the field is on the right track.

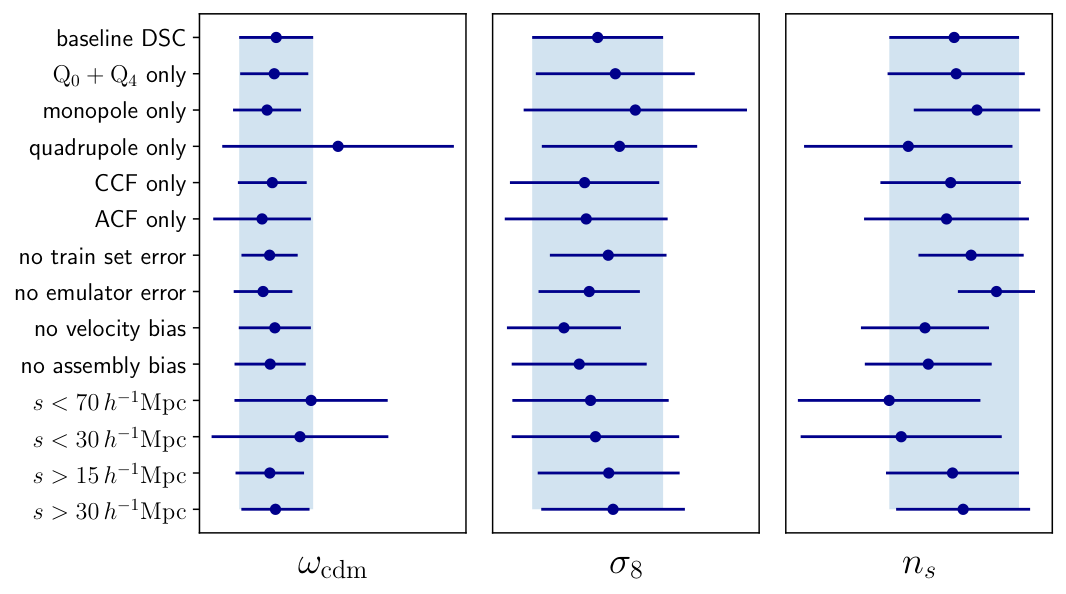

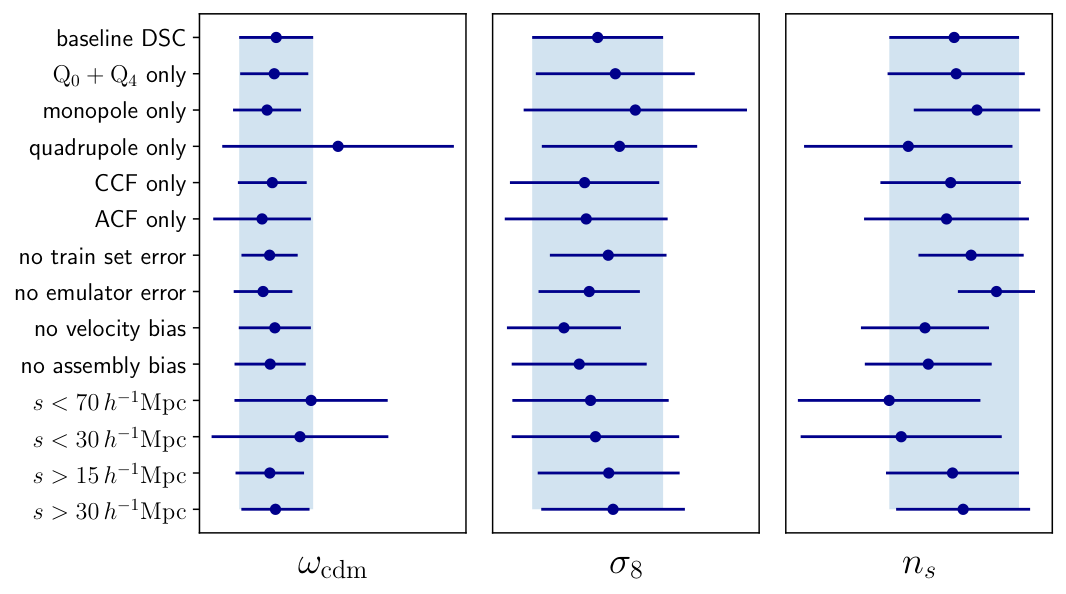

The challenge also exposed where work remains. Some methods struggled with light-cone geometry or scale cuts. Others showed tension between real-space and redshift-space results that needs further investigation. The collaboration treats this as a living challenge: the dataset is publicly available, and teams are invited to submit results from methods not yet tested.

The next frontier includes realistic survey complications like survey geometry, photometric redshift uncertainties, and the full complexity of galaxy bias (the messy relationship between where galaxies live and where the underlying dark matter actually is).

Bottom Line: The Beyond-2pt challenge shows that a new toolkit of statistical methods can reliably decode cosmological information that traditional analyses miss. As next-generation surveys come online, these tools will be essential for squeezing every bit of physics out of the data.

IAIFI Research Highlights

This work unites simulation-based inference and machine learning emulators with rigorous statistical cosmology, showing how AI-driven methods can be validated against traditional analytic approaches in a controlled community challenge.

Simulation-based inference and neural network emulators like BACCO proved competitive with analytic methods, showing that learned statistical models can recover unbiased cosmological parameters from complex, high-dimensional data.

Recovering ΛCDM parameters, including matter density and the amplitude of density fluctuations, from beyond-two-point statistics directly constrains the physics of dark matter, dark energy, and the growth of large-scale structure.

Future challenge rounds will tackle realistic survey systematics and tighter constraints on extensions to ΛCDM; full results and data are described in [arXiv:2405.02252](https://arxiv.org/abs/2405.02252).