Active learning for photonics

Authors

Ryan Lopez, Charlotte Loh, Rumen Dangovski, Marin Soljačić

Abstract

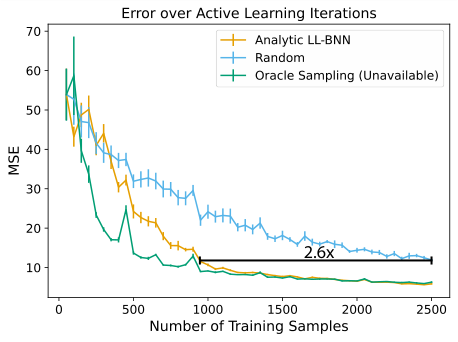

Active learning for photonic crystals explores the integration of analytic approximate Bayesian last layer neural networks (LL-BNNs) with uncertainty-driven sample selection to accelerate photonic band gap prediction. We employ an analytic LL-BNN formulation, corresponding to the infinite Monte Carlo sample limit, to obtain uncertainty estimates that are strongly correlated with the true predictive error on unlabeled candidate structures. These uncertainty scores drive an active learning strategy that prioritizes the most informative simulations during training. Applied to the task of predicting band gap sizes in two-dimensional, two-tone photonic crystals, our approach achieves up to a 2.6x reduction in required training data compared to a random sampling baseline while maintaining predictive accuracy. The efficiency gains arise from concentrating computational resources on high uncertainty regions of the design space rather than sampling uniformly. Given the substantial cost of full band structure simulations, especially in three dimensions, this data efficiency enables rapid and scalable surrogate modeling. Our results suggest that analytic LL-BNN based active learning can substantially accelerate topological optimization and inverse design workflows for photonic crystals, and more broadly, offers a general framework for data efficient regression across scientific machine learning domains.

Concepts

The Big Picture

Imagine finding the best recipe in a cookbook with 11,000 entries, but each one takes eight hours to test. You could sample at random, or you could start with a small batch, identify the flavors hardest to predict, and test only those next. That’s what MIT physicists and engineers have done for photonic crystals, cutting the number of expensive simulations needed to train an accurate predictive model by up to a factor of 2.6.

Photonic crystals are engineered materials with precisely repeating microscopic structures that control how light moves through them. They block certain colors of light while allowing others to pass. That forbidden zone for specific wavelengths is called a band gap, and its size determines what a photonic crystal is actually useful for. Computing the band gap for any given design requires a rigorous simulation, which is slow and expensive, especially in three dimensions. Testing thousands of candidates this way is simply not feasible.

Researchers Ryan Lopez, Charlotte Loh, Rumen Dangovski, and Marin Soljačić at MIT attacked this bottleneck head-on, developing a machine-learning framework that learns which simulations to run rather than running them all.

Key Insight: By giving a neural network a final layer that quantifies its own prediction confidence, this approach matches the accuracy of a model trained on randomly sampled data while using up to 2.6 times fewer simulations, considerably shrinking the computational cost of photonic crystal design.

How It Works

The core idea is active learning, a strategy where a model iteratively decides what data it needs next rather than passively consuming a fixed dataset. Standard machine learning is like studying with a randomly shuffled deck of flashcards. Active learning is the student who sets aside everything they already know and drills specifically on what stumps them.

The team’s implementation works in cycles. They begin with a small set of labeled photonic crystal structures, examples where the full simulation has been run and the band gap is known. A neural network trains on those examples, then predicts band gaps for thousands of unlabeled candidates it hasn’t seen. The question at each step: which candidates should be simulated next?

That’s where the approximate Bayesian last layer (LL-BNN) comes in. In a standard neural network, the final layer produces a single number. One prediction, no caveats. The LL-BNN makes that final layer probabilistic: instead of fixed connection strengths, each is described by a range of plausible values with a learned center and spread. This transforms the network from a machine that outputs a number into one that outputs a probability distribution, a prediction with an associated confidence.

What makes this work practical is that the uncertainty is computed analytically rather than by sampling. Sampling-based Bayesian neural networks typically draw dozens or hundreds of random weight samples to estimate uncertainty, which is expensive and noisy. By taking the mathematical limit as the number of Monte Carlo samples goes to infinity, the team derives a closed-form expression for the predictive variance. Uncertainty scores become computable in a single forward pass plus a handful of matrix operations, so the speedup comes with no sampling noise.
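To make the contrast concrete, here is a minimal sketch, assuming the simplest case in which each last-layer weight follows an independent Gaussian with its own mean and standard deviation. The function names, the feature matrix `phi`, and every numerical value are illustrative assumptions rather than the authors' code, and the paper's exact formulation may differ (for example, in how observation noise enters).

```python
import numpy as np

def analytic_predictive(phi, w_mean, w_std, noise_std=0.0):
    """Closed-form mean and variance of y = w . phi, with w_i ~ N(w_mean_i, w_std_i^2)."""
    mean = phi @ w_mean
    # Independent Gaussian weights: Var[y] = sum_i phi_i^2 * sigma_i^2 (+ optional noise)
    var = (phi ** 2) @ (w_std ** 2) + noise_std ** 2
    return mean, var

def monte_carlo_predictive(phi, w_mean, w_std, n_samples, rng):
    """Sampling-based estimate of the same two quantities."""
    w = w_mean + w_std * rng.standard_normal((n_samples, w_mean.size))
    preds = w @ phi.T  # shape (n_samples, n_candidates)
    return preds.mean(axis=0), preds.var(axis=0)

rng = np.random.default_rng(0)
phi = rng.standard_normal((5, 16))   # hypothetical backbone features for 5 candidates
w_mean = rng.standard_normal(16)     # hypothetical learned weight centers
w_std = 0.1 + 0.2 * rng.random(16)   # hypothetical learned weight spreads

mean_exact, var_exact = analytic_predictive(phi, w_mean, w_std)
mean_mc, var_mc = monte_carlo_predictive(phi, w_mean, w_std, 100_000, rng)

print("analytic variance:   ", np.round(var_exact, 4))
print("Monte Carlo variance:", np.round(var_mc, 4))
```

As the sample count grows, the Monte Carlo estimate converges to the closed-form value, which is the sense in which the analytic expression corresponds to the infinite-sample limit.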

The workflow proceeds as follows:

- Initialize with a small, randomly selected set of labeled photonic crystal structures

- Train the neural network (backbone + Bayesian last layer) jointly on the current labeled set

- Evaluate all unlabeled candidates using the analytic variance formula, one pass per candidate

- Select the highest-uncertainty structures for new band-gap simulations

- Add those newly labeled points to the training set and repeat
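The cycle above can be sketched on a toy problem. This is a simplified illustration under several stated assumptions, not the authors' implementation: `simulate_band_gap` is a hypothetical cheap stand-in for the band-structure solver, fixed random cosine features replace the trained backbone (the source trains backbone and last layer jointly), and the last layer is a textbook Bayesian linear regression with assumed precisions `alpha` and `beta`.

```python
import numpy as np

rng = np.random.default_rng(0)

def simulate_band_gap(x):
    """Hypothetical stand-in for the expensive band-structure simulation."""
    return np.sin(3.0 * x[:, 0]) * np.cos(2.0 * x[:, 1])

# Fixed random features play the role of the backbone (an assumption).
W = rng.standard_normal((2, 64))
b = rng.uniform(0.0, 2.0 * np.pi, 64)

def features(x):
    return np.cos(x @ W + b)

def fit_last_layer(phi, y, alpha=1.0, beta=100.0):
    """Bayesian linear regression posterior over last-layer weights."""
    cov = np.linalg.inv(alpha * np.eye(phi.shape[1]) + beta * phi.T @ phi)
    mean = beta * cov @ phi.T @ y
    return mean, cov

def predictive_variance(phi, cov, beta=100.0):
    """Closed-form variance phi^T cov phi + 1/beta for each row of phi."""
    return np.einsum("ij,jk,ik->i", phi, cov, phi) + 1.0 / beta

def active_learning(pool, n_init=10, batch_size=5, n_rounds=8):
    phi_pool = features(pool)
    # Step 1: small random initial labeled set
    labeled = rng.choice(len(pool), size=n_init, replace=False)
    y = simulate_band_gap(pool[labeled])
    for _ in range(n_rounds):
        # Step 2: fit on the current labeled set
        _, cov = fit_last_layer(phi_pool[labeled], y)
        # Step 3: score every candidate with the analytic variance
        var = predictive_variance(phi_pool, cov)
        var[labeled] = -np.inf  # never re-select already labeled points
        # Step 4: pick the highest-uncertainty candidates
        picks = np.argsort(var)[-batch_size:]
        # Step 5: "simulate" them and grow the training set
        labeled = np.concatenate([labeled, picks])
        y = np.concatenate([y, simulate_band_gap(pool[picks])])
    w_mean, _ = fit_last_layer(phi_pool[labeled], y)
    return labeled, w_mean

pool = rng.uniform(-1.0, 1.0, size=(500, 2))
labeled, w_mean = active_learning(pool)
print(f"labeled {len(labeled)} of {len(pool)} candidates")
```

Selecting the top-scoring candidates independently is the simplest batch rule; it does not discourage picking several near-identical structures in the same round, which is one reason batch size can affect efficiency.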

The network takes 2D dielectric-constant maps of photonic crystal unit cells as input and passes them through a deep neural network backbone. Symmetry operations (reflections and rotations) augment the training data to help the model generalize from limited examples. The Bayesian last layer then converts the backbone’s learned features into a predictive distribution over band gap size.
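The symmetry augmentation can be sketched as follows, assuming a square lattice whose unit cell is stored as a square array. In that case the four rotations and their mirror images give eight equivalent views of the same crystal, all sharing one band-gap label. The helper name `d4_augment`, the grid size, and the two permittivity values are hypothetical choices for illustration; the source does not specify them.

```python
import numpy as np

def d4_augment(unit_cell):
    """Return the 8 rotated/reflected copies of a square 2D unit cell."""
    views = []
    for k in range(4):
        rotated = np.rot90(unit_cell, k)   # rotate by k * 90 degrees
        views.append(rotated)
        views.append(np.fliplr(rotated))   # mirror image of that rotation
    return np.stack(views)

# Hypothetical two-tone unit cell: a high-permittivity block in a low-permittivity background
eps_low, eps_high = 1.0, 12.0              # assumed values, for illustration only
cell = np.full((32, 32), eps_low)
cell[4:12, 6:20] = eps_high                # deliberately off-center so all 8 views differ

augmented = d4_augment(cell)
print(augmented.shape)                     # 8 views of the 32 x 32 cell
```

Each labeled simulation then contributes eight training inputs at no extra simulation cost, since rotating or reflecting the whole crystal leaves the size of its band gap unchanged.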

Why It Matters

The immediate payoff is computational. Full band structure simulations for 2D photonic crystals are already non-trivial; in 3D, they become substantially more expensive. A 2.6x reduction in required training data isn’t a minor optimization. It could mean the difference between a surrogate model that’s practical to build and one that isn’t. This efficiency opens the door to screening far larger design spaces, including complex 3D geometries that would otherwise be prohibitive.

The analytic LL-BNN framework is also a general tool for data-efficient regression wherever labeled data is expensive to acquire. Scientific machine learning increasingly faces exactly this bottleneck: simulations are costly, experiments take time, and labels are scarce. The same approach could apply to materials discovery, molecular property prediction, or potential-energy surface sampling.

Open questions remain. The current work focuses on 2D photonic crystals with two material components; scaling to 3D structures and multi-material designs would test how well the framework holds up. The interaction between batch size and efficiency also deserves further study, since optimal batch sizing likely depends on problem geometry.

Bottom Line: Active learning with analytic Bayesian uncertainty cuts the simulation burden for photonic crystal design by up to a factor of 2.6 relative to random sampling, and the underlying framework is general enough to support data-efficient modeling across scientific computing wherever simulations are expensive and labels are scarce.

IAIFI Research Highlights

This work fuses Bayesian machine learning with photonic crystal physics, using neural uncertainty estimates to guide expensive electromagnetic simulations and connecting AI methodology directly to a fundamental problem in light-matter interaction.

The analytic last-layer Bayesian formulation achieves closed-form uncertainty quantification at a fraction of the cost of Monte Carlo-based methods, making principled Bayesian deep learning more practical for real-world regression tasks.

By enabling data-efficient surrogate models for photonic band gap prediction, this approach speeds up inverse design workflows for engineered optical materials that manipulate the fundamental interaction between light and structured matter.

Future work targeting 3D photonic crystals and multi-component material systems could expand the framework's reach; the full paper ([arXiv:2601.16287](https://arxiv.org/abs/2601.16287)) is available from the MIT Physics and EECS departments.