Advances in machine-learning-based sampling motivated by lattice quantum chromodynamics

Authors

Kyle Cranmer, Gurtej Kanwar, Sébastien Racanière, Danilo J. Rezende, Phiala E. Shanahan

Abstract

Sampling from known probability distributions is a ubiquitous task in computational science, underlying calculations in domains from linguistics to biology and physics. Generative machine-learning (ML) models have emerged as a promising tool in this space, building on the success of this approach in applications such as image, text, and audio generation. Often, however, generative tasks in scientific domains have unique structures and features -- such as complex symmetries and the requirement of exactness guarantees -- that present both challenges and opportunities for ML. This Perspective outlines the advances in ML-based sampling motivated by lattice quantum field theory, in particular for the theory of quantum chromodynamics. Enabling calculations of the structure and interactions of matter from our most fundamental understanding of particle physics, lattice quantum chromodynamics is one of the main consumers of open-science supercomputing worldwide. The design of ML algorithms for this application faces profound challenges, including the necessity of scaling custom ML architectures to the largest supercomputers, but also promises immense benefits, and is spurring a wave of development in ML-based sampling more broadly. In lattice field theory, if this approach can realize its early promise it will be a transformative step towards first-principles physics calculations in particle, nuclear and condensed matter physics that are intractable with traditional approaches.

Concepts

The Big Picture

Imagine trying to predict the weather, but instead of tracking air pressure and humidity, you need to track every quark and gluon inside a single proton, a particle so small that a billion of them lined up would span the width of a human hair. Now do that for an entire atomic nucleus, on a four-dimensional spacetime grid, using equations so complex they break even the world’s most powerful supercomputers. That’s the challenge facing nuclear physicists who want to extract predictions from Quantum Chromodynamics (QCD), our most fundamental theory of the forces that hold matter together.

QCD is simultaneously well understood and computationally impenetrable. We know the equations. We just can’t solve them efficiently at the energies where protons and neutrons live, because the interactions between quarks and gluons become so intense that the usual mathematical shortcuts fail completely.

The go-to workaround is lattice QCD, where spacetime is broken up into a four-dimensional grid and the theory is simulated numerically. This demands enormous computational resources. Lattice QCD is currently one of the largest consumers of open-science supercomputing in the world, yet even exascale machines, capable of a quintillion operations per second, can’t handle many of the questions we desperately want to answer.

A perspective paper by Kyle Cranmer, Gurtej Kanwar, Sébastien Racanière, Danilo Rezende, and Phiala Shanahan argues that machine learning, specifically generative AI models redesigned for physics, may be the breakthrough that finally cracks these calculations.

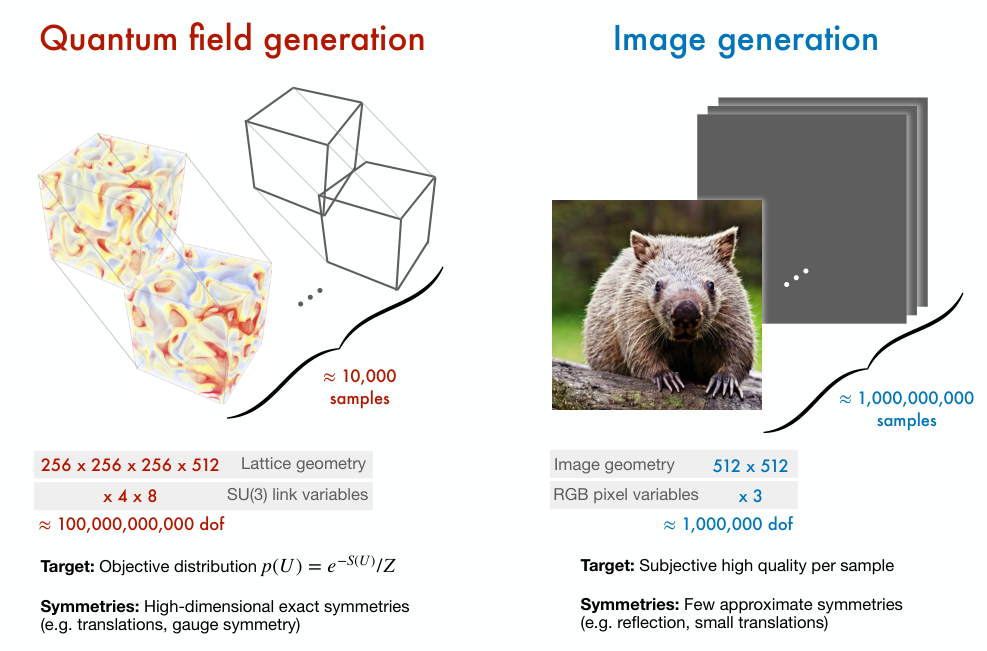

The same technology behind AI image and text generators can be repurposed to sample quantum field configurations in lattice QCD. But the models have to be carefully redesigned to respect the deep symmetries of the underlying physics, and to provide mathematically exact results rather than approximate ones.

How It Works

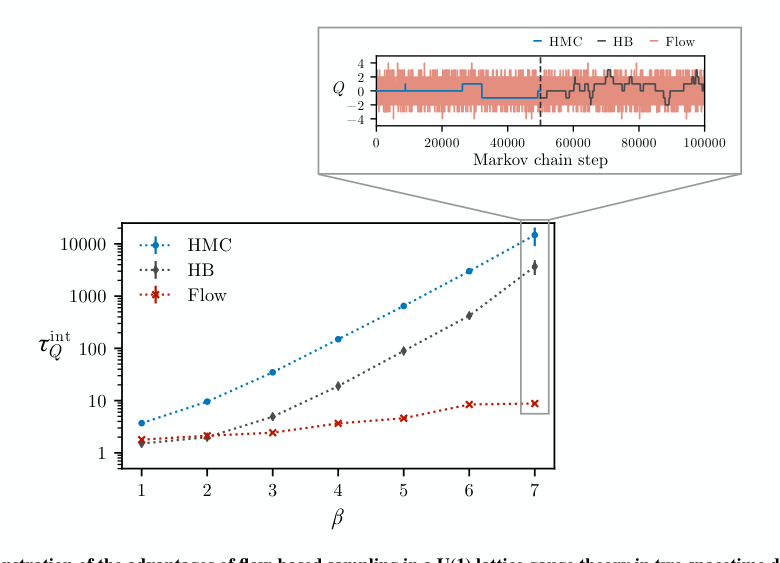

The computational bottleneck in lattice QCD isn’t raw arithmetic. It’s sampling. To compute measurable quantities like the proton mass, physicists evaluate statistical averages over an enormous space of possible field configurations using Monte Carlo methods, random-sampling algorithms that estimate quantities by drawing many representative examples. The gold standard has been Hamiltonian Monte Carlo (HMC), an algorithm that navigates configuration space like a ball rolling across a landscape. HMC was actually invented for lattice QCD before becoming a workhorse of modern statistics. But as lattice grids get finer (necessary to approach a realistic, continuous description of spacetime), HMC suffers from critical slowing down: the algorithm gets trapped in local regions and requires exponentially more steps to explore configuration space properly.

Enter normalizing flows, generative AI models that learn a smooth, reversible transformation between a simple distribution (think: a bell curve) and a complex target distribution. Instead of stumbling through configuration space via a random walk, a trained flow can directly propose new configurations drawn from something close to the true QCD distribution.

The math is clean: if you know the transformation and its Jacobian (how it stretches and compresses space), you can compute the exact probability of any proposed sample. Plug that into a Metropolis accept/reject step, a standard check that accepts or discards each proposed sample to keep the overall collection unbiased, and the algorithm remains asymptotically exact. It converges to the correct answer given enough samples. That guarantee is non-negotiable in physics.

What makes this hard, and interesting, is the structure of the QCD distribution. It’s invariant under gauge symmetry, a mathematical redundancy in how we describe gluon fields: there are many equivalent ways to write the same physical state. Naive ML architectures would have to learn this symmetry from data, a wasteful and unreliable approach. The solution is to build it in by design:

- Gauge-equivariant architectures use carefully constructed building blocks called coupling layers, coordinated transformations that update field variables in lockstep, while preserving exact gauge invariance at every step.

- Group-valued variables: the link matrices in QCD live on SU(3), a curved space of symmetry transformations. Flows must be defined on curved mathematical surfaces called manifolds, not the flat geometry most ML assumes.

- Multigrid-inspired designs process the lattice hierarchically, capturing structure at multiple length scales simultaneously.

The result is a new class of ML architectures that look nothing like what’s used in image or text generation. They’re purpose-built for the geometry and symmetry of quantum field theory.

Why It Matters

The payoff runs in two directions. For fundamental physics, a scalable ML sampler for lattice QCD would unlock calculations currently out of reach: the fine-tunings in nuclear physics that determine why carbon exists in the universe, how the lightest elements formed in the first minutes after the Big Bang, and how protons and neutrons bind into nuclei. These aren’t incremental improvements. They’re questions no amount of traditional computing can answer without algorithmic breakthroughs.

For machine learning, the demands of lattice QCD push generative modeling into genuinely new territory. Physics requires exactness guarantees that image generators don’t need. It requires models that scale to terabyte-sized samples on custom supercomputer architectures. And it requires incorporating non-trivial mathematical structures (Lie groups, manifold-valued fields, gauge redundancy) that have no counterpart in commercial AI.

The techniques developed here are already finding applications in drug discovery and molecular simulation. History suggests this is no accident: both the Metropolis algorithm and HMC were born in nuclear physics before becoming foundational tools across all of computational science.

By redesigning generative ML models to respect the deep symmetries of quantum field theory, researchers have opened a path toward exact, scalable samplers for lattice QCD. If it works at full scale, it could transform first-principles nuclear physics while advancing the broader science of machine learning.

IAIFI Research Highlights

This work combines theoretical particle physics and modern generative AI, showing how the unique demands of QCD can drive real innovations in machine learning architecture design.

The paper introduces gauge-equivariant normalizing flows, a new class of generative models that encode non-trivial Lie group symmetries by construction, extending ML-based sampling to manifold-valued, highly structured scientific distributions.

If fully realized, ML-based lattice QCD samplers would overcome the critical slowing down that limits current calculations, enabling first-principles predictions of nuclear structure and reactions that remain computationally intractable with existing methods.

The authors identify scaling to full-scale lattice QCD as the central open challenge, with promising early results suggesting the approach is viable; the full perspective is available at [arXiv:2309.01156](https://arxiv.org/abs/2309.01156).

Original Paper Details

Advances in machine-learning-based sampling motivated by lattice quantum chromodynamics

2309.01156

Kyle Cranmer, Gurtej Kanwar, Sébastien Racanière, Danilo J. Rezende, Phiala E. Shanahan

Sampling from known probability distributions is a ubiquitous task in computational science, underlying calculations in domains from linguistics to biology and physics. Generative machine-learning (ML) models have emerged as a promising tool in this space, building on the success of this approach in applications such as image, text, and audio generation. Often, however, generative tasks in scientific domains have unique structures and features -- such as complex symmetries and the requirement of exactness guarantees -- that present both challenges and opportunities for ML. This Perspective outlines the advances in ML-based sampling motivated by lattice quantum field theory, in particular for the theory of quantum chromodynamics. Enabling calculations of the structure and interactions of matter from our most fundamental understanding of particle physics, lattice quantum chromodynamics is one of the main consumers of open-science supercomputing worldwide. The design of ML algorithms for this application faces profound challenges, including the necessity of scaling custom ML architectures to the largest supercomputers, but also promises immense benefits, and is spurring a wave of development in ML-based sampling more broadly. In lattice field theory, if this approach can realize its early promise it will be a transformative step towards first-principles physics calculations in particle, nuclear and condensed matter physics that are intractable with traditional approaches.