Analytic Regression of Feynman Integrals from High-Precision Numerical Sampling

Authors

Oscar Barrera, Aurélien Dersy, Rabia Husain, Matthew D. Schwartz, Xiaoyuan Zhang

Abstract

In mathematics or theoretical physics one is often interested in obtaining an exact analytic description of some data which can be produced, in principle, to arbitrary accuracy. For example, one might like to know the exact analytical form of a definite integral. Such problems are not well-suited to numerical symbolic regression, since typical numerical methods lead only to approximations. However, if one has some sense of the function space in which the analytic result should lie, it is possible to deduce the exact answer by judiciously sampling the data at a sufficient number of points with sufficient precision. We demonstrate how this can be done for the computation of Feynman integrals. We show that by combining high-precision numerical integration with analytic knowledge of the function space one can often deduce the exact answer using lattice reduction. A number of examples are given as well as an exploration of the trade-offs between number of datapoints, number of functional predicates, precision of the data, and compute. This method provides a bottom-up approach that neatly complements the top-down Landau-bootstrap approach of trying to constrain the exact answer using the analytic structure alone. Although we focus on the application to Feynman integrals, the techniques presented here are more general and could apply to a wide range of problems where an exact answer is needed and the function space is sufficiently well understood.

Concepts

The Big Picture

Imagine trying to reverse-engineer a complex recipe by tasting it. Not just “salty and sweet,” but the precise gram measurements of every ingredient. Now imagine you have unlimited, infinitely precise bites, but the dish has thousands of components. That’s roughly the challenge physicists face when computing Feynman integrals, the mathematical building blocks underlying every prediction in particle physics.

These integrals describe how particles interact at the quantum level. The decay of a Higgs boson, the anomalous magnetic moment of the electron, a particle scattering off another: all depend on evaluating Feynman integrals to high accuracy. Exact formulas are the most useful form for precision comparison with experiment, but direct computation typically yields only decimal approximations.

Physicists have spent decades developing tricks to wrestle these objects into exact, compact symbolic expressions. Most approaches work “top-down,” exploiting the known mathematical structure of an integral to constrain its form before computing a single number. A team from Harvard and IAIFI has now shown a complementary strategy: work bottom-up. Compute the integral numerically, to extraordinary precision, at many carefully chosen input values, then use a classical algorithm from number theory to reconstruct the exact answer.

Key Insight: By combining high-precision numerical evaluation with prior knowledge of which functions can appear in the answer, it’s possible to pin down exact rational coefficients using lattice reduction, turning approximate numbers into certified analytic results.

How It Works

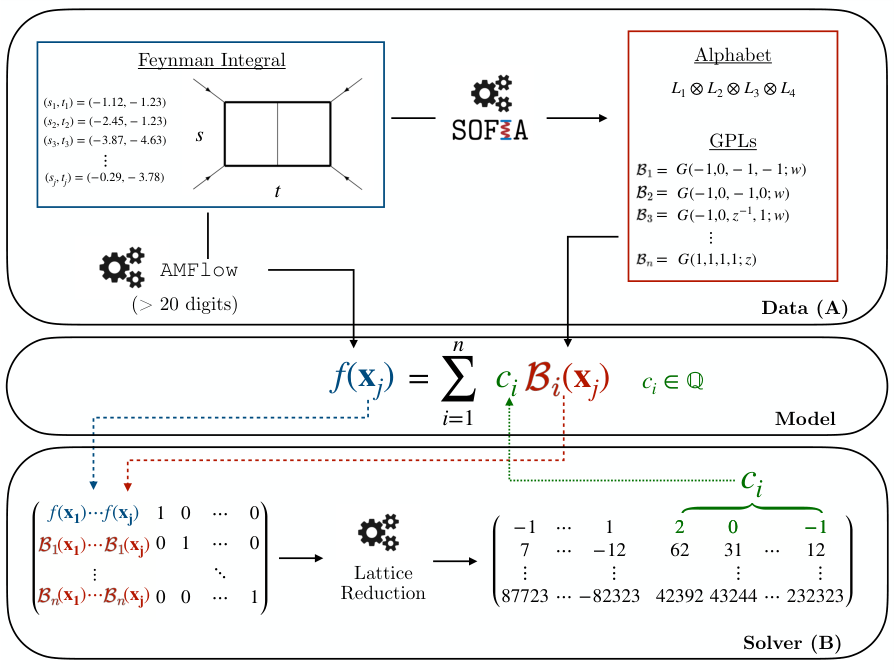

The core idea is deceptively simple. Any Feynman integral f(x) can, in principle, be written as a linear combination of known basis functions Bᵢ(x), a toolkit of well-understood mathematical functions like logarithms and their higher-order generalizations. The catch: the coefficients cᵢ must be exact rational numbers (like 2 or −7/3), not floating-point approximations. Matrix inversion alone can’t recover them reliably, because numerical error compounds quickly as the basis grows.
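Written out, the ansatz described above takes the following form. The symbols N (basis size), x_k (sample points), n (number of points) and a_i (integers) are notation introduced here for illustration and need not match the paper's.

```latex
f(x) \;=\; \sum_{i=1}^{N} c_i\, B_i(x), \qquad c_i \in \mathbb{Q}.

% Clearing the common denominator a_0 of the c_i gives an integer relation
% that must hold at every sample point x_k:
a_0\, f(x_k) \;+\; \sum_{i=1}^{N} a_i\, B_i(x_k) \;=\; 0,
\qquad a_i \in \mathbb{Z}, \quad c_i = -\frac{a_i}{a_0}, \quad k = 1,\dots,n.
```

For instance, coefficients {2, −7/3} correspond to (a_0, a_1, a_2) = (3, −6, 7). Searching for small integers a_i rather than floating-point c_i is what makes the problem a natural fit for lattice methods.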

The researchers’ solution rests on three ingredients:

- High-precision numerical integration using AMFlow, a modern tool that reduces multi-loop Feynman integrals to solving one-dimensional differential equations, yielding evaluations to 100 or more significant digits.

- A basis of iterated integrals derived from the integral’s known singularity structure, the mathematical locations where the integral blows up or vanishes. Decades of progress in solving the Landau equations have made it possible to enumerate candidate basis functions before solving for anything.

- Lattice reduction, specifically the Lenstra–Lenstra–Lovász (LLL) algorithm, a number-theory technique that identifies exact rational numbers hiding inside long decimal approximations. Given enough precision, LLL distinguishes the true rational coefficient from numerical noise.

Here’s the workflow: evaluate the integral and all basis functions at n chosen points, assemble the data into a lattice basis matrix, and feed it into LLL. If the precision is sufficient and the basis is correct, the algorithm returns an exact integer relation among the sampled values, from which the rational coefficients cᵢ follow directly. A decimal like 1.9999999998… becomes, unambiguously, 2.
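The workflow can be sketched on a toy problem using only the Python standard library. Everything specific here is an assumption made for illustration: the three-function logarithmic basis, the hidden coefficients (2, −7/3, 5), the sample points, the 40-digit scale, and the function names. The `target` function stands in for a high-precision numerical integrator such as AMFlow, and the LLL routine is a plain textbook version in exact arithmetic, not the authors' implementation.

```python
from decimal import Decimal, getcontext
from fractions import Fraction

getcontext().prec = 60      # working precision in decimal digits (illustrative)
SCALE = Decimal(10) ** 40   # how many digits of the data the lattice sees

def basis_values(x):
    """Toy basis {ln^2 x, ln x ln(1+x), ln^2(1+x)}; not the paper's basis."""
    a, b = x.ln(), (1 + x).ln()
    return [a * a, a * b, b * b]

def target(x):
    """Stand-in for a numerically integrated f(x); hidden coefficients 2, -7/3, 5."""
    b1, b2, b3 = basis_values(x)
    return 2 * b1 - Decimal(7) / Decimal(3) * b2 + 5 * b3

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def gram_schmidt(b):
    """Exact Gram-Schmidt: orthogonalised vectors and projection coefficients mu."""
    n = len(b)
    bstar, mu = [], [[Fraction(0)] * n for _ in range(n)]
    for i in range(n):
        v = [Fraction(c) for c in b[i]]
        for j in range(i):
            mu[i][j] = dot(b[i], bstar[j]) / dot(bstar[j], bstar[j])
            v = [vi - mu[i][j] * wj for vi, wj in zip(v, bstar[j])]
        bstar.append(v)
    return bstar, mu

def lll(rows, delta=Fraction(3, 4)):
    """Textbook LLL reduction in exact arithmetic; simple rather than fast."""
    b = [list(r) for r in rows]
    bstar, mu = gram_schmidt(b)
    k = 1
    while k < len(b):
        for j in range(k - 1, -1, -1):       # size-reduce b[k] against b[j]
            q = round(mu[k][j])
            if q:
                b[k] = [x - q * y for x, y in zip(b[k], b[j])]
                bstar, mu = gram_schmidt(b)
        lhs = dot(bstar[k], bstar[k])
        rhs = (delta - mu[k][k - 1] ** 2) * dot(bstar[k - 1], bstar[k - 1])
        if lhs >= rhs:                       # Lovász condition holds: advance
            k += 1
        else:                                # otherwise swap and step back
            b[k], b[k - 1] = b[k - 1], b[k]
            bstar, mu = gram_schmidt(b)
            k = max(k - 1, 1)
    return b

def recover_coefficients(points):
    # Row i of the lattice basis: unit vector e_i, then the scaled and rounded
    # values of function i (f first, then each basis function) at every point.
    columns = [[target(x)] + basis_values(x) for x in points]
    n = len(columns[0])
    rows = []
    for i in range(n):
        unit = [1 if j == i else 0 for j in range(n)]
        data = [int((col[i] * SCALE).to_integral_value()) for col in columns]
        rows.append(unit + data)
    a = lll(rows)[0][:n]    # the shortest reduced vector carries the relation
    # a[0]*f + sum_i a[i]*B_i = 0  implies  c_i = -a[i] / a[0]
    return [Fraction(-ai, a[0]) for ai in a[1:]]

points = [Decimal(3) / Decimal(7), Decimal(5) / Decimal(11)]
coefficients = recover_coefficients(points)
print(coefficients)
```

With these settings the script should print `[Fraction(2, 1), Fraction(-7, 3), Fraction(5, 1)]`. The unit-vector block keeps track of the integer combination being formed, while the scaled data columns penalize any combination that fails to cancel, so the only short vector left after reduction is the true relation. For realistic basis sizes a dedicated lattice library would be a better choice than this exact-arithmetic sketch.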

The approach scales well. The team mapped out how much precision is required as a function of basis size, number of evaluation points, and coefficient magnitude. Precision requirements grow only linearly with the number of basis functions, so the method stays tractable even for complex multi-loop diagrams.
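One rough way to see why the required precision can scale linearly with basis size is a standard counting heuristic from integer-relation detection. It is not the paper's own analysis, and the symbols P (decimal digits of precision per evaluation), d (decimal digits per integer coefficient), N (number of basis functions) and n (number of sample points) are assumptions introduced here.

```latex
% Number of candidate integer vectors (a_0, ..., a_N) with |a_i| < 10^d:
\#\{\text{candidates}\} \;\sim\; 10^{(N+1)\,d}.

% Chance that a wrong candidate matches all n sample points to P digits,
% treating the residuals as independent and roughly uniform:
\Pr[\text{accidental match}] \;\sim\; 10^{-nP}.

% Spurious relations become unlikely once
10^{(N+1)\,d}\cdot 10^{-nP} \;\ll\; 1
\quad\Longleftrightarrow\quad
P \;\gtrsim\; \frac{(N+1)\,d}{n}.
```

Under these assumptions, N = 50 and d = 3 with a single sample point would call for roughly 150 digits, while ten independent points would lower that to about 15 digits per point, at the cost of more evaluations and a larger lattice. Actual requirements also depend on the magnitudes of the function values and on the reduction algorithm, so this is only an order-of-magnitude guide.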

The paper works through four examples of increasing difficulty. The simplest is the one-loop triangle integral, whose exact answer involves dilogarithms with coefficients {2, −2, 1}, trivially recovered. The most demanding is the two-loop outer-mass double box, a particularly complex scattering diagram involving massive particles across two kinematic scales. Its exact form involves dozens of basis functions drawn from weight-4 polylogarithms, and the LLL pipeline handles it cleanly, recovering all coefficients exactly, including several that prior approaches had left incomplete.

Why It Matters

Feynman integrals are the engine of precision particle physics. Every theoretical prediction for the Large Hadron Collider, including searches for physics beyond the Standard Model, depends on computing these integrals to two, three, or four loops. Obtaining exact analytic results has long been the bottleneck.

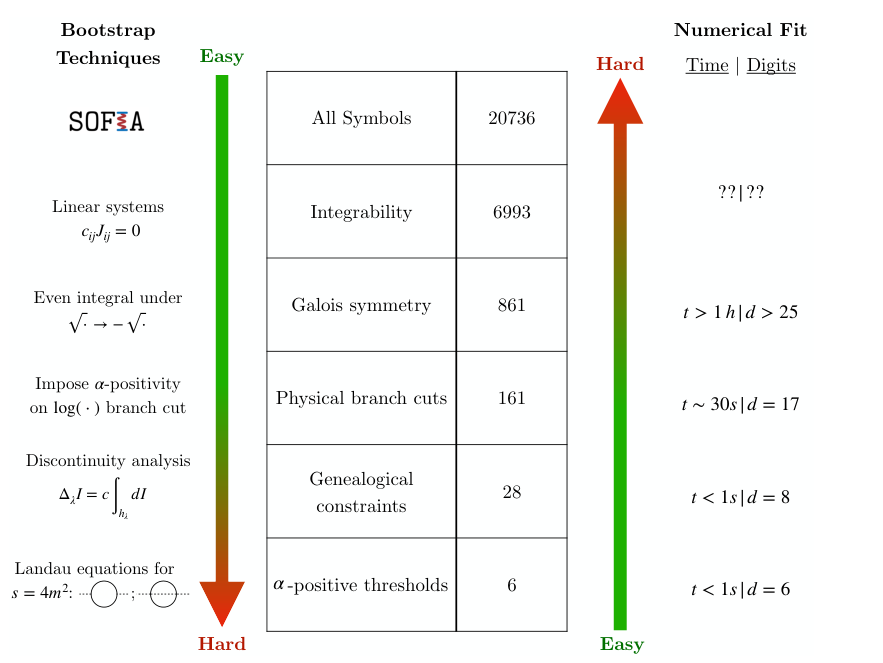

The two main alternatives each have drawbacks. The Landau-bootstrap approach derives results purely from analytic constraints. It’s powerful, but requires considerable human ingenuity for each new integral. Numerical methods are fast but deliver approximations that can’t always be trusted at the precision experiments demand.

This paper opens a third path, one that is more automated. Once the function space is understood (and modern tools like SOFIA can now determine it semi-automatically for many integrals), the numerical-plus-LLL pipeline recovers exact answers with minimal manual intervention. The method also generalizes beyond Feynman integrals. Any problem requiring exact functional decomposition over a sufficiently constrained function space could benefit, from algebraic geometry to number theory to machine-learning-assisted mathematical discovery.

Bottom Line: High-precision numerics plus lattice reduction can recover exact analytic answers for Feynman integrals that have resisted closed-form computation, providing a reproducible, automatable route through one of theoretical physics’ most persistent bottlenecks.

IAIFI Research Highlights

This work sits at the intersection of algorithmic number theory (LLL lattice reduction) and the physics of Feynman integrals, using high-precision numerical evaluation as the connective tissue between the two fields.

The paper shows that analytic regression (recovering exact symbolic expressions from high-precision numerical data) works as a general machine-assisted strategy, with applications well beyond particle physics.

By providing a bottom-up complement to the Landau-bootstrap program, the method accelerates computation of multi-loop Feynman integrals essential for next-generation precision predictions at collider experiments.

Future work will extend the approach to integrals involving elliptic functions and other exotic function spaces; the preprint is available at [arXiv:2507.17815](https://arxiv.org/abs/2507.17815) (Barrera, Dersy, Husain, Schwartz, Zhang, 2025).