Automatic Environment Shaping is the Next Frontier in RL

Authors

Younghyo Park, Gabriel B. Margolis, Pulkit Agrawal

Abstract

Many roboticists dream of presenting a robot with a task in the evening and returning the next morning to find the robot capable of solving the task. What is preventing us from achieving this? Sim-to-real reinforcement learning (RL) has achieved impressive performance on challenging robotics tasks, but requires substantial human effort to set up the task in a way that is amenable to RL. It's our position that algorithmic improvements in policy optimization and other ideas should be guided towards resolving the primary bottleneck of shaping the training environment, i.e., designing observations, actions, rewards and simulation dynamics. Most practitioners don't tune the RL algorithm, but other environment parameters to obtain a desirable controller. We posit that scaling RL to diverse robotic tasks will only be achieved if the community focuses on automating environment shaping procedures.

Concepts

The Big Picture

Imagine handing a new intern a task at 5 PM and walking in the next morning to find it done perfectly. No hand-holding, no tweaking the instructions, no staying late to troubleshoot. That’s the dream roboticists have been chasing for decades, and the obstacle isn’t what most people think.

The conventional wisdom says we need better learning algorithms. Smarter optimization, more efficient trial-and-error, cleverer software designs. But a position paper from MIT’s Improbable AI Lab argues this is the wrong place to look. The real bottleneck is something unglamorous: the enormous amount of human labor required to set up the training environment before learning even begins.

Before you can train a robot to load a dishwasher, someone has to define what it can observe, how it can move, what counts as success, and how the simulation should behave. That process, called environment shaping, is the hidden tax on every robotics AI project. The researchers’ thesis: the field will only scale to truly general robotics if we automate environment shaping itself.

Key Insight: Environment shaping (designing rewards, observations, actions, and simulation dynamics) is the primary bottleneck in scaling reinforcement learning to diverse robotic tasks, and the community should treat its automation as a first-class research priority.

How It Works

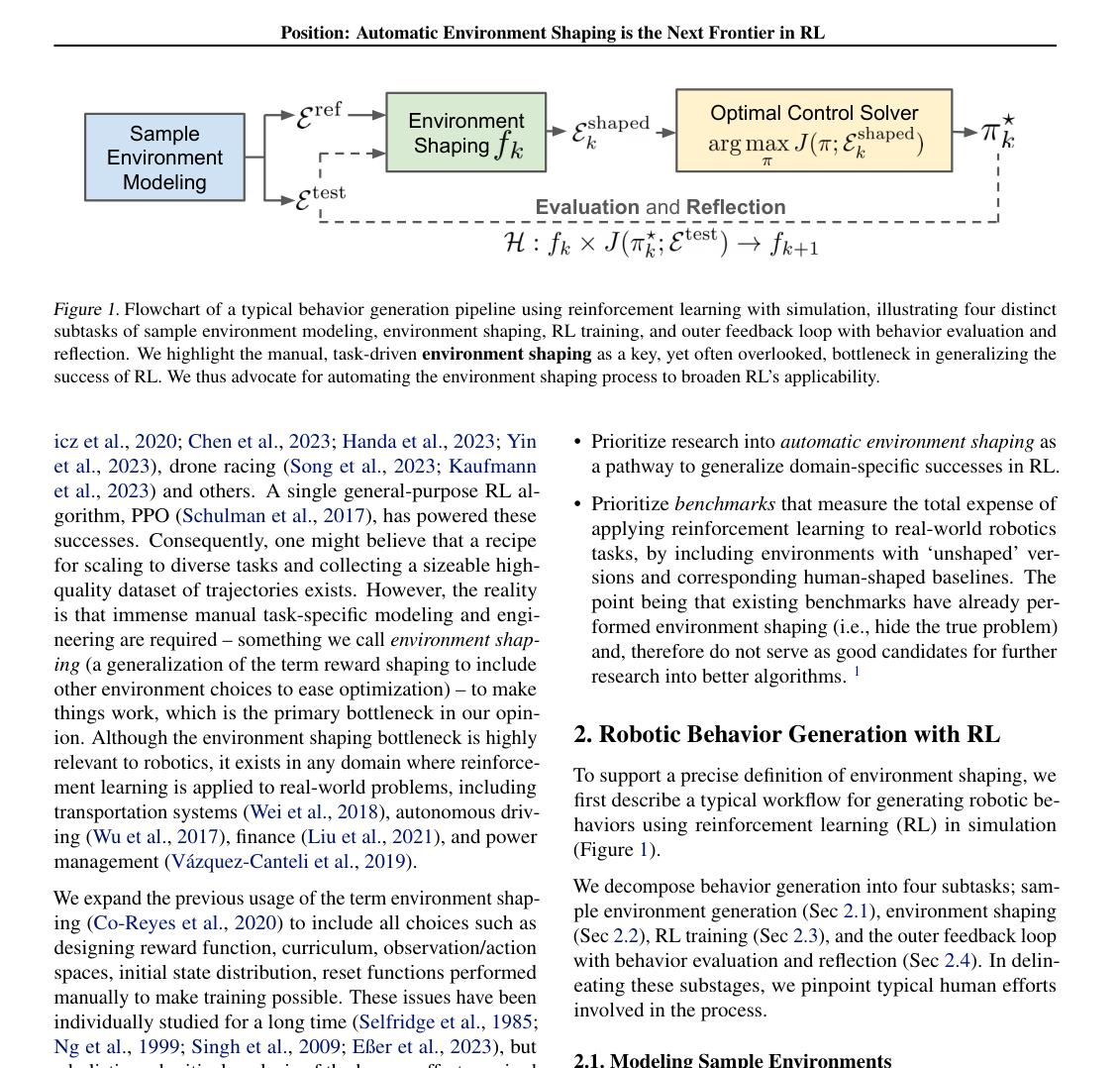

The MIT team studies reinforcement learning (RL), a method where a robot learns by trial and error inside a simulation, then transfers that behavior to the real world. They break this process into four stages:

- Sample environment modeling — building a simulation: importing robot and asset models, placing objects in their default poses

- Environment shaping — the human-intensive design work: crafting reward functions, defining observations, choosing action representations, specifying curricula

- RL training — running the algorithm inside the shaped simulator

- Outer feedback loop — evaluating the learned behavior and iterating

Most research attention flows to stage three. But the paper argues that practitioners almost never tune the algorithm. They tune the environment.

A roboticist wrestling with a four-legged robot (a “quadruped”) that won’t learn to walk isn’t tweaking the underlying learning equations. They’re redesigning the reward function, restricting the action space, adjusting the difficulty of the terrain curriculum.

The paper defines environment shaping precisely: every design choice made to make training tractable that isn’t strictly required to model the physics of the task. A reward function that penalizes falling is environment shaping. So is giving the robot access to its own joint velocities. So is starting training on flat ground before introducing stairs. None of these choices flow automatically from “teach the robot to walk.” A human expert has to invent them.

The dishwasher example is instructive. Modeling the true distribution of real-world dishwashers (every brand, loading configuration, water pressure, soap residue) is intractable. So you pick a representative sample, call it a reference environment, and design your shaping choices to work on that. The problem: every new robot and every new task requires re-inventing these choices from scratch, with no principled way to transfer expertise across problems.

The paper catalogs where human effort concentrates: designing reward functions (often weeks of iteration), crafting observations that include the right signals, selecting action representations that make the problem tractable, and specifying domain randomization. That last one means intentionally varying simulation parameters like lighting, friction, and object weights so the robot learns to handle real-world variability. Each category is a form of expert knowledge that currently lives in researchers’ heads and doesn’t generalize.

Why It Matters

This is a position paper. It’s making an argument, not presenting a new algorithm. And the argument lands hard.

The paper arrives at a moment when large language models have shown that the right abstraction plus scale can dissolve problems that previously required painstaking hand-engineering. The same transition happened in computer vision, where hand-coded edge detectors gave way to learned representations, and in language processing, where grammar rules gave way to models trained on vast text. The authors are betting robotics is next, but only if the community stops optimizing the RL algorithm and starts automating the scaffolding around it.

What would that look like in practice? LLMs already show early promise in generating reward functions from natural language task descriptions. Curriculum design can be framed as a meta-learning problem, where a system learns how to set up learning tasks rather than just how to solve them. Domain randomization ranges can be inferred from real-world data.

Each of these is a research direction on its own. The paper is a call to organize them into a coherent program rather than treating them as isolated tricks. The long-term prize: a robot you can hand a task description in the evening and trust to figure out the rest.

Bottom Line: The next breakthrough in robotics won’t come from better RL algorithms. It’ll come from automating the unglamorous work of environment design that currently requires expert human labor for every new task. This paper names that problem and makes the case that solving it is the field’s most urgent priority.

IAIFI Research Highlights

This work connects theoretical RL research with practical robotics deployment, identifying the human-in-the-loop bottleneck that prevents AI systems from autonomously acquiring physical skills at scale.

The paper reframes a longstanding assumption in RL research, that algorithmic advances are the primary lever, and argues that meta-level automation of training environment design is the more impactful frontier.

By targeting the scalability of sim-to-real transfer, this work addresses a core obstacle to deploying intelligent physical agents that interact with the messy, variable structure of the real world.

The paper calls for systematic research into automated reward design, curriculum generation, and observation/action space optimization; the full position paper is available via ICML 2024 proceedings ([arXiv:2407.16186](https://arxiv.org/abs/2407.16186), PMLR 235, 2024).