Categorical Representation Learning and RG flow operators for algorithmic classifiers

Authors

Artan Sheshmani, Yizhuang You, Wenbo Fu, Ahmadreza Azizi

Abstract

Following the earlier formalism of the categorical representation learning (arXiv:2103.14770) by the first two authors, we discuss the construction of the "RG-flow based categorifier". Borrowing ideas from the theory of renormalization group (RG) flows in quantum field theory, holographic duality, and hyperbolic geometry, and mixing them with neural ODEs, we construct a new algorithmic natural language processing (NLP) architecture, called the RG-flow categorifier or, for short, the RG categorifier, which is capable of data classification and generation in all layers. We apply our algorithmic platform to biomedical data sets and show its performance in the field of sequence-to-function mapping. In particular, we apply the RG categorifier to particular genomic sequences of flu viruses and show how our technology is capable of extracting the information from given genomic sequences, finding their hidden symmetries and dominant features, classifying them, and using the trained data to make stochastic predictions of new plausible generated sequences associated with a new set of viruses which could avoid the human immune system. The content of the current article is part of the recent US patent application submitted by the first two authors (U.S. Patent Application No.: 63/313.504).

The Big Picture

Imagine trying to understand a city from a satellite photo. Zoom out too fast and you lose the street-level detail that explains why neighborhoods work the way they do; zoom in too close and the sheer number of buildings overwhelms any sense of pattern. What you really want is a systematic way to move between scales, preserving what matters at each level while compressing what doesn’t. Physicists have been doing exactly this for decades with a technique called the renormalization group, and now a team led by researchers at IAIFI has turned that same machinery into a new kind of AI architecture.

The renormalization group (RG) is one of theoretical physics’ most powerful ideas. It explains how the laws of physics change depending on the scale at which you observe a system: phase transitions (water turning to steam), the behavior of quarks inside protons, critical phenomena in condensed matter. But that mathematical machinery has seen surprisingly little direct adoption in machine learning. A new paper by Artan Sheshmani, Yizhuang You, Wenbo Fu, and Ahmadreza Azizi changes that, constructing an architecture called the RG-flow categorifier that encodes how information flows across scales as its core operating principle.

The team applied the architecture to a genuinely difficult problem in computational biology: predicting how influenza viruses evolve to evade the human immune system.

Key Insight: By embedding the mathematical structure of renormalization group flows directly into a neural network, the researchers built a classifier that can not only recognize genomic patterns, but generate new, biologically plausible viral sequences that might escape immune detection.

How It Works

In statistical mechanics, a many-body system (billions of interacting spins in a magnet, say) is too complex to analyze directly. RG solves this through coarse-graining: iteratively grouping small-scale variables into effective large-scale ones, building a hierarchy of descriptions that zooms out step by step. A genomic sequence has the same structure, with many tokens at the microscopic level and coherent meaning only emerging at higher scales.
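To make coarse-graining concrete, here is a minimal sketch of the textbook block-spin idea on a one-dimensional chain of +1/-1 spins. The majority rule, the block size of three, and the example chain are illustrative assumptions chosen for this sketch; they are not taken from the paper.

```python
def coarse_grain(spins, block=3):
    """Majority-rule block-spin step: one +1/-1 value per block of spins.

    Assumes an odd block size so that a block of +1/-1 spins can never tie.
    """
    if len(spins) % block != 0:
        raise ValueError("chain length must be a multiple of the block size")
    coarse = []
    for start in range(0, len(spins), block):
        total = sum(spins[start:start + block])
        coarse.append(1 if total > 0 else -1)
    return coarse


# Illustrative 27-spin chain (made up for this example).
chain = [1, 1, -1, -1, -1, -1, 1, -1, 1,
         1, 1, 1, -1, 1, -1, 1, 1, 1,
         -1, -1, 1, 1, 1, -1, 1, 1, 1]

# Build the hierarchy of descriptions: 27 -> 9 -> 3 -> 1 spins.
levels = [chain]
while len(levels[-1]) >= 3:
    levels.append(coarse_grain(levels[-1]))

for depth, level in enumerate(levels):
    print(depth, level)
```

Each level discards detail that the majority vote cannot see, which is exactly why a hand-written rule like this one is lossy and cannot be run in reverse.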

The catch is that physicists typically define the coarse-graining rules by hand. For real-world data like protein sequences, those rules aren’t obvious. The team’s solution borrows from holographic duality, the idea from string theory that a physical system in flat space can be equivalently described by a theory in a higher-dimensional curved space.

In the RG context, the optimal coarse-graining transformation maps flat data space into a hyperbolic geometry, a curved, saddle-shaped space that naturally organizes information by hierarchy. Features at different length scales become disentangled in this curved space, and short-range correlations in the interior represent long-range correlations in the original data.
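One way to see why hyperbolic space suits hierarchies is to compute distances in the Poincaré disk, a standard model of hyperbolic geometry. The sketch below uses the usual closed-form distance for that model; the choice of the Poincaré disk and the sample points are assumptions made for illustration, since the article does not say which model the paper uses.

```python
import math


def poincare_distance(u, v):
    """Geodesic distance between two points strictly inside the unit disk."""
    def sq_norm(p):
        return sum(c * c for c in p)

    if sq_norm(u) >= 1.0 or sq_norm(v) >= 1.0:
        raise ValueError("points must lie strictly inside the unit disk")
    diff = [a - b for a, b in zip(u, v)]
    ratio = 2.0 * sq_norm(diff) / ((1.0 - sq_norm(u)) * (1.0 - sq_norm(v)))
    return math.acosh(1.0 + ratio)


# Two pairs of points with the same Euclidean separation (0.1).
near_center = poincare_distance((0.0, 0.0), (0.1, 0.0))
near_edge = poincare_distance((0.8, 0.0), (0.9, 0.0))
print(near_center, near_edge)
```

The pair near the edge is several times farther apart than the pair near the center, even though both look equally close on the page. That rapidly growing room toward the boundary is what lets tree-like, multi-scale structure spread out without crowding.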

To make this invertible and trainable, the researchers combine three ingredients:

- Neural ODEs: Network depth becomes a continuous parameter governed by a differential equation. The network flows smoothly through scales rather than jumping in discrete steps, with RG “flow time” as the governing variable.

- Flow-based generative models: Bijective transformations that let the network both classify data and generate new samples by running the same process forwards or backwards.

- Hyperbolic space embedding: Hierarchical structure is encoded in the geometry itself, with features organized by relevance at each scale.
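The first two ingredients can be sketched together: a vector field integrated over a continuous "flow time", then integrated backwards to recover the input. This is a toy illustration only. The weights `W` and `B`, the tanh vector field, and the fixed-step Runge-Kutta integrator are all hypothetical stand-ins, not the paper's trained network or solver.

```python
import math

# Hypothetical fixed weights standing in for a trained network.
W = [[0.5, -0.3], [0.2, 0.4]]
B = [0.1, -0.2]


def velocity(x):
    """Vector field dx/dt = tanh(W x + b)."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(W, B)]


def rk4_step(x, h):
    """One classical fourth-order Runge-Kutta step of size h (h may be negative)."""
    k1 = velocity(x)
    k2 = velocity([xi + 0.5 * h * k for xi, k in zip(x, k1)])
    k3 = velocity([xi + 0.5 * h * k for xi, k in zip(x, k2)])
    k4 = velocity([xi + h * k for xi, k in zip(x, k3)])
    return [xi + h / 6.0 * (a + 2.0 * b + 2.0 * c + d)
            for xi, a, b, c, d in zip(x, k1, k2, k3, k4)]


def flow(x, t0, t1, steps=100):
    """Integrate the state from flow time t0 to t1; t1 < t0 runs the flow in reverse."""
    h = (t1 - t0) / steps
    for _ in range(steps):
        x = rk4_step(x, h)
    return x


x0 = [1.0, -0.5]
z = flow(x0, 0.0, 1.0)      # forward: data -> latent
x_back = flow(z, 1.0, 0.0)  # backward: latent -> data
print(z, x_back)
```

Because the forward and backward passes follow the same differential equation, the input is recovered up to the integrator's numerical error. That reversibility is what allows one model to serve as both classifier and generator.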

The algorithm separates relevant fields (features that dominate at large scales) from irrelevant fields (microscopic fluctuations that wash out under coarse-graining). An objective function maximizes the disentanglement between the two, mirroring the physical intuition of RG flows converging toward fixed points where scale-invariance holds.
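A rough picture of this split, under strong simplifying assumptions: replace the learned transformation with a fixed Haar-like rotation that turns each pair of neighboring values into a scaled sum (kept as "relevant" and passed up a level) and a scaled difference (set aside as "irrelevant"). The function names and the example signal below are hypothetical, and the paper's transformations are trained rather than fixed.

```python
import math

S = 1.0 / math.sqrt(2.0)


def split(x):
    """One invertible step: pairwise sums (relevant) and differences (irrelevant)."""
    relevant = [S * (x[i] + x[i + 1]) for i in range(0, len(x), 2)]
    irrelevant = [S * (x[i] - x[i + 1]) for i in range(0, len(x), 2)]
    return relevant, irrelevant


def merge(relevant, irrelevant):
    """Exact inverse of split."""
    x = []
    for r, d in zip(relevant, irrelevant):
        x.extend([S * (r + d), S * (r - d)])
    return x


def encode(x):
    """Coarse-grain repeatedly, storing the irrelevant part found at each scale.

    Assumes len(x) is a power of two.
    """
    latents = []
    while len(x) > 1:
        x, irrelevant = split(x)
        latents.append(irrelevant)
    return x, latents


def decode(top, latents):
    """Rebuild the signal from the coarsest value and the stored irrelevant parts."""
    x = top
    for irrelevant in reversed(latents):
        x = merge(x, irrelevant)
    return x


# Made-up signal: two plateaus with small fluctuations on top.
signal = [4.0, 4.2, 3.9, 4.1, -2.0, -2.1, -1.9, -2.2]
top, latents = encode(signal)
restored = decode(top, latents)
print(top, latents)
```

In this example the finest-scale differences are small while the coarse values carry the plateau structure, and nothing is lost because every step can be undone. Replacing the stored irrelevant values with random draws before decoding would produce a new sample, which is the generative direction in miniature.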

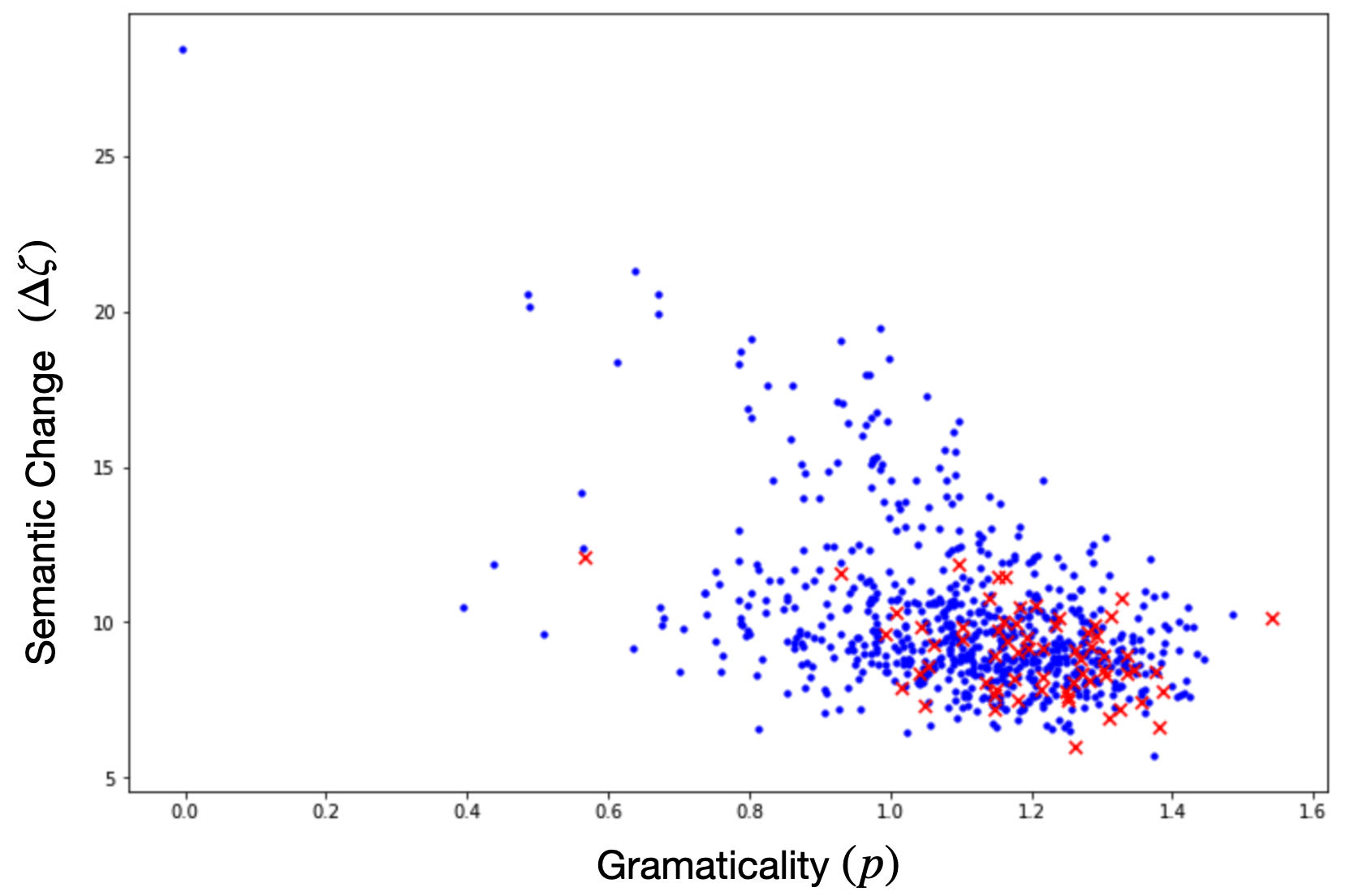

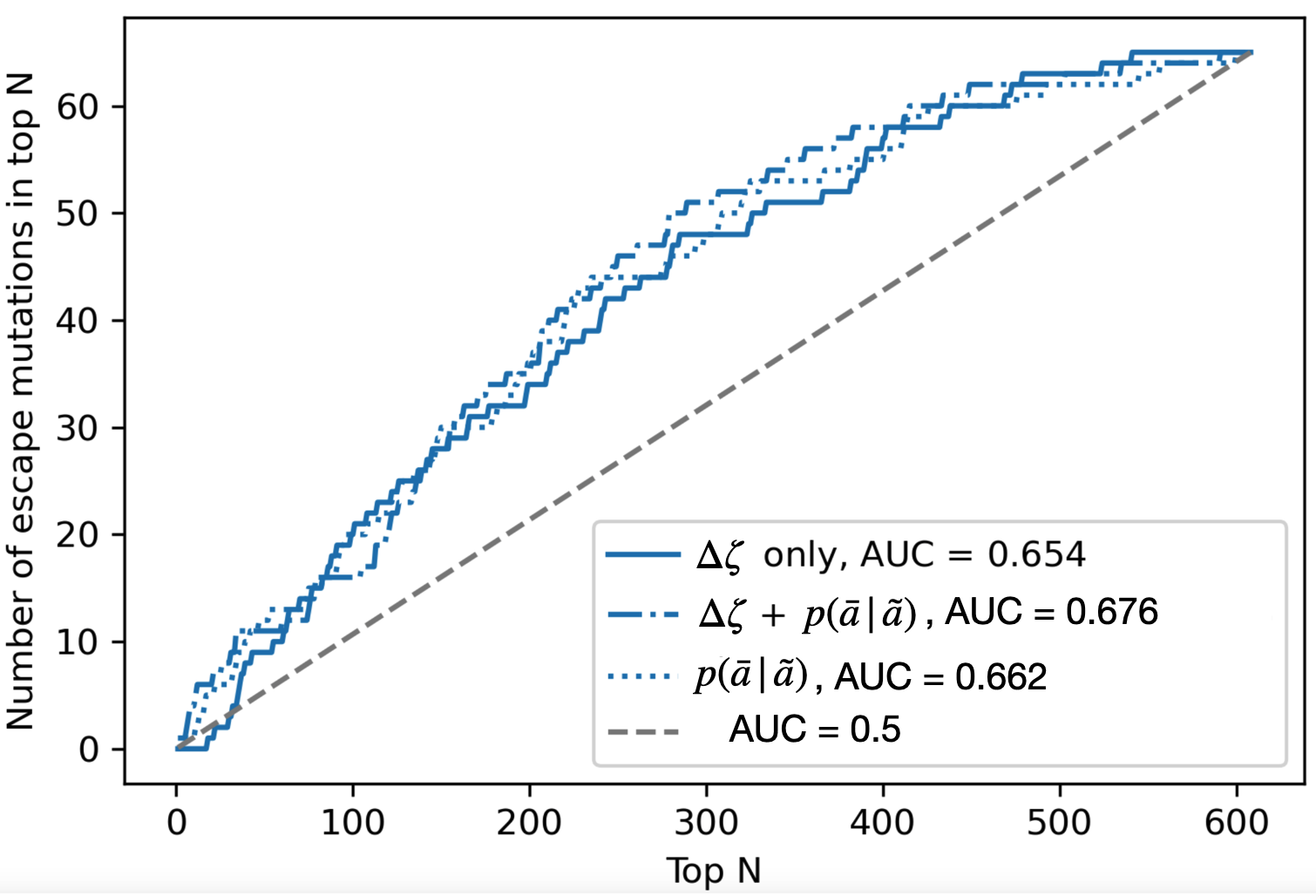

The experiments focused on flu virus genomics. Trained on hemagglutinin protein sequences, the surface proteins that determine strain identity and immune response, the model first learned the statistical distribution of amino acid residues at each position along the sequence. It then moved to full sequence distribution learning, capturing correlations across the entire chain. The most striking result came from the viral escape mutation task: given sequences known to evade immune detection, the trained model generated new, statistically plausible sequences with similar escape properties. None of them appeared in the training data.
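The first stage, learning a residue distribution at each position, can be illustrated with plain counting. The sketch below is a simple frequency table, not the paper's model, and the five short sequences are made up for the example rather than drawn from real hemagglutinin data.

```python
import random
from collections import Counter

# Made-up toy peptides of equal length; NOT real hemagglutinin sequences.
toy_sequences = ["MKTAI", "MKTVI", "MRTAI", "MKSAL", "MKTAI"]


def position_frequencies(seqs):
    """Return one {residue: frequency} dict per position along the sequence."""
    length = len(seqs[0])
    if any(len(s) != length for s in seqs):
        raise ValueError("all sequences must have the same length")
    freqs = []
    for pos in range(length):
        counts = Counter(s[pos] for s in seqs)
        freqs.append({res: n / len(seqs) for res, n in counts.items()})
    return freqs


def sample_independent(freqs, rng):
    """Draw each position independently from its own residue distribution."""
    return "".join(
        rng.choices(list(f), weights=list(f.values()))[0] for f in freqs
    )


freqs = position_frequencies(toy_sequences)
rng = random.Random(0)
sample = sample_independent(freqs, rng)
print(freqs)
print(sample)
```

Sampling each position independently ignores any dependence between positions. That gap is what the second stage, full sequence distribution learning, is meant to close.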

Why It Matters

The connection between RG theory and deep learning has been floating around the theoretical ML community for years. Convolutional networks seem to implement something like coarse-graining, and network depth parallels a physical system’s flow toward large-scale behavior. But these analogies had stayed informal.

This paper makes them precise, embedding the RG transformation directly into the architecture with grounding in differential geometry, hyperbolic geometry, and holographic duality.

The biomedical angle deserves attention. Predicting viral evolution, not just classifying known strains but generating hypothetical future variants, is the kind of problem where standard ML approaches struggle. The RG categorifier has a structural advantage here: it doesn’t memorize training sequences. It learns the hierarchical structure that generates them. The same framework could extend to protein folding, drug design, or any sequential data where multi-scale structure matters.

Bottom Line: The RG-flow categorifier translates one of quantum field theory’s central conceptual tools into a working AI architecture, then demonstrates it by generating new flu virus sequences that could evade immune detection, pointing toward new approaches in both AI research and pandemic preparedness.

IAIFI Research Highlights

This work connects renormalization group theory, a pillar of quantum field theory and statistical physics, with neural network architecture design, translating holographic duality and hyperbolic geometry into a concrete, trainable machine learning system.

The RG-flow categorifier introduces an architecture capable of both classification and generation at all network layers, built on a physically motivated, invertible coarse-graining framework using neural ODEs and flow-based generative models.

The paper shows how RG flow operators can be constructed on moduli spaces of smooth maps, bringing together nonlinear sigma models and holographic duality in a single algorithmic framework.

Future directions include scaling the architecture to larger genomic datasets and extending the holographic mapping to two-dimensional data such as images. The full paper is available at [arXiv:2203.07975](https://arxiv.org/abs/2203.07975), with related foundational work at [arXiv:2103.14770](https://arxiv.org/abs/2103.14770).