Chained Quantile Morphing with Normalizing Flows

Authors

Samuel Bright-Thonney, Philip Harris, Patrick McCormack, Simon Rothman

Abstract

Accounting for inaccuracies in Monte Carlo simulations is a crucial step in any high energy physics analysis. It becomes especially important when training machine learning models, which can amplify simulation inaccuracies and introduce large discrepancies and systematic uncertainties when the model is applied to data. In this paper, we introduce a method to transform simulated events to better match data using normalizing flows, a class of deep learning-based density estimation models. Our proposal uses a technique called chained quantile morphing, which corrects a set of observables by iteratively shifting each entry according to a conditional cumulative distribution function. We demonstrate the technique on a realistic particle physics dataset, and compare it to a neural network-based reweighting method. We also introduce a new contrastive learning technique to correct high-dimensional particle-level inputs, which naively cannot be efficiently corrected with morphing strategies.

Concepts

The Big Picture

Imagine training a self-driving car exclusively in a video game, then expecting it to navigate real city streets. The simulated world looks close enough (same roads, same traffic lights), but subtle differences accumulate. The car has learned the quirks of the simulation, not reality.

Physicists at the Large Hadron Collider face a version of this problem. They rely on Monte Carlo simulations (computer programs that recreate the chaos of proton-proton collisions by generating millions of random events) to train the machine learning algorithms that sift through petabytes of experimental data. These simulations are impressively detailed, but not perfect.

They struggle to capture every nuance of how particles interact with detectors, how quarks clump into composite particles, and dozens of other messy real-world effects. When a neural network trained on simulated data encounters real collision events, the gaps between simulation and reality translate directly into measurement errors.

This problem has grown more acute as physicists have adopted more powerful machine learning models operating on granular, particle-level data. Those models are also more sensitive to simulation quirks. Researchers from Cornell and MIT, working under the IAIFI umbrella, have developed a solution: a technique called chained quantile morphing with normalizing flows that systematically reshapes simulated data to match reality, without discarding the simulations entirely.

Key Insight: Rather than reweighting simulated events or throwing them out, chained quantile morphing physically transforms the simulated data distribution to match experimental data, correcting the simulation itself, one observable at a time.

How It Works

The core idea draws on a clean mathematical fact: in one dimension, any continuous probability distribution can be transformed into any other using cumulative distribution functions (CDFs), which give the fraction of a dataset that falls below a given value. The recipe is almost trivial. If you know the quantile at which a simulated value sits in the simulated distribution, you can map it to the value at the same quantile of the real distribution. Same quantile, different value. The simulation measured your photon at 47 GeV; the real detector would have seen 49 GeV. Shift it.
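A minimal one-dimensional sketch of this map, $x' = F_{\text{data}}^{-1}(F_{\text{sim}}(x))$, is below. It assumes both CDFs are estimated empirically from reference samples (the paper uses normalizing flows instead), and the Gaussian "photon energies" are made-up toy numbers chosen to echo the 47 GeV vs. 49 GeV example.

```python
import numpy as np

def quantile_morph_1d(x_sim, sim_ref, data_ref):
    """Map simulated values onto the data distribution: x' = F_data^{-1}(F_sim(x)).

    Both CDFs are estimated empirically from reference samples (an assumption
    of this sketch; the paper estimates them with normalizing flows).
    """
    sim_sorted = np.sort(sim_ref)
    # Empirical simulation CDF at each x; the -0.5 mid-rank offset keeps u inside (0, 1)
    u = (np.searchsorted(sim_sorted, x_sim, side="right") - 0.5) / len(sim_sorted)
    u = np.clip(u, 0.0, 1.0)
    # Empirical data quantile function evaluated at the same quantile u
    return np.quantile(data_ref, u)

# Toy example (assumed numbers): simulated photon energies sit about 2 GeV low
rng = np.random.default_rng(0)
sim = rng.normal(47.0, 2.0, size=50_000)
data = rng.normal(49.0, 2.0, size=50_000)
corrected = quantile_morph_1d(sim, sim, data)
print(f"sim mean {sim.mean():.2f}, corrected mean {corrected.mean():.2f}, data mean {data.mean():.2f}")
```

Because both the empirical CDF and the quantile function are monotone, the map never reorders events: the highest-energy simulated photon stays the highest-energy photon after correction.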

Higher dimensions are where things get hard. Particle collisions don’t produce single numbers. They produce jets of dozens of particles, each with energy, momentum, and angular coordinates. These observables are deeply correlated, so correcting each one independently would destroy those correlations and produce physically nonsensical events.

Chained quantile morphing (CQM) solves this by correcting observables sequentially, each conditioned on those already corrected:

- Correct observable $x_1$ using its marginal CDF

- Correct $x_2$ conditioned on the corrected $x_1$

- Correct $x_3$ conditioned on corrected $x_1$ and $x_2$

- Continue until all observables are corrected
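Written out in our own notation (an assumption, not necessarily the paper's), with $F_k$ the conditional CDF of the simulation and $G_k$ the conditional CDF of data, one consistent way to state the chain is the Knothe–Rosenblatt construction:

$$
x_1' = G_1^{-1}\big(F_1(x_1)\big), \qquad
x_k' = G_k^{-1}\big(F_k(x_k \mid x_1,\dots,x_{k-1}) \,\big|\, x_1',\dots,x_{k-1}'\big), \quad k = 2,\dots,d.
$$

The simulated CDF is evaluated on the original simulated values, so each quantile $u_k = F_k(x_k \mid x_1,\dots,x_{k-1})$ is uniform and independent of the earlier observables. Inverting the data CDF at the already-corrected values $x_1',\dots,x_{k-1}'$ then reproduces the joint distribution of data, correlations included.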

Each step uses a normalizing flow, a deep learning model that learns smooth, invertible transformations between probability distributions, to estimate the conditional CDFs continuously. Earlier implementations relied on discretized, binned approximations. Normalizing flows replace those with neural estimators that handle complex correlations without binning artifacts.
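The loop below sketches the chain in Python. To stay self-contained, it swaps the normalizing flow for a much simpler conditional model: a Gaussian whose mean is a linear function of the earlier observables, fit by least squares (the hypothetical helper `fit_conditional_gaussian`). That stand-in is exact only for jointly Gaussian toys; in the method described here, each step would instead use a trained conditional flow to supply the CDF and its inverse. All function names and numbers are illustrative assumptions.

```python
import numpy as np
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # provides Phi (cdf) and Phi^{-1} (inv_cdf)

def _design(cond):
    # Prepend an intercept column to the conditioning variables x_{<k}
    return np.column_stack([np.ones(len(cond)), cond])

def fit_conditional_gaussian(X, k):
    """Hypothetical stand-in for a conditional flow: fit x_k | x_{<k} as
    N(w . [1, x_{<k}], sigma^2) by ordinary least squares."""
    A = _design(X[:, :k])
    w, *_ = np.linalg.lstsq(A, X[:, k], rcond=None)
    sigma = np.std(X[:, k] - A @ w)
    return w, sigma

def conditional_cdf(model, cond, x):
    w, sigma = model
    z = (x - _design(cond) @ w) / sigma
    return np.array([_STD_NORMAL.cdf(v) for v in z])

def conditional_inverse_cdf(model, cond, u):
    w, sigma = model
    u = np.clip(u, 1e-12, 1.0 - 1e-12)  # inv_cdf requires 0 < p < 1
    z = np.array([_STD_NORMAL.inv_cdf(p) for p in u])
    return _design(cond) @ w + sigma * z

def chained_quantile_morph(X_sim, X_data):
    """Correct the columns of X_sim one at a time, in column order."""
    X_corr = np.empty_like(X_sim)
    for k in range(X_sim.shape[1]):
        sim_model = fit_conditional_gaussian(X_sim, k)
        data_model = fit_conditional_gaussian(X_data, k)
        # Quantile of x_k in simulation, given the ORIGINAL simulated x_{<k}
        u = conditional_cdf(sim_model, X_sim[:, :k], X_sim[:, k])
        # Value at the same quantile in data, given the CORRECTED x'_{<k}
        X_corr[:, k] = conditional_inverse_cdf(data_model, X_corr[:, :k], u)
    return X_corr

# Toy usage (assumed numbers): the simulation has the wrong means and correlation
rng = np.random.default_rng(0)
X_sim = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.3], [0.3, 1.0]], size=20_000)
X_data = rng.multivariate_normal([1.0, -0.5], [[1.0, 0.8], [0.8, 1.0]], size=20_000)
X_corr = chained_quantile_morph(X_sim, X_data)
print("corrected means:", X_corr.mean(axis=0).round(2))
print("corrected correlation:", np.corrcoef(X_corr.T)[0, 1].round(2))
```

Since the simulated CDF sees the original inputs while the data inverse CDF sees the corrected ones, the output inherits the correlation of data rather than that of the simulation. Correcting each column independently with the 1D map would instead leave the simulated correlation in place.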

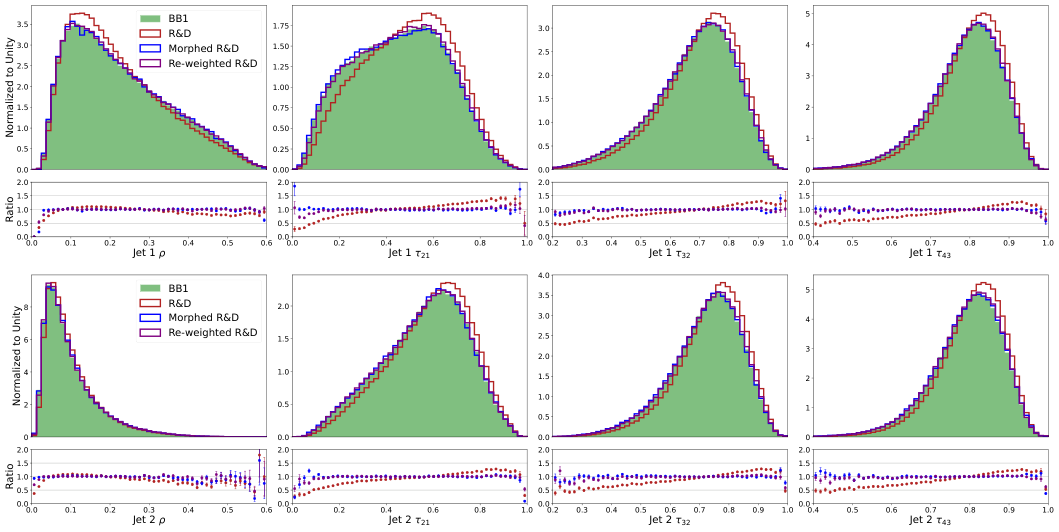

The result is a transformed simulated dataset where each event has been physically shifted to match the real data distribution, while preserving inter-observable correlations. The authors test this on realistic jet events (the particle sprays produced when high-energy quarks and gluons scatter), correcting observables like jet mass, charged particle fraction, and track multiplicity.

For truly high-dimensional inputs, with dozens or hundreds of particles per jet, CQM hits a wall. The chain of conditional CDFs becomes computationally intractable at that scale.

The workaround: use contrastive learning (a technique in which a neural network learns an embedding that places related inputs close together and unrelated inputs far apart) to compress the high-dimensional particle cloud into a low-dimensional summary capturing the physically relevant information. CQM then operates in this compressed space. Morphing there and mapping back to particle-level inputs corrects the full distribution without having to wrestle with the raw dimensionality.
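To make "contrastive" concrete, here is a generic InfoNCE-style loss, similar in spirit to SimCLR's NT-Xent objective. It is an illustrative assumption, not the paper's exact loss, augmentations, or architecture: each embedding is rewarded for being most similar to its own partner (for example, two perturbed views of the same jet) among all candidates in the batch.

```python
import numpy as np

def info_nce_loss(z_a, z_b, temperature=0.1):
    """InfoNCE contrastive loss for a batch of n pairs.

    Row i of z_a and row i of z_b form a positive pair; every other row of
    z_b serves as a negative for row i of z_a.
    """
    # Project embeddings onto the unit sphere so dot products are cosine similarities
    z_a = z_a / np.linalg.norm(z_a, axis=1, keepdims=True)
    z_b = z_b / np.linalg.norm(z_b, axis=1, keepdims=True)
    logits = (z_a @ z_b.T) / temperature          # (n, n) similarity matrix
    logits -= logits.max(axis=1, keepdims=True)    # stabilize the softmax
    log_softmax = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # Cross-entropy with the matching (diagonal) entry as the correct "class"
    return -np.mean(np.diag(log_softmax))

# Toy check (assumed data): slightly perturbed copies should give a lower loss
# when correctly paired than when the pairing is shuffled.
rng = np.random.default_rng(0)
z = rng.normal(size=(256, 8))
z_aug = z + 0.05 * rng.normal(size=z.shape)
loss_paired = info_nce_loss(z, z_aug)
loss_shuffled = info_nce_loss(z, z_aug[rng.permutation(256)])
print(f"paired {loss_paired:.3f}, shuffled {loss_shuffled:.3f}")
```

Minimizing a loss like this with a network producing `z_a` and `z_b` is what shapes the low-dimensional embedding in which a morphing step could then operate.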

Why It Matters

The stakes here are higher than they might appear. Monte Carlo corrections aren’t just a technical nuisance. They directly limit the precision of Standard Model measurements and the sensitivity of searches for new physics. As the LHC enters high-luminosity operation and physicists squeeze every last bit of statistical power from their datasets, systematic uncertainties from simulation mismodeling could become the dominant limitation. A method that reduces those uncertainties could be what separates a discovery from noise.

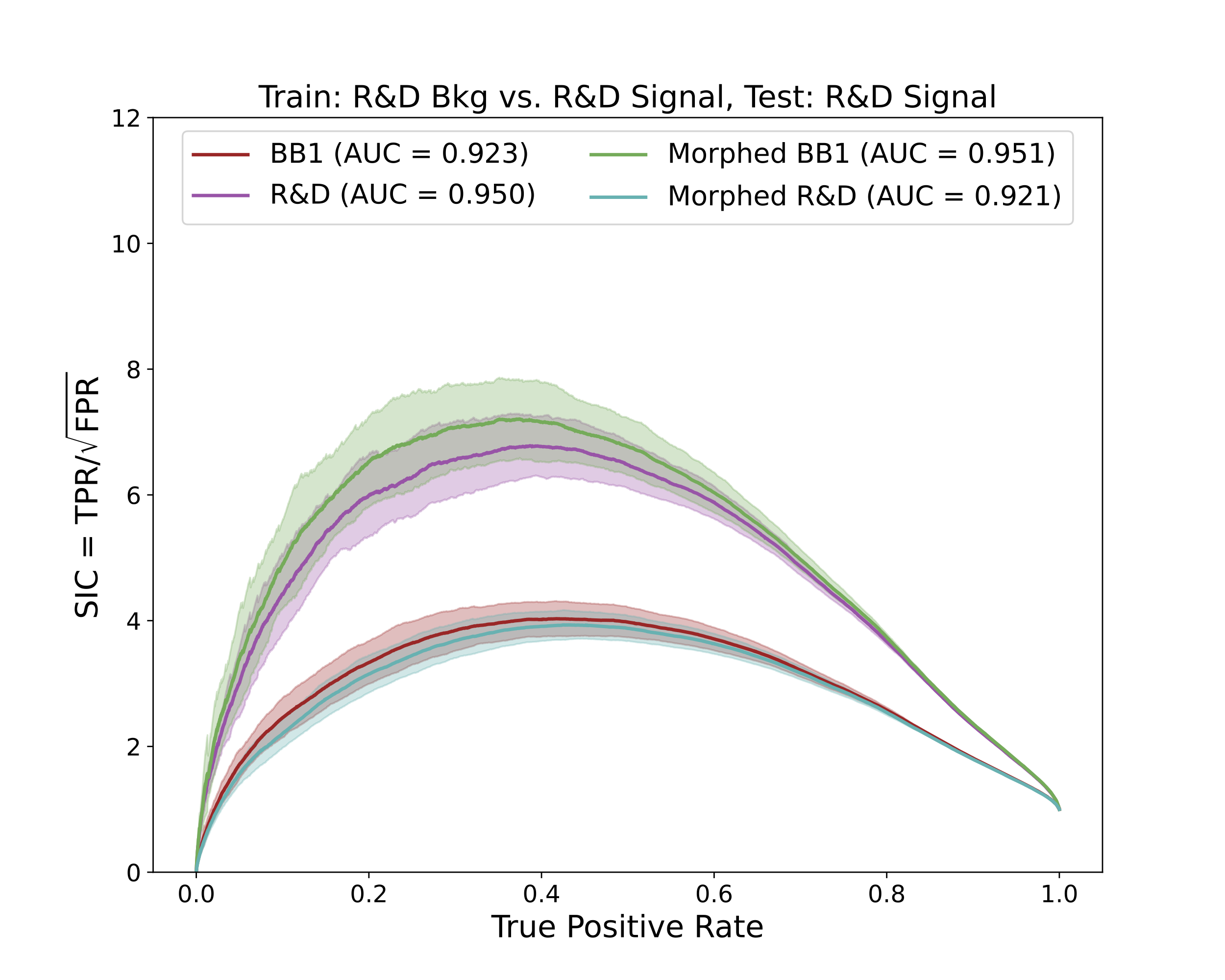

CQM with normalizing flows has real advantages over existing reweighting methods. When simulated and real distributions differ substantially, particularly in the tails where rare but important events live, reweighting assigns enormous statistical weights to individual events and inflates statistical uncertainties. Morphing sidesteps this by physically moving events rather than up-weighting them.
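The cost of reweighting can be quantified with the Kish effective sample size, $(\sum_i w_i)^2 / \sum_i w_i^2$. The sketch below uses a toy in which the "data" is the simulation shifted in mean, so the exact density-ratio weights are known (in practice a classifier would estimate them). For a pure mean shift $\delta$ of a unit Gaussian, $\mathbb{E}[w^2] = e^{\delta^2}$, so the effective fraction falls roughly like $e^{-\delta^2}$ for modest shifts. Morphing keeps every event at unit weight, so its effective fraction stays at 1. The setup and helper names are illustrative assumptions.

```python
import numpy as np

def gaussian_pdf(x, mu, sigma):
    return np.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))

def kish_effective_sample_size(w):
    """(sum w)^2 / sum(w^2): equals n for unit weights, shrinks as weights spread out."""
    return w.sum() ** 2 / np.sum(w ** 2)

def effective_fraction(shift, n=100_000, seed=0):
    """Fraction of simulated events that effectively survive reweighting a
    N(0, 1) simulation onto a toy N(shift, 1) 'data' distribution."""
    rng = np.random.default_rng(seed)
    x_sim = rng.normal(0.0, 1.0, size=n)
    # Exact density-ratio weights p_data(x) / p_sim(x); largest in the tail data favors
    w = gaussian_pdf(x_sim, shift, 1.0) / gaussian_pdf(x_sim, 0.0, 1.0)
    return kish_effective_sample_size(w) / n

for shift in (0.1, 1.0, 2.0):
    print(f"shift {shift}: effective fraction {effective_fraction(shift):.3f}")
```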

The authors show that CQM is robust to small levels of signal contamination, can be trained in a control region, and accurately interpolates into a blinded signal region. These are all essential properties for deployment in real LHC analyses. Against neural network reweighting, CQM shows competitive performance overall and does best precisely in the regimes where reweighting struggles most.

Bottom Line: Chained quantile morphing with normalizing flows offers a principled, powerful way to correct Monte Carlo simulations for particle physics analysis. A new contrastive learning extension opens the door to correcting the high-dimensional particle-level data that modern ML models need.

IAIFI Research Highlights

This work applies modern deep learning (normalizing flows and contrastive learning) to a central practical challenge in experimental particle physics, showing that generative ML models can serve as precision calibration tools at the LHC.

The paper introduces a continuous, flow-based implementation of chained quantile morphing and a contrastive learning strategy for high-dimensional domain adaptation. These techniques have potential applications beyond physics, wherever simulation-to-reality gaps limit ML performance.

By reducing systematic uncertainties from Monte Carlo mismodeling, this method directly improves the precision of LHC measurements and the sensitivity of new physics searches, addressing one of the primary bottlenecks in current experimental analyses.

Future work could extend CQM to even higher-dimensional inputs and explore integration into end-to-end analysis pipelines. The method is detailed in [arXiv:2309.15912](https://arxiv.org/abs/2309.15912) by Bright-Thonney, Harris, McCormack, and Rothman (2023).