Comparing Point Cloud Strategies for Collider Event Classification

Authors

Peter Onyisi, Delon Shen, Jesse Thaler

Abstract

In this paper, we compare several event classification architectures defined on the point cloud representation of collider events. These approaches, which are based on the frameworks of deep sets and edge convolutions, circumvent many of the difficulties associated with traditional feature engineering. To benchmark our architectures against more traditional event classification strategies, we perform a case study involving Higgs boson decays to tau leptons. We find a 2.5 times increase in performance compared to a baseline ATLAS analysis with engineered features. Our point cloud architectures can be viewed as simplified versions of graph neural networks, where each particle in the event corresponds to a graph node. In our case study, we find the best balance of performance and computational cost for simple pairwise architectures, which are based on learned edge features.

Concepts

The Big Picture

Imagine trying to describe a fireworks display to someone who can only hear a fixed number of bangs. You’d have to decide in advance: record the five loudest? The first five? What if the best information hides in the pattern of smaller bursts that got cut off?

This is roughly the problem physicists face when classifying collider events, the messy particle-spraying aftermath of proton collisions at the Large Hadron Collider (LHC). Every collision produces a different number of particles flying in different directions. Traditional machine learning demands fixed-size inputs, forcing physicists into uncomfortable choices about which particles to keep and how to summarize the rest.

A team from UT Austin, Stanford, and MIT took a different approach. Instead of squeezing variable-length collision data into rigid templates, they treated each event as a point cloud: an unordered set of particles where each point encodes that particle’s energy, speed, and direction. This representation matches the physics. There’s no “correct” ordering to particles, and no reason to truncate the list.

Their question wasn’t whether point clouds work (earlier work established that) but which kind of point cloud architecture works best, and at what computational cost. Their answer: a simple pairwise architecture outperforms the benchmark set by the ATLAS experiment, one of the flagship particle detectors at the LHC, by a factor of 2.5 while remaining computationally tractable.

Key Insight: By representing collider events as point clouds and learning directly from particle pairs, researchers can more than double classification performance compared to hand-engineered feature baselines, without the combinatorial headaches of traditional approaches.

How It Works

The researchers focused on a specific problem: identifying Higgs boson decays into two tau leptons (H→ττ) against a noisy background of other processes. Tau leptons are short-lived and hard to reconstruct, making this channel a good stress test for new classification strategies.

Each collision event becomes a point cloud, where each particle is an n-dimensional vector encoding its energy, momentum direction, and other properties. The cloud is unordered and variable-length. The challenge is building neural networks that operate on such inputs without imposing artificial structure.

The team built and compared seven distinct architectures, organized around two foundational ideas:

- Deep Sets: Compute global event properties using permutation-symmetric functions, meaning functions that give the same answer regardless of particle ordering. Think of summing contributions from each particle, then feeding that sum into a neural network.

- Edge Convolutions (EdgeConvs): Compute local information about each particle relative to its neighbors, capturing how particles relate to each other rather than just their individual properties.

From these building blocks, the paper constructs a hierarchy. The simplest, called particlewise, looks at each particle independently. The pairwise architecture considers every particle pair together, learning features from their combined kinematics. The tripletwise extends this to all particle triples. More elaborate variants add iterated layers, nonlinear projections, and global context carried into local computations.

The pairwise architecture has a useful dual interpretation: it simultaneously acts as a deep set operating on pairs and as a symmetric pooling of edge convolutions. This makes it both powerful and interpretable.

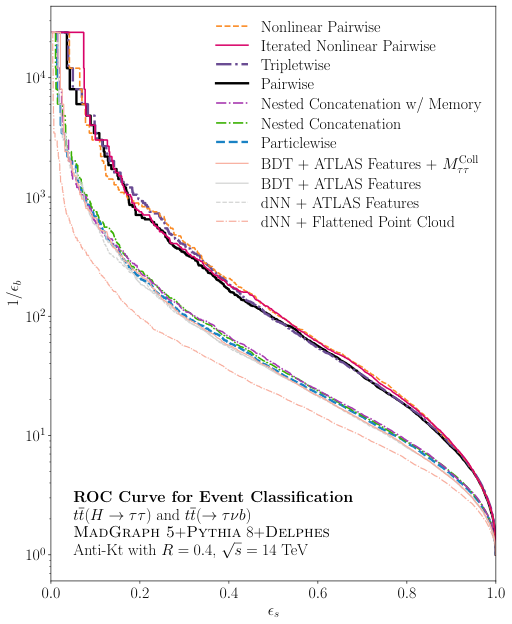

Benchmarking against ATLAS. The team trained all architectures on Monte Carlo simulated events and compared them against the ATLAS collaboration’s published analysis, which uses carefully hand-engineered features fed into boosted decision trees.

The pairwise architecture delivered a 2.5× performance improvement: for every fake Higgs→ττ event accepted, the network finds 2.5 times as many real ones. Even the simplest point cloud approaches outperformed the hand-engineered baseline.

More complex architectures showed marginal gains but scaled poorly. Tripletwise computations grow as O(n³) in particle count; pairwise grows as O(n²). For typical LHC events with dozens to hundreds of particles, that difference is decisive.

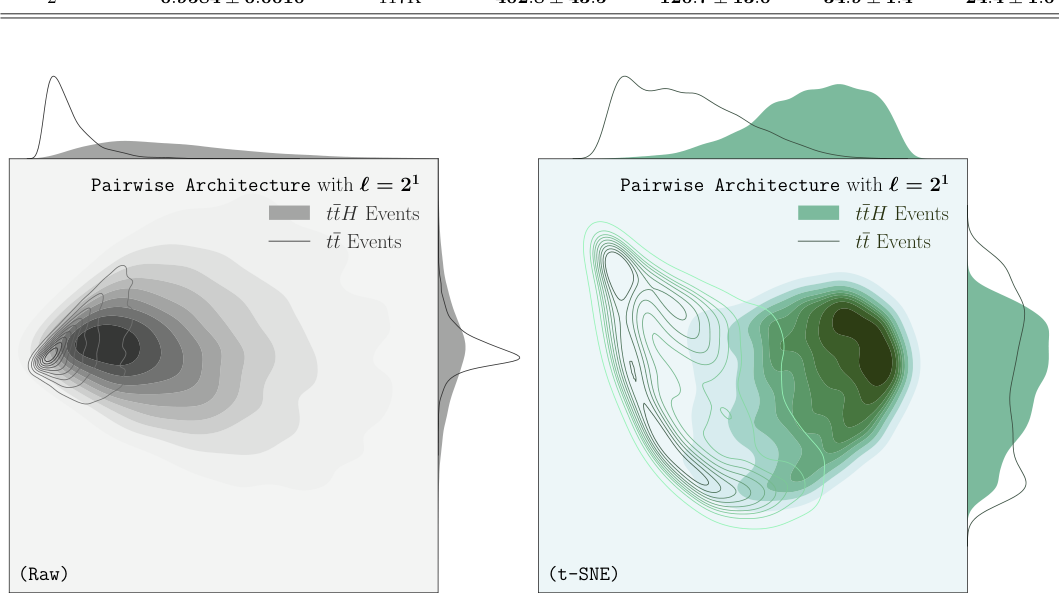

One surprise: even a single-dimensional latent space in the pairwise architecture outperformed the full ATLAS analysis. With a few dozen dimensions, performance plateaus near optimal. The features the network learned to extract correlate with the hand-engineered features physicists already use, discovered automatically from raw kinematics without being told what to look for.

Why It Matters

Point cloud methods were developed for 3D computer vision (think autonomous vehicles analyzing LiDAR scans). This paper systematically tests whether those ideas transfer to collider physics, and which variants matter most. Not all point cloud architectures are equal, and the computational tradeoffs are real.

For the LHC physics program, the implications are concrete. The ATLAS and CMS collaborations process billions of events, so any deployed architecture must run at scale. A method 2.5× more sensitive to Higgs signals means experiments can reach discovery thresholds with less data, or probe rarer decay modes that were previously inaccessible.

The pairwise architecture is also conceptually simple: just a deep set acting on particle pairs. That makes it easier to validate, audit, and deploy than a black-box graph neural network. It matters when your detector costs billions of dollars and your results must withstand years of scrutiny.

The pairwise and tripletwise architectures can be seen as simplified graph neural networks (GNNs), and the paper’s framework provides a principled ladder for trading expressivity against cost. Future work could explore dynamic graph construction, letting the network decide which pairs to attend to, or incorporate detector-specific symmetries directly into the architecture.

Bottom Line: A simple pairwise point cloud architecture more than doubles Higgs classification performance over traditional engineered features, while remaining computationally tractable. For LHC physics, the sweet spot lies in learning from particle pairs, not individual particles or complex graph structures.

IAIFI Research Highlights

This work connects graph-based deep learning from computer vision with experimental particle physics, showing that point cloud methods developed for 3D scene understanding work well for LHC event classification.

The paper provides a systematic benchmark of deep sets and edge convolution architectures on real physics data, clarifying when architectural complexity pays off and when simpler pairwise representations match or exceed more elaborate designs.

A 2.5× performance gain over the ATLAS baseline on H→ττ classification could meaningfully improve sensitivity to rare Higgs processes at the LHC without requiring additional collision data.

The pairwise architecture's success motivates exploration of dynamic graph construction and symmetry-aware variants; the full paper is available at [arXiv:2212.10659](https://arxiv.org/abs/2212.10659).

Original Paper Details

Comparing Point Cloud Strategies for Collider Event Classification

2212.10659

["Peter Onyisi", "Delon Shen", "Jesse Thaler"]

In this paper, we compare several event classification architectures defined on the point cloud representation of collider events. These approaches, which are based on the frameworks of deep sets and edge convolutions, circumvent many of the difficulties associated with traditional feature engineering. To benchmark our architectures against more traditional event classification strategies, we perform a case study involving Higgs boson decays to tau leptons. We find a 2.5 times increase in performance compared to a baseline ATLAS analysis with engineered features. Our point cloud architectures can be viewed as simplified versions of graph neural networks, where each particle in the event corresponds to a graph node. In our case study, we find the best balance of performance and computational cost for simple pairwise architectures, which are based on learned edge features.