Diffusion-HMC: Parameter Inference with Diffusion-model-driven Hamiltonian Monte Carlo

Authors

Nayantara Mudur, Carolina Cuesta-Lazaro, Douglas P. Finkbeiner

Abstract

Diffusion generative models have excelled at diverse image generation and reconstruction tasks across fields. A less explored avenue is their application to discriminative tasks involving regression or classification problems. The cornerstone of modern cosmology is the ability to generate predictions for observed astrophysical fields from theory and constrain physical models from observations using these predictions. This work uses a single diffusion generative model to address these interlinked objectives -- as a surrogate model or emulator for cold dark matter density fields conditional on input cosmological parameters, and as a parameter inference model that solves the inverse problem of constraining the cosmological parameters of an input field. The model is able to emulate fields with summary statistics consistent with those of the simulated target distribution. We then leverage the approximate likelihood of the diffusion generative model to derive tight constraints on cosmology by using the Hamiltonian Monte Carlo method to sample the posterior on cosmological parameters for a given test image. Finally, we demonstrate that this parameter inference approach is more robust to small perturbations of noise to the field than baseline parameter inference networks.

The Big Picture

Two numbers strongly shape the universe’s large-scale structure: Ω_m (the fraction of the universe’s energy density in the form of matter) and σ_8 (the amplitude of matter fluctuations on scales of 8 Mpc/h, a measure of how clumpy the matter distribution is). These leave subtle fingerprints on the dark matter density field, a map of where matter congregates across cosmic scales. Reading those fingerprints back out from an observation means working from effect to cause, and that is hard.

Traditional approaches compress the field into a power spectrum, a curve capturing structure at each scale, losing information in the process. Richer methods that work directly with the full map require computing how likely a given observation is, which gets expensive fast. Researchers Nayantara Mudur, Carolina Cuesta-Lazaro, and Douglas Finkbeiner have now built a single diffusion model that does both: generate realistic dark matter fields for any cosmology, and work backward from an observed field to tightly constrain Ω_m and σ_8.
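To make the compression step concrete, here is a minimal sketch of a radially binned power spectrum for a small square field. It is an illustration only, not the paper's analysis code: the function names, the 8×8 toy field, and the integer binning of |k| are assumptions chosen for brevity, and the naive Fourier transform would be replaced by an FFT for real maps.

```python
import cmath
import math

def dft2(field):
    """Naive 2D discrete Fourier transform (fine for tiny grids; use an FFT in practice)."""
    n = len(field)
    out = [[0j] * n for _ in range(n)]
    for kr in range(n):
        for kc in range(n):
            total = 0j
            for r in range(n):
                for c in range(n):
                    phase = -2j * math.pi * (kr * r + kc * c) / n
                    total += field[r][c] * cmath.exp(phase)
            out[kr][kc] = total
    return out

def power_spectrum(field):
    """Average |F(k)|^2 in integer bins of |k| for a square field."""
    n = len(field)
    modes = dft2(field)
    # Map array indices to signed wavenumbers (0, 1, ..., n/2, ..., -1).
    signed = [k if k <= n // 2 else k - n for k in range(n)]
    sums, counts = {}, {}
    for kr in range(n):
        for kc in range(n):
            k_bin = int(math.hypot(signed[kr], signed[kc]) + 0.5)
            power = abs(modes[kr][kc]) ** 2 / n ** 4
            sums[k_bin] = sums.get(k_bin, 0.0) + power
            counts[k_bin] = counts.get(k_bin, 0) + 1
    return [sums[b] / counts[b] for b in sorted(sums)]

# Toy field: a single plane wave with two full oscillations across the grid.
n = 8
wave = [[math.cos(2 * math.pi * 2 * c / n) for c in range(n)] for r in range(n)]
spectrum = power_spectrum(wave)  # 64 pixels reduced to one number per |k| bin
peak_bin = max(range(len(spectrum)), key=lambda b: spectrum[b])
```

The 64 pixel values collapse to a handful of numbers, one per scale, and the phase information that encodes non-Gaussian structure is discarded. That discarded information is what field-level methods aim to keep.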

Key Insight: Using the approximate likelihood built into a trained diffusion model, the researchers run Hamiltonian Monte Carlo to sample the full posterior over cosmological parameters, achieving tight, noise-robust constraints without bespoke summary statistics.

How It Works

The foundation is a denoising diffusion probabilistic model (DDPM), the same family of models behind image generators like Stable Diffusion. A fixed forward process gradually adds Gaussian noise to an image until only noise remains; the model learns to reverse that process step by step, recovering a clean image from noise. It is conditional: it takes Ω_m and σ_8 as inputs, learning what dark matter fields look like across a wide range of cosmologies.
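The noising half of a DDPM has a simple closed form, which the sketch below illustrates. It assumes the linear noise schedule and 1,000 steps commonly used as DDPM defaults; the schedule, step count, and function names here are illustrative assumptions, not values taken from the paper.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, linearly spaced (assumed schedule)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar[t] = product over s <= t of (1 - beta[s])."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def noise_field(x0, t, alpha_bars, rng):
    """Sample x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps.

    Returns the noised pixels and the noise eps that was added; a DDPM
    denoiser is typically trained to predict eps from x_t and t.
    """
    signal = math.sqrt(alpha_bars[t])
    sigma = math.sqrt(1.0 - alpha_bars[t])
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [signal * x + sigma * e for x, e in zip(x0, eps)]
    return xt, eps

rng = random.Random(0)
betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)

# Stand-in for the flattened pixels of one density-field slice.
x0 = [rng.uniform(-1.0, 1.0) for _ in range(16)]
x_early, _ = noise_field(x0, 10, alpha_bars, rng)   # still close to x0
x_late, _ = noise_field(x0, 999, alpha_bars, rng)   # almost pure noise
```

In a conditional model, the denoising network would additionally receive Ω_m and σ_8 as inputs when predicting the noise.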

Training data comes from the CAMELS Multifield Dataset, drawn from IllustrisTNG simulations. Each simulation tracks 256³ dark matter particles in a periodic box 25 Mpc/h on a side. The team trained on 10,500 two-dimensional field slices spanning 700 cosmologies, using a U-Net with circular convolutions to respect the periodic boundary conditions of cosmological simulations.
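A circular convolution treats the field as wrapping around at its edges, so structure leaving one side of the box re-enters on the other. The following is a dependency-free sketch of the idea on a tiny grid; the function names and the 4×4 example are illustrative assumptions and say nothing about how the authors' U-Net is implemented.

```python
def circular_pad(field, pad):
    """Wrap a 2D list-of-lists periodically by `pad` pixels on every side."""
    n_rows, n_cols = len(field), len(field[0])
    return [
        [field[(r - pad) % n_rows][(c - pad) % n_cols]
         for c in range(n_cols + 2 * pad)]
        for r in range(n_rows + 2 * pad)
    ]

def circular_conv2d(field, kernel):
    """Cross-correlate `field` with an odd-sized square kernel, periodic boundaries."""
    k = len(kernel)
    pad = k // 2
    padded = circular_pad(field, pad)
    n_rows, n_cols = len(field), len(field[0])
    out = [[0.0] * n_cols for _ in range(n_rows)]
    for r in range(n_rows):
        for c in range(n_cols):
            out[r][c] = sum(
                kernel[i][j] * padded[r + i][c + j]
                for i in range(k) for j in range(k)
            )
    return out

# A single spike in the top-left corner of a 4x4 periodic field.
field = [[0.0] * 4 for _ in range(4)]
field[0][0] = 1.0
kernel = [[1.0] * 3 for _ in range(3)]

smoothed = circular_conv2d(field, kernel)
# The spike spreads to the opposite corner because the box wraps around.
wraps_to_far_corner = smoothed[3][3] == 1.0
```

With zero padding instead, pixels near the edge would see artificial empty space beyond the boundary. Deep learning frameworks usually expose this as an option; in PyTorch, for example, `torch.nn.Conv2d` accepts `padding_mode='circular'`.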

Once trained, the model fills two roles:

- Forward emulator: Given Ω_m and σ_8, generate realistic dark matter density fields whose power spectra and higher-order statistics match true simulation outputs across the full parameter range.

- Inference engine: Given an observed field, constrain the parameters that produced it.

The inference step uses Hamiltonian Monte Carlo (HMC), a sampling method borrowed from physics. Think of a ball rolling across a hilly landscape where valleys mark likely parameter combinations. HMC uses gradient information to explore efficiently. The gradients come from the diffusion model itself: by tracking how reconstruction error changes as Ω_m and σ_8 are varied, the team extracts a differentiable approximate likelihood and uses HMC to walk through it, building a full posterior distribution.
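The mechanics of HMC can be sketched on a toy problem. In the snippet below, a correlated two-dimensional Gaussian in (Ω_m, σ_8) stands in for the diffusion model's approximate log-likelihood; the Gaussian, its mean and widths, the step size, and all function names are hypothetical choices for illustration. In the real method, the gradient would instead come from differentiating the diffusion model's loss with respect to the conditioning parameters.

```python
import math
import random

# Hypothetical stand-in posterior: correlated Gaussian in (Omega_m, sigma_8).
MEAN = (0.3, 0.8)
STD = (0.02, 0.03)
RHO = -0.5

def _standardize(theta):
    return (theta[0] - MEAN[0]) / STD[0], (theta[1] - MEAN[1]) / STD[1]

def log_post(theta):
    z0, z1 = _standardize(theta)
    return -(z0 * z0 - 2.0 * RHO * z0 * z1 + z1 * z1) / (2.0 * (1.0 - RHO * RHO))

def grad_log_post(theta):
    z0, z1 = _standardize(theta)
    c = 1.0 / (1.0 - RHO * RHO)
    return (-c * (z0 - RHO * z1) / STD[0], -c * (z1 - RHO * z0) / STD[1])

def leapfrog(theta, p, step_size, n_steps):
    """Integrate Hamiltonian dynamics with unit mass (potential = -log_post)."""
    theta, p = list(theta), list(p)
    grad = grad_log_post(theta)
    p = [pi + 0.5 * step_size * gi for pi, gi in zip(p, grad)]  # half kick
    for i in range(n_steps):
        theta = [ti + step_size * pi for ti, pi in zip(theta, p)]  # drift
        grad = grad_log_post(theta)
        kick = step_size if i < n_steps - 1 else 0.5 * step_size
        p = [pi + kick * gi for pi, gi in zip(p, grad)]
    return theta, p

def hmc(theta0, n_samples, step_size=0.006, n_steps=5, seed=0):
    rng = random.Random(seed)
    theta, samples, n_accept = list(theta0), [], 0
    for _ in range(n_samples):
        p0 = [rng.gauss(0.0, 1.0) for _ in theta]  # fresh momentum each step
        h0 = -log_post(theta) + 0.5 * sum(pi * pi for pi in p0)
        prop, p1 = leapfrog(theta, p0, step_size, n_steps)
        h1 = -log_post(prop) + 0.5 * sum(pi * pi for pi in p1)
        # Metropolis correction for the integrator's energy error.
        if rng.random() < math.exp(min(0.0, h0 - h1)):
            theta, n_accept = prop, n_accept + 1
        samples.append(tuple(theta))
    return samples, n_accept / n_samples

samples, accept_rate = hmc((0.25, 0.9), n_samples=2200)
kept = samples[200:]  # discard burn-in
post_mean = tuple(sum(s[i] for s in kept) / len(kept) for i in range(2))
```

The retained samples approximate the full posterior, so means, widths, and the correlation between Ω_m and σ_8 can all be read off from them. The step size and trajectory length were picked by hand for this toy target and would need tuning for a real likelihood.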

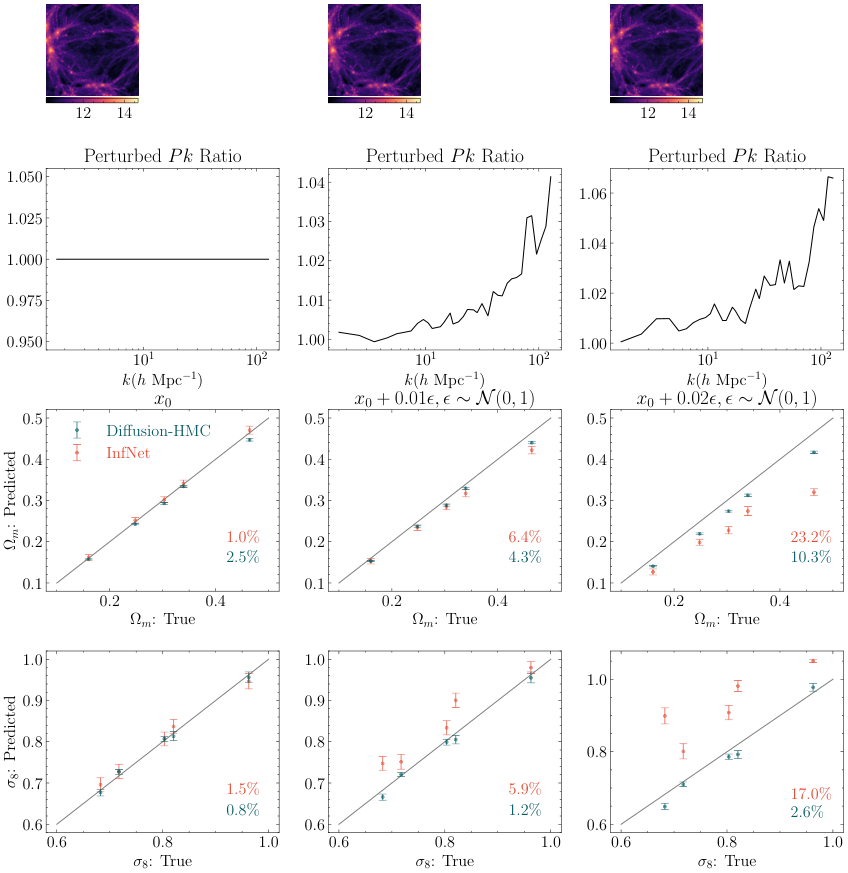

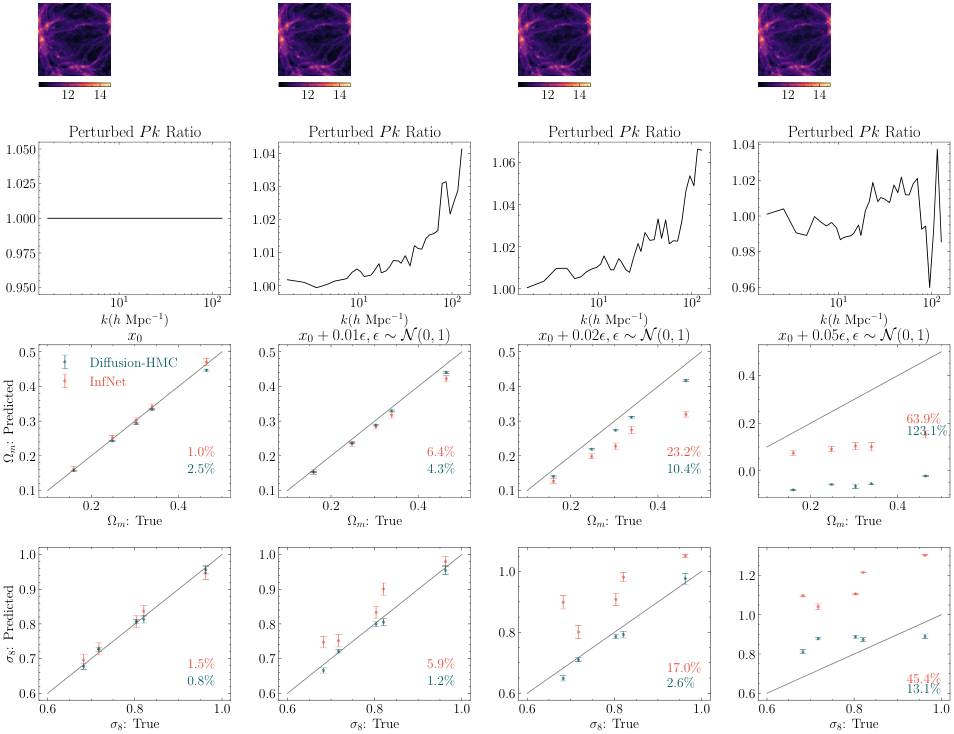

The result is not just a best-fit cosmology but a complete picture of consistent values and their trade-offs. The method also holds up under noise. When the researchers added Gaussian noise to test fields and compared Diffusion-HMC against a standard discriminative CNN, the CNN’s performance degraded noticeably. The diffusion model, trained to denoise fields at every corruption level, retained structural knowledge of the signal even when noise intruded.

Why It Matters

This work sits squarely in the space of simulation-based inference (SBI), where the likelihood is intractable or too expensive to evaluate directly and you need other ways to do statistics. The idea that a diffusion model’s approximate likelihood can drive HMC is new. It points to a broader principle: generative models built for synthesis can be repurposed for analysis, running the same learned probability structure in reverse.

For cosmology, full-field inference rather than power-spectrum compression matters. Non-Gaussian features in the dark matter distribution, especially at small scales where gravity has scrambled initial conditions, carry real information about fundamental physics. Capturing that information with a single trainable model, without hand-crafting bespoke statistics, is a real advance.

The noise robustness is not a footnote. Upcoming surveys from DESI, the Vera C. Rubin Observatory’s LSST, and the Nancy Grace Roman Space Telescope will generate data at unprecedented scale but also with complex systematic effects. Methods that degrade gracefully under noise are far more useful than those requiring pristine conditions.

The current model is conditioned only on Ω_m and σ_8. Extending it to the full CAMELS parameter space is an obvious direction. And since HMC relies on an approximate likelihood, pinning down where that approximation breaks will be essential before applying the method to real survey data.

Bottom Line: A single diffusion model trained on dark matter simulations can both generate realistic cosmic fields and constrain cosmological parameters. HMC-based inference proves more noise-robust than discriminative neural network baselines, opening a new path toward field-level cosmological analysis with upcoming surveys.

IAIFI Research Highlights

This work fuses score-based generative modeling from machine learning with Hamiltonian Monte Carlo from statistical physics. A model built for synthesis doubles as a precision measurement tool for cosmological inference.

The paper shows a dual use of diffusion models as both generative emulators and approximate likelihood engines, creating a template for deploying generative AI in scientific inverse problems beyond image synthesis.

Full-field inference on dark matter density maps extracts cosmological constraints beyond power-spectrum summaries, probing non-Gaussian structure that encodes information about matter clustering and fundamental cosmological parameters.

Future work could extend the framework to real observed fields and the full CAMELS parameter space; the paper is available at [arXiv:2405.05255](https://arxiv.org/abs/2405.05255).