Dynamic Black-hole Emission Tomography with Physics-informed Neural Fields

Authors

Berthy T. Feng, Andrew A. Chael, David Bromley, Aviad Levis, William T. Freeman, Katherine L. Bouman

Abstract

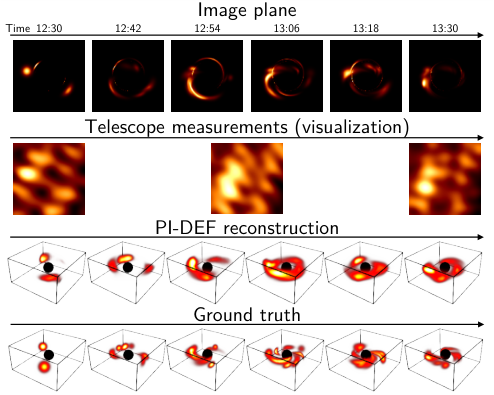

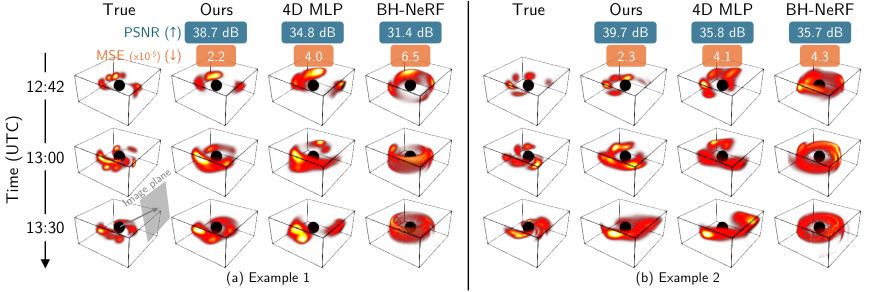

With the success of static black-hole imaging, the next frontier is the dynamic and 3D imaging of black holes. Recovering the dynamic 3D gas near a black hole would reveal previously-unseen parts of the universe and inform new physics models. However, only sparse radio measurements from a single viewpoint are possible, making the dynamic 3D reconstruction problem significantly ill-posed. Previously, BH-NeRF addressed the ill-posed problem by assuming Keplerian dynamics of the gas, but this assumption breaks down near the black hole, where the strong gravitational pull of the black hole and increased electromagnetic activity complicate fluid dynamics. To overcome the restrictive assumptions of BH-NeRF, we propose PI-DEF, a physics-informed approach that uses differentiable neural rendering to fit a 4D (time + 3D) emissivity field given EHT measurements. Our approach jointly reconstructs the 3D velocity field with the 4D emissivity field and enforces the velocity as a soft constraint on the dynamics of the emissivity. In experiments on simulated data, we find significantly improved reconstruction accuracy over both BH-NeRF and a physics-agnostic approach. We demonstrate how our method may be used to estimate other physics parameters of the black hole, such as its spin.

Concepts

The Big Picture

Imagine trying to reconstruct a hurricane’s full three-dimensional structure armed with only a single weather station and a handful of blurry snapshots taken minutes apart. That’s roughly the challenge astronomers face when peering into the turbulent gas swirling around a black hole.

The Event Horizon Telescope (EHT) gave us the first photograph of a black hole’s shadow in 2019. But a photograph is flat, frozen, and incomplete. The real action plays out across three dimensions of space plus time: superheated glowing gas, hotspots of radiation that flare and vanish in minutes, matter spiraling inward at a sizable fraction of the speed of light.

A team from Caltech, MIT, Princeton, and the University of Toronto has taken a major step toward seeing that hidden reality. Their system, PI-DEF (Physics-Informed Dynamic Emission Fields), uses neural networks guided by the laws of physics to reconstruct the full dynamic, three-dimensional gas environment around a black hole from the EHT’s notoriously sparse radio data.

Key Insight: PI-DEF simultaneously reconstructs both the glowing gas and its velocity field, enforcing fluid physics as a flexible constraint rather than a rigid rule. In tests on simulated data, the result is markedly more accurate three-dimensional mapping of the black-hole environment than BH-NeRF or a physics-agnostic baseline, along with an estimate of the black hole’s spin.

How It Works

The fundamental challenge is a math problem with too many unknowns and too few measurements. The EHT is effectively a single telescope the size of Earth, built by linking radio dishes across multiple continents. It sees the black hole from one angle only, and its data are sparse, noisy, and captured over short time windows. Reconstructing a time-evolving 3D volume from this is what mathematicians call ill-posed: infinitely many 3D scenes could produce the same handful of radio measurements.
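To make the ill-posedness concrete, here is a toy sketch. It is not the EHT measurement model: the 2x2 grid, the `project` helper, and the `blend` helper are all illustrative assumptions. A single viewpoint that only records brightness summed along each line of sight cannot distinguish between different scenes, and any mixture of two such scenes is indistinguishable as well.

```python
# Toy illustration of an ill-posed inverse problem (NOT the EHT forward model).
# A "scene" is a 2x2 grid of emissivities: rows are depth, columns are sky position.

def project(scene):
    """Sum emissivity along the line of sight (over rows): one value per column."""
    return [sum(column) for column in zip(*scene)]

scene_a = [[1.0, 0.0],
           [0.0, 1.0]]
scene_b = [[0.0, 1.0],
           [1.0, 0.0]]

def blend(w):
    """Hypothetical helper: weighted mix of the two scenes, w in [0, 1]."""
    return [[w * a + (1.0 - w) * b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(scene_a, scene_b)]

print(project(scene_a))  # [1.0, 1.0]
print(project(scene_b))  # [1.0, 1.0]
print(project(blend(0.25)))  # same measurement again, for any w
```

Every value of `w` gives a different scene with the same projection, which is why extra information (here, physics) is needed to pick one reconstruction.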

The previous state of the art, BH-NeRF, handled this by assuming the gas moves according to Keplerian dynamics, the simple orbital mechanics that govern planets circling the Sun. That works reasonably well far from the black hole, where gravity alone dominates. Close in, where extreme gravity and powerful magnetic forces drive the gas, Keplerian orbits are a poor description. The gas can flare up suddenly, vanish, swirl, and splash. BH-NeRF can’t capture any of that.
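For reference, a Newtonian Keplerian circular orbit of radius r around a mass M has angular velocity Ω = sqrt(GM / r³) and speed v = rΩ = sqrt(GM / r). The sketch below uses units where GM = 1 and hypothetical function names. It is a textbook Newtonian formula for illustration, not BH-NeRF's code, and it omits the relativistic corrections that matter close to the black hole.

```python
import math

def keplerian_angular_velocity(r, gm=1.0):
    """Newtonian angular velocity of a circular orbit at radius r (units with GM = gm)."""
    if r <= 0:
        raise ValueError("radius must be positive")
    return math.sqrt(gm / r**3)

def keplerian_speed(r, gm=1.0):
    """Orbital speed v = r * Omega = sqrt(GM / r)."""
    return r * keplerian_angular_velocity(r, gm)

# Inner orbits turn faster than outer ones, so a Keplerian disk shears over time.
for r in (4.0, 9.0, 16.0):
    print(r, keplerian_angular_velocity(r), keplerian_speed(r))
```

Under this assumption the velocity at every point is fixed by its radius alone, which is exactly the rigidity that fails when magnetic forces or turbulence drive the gas.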

PI-DEF drops the Keplerian assumption and replaces it with something more flexible:

- Two neural fields, jointly optimized. The system represents both the emissivity (how brightly the gas glows) and the velocity field (how the gas moves) as coordinate-based neural networks. Each takes a location in space and a moment in time as input and returns a physical quantity like brightness or speed. Both networks train simultaneously.

- Physics as a soft constraint. Instead of enforcing Keplerian motion as an unbreakable rule, PI-DEF uses the velocity field to guide the emissivity’s evolution via a continuity equation, the principle that gas doesn’t spontaneously appear or disappear. This enters the learning process as a preference, not an absolute law, so the network can adapt when turbulence, magnetic activity, or sudden emission departs from smooth fluid behavior.

- Differentiable rendering through curved spacetime. Light near a black hole bends around the massive object. PI-DEF incorporates gravitational lensing directly into its image-formation calculation, tracing curved light paths from the 3D gas distribution to the simulated EHT measurements. The entire pipeline is differentiable, meaning the system works backward from observed data through the curved-spacetime physics to refine its reconstruction.

- Spin estimation as a bonus. The light-bending calculation depends on the black hole’s spin parameter. The team showed they could treat spin as an additional learnable quantity, letting PI-DEF infer a fundamental property of the black hole from the same data.
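The soft-constraint idea can be sketched in one spatial dimension, where a generic continuity equation reads ∂e/∂t + ∂(e·v)/∂x = 0. The code below is a minimal illustration under stated assumptions: the fields are plain Python functions standing in for neural fields, derivatives use finite differences rather than automatic differentiation, and the names `continuity_residual`, `physics_penalty`, `soft_constrained_loss`, and the `weight` parameter are hypothetical. The exact loss used by PI-DEF is not given in this article.

```python
import math

def continuity_residual(emissivity, velocity, x, t, h=1e-4):
    """Central-difference estimate of de/dt + d(e*v)/dx at one point (x, t)."""
    def flux(xq):
        return emissivity(xq, t) * velocity(xq)
    de_dt = (emissivity(x, t + h) - emissivity(x, t - h)) / (2 * h)
    dflux_dx = (flux(x + h) - flux(x - h)) / (2 * h)
    return de_dt + dflux_dx

def physics_penalty(emissivity, velocity, points):
    """Mean squared continuity residual over sampled (x, t) points."""
    return sum(continuity_residual(emissivity, velocity, x, t) ** 2
               for x, t in points) / len(points)

def soft_constrained_loss(data_loss, penalty, weight=1.0):
    """Physics enters as a weighted penalty, not a hard rule (weight is an assumption)."""
    return data_loss + weight * penalty

# Stand-ins for the two fields: a constant flow and two candidate emissivities.
def velocity(x):
    return 0.5

def advected_blob(x, t):   # moves with the flow, so it satisfies continuity
    return math.exp(-(x - 0.5 * t) ** 2)

def static_blob(x, t):     # ignores the flow, so it violates continuity
    return math.exp(-x ** 2)

points = [(x, t) for x in (-1.0, -0.5, 0.5, 1.0) for t in (0.0, 1.0)]
print(physics_penalty(advected_blob, velocity, points))  # close to zero
print(physics_penalty(static_blob, velocity, points))    # clearly nonzero
```

Because the penalty is only added to the data term, a reconstruction that fits the measurements well can still deviate from the continuity equation where the data demand it, which is the flexibility described in the list above.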

Tested on simulated EHT data, PI-DEF substantially outperformed both BH-NeRF and a physics-agnostic baseline. Velocity field recovery also improved markedly, especially near the black hole where the Keplerian assumption breaks down most severely.

Why It Matters

The EHT has so far shown us still photographs of two black holes, M87* and Sagittarius A*. Those images confirmed the existence of a shadow, matching a key prediction of general relativity. But the real tests of extreme physics require watching the gas move: how matter behaves in ultra-strong gravitational fields, how powerful jets launch outward at near-light speed, how gravity and electromagnetism interact in these environments. PI-DEF is a step toward making that possible.

The work also highlights a useful design principle. When physical laws are embedded directly into a neural network’s training as flexible constraints, rather than applied as external post-processing, you can solve reconstruction problems that would otherwise have far too many possible solutions. And the ability to infer black hole spin from imaging data alone hints at a future where fundamental black hole properties can be read directly from radio telescope arrays.

Bottom Line: In tests on simulated data, PI-DEF shows that physics-informed neural fields can reconstruct the dynamic, three-dimensional gas around a black hole far more accurately than methods that either ignore physics or oversimplify it, opening a path toward testing general relativity in the most extreme environments the universe offers.

IAIFI Research Highlights

PI-DEF merges computer vision techniques (neural radiance fields and differentiable rendering) with general relativistic astrophysics, showing that methods from computational graphics can unlock new scientific knowledge about black holes.

Jointly learning a dynamic scene and its governing velocity field, with physical laws as soft differentiable constraints, dramatically outperforms both physics-free and rigidly constrained approaches on severely ill-posed inverse problems.

Dynamic 3D reconstruction of the emitting plasma closest to a black hole's event horizon provides a new observational tool for testing general relativity and magnetohydrodynamics in extreme gravitational regimes.

Future work will apply PI-DEF to real EHT observations of Sgr A* and M87*, where it could constrain black hole spin and directly probe accretion physics. The paper is available as [arXiv:2602.08029](https://arxiv.org/abs/2602.08029).
