Dynamic Sparse Training with Structured Sparsity

Authors

Mike Lasby, Anna Golubeva, Utku Evci, Mihai Nica, Yani Ioannou

Abstract

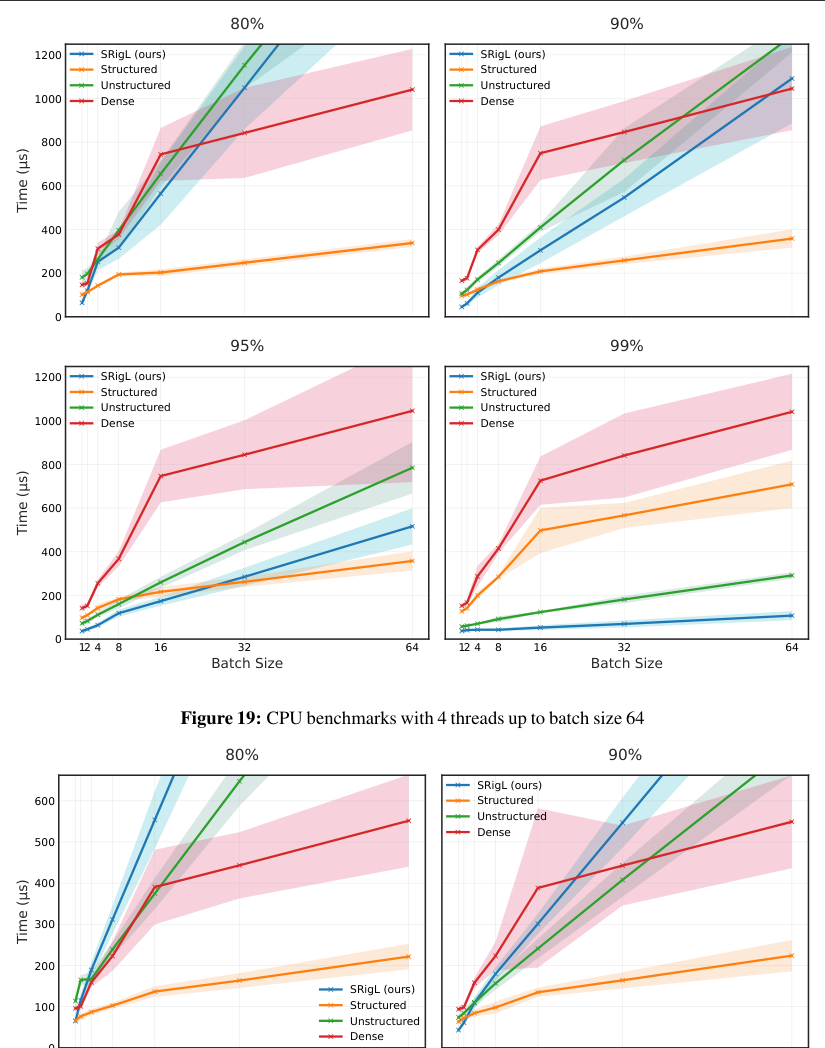

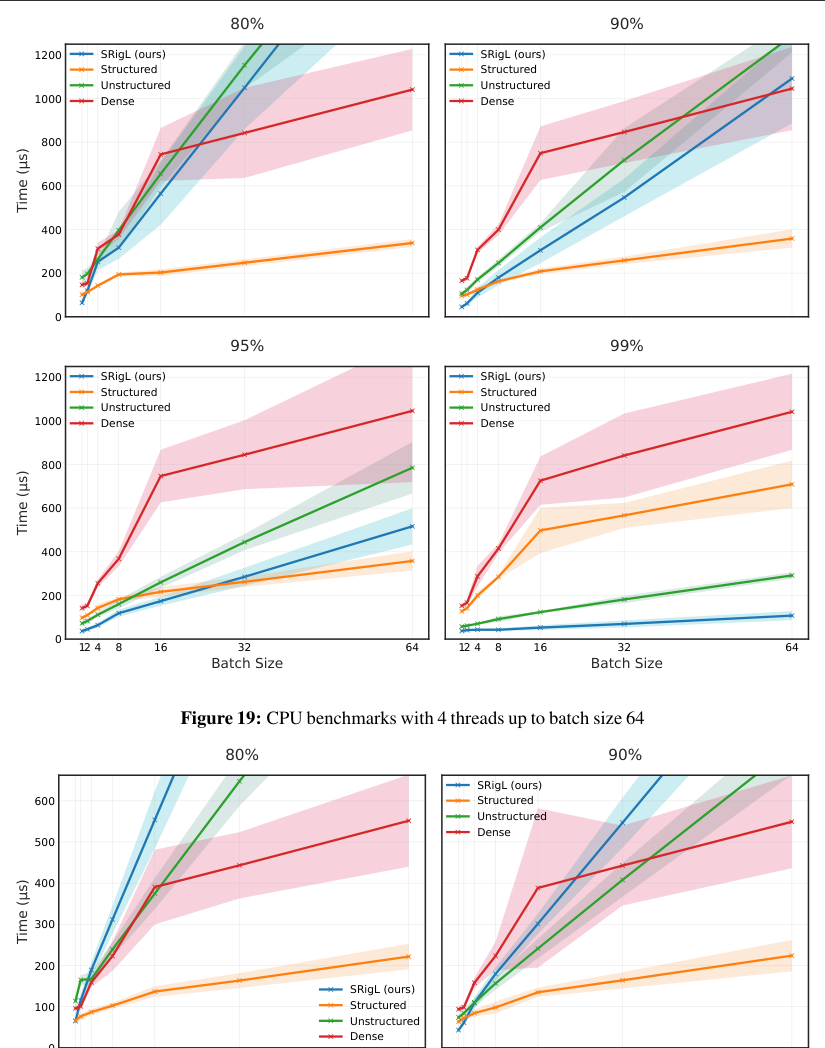

Dynamic Sparse Training (DST) methods achieve state-of-the-art results in sparse neural network training, matching the generalization of dense models while enabling sparse training and inference. Although the resulting models are highly sparse and theoretically less computationally expensive, achieving speedups with unstructured sparsity on real-world hardware is challenging. In this work, we propose a sparse-to-sparse DST method, Structured RigL (SRigL), to learn a variant of fine-grained structured N:M sparsity by imposing a constant fan-in constraint. Using our empirical analysis of existing DST methods at high sparsity, we additionally employ a neuron ablation method which enables SRigL to achieve state-of-the-art sparse-to-sparse structured DST performance on a variety of Neural Network (NN) architectures. Using a 90% sparse linear layer, we demonstrate a real-world acceleration of 3.4x/2.5x on CPU for online inference and 1.7x/13.0x on GPU for inference with a batch size of 256 when compared to equivalent dense/unstructured (CSR) sparse layers, respectively.

Concepts

The Big Picture

Imagine a library where most shelves are empty. In theory, you’d only need to visit a handful of spots to find every book. But if the books are scattered randomly, one here, three there, none in predictable locations, the librarian still has to check every shelf. The library is technically sparse, but finding anything takes as long as ever.

This paradox has dogged neural network compression for years. Researchers have gotten very good at training networks with 90% or more of their weights (the numerical values that determine how a model processes data) set to zero. This is called sparse training. Sparse models are theoretically cheaper to run: fewer calculations, less memory. But “theoretically cheaper” and “actually faster” are two different things.

When zero weights are scattered randomly, real hardware can’t exploit the sparsity. CPUs and GPUs read data in orderly, predictable chunks. The library is mostly empty, but the librarian is still exhausted.

A team from the University of Calgary, MIT, Google DeepMind, and affiliated institutions has found a way to have it both ways. Their method, Structured RigL (SRigL), trains neural networks that are both highly sparse and organized in a pattern hardware can actually exploit, with real-world speedups of up to 13x on GPU inference compared to conventional sparse formats.

Key Insight: SRigL closes the gap between theoretical and practical efficiency by learning structured sparsity during training rather than imposing it afterward, letting the network adapt its weights to fit a hardware-friendly pattern.

How It Works

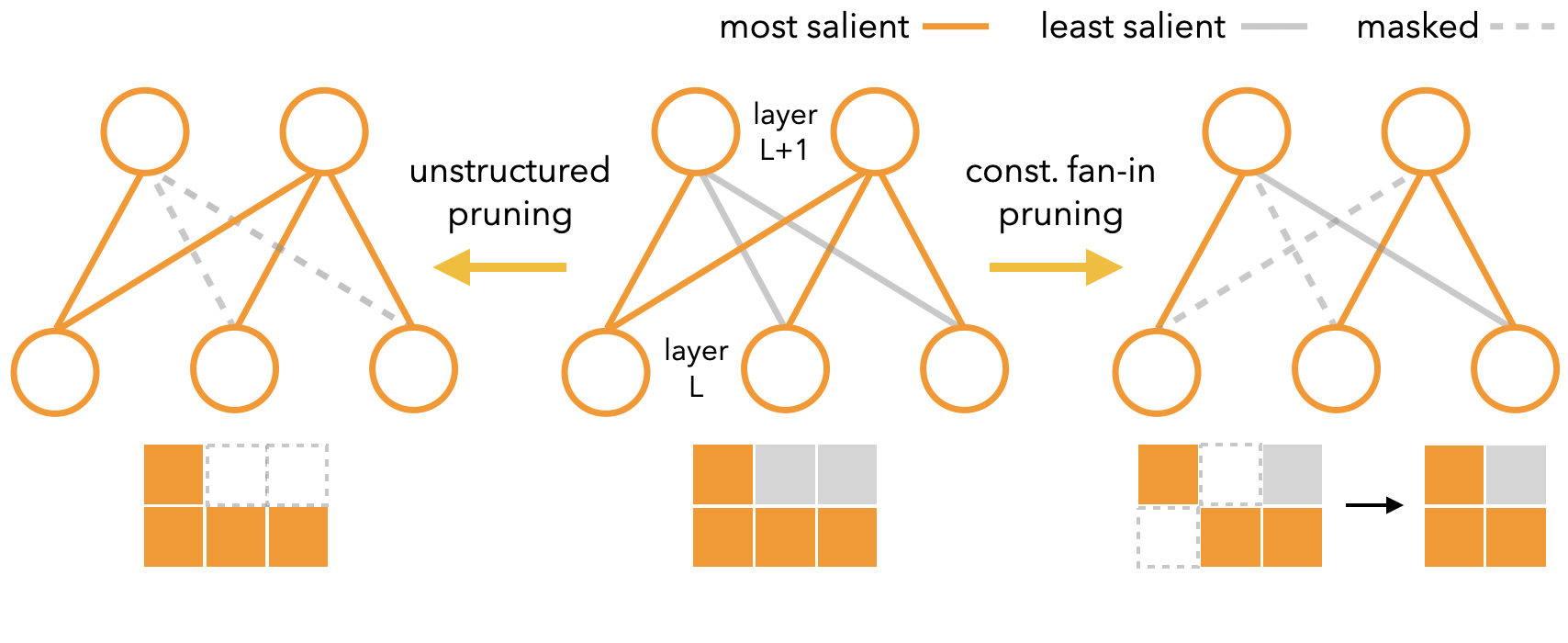

SRigL builds on an existing algorithm called RigL (Rigged Lottery), a Dynamic Sparse Training (DST) method. Traditional pruning trains a full network first, then cuts weights. DST does it differently: sparsity is maintained throughout training. Weights are periodically pruned (smallest magnitude) and regrown (largest gradient magnitude), letting the network explore different sparse connectivity patterns over time. RigL consistently matches or beats dense models at 85–95% sparsity. The catch: its sparsity is unstructured, with surviving weights scattered at arbitrary positions.

SRigL adds one critical constraint, constant fan-in, meaning every neuron receives exactly the same number of incoming connections. Think of each neuron being allowed exactly 10 input wires, no more, no less. This creates fine-grained N:M sparsity: out of every M consecutive weights in a row, exactly N are nonzero. A GPU no longer hunts for random weight positions. It loads weights in predictable, contiguous blocks.

The training procedure runs in three stages:

- Sparse initialization: The network starts with a random sparse mask satisfying constant fan-in.

- Dynamic mask updates: At regular intervals, SRigL drops the smallest-magnitude weights per neuron and grows the largest-gradient-magnitude weights, always preserving fan-in.

- Neuron ablation: Above ~90% sparsity, the authors discovered that standard RigL naturally kills entire neurons, zeroing all their incoming weights. SRigL makes this explicit, letting active neurons concentrate their fixed fan-in budget on the most useful connections.

That third stage emerged from careful empirical analysis. At extreme sparsity, RigL was already doing neuron ablation, but implicitly and inefficiently. Making it explicit lets the algorithm lean into the behavior, and performance at extreme sparsity improved dramatically.

There’s a theoretical payoff too. The paper shows mathematically that constant fan-in layers have lower variance in their output norms compared to equally sparse but unstructured layers. Internal signals stay better-behaved during training, which contributes to more stable optimization.

Why It Matters

The gap between algorithmic efficiency and real-world efficiency has long frustrated the compression community. Papers routinely report reductions in FLOPs (the basic arithmetic a model performs) that never translate to faster inference on actual hardware.

SRigL attacks this gap directly. On a 90% sparse linear layer, it achieves 3.4x speedup over dense and 2.5x over unstructured sparse on CPU for single-sample inference. On GPU with batch size 256, the gains are sharper: 1.7x over dense and 13.0x over unstructured sparse. These are wall-clock measurements, not theoretical FLOP counts.

The practical stakes are growing. As AI models get larger and more expensive to deploy, techniques that make inference genuinely faster become essential. This is especially true for scientific applications like physics simulations, particle detector readouts, and gravitational wave analysis, where models must run at high throughput on constrained hardware. A method that delivers structured sparsity without sacrificing generalization opens doors that unstructured pruning has kept closed.

Open questions remain. The authors compare SRigL primarily on image classification; how constant fan-in transfers to transformers, diffusion models, or graph neural networks is still unexplored. The neuron ablation behavior at extreme sparsity also raises questions about what the surviving network topology represents, which could be worth investigating from an interpretability angle.

Bottom Line: SRigL proves that structured sparsity doesn’t require sacrificing accuracy. By learning structure and weights simultaneously, it delivers hardware-ready sparse networks with up to 13x real-world inference speedup over unstructured sparse formats.

IAIFI Research Highlights

The work ties fundamental neural network theory (why constant fan-in reduces output-norm variance) to practical hardware engineering, connecting the kinds of questions that IAIFI's approach to AI for science is built around.

SRigL sets a new bar for structured dynamic sparse training, showing that hardware-friendly sparsity patterns can be learned end-to-end without the accuracy penalty traditionally associated with structured pruning.

Efficient sparse inference matters directly for physics experiments that need real-time AI on edge hardware, from trigger systems in particle detectors to fast gravitational wave classifiers where latency is mission-critical.

Future work will likely extend constant fan-in structured sparsity to transformer architectures and probe theoretical connections between neuron ablation and network topology. The paper ([arXiv:2305.02299](https://arxiv.org/abs/2305.02299)) appeared as an ICLR 2024 conference paper.

Original Paper Details

Dynamic Sparse Training with Structured Sparsity

2305.02299

["Mike Lasby", "Anna Golubeva", "Utku Evci", "Mihai Nica", "Yani Ioannou"]

Dynamic Sparse Training (DST) methods achieve state-of-the-art results in sparse neural network training, matching the generalization of dense models while enabling sparse training and inference. Although the resulting models are highly sparse and theoretically less computationally expensive, achieving speedups with unstructured sparsity on real-world hardware is challenging. In this work, we propose a sparse-to-sparse DST method, Structured RigL (SRigL), to learn a variant of fine-grained structured N:M sparsity by imposing a constant fan-in constraint. Using our empirical analysis of existing DST methods at high sparsity, we additionally employ a neuron ablation method which enables SRigL to achieve state-of-the-art sparse-to-sparse structured DST performance on a variety of Neural Network (NN) architectures. Using a 90% sparse linear layer, we demonstrate a real-world acceleration of 3.4x/2.5x on CPU for online inference and 1.7x/13.0x on GPU for inference with a batch size of 256 when compared to equivalent dense/unstructured (CSR) sparse layers, respectively.