Efficient Constraint-Aware Flow Matching via Randomized Exploration

Authors

Zhengyan Huan, Jacob Boerma, Li-Ping Liu, Shuchin Aeron

Abstract

We consider the problem of generating samples via Flow Matching (FM) with an additional requirement that the generated samples must satisfy given constraints. We consider two scenarios, viz.: (a) when a differentiable distance function to the constraint set is given, and (b) when the constraint set is only available via queries to a membership oracle. For case (a), we propose a simple adaptation of the FM objective with an additional term that penalizes the distance between the constraint set and the generated samples. For case (b), we propose to employ randomization and learn a mean flow that is numerically shown to have a high likelihood of satisfying the constraints. This approach deviates significantly from existing works that require simple convex constraints, knowledge of a barrier function, or a reflection mechanism to constrain the probability flow. Furthermore, in the proposed setting we show that a two-stage approach, where both stages approximate the same original flow but with only the second stage probing the constraints via randomization, is more computationally efficient. Through several synthetic cases of constrained generation, we numerically show that the proposed approaches achieve significant gains in terms of constraint satisfaction while matching the target distributions. As a showcase for a practical oracle-based constraint, we show how our approach can be used for training an adversarial example generator, using queries to a hard-label black-box classifier. We conclude with several future research directions. Our code is available at https://github.com/ZhengyanHuan/FM-RE.

Concepts

The Big Picture

Imagine asking a master sculptor to carve a statue that must fit inside a specific, irregularly shaped display case, with every protrusion and curve staying within bounds. A sculptor trained only on open-air work might produce something beautiful yet impossible to display. Modern AI generative models face exactly this problem: they learn to produce stunning outputs, but nobody told them about the rules.

Flow Matching (FM) transforms random noise into data by learning a smooth flow, like a river current that sweeps particles from chaos to structure. It drives leading image generators and scientific simulators alike. But when generated samples need to satisfy hard constraints (physical laws, safety bounds, or the decision rules of an AI classifier), FM tends to blunder across those lines.

A team at Tufts University has developed a principled way to teach flow matching models to respect constraints, even when those constraints are opaque black boxes that can only answer “yes” or “no.” Their framework, Constraint-Aware Flow Matching via Randomized Exploration (FM-RE), brings generative AI closer to actually playing by the rules.

Key Insight: By introducing a randomized exploration strategy during training, FM-RE can learn to generate samples that satisfy complex, irregularly shaped, and even disconnected constraints, without needing to know anything about their geometry in advance.

How It Works

The paper draws a clean distinction between two types of constraint problems. In the first, you have a differentiable distance function: you can measure exactly how far outside the boundary a sample lands. The fix is straightforward. Add a penalty term to the standard FM training objective that discourages straying from the allowed region. Think of it as a spring force that tugs wayward samples back toward the constraint set.

The harder case is when you only have a membership oracle: a black box that answers “inside” or “outside” with no gradient information, no mathematical hint about which direction leads back to the safe zone. This comes up when the constraint is a trained image classifier or a physics simulator with a pass/fail output. Existing methods require convex constraints (simple, bowl-shaped regions), known barrier functions, or reflection mechanisms. Those assumptions rarely hold in practice.

FM-RE’s solution is surprisingly simple. During training, the model doesn’t just follow a single trajectory from noise to data. It randomizes its endpoint samples, perturbing candidate outputs and querying the oracle to find which perturbations land inside the constraint set.

By averaging over many such randomized probes, the model learns a mean flow (an average trajectory) that gravitates toward the valid region. Picture a blindfolded hiker who figures out the safe path by repeatedly reaching a hand forward and feeling whether they’ve crossed the fence.

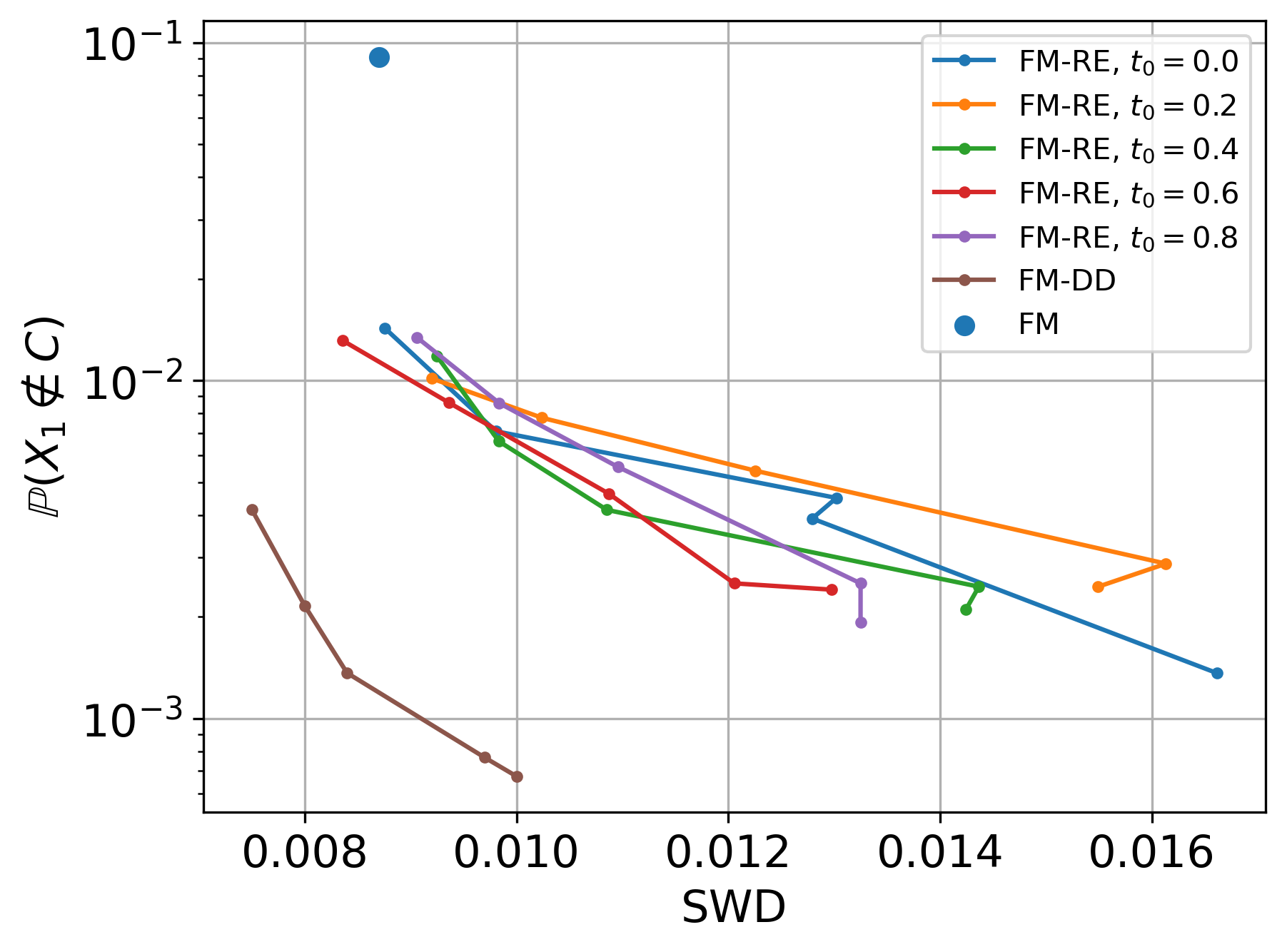

Training happens in two stages:

- Stage 1 trains a standard flow matching model on the data, learning the basic distribution without worrying about constraints.

- Stage 2 fine-tunes that model using randomized exploration to push generated samples toward constraint satisfaction.

This split matters. Constraint probing is computationally expensive, and doing it from scratch wastes resources on learning the basic distribution. By front-loading that learning, the two-stage method achieves the same constraint satisfaction rate with far fewer oracle queries.

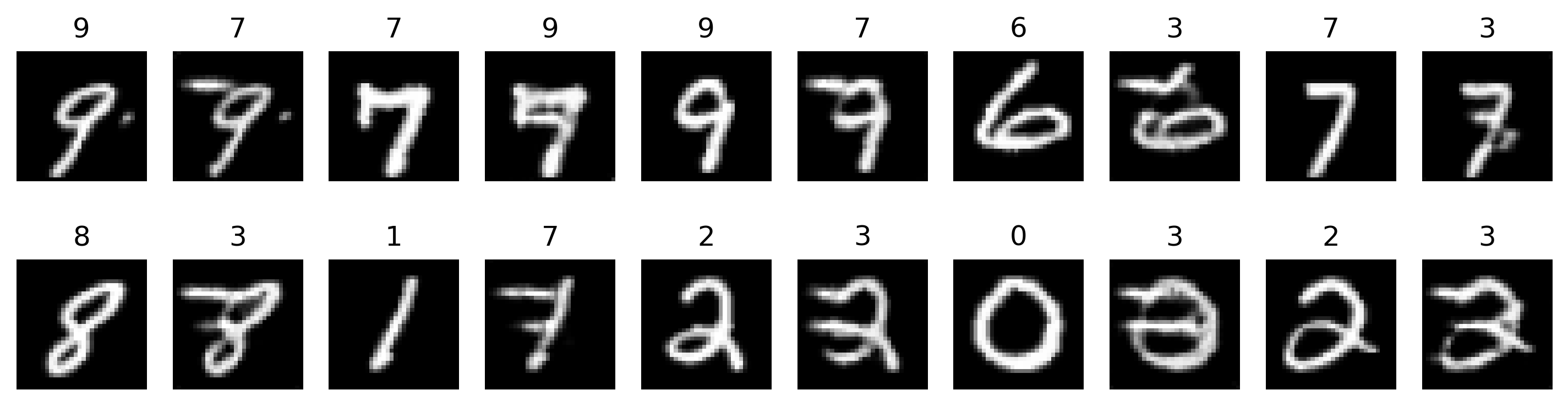

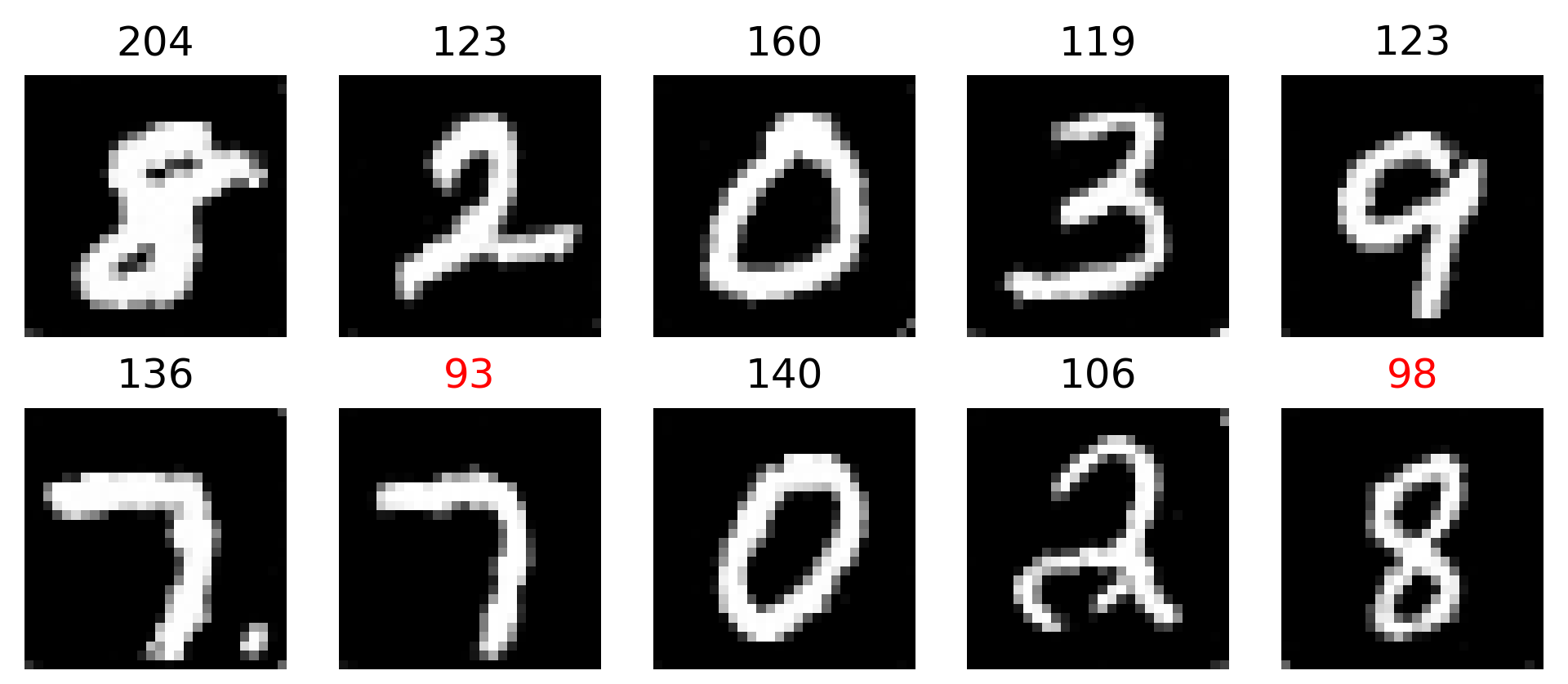

Why It Matters

The most immediate application is adversarial example generation: given a hard-label black-box classifier that returns only “right” or “wrong,” FM-RE learns to generate inputs that fool it. That’s a real capability for AI robustness testing. Any domain where data must satisfy physical plausibility constraints (fluid dynamics, molecular design, climate modeling) could also benefit, since the model needs only a working simulator, not explicit constraint equations.

The physics implications run deeper. Physical systems are full of constraints: conservation laws, symmetry requirements, boundary conditions. Traditional generative models learn the statistical pattern but regularly violate these rules at the sample level. FM-RE offers a path toward generation that is physically faithful, not just statistically accurate.

The approach also fits naturally with constraints defined by expensive computational oracles like quantum chemistry codes or lattice QCD solvers, where gradient information simply isn’t available.

Open questions remain. The method’s sample efficiency under very tight constraints, its behavior in extremely high dimensions, and its scaling to constraints that are themselves neural networks are all directions the authors flag for future work.

Bottom Line: FM-RE teaches generative AI to respect constraints it can’t see, using only yes/no feedback, and does so efficiently enough to be practical. This opens a realistic path to physically faithful AI generation across science and engineering.

IAIFI Research Highlights

This work tackles a core problem at the AI-physics boundary: training generative models to produce outputs consistent with physical laws and domain constraints, even when those constraints are opaque to gradient-based learning.

FM-RE extends constrained generative modeling beyond convex or analytically tractable settings, letting flow matching work with arbitrary membership oracles, a capability that has been missing from real-world deployments of these models.

The oracle-based constraint framework fits naturally with physics simulations where validity is checked computationally rather than analytically, opening doors in lattice field theory, molecular dynamics, and related fields.

Future directions include scaling to higher-dimensional constraint settings and integrating FM-RE with physics simulators as constraint oracles; the full paper and code are available at [arXiv:2508.13316](https://arxiv.org/abs/2508.13316) and https://github.com/ZhengyanHuan/FM-RE (2025).