End-to-end Differentiable Calibration and Reconstruction for Optical Particle Detectors

Authors

Omar Alterkait, César Jesús-Valls, Ryo Matsumoto, Patrick de Perio, Kazuhiro Terao

Abstract

Large-scale homogeneous detectors with optical readouts are widely used in particle detection, with Cherenkov and scintillator neutrino detectors as prominent examples. Analyses in experimental physics rely on high-fidelity simulators to translate sensor-level information into physical quantities of interest. This task critically depends on accurate calibration, which aligns simulation behavior with real detector data, and on tracking, which infers particle properties from optical signals. We present the first end-to-end differentiable optical particle detector simulator, enabling simultaneous calibration and reconstruction through gradient-based optimization. Our approach unifies simulation, calibration, and tracking, which are traditionally treated as separate problems, within a single differentiable framework. We demonstrate that it achieves smooth and physically meaningful gradients across all key stages of light generation, propagation, and detection while maintaining computational efficiency. We show that gradient-based calibration and reconstruction greatly simplify existing analysis pipelines while matching or surpassing the performance of conventional non-differentiable methods in both accuracy and speed. Moreover, the framework's modularity allows straightforward adaptation to diverse detector geometries and target materials, providing a flexible foundation for experiment design and optimization. The results demonstrate the readiness of this technique for adoption in current and future optical detector experiments, establishing a new paradigm for simulation and reconstruction in particle physics.

Concepts

The Big Picture

Imagine trying to tune a piano in a pitch-dark room using only a microphone, and you’re only allowed to twist one knob at a time, waiting minutes between each adjustment. That’s roughly the situation physicists have faced for decades when calibrating the massive underground detectors they use to catch neutrinos. Each detector has hundreds of interdependent parameters, and the tools to tune them have always been slow, sequential, and blind to the ways those parameters interact.

These optical detectors are enormous volumes of transparent material lined or threaded with thousands of light sensors. Most are cathedral-sized tanks of ultrapure water or a special liquid that emits flashes of light when a particle passes through; IceCube instead instruments a cubic kilometer of Antarctic ice. Experiments like Super-Kamiokande in Japan and IceCube at the South Pole have reshaped our understanding of neutrino mass and the way neutrinos shift between different types as they travel. But extracting physics from raw sensor data requires a three-step pipeline: simulate what the detector should see, calibrate the simulation to match reality, then reconstruct what actually happened. Each step feeds the next, yet they’ve historically been built and tuned in isolation.

A team from Tufts University, CERN, and SLAC has now collapsed all three steps into a single, differentiable framework. Because every stage is linked through calculus, changing one parameter automatically reveals how it affects every other part of the pipeline. The result is the first end-to-end system of this kind for optical particle detectors, able to calibrate and reconstruct particle events simultaneously.

Key Insight: By making every stage of the simulation mathematically differentiable, the researchers can compute, in a single forward and backward pass, how every detector parameter affects the agreement between simulation and data. This enables simultaneous optimization of the entire pipeline.

How It Works

The trick is automatic differentiation (AD), the same mathematical machinery that powers deep learning. AD isn’t numerical approximation (like finite differences, where you nudge each parameter and see what changes) and it isn’t symbolic algebra. It decomposes the entire simulation into elementary operations and threads the chain rule of calculus through all of them, computing exact gradients efficiently.

In reverse-mode AD, computing the full gradient of a scalar loss costs only a small constant multiple of one simulation run, regardless of how many input parameters you have. Compare that to finite-difference approaches, where every parameter requires at least one additional full simulation run.
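To make the comparison concrete, here is a minimal JAX sketch. The function `toy_loss` is a hypothetical stand-in for a simulation mismatch, not anything from the paper; the point is only that `jax.grad` returns all 50 partial derivatives from one backward pass, while central finite differences need two extra evaluations per parameter.

```python
import jax
import jax.numpy as jnp

def toy_loss(params):
    # Hypothetical scalar "mismatch"; any smooth function of the parameters would do.
    return jnp.sum(jnp.sin(params) ** 2) + jnp.prod(jnp.cos(params[:3]))

params = jnp.linspace(0.1, 1.0, 50)

# Reverse-mode AD: one forward and one backward pass give all 50 derivatives.
ad_grad = jax.grad(toy_loss)(params)

def finite_difference_grad(f, x, eps=1e-3):
    # Central differences: two function evaluations per parameter (100 here).
    basis = jnp.eye(x.size)
    return jnp.stack([(f(x + eps * e) - f(x - eps * e)) / (2 * eps) for e in basis])

fd_grad = finite_difference_grad(toy_loss, params)

# The two estimates agree up to finite-difference and float32 rounding error.
max_abs_diff = float(jnp.max(jnp.abs(ad_grad - fd_grad)))
```

The finite-difference result also depends on the step size `eps`, which has to balance truncation error against rounding error. The AD gradient has no such tuning knob.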

The simulation pipeline models three physical stages:

- Light generation: A charged particle traveling faster than light does in the medium produces a cone of Cherenkov radiation (similar to a sonic boom, but for light), or it excites the medium so that it glows through scintillation. The simulator computes the number and directional distribution of the emitted photons.

- Light propagation: Photons travel through the detector medium and are scattered or absorbed along the way. The simulator treats these random processes in a differentiable way, so gradients still flow through the probabilistic elements.

- Light detection: Photosensors register arriving photons with limited efficiency. The simulator models the sensor response, including timing and charge, in a differentiable manner.
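The three stages can be caricatured as one composable function. The sketch below is a deliberately simplified toy, not the paper's simulator: it ignores geometry (cone direction, solid angle, scattering), and `YIELD_SCALE`, the sensor distances, and the parameter values are all hypothetical. It uses the Cherenkov relation cos θc = 1/(nβ) with the Frank-Tamm sin²θc yield scaling, exponential attenuation, and a single efficiency factor, and then asks JAX for the derivative of the total expected charge with respect to a parameter from each stage.

```python
import jax
import jax.numpy as jnp

REFRACTIVE_INDEX = 1.33   # assumed value for water
YIELD_SCALE = 1000.0      # hypothetical photon-yield normalization

def expected_charge(beta, absorption_length, efficiency, distances):
    # Generation: yield proportional to sin^2(theta_c), with cos(theta_c) = 1 / (n * beta).
    sin2_theta_c = 1.0 - 1.0 / (REFRACTIVE_INDEX * beta) ** 2
    photons = YIELD_SCALE * sin2_theta_c
    # Propagation: exponential attenuation over each sensor's distance.
    survival = jnp.exp(-distances / absorption_length)
    # Detection: one efficiency factor converts photons into expected charge.
    return efficiency * photons * survival

distances = jnp.array([5.0, 10.0, 20.0, 30.0])  # metres, hypothetical

def total_charge(beta, absorption_length, efficiency):
    return jnp.sum(expected_charge(beta, absorption_length, efficiency, distances))

total = total_charge(0.99, 50.0, 0.2)
# One call returns the sensitivity to a parameter of each stage.
d_beta, d_length, d_eff = jax.grad(total_charge, argnums=(0, 1, 2))(0.99, 50.0, 0.2)
```

All three derivatives are positive here: a faster particle, a clearer medium, and a more efficient sensor each increase the expected charge.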

The hardest engineering problem was making each step differentiable without sacrificing physical realism. Discrete, stochastic operations (the kind that naturally appear in particle physics simulators) would normally break gradient flow. The team addressed this with differentiable approximations for sharp, discontinuous steps and with neural network surrogates: learned stand-ins for physically complex processes too expensive to model directly. Well-understood physics like ray optics is implemented as exact differentiable operators. Complex processes use learned models that stay smooth and differentiable.
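A sigmoid relaxation is one common way to smooth a sharp step; whether the paper uses this exact form is not stated here, so treat the following as an illustrative assumption. The hypothetical `hard_hit` models a sensor that either fires or does not, and `soft_hit` replaces the step with a smooth curve whose `sharpness` parameter controls how closely it follows the original.

```python
import jax
import jax.numpy as jnp

def hard_hit(charge, threshold=1.0):
    # Discrete decision: the sensor fires (1.0) or it does not (0.0).
    return jnp.where(charge > threshold, 1.0, 0.0)

def soft_hit(charge, threshold=1.0, sharpness=10.0):
    # Sigmoid relaxation; approaches the hard step as sharpness grows.
    return jax.nn.sigmoid(sharpness * (charge - threshold))

charge = 0.9  # just below threshold

# The hard step is flat almost everywhere, so its gradient carries no information.
hard_grad = float(jax.grad(hard_hit)(charge))

# The smooth version tells the optimizer that more charge would help.
soft_grad = float(jax.grad(soft_hit)(charge))
```

The trade-off is between fidelity and gradient quality: a large `sharpness` mimics the true threshold more closely but pushes the gradient toward zero away from the threshold.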

Calibration works by treating detector parameters (absorption length, photosensor efficiency, geometric alignment) as learnable variables. Rather than tuning them one at a time in a fixed sequence, gradient descent adjusts all of them at once. This matters because many parameters are correlated: a bias in absorption length can masquerade as a shift in sensor efficiency. Disentangling them sequentially leads to suboptimal working points and hard-to-quantify systematic errors. Joint optimization accounts for these correlations directly.
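A toy version of joint calibration can be sketched in a few lines of JAX. Everything in it is an assumption made for illustration: a calibration source of known intensity `SOURCE_PHOTONS`, sensors at hypothetical distances, noise-free pseudo-data, a squared error on log-charge rather than a proper likelihood, and plain gradient descent with a hand-picked step size. Absorption length and efficiency are updated together in every step.

```python
import jax
import jax.numpy as jnp

SOURCE_PHOTONS = 1000.0                  # hypothetical calibration-source intensity
distances = jnp.linspace(5.0, 40.0, 20)  # metres, hypothetical source-to-sensor distances

def log_charge(theta, distances):
    # theta = (log absorption length, log efficiency); logs keep both positive.
    log_length, log_eff = theta[0], theta[1]
    return log_eff + jnp.log(SOURCE_PHOTONS) - distances * jnp.exp(-log_length)

true_theta = jnp.log(jnp.array([60.0, 0.25]))
observed = log_charge(true_theta, distances)  # noise-free pseudo-data

def loss(theta):
    return jnp.mean((log_charge(theta, distances) - observed) ** 2)

@jax.jit
def step(theta):
    # One gradient-descent update of both parameters at once.
    return theta - 0.3 * jax.grad(loss)(theta)

theta = jnp.log(jnp.array([40.0, 0.15]))  # deliberately wrong starting point
for _ in range(3000):
    theta = step(theta)

fitted = jnp.exp(theta)
fitted_length, fitted_eff = float(fitted[0]), float(fitted[1])
```

In this toy the two parameters are correlated because both scale the observed charge; it is the spread of sensor distances that lets the fit tell them apart. With all sensors at the same distance, the problem would become degenerate.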

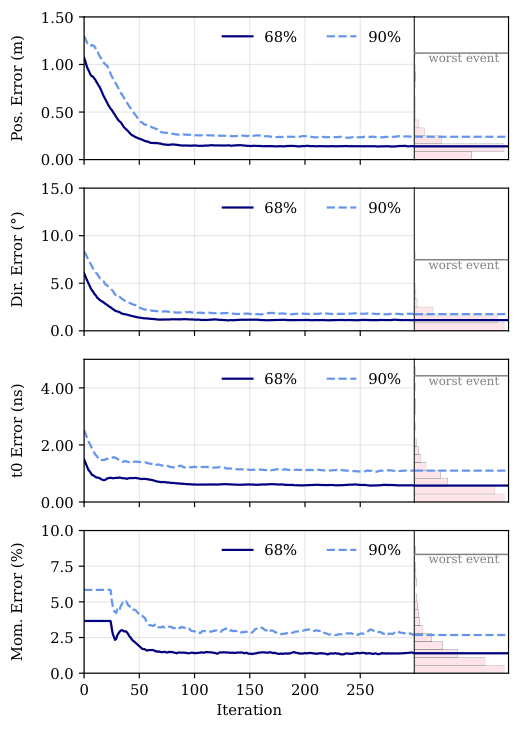

Reconstruction follows the same logic. Given real detector data, the framework treats unknown particle properties (vertex position, direction, energy) as variables to optimize, using the differentiable simulator as the forward model. Gradients guide the optimizer toward the most consistent physical explanation for what the sensors saw.
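The same pattern can be sketched for reconstruction. In the toy below, which is an illustration under stated assumptions and not the paper's method, a point-like light flash at an unknown position and time is recovered from the photon arrival times at eight sensors on the corners of a hypothetical 20 m cube. The refractive index, the noise-free pseudo-data, the squared-error loss, and the step size are all assumed for the example.

```python
import itertools
import jax
import jax.numpy as jnp

LIGHT_SPEED_WATER = 0.299792458 / 1.33  # m/ns, assuming refractive index 1.33

# Eight hypothetical sensors on the corners of a cube with 20 m sides.
sensors = jnp.array(list(itertools.product([-10.0, 10.0], repeat=3)))

def hit_times(params, sensors):
    # params = (x, y, z, t0): flash position in metres and emission time in ns.
    vertex, t0 = params[:3], params[3]
    distances = jnp.linalg.norm(sensors - vertex, axis=1)
    return t0 + distances / LIGHT_SPEED_WATER

true_params = jnp.array([2.0, -3.0, 1.0, 5.0])
observed = hit_times(true_params, sensors)  # noise-free pseudo-data

def loss(params):
    return jnp.mean((hit_times(params, sensors) - observed) ** 2)

@jax.jit
def step(params):
    # Gradient descent on position and time together.
    return params - 0.05 * jax.grad(loss)(params)

params = jnp.zeros(4)  # start at the detector center with t0 = 0
for _ in range(500):
    params = step(params)
```

Here the forward model `hit_times` plays the role of the simulator, and the optimizer only ever sees it through its gradient. Swapping in a richer forward model would leave the fitting loop unchanged.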

Why It Matters

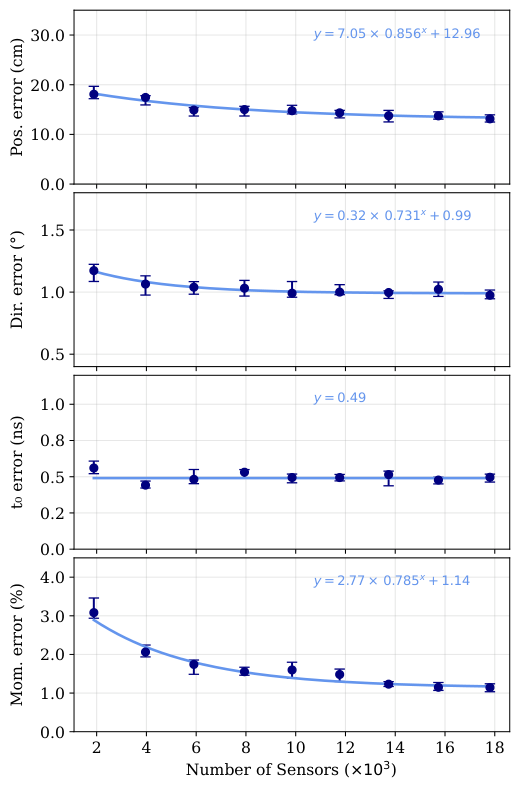

In head-to-head tests, gradient-based calibration and reconstruction matched or exceeded the accuracy of conventional methods while running faster. The pipeline simplification may matter even more. Physics experiments currently keep separate software stacks for simulation, calibration, and reconstruction, each maintained by a different team and connected through hand-crafted interfaces. A unified differentiable framework collapses that complexity, reducing failure modes and making it easier to propagate uncertainties through the entire chain.

The timing matters too. Next-generation experiments like Hyper-Kamiokande, with over 40,000 photosensors, and proposed DUNE Phase II detectors will generate data at scales that make sequential calibration untenable. By showing readiness now, this work positions the differentiable approach for adoption in exactly those experiments.

Beyond calibration and reconstruction, the framework opens a door to experiment design optimization: physicists can ask not just “how do we analyze this detector?” but “how should we build the next one to maximize physics sensitivity?”

Bottom Line: A unified, differentiable simulator eliminates the artificial divisions between simulation, calibration, and reconstruction. It simplifies analysis pipelines while matching or beating conventional methods, establishing a new paradigm ready for the next generation of neutrino experiments.

IAIFI Research Highlights

This work applies automatic differentiation, the mathematical engine behind modern deep learning, directly to the long-standing challenge of calibrating and reconstructing neutrino detector data. It bridges differentiable programming and experimental particle physics in a single operational framework.

The paper shows that hybrid differentiable models combining physics-based operators with neural network surrogates can outperform traditional non-differentiable approaches in both speed and accuracy on real scientific inverse problems.

Joint calibration of all detector parameters simultaneously could reduce systematic uncertainties and improve sensitivity in experiments like Super-Kamiokande and IceCube, which have driven some of the most important discoveries in fundamental physics over the past three decades.

The modular design allows straightforward adaptation to diverse detector geometries and materials, with direct applicability to Hyper-Kamiokande and DUNE Phase II. The full paper is available at [arXiv:2602.24129](https://arxiv.org/abs/2602.24129).

Original Paper Details

End-to-end Differentiable Calibration and Reconstruction for Optical Particle Detectors

[arXiv:2602.24129](https://arxiv.org/abs/2602.24129)

Omar Alterkait, César Jesús-Valls, Ryo Matsumoto, Patrick de Perio, Kazuhiro Terao
