Exploring gauge-fixing conditions with gradient-based optimization

Authors

William Detmold, Gurtej Kanwar, Yin Lin, Phiala E. Shanahan, Michael L. Wagman

Abstract

Lattice gauge fixing is required to compute gauge-variant quantities, for example those used in RI-MOM renormalization schemes or as objects of comparison for model calculations. Recently, gauge-variant quantities have also been found to be more amenable to signal-to-noise optimization using contour deformations. These applications motivate systematic parameterization and exploration of gauge-fixing schemes. This work introduces a differentiable parameterization of gauge fixing which is broad enough to cover Landau gauge, Coulomb gauge, and maximal tree gauges. The adjoint state method allows gradient-based optimization to select gauge-fixing schemes that minimize an arbitrary target loss function.

Concepts

The Big Picture

Imagine trying to photograph a spinning top. The top is real, with definite shape and angular momentum, but your choice of shutter speed, angle, and lighting determines what you actually capture. Some choices make the blur worse; others freeze the motion perfectly. The underlying object never changes, but how well you can measure it depends on how you choose to look.

Physicists studying the strong nuclear force face an analogous problem. Quantum chromodynamics (QCD), the theory governing quarks and gluons, has a mathematical symmetry called gauge invariance. The equations look identical after certain transformations of the underlying fields, much like rotational symmetry in a spinning top. To do practical calculations, physicists simulate QCD on a lattice, a discrete grid of spacetime points, like pixels in a computational snapshot of the universe.

Gauge invariance is useful in many contexts, but it becomes a headache when measuring quantities needed for renormalization, the process of subtracting infinities and extracting clean physical predictions from quantum field theory. Before computing these quantities, you must “fix the gauge”: pick a specific mathematical perspective and stick with it.

For decades, physicists have made these choices by convention, defaulting to schemes like Landau gauge or Coulomb gauge. A new paper from researchers at MIT, Fermilab, and collaborating institutions asks a question that hasn’t gotten enough attention: what if, instead of choosing a gauge by convention, you let a computer learn the best gauge for your specific problem?

Key Insight: By treating gauge-fixing as a differentiable optimization problem, researchers can now systematically search for gauge-fixing schemes that minimize any target quantity, allowing data-driven exploration of gauge choices in lattice QCD calculations.

How It Works

The method rests on one observation. Most common gauge-fixing schemes, including Landau gauge, Coulomb gauge, and maximal tree gauges (schemes that fix a spanning set of links to the identity matrix), can all be written as minimizing a single functional:

$$E = -\frac{1}{N_d N_V} \sum_{x,\mu} \text{Re Tr}\, p_\mu(x)\, U_\mu^g(x)$$

Here, $U_\mu^g(x)$ are the gauge-transformed links, the coefficients $p_\mu(x)$ are real numbers assigned to each link, and the prefactor normalizes by the number of links ($N_d$ spacetime dimensions times $N_V$ lattice sites). The magic is in those coefficients: set them all to 1 and you get Landau gauge; set them to 1 for spatial links and 0 for temporal links and you recover Coulomb gauge; make them binary, equal to 1 only on the links of a spanning tree, and you have a maximal tree gauge.

This parameterization turns a discrete zoo of gauge choices into a continuous family, and a continuous family is something you can differentiate through.
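As a rough illustration of how one functional covers several gauges, the sketch below evaluates $E$ on a small two-dimensional U(1) lattice, where $\text{Re Tr}\, U$ reduces to a cosine of the link angle. The U(1) simplification, the array layout, the function name `gauge_functional`, and the particular spanning tree are all assumptions made for this example and are not taken from the paper.

```python
import numpy as np

def gauge_functional(phi, theta, p):
    """E = -(1 / (N_d N_V)) * sum_{x,mu} p_mu(x) * cos(phi_mu(x) + theta(x) - theta(x+mu)).

    phi:   link angles, shape (N_d, L, L)   (U(1) stand-in for the links U_mu(x))
    theta: gauge-transformation angles, shape (L, L)
    p:     per-link coefficients, shape (N_d, L, L)
    """
    n_links = phi.size  # N_d * N_V
    total = 0.0
    for mu in range(phi.shape[0]):
        theta_fwd = np.roll(theta, -1, axis=mu)  # theta(x + mu), periodic boundaries
        total += np.sum(p[mu] * np.cos(phi[mu] + theta - theta_fwd))
    return -total / n_links

L = 4
shape = (2, L, L)  # (direction, x0, x1); direction 0 is treated as "time" here

# Landau-like choice: every link has weight 1.
p_landau = np.ones(shape)

# Coulomb-like choice: weight 1 on spatial links, 0 on temporal links.
p_coulomb = np.ones(shape)
p_coulomb[0] = 0.0

# One possible maximal tree: all non-wrapping direction-1 links,
# joined by the non-wrapping direction-0 links along the line x1 = 0.
p_tree = np.zeros(shape)
p_tree[1, :, : L - 1] = 1.0
p_tree[0, : L - 1, 0] = 1.0

rng = np.random.default_rng(0)
phi = rng.uniform(-np.pi, np.pi, size=shape)
theta = np.zeros((L, L))
for name, p in [("landau", p_landau), ("coulomb", p_coulomb), ("tree", p_tree)]:
    print(name, gauge_functional(phi, theta, p))
```

Only the coefficient array changes between the three cases; the functional and any minimizer built on top of it stay the same.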

The key computational challenge: given a gauge-fixing algorithm that minimizes $E$ for a set of $p_\mu(x)$ coefficients, how do you compute the gradient of a downstream loss $\ell$ with respect to those coefficients? Running automatic differentiation through all the solver iterations is numerically unstable.

The team’s solution is the adjoint state method, borrowed from optimal control theory. Rather than differentiating through the iterative solver, it treats the gauge-fixing condition as a constraint and solves a single linear system to extract gradients exactly. The solver becomes a black box. Swap in any algorithm you like, and the gradient computation stays stable and efficient.
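The "single linear system" can be seen in a schematic version of the standard adjoint-state argument. The notation here is an assumption for illustration and may differ from the paper's conventions: $\omega$ stands for local coordinates of the gauge transformation around the fixed-gauge solution $\omega^*(p)$, $H = \partial^2 E / \partial\omega\,\partial\omega$ is the Hessian of $E$ at that solution, and all indices are suppressed.

$$
\begin{aligned}
\frac{\partial E}{\partial \omega}\bigg|_{\omega^*(p)} = 0
\quad &\Rightarrow \quad
H\,\frac{d\omega^*}{dp} = -\frac{\partial^2 E}{\partial \omega\,\partial p}, \\
H\,\lambda = \frac{\partial \ell}{\partial \omega}
\quad &\Rightarrow \quad
\frac{d\ell}{dp} = \frac{\partial \ell}{\partial p} - \lambda^{T}\,\frac{\partial^2 E}{\partial \omega\,\partial p}.
\end{aligned}
$$

The first line differentiates the stationarity condition with respect to $p$. The second line avoids computing $d\omega^*/dp$ for every coefficient by solving once for $\lambda$, which uses the symmetry of $H$. Since $E$ does not change under a global gauge transformation, $H$ has zero modes along those directions, so the solve is understood on the complement of those modes.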

The workflow has three steps:

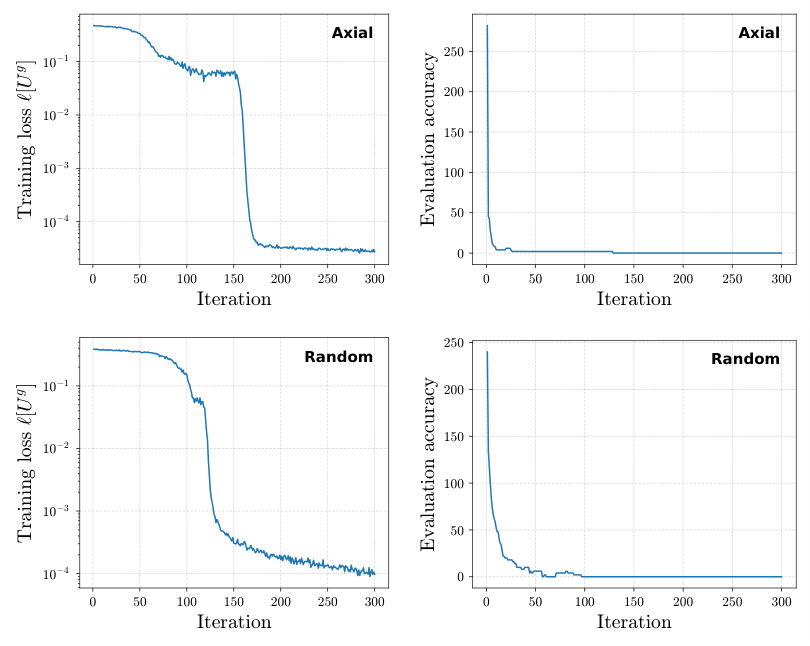

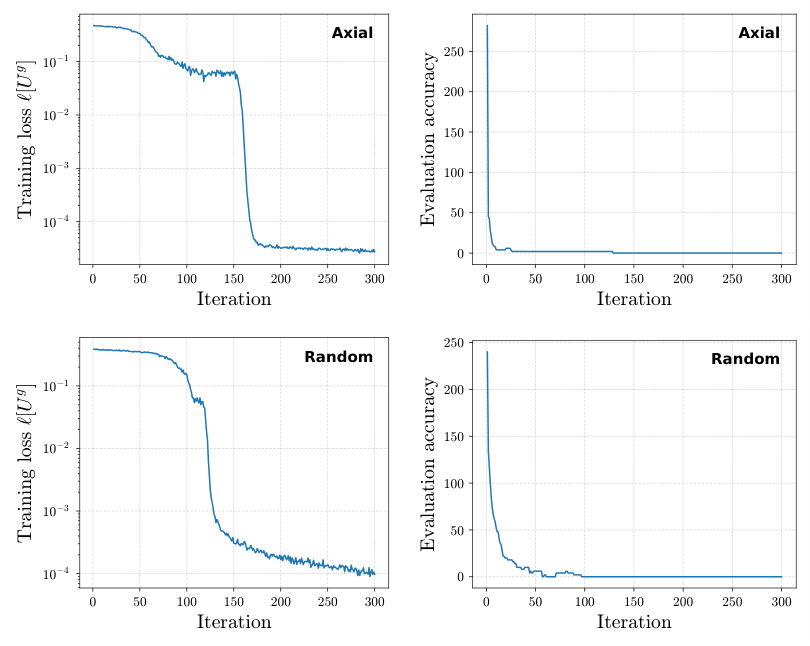

- Fix the gauge: Run a standard iterative minimizer to find the gauge transformation $g^*(x)$ satisfying the gauge condition for your current $p_\mu(x)$.

- Solve the adjoint problem: Compute Lagrange multipliers $\lambda_c(z)$ by solving a linear system involving the Hessian of $E$ at the fixed-gauge solution.

- Update the scheme parameters: Use the adjoint variables to compute $d\ell/dp_\mu(x)$ and take a gradient step.
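The three steps above can be sketched on a toy problem. This is a minimal sketch under stated assumptions, not the authors' implementation: it uses a one-dimensional periodic U(1) lattice, plain gradient descent as the black-box gauge fixer, a hypothetical loss with made-up weights `w`, and pins one site to remove the global zero mode of the Hessian. All function names are invented for this example.

```python
import numpy as np

N = 6  # sites on a periodic 1D toy lattice
# (D @ theta)[x] = theta[x] - theta[x+1], so fixed links are phi + D @ theta.
D = np.eye(N) - np.roll(np.eye(N), 1, axis=1)
D_r = D[:, 1:]  # pin theta[0] = 0 to remove the global zero mode

def fixed_links(phi, theta_r):
    return phi + D_r @ theta_r

def fix_gauge(phi, p, n_iter=4000):
    """Step 1: minimize E = -mean(p * cos(a)) with plain gradient descent."""
    theta_r = np.zeros(N - 1)
    step = N / (4.0 * np.max(np.abs(p)))  # conservative step from a Hessian bound
    for _ in range(n_iter):
        a = fixed_links(phi, theta_r)
        theta_r = theta_r - step * (D_r.T @ (p * np.sin(a))) / N
    return theta_r

def loss(phi, theta_r, w):
    """Hypothetical gauge-variant target: weighted mean of 1 - cos(fixed link)."""
    return np.mean(w * (1.0 - np.cos(fixed_links(phi, theta_r))))

def adjoint_gradient(phi, p, w):
    theta_r = fix_gauge(phi, p)                         # Step 1: fix the gauge
    a = fixed_links(phi, theta_r)
    hessian = D_r.T @ np.diag(p * np.cos(a)) @ D_r / N  # d^2 E / d theta^2
    dloss_dtheta = D_r.T @ (w * np.sin(a)) / N
    lam = np.linalg.solve(hessian, dloss_dtheta)        # Step 2: adjoint solve
    # Step 3: dl/dp = -lam^T d^2E/(dtheta dp); this loss has no explicit p dependence.
    return -np.sin(a) * (D_r @ lam) / N

rng = np.random.default_rng(0)
phi = rng.uniform(-0.8, 0.8, size=N)   # toy link angles
w = rng.uniform(0.0, 1.0, size=N)      # made-up loss weights
p = rng.uniform(0.5, 1.5, size=N)      # initial scheme coefficients

grad = adjoint_gradient(phi, p, w)
p_new = p - 0.5 * grad                 # one gradient step on the scheme
print("dl/dp =", grad)
```

The adjoint solve never looks inside `fix_gauge`; it only needs the converged solution, which is why the minimizer can be swapped for any other algorithm that reaches the same minimum.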

For the maximal tree subfamily, where $p_\mu(x)$ should ideally be binary, the team introduces a temperature regulator: a smooth interpolation that relaxes the discrete constraint and lets gradient descent explore continuously before annealing toward a binary solution.
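One common way to build such a smooth interpolation is a temperature-scaled sigmoid, sketched below. The functional form, the name `relaxed_mask`, and the example values are assumptions for illustration; the regulator actually used in the paper may differ, and this sketch does not by itself force the resulting binary mask to form a tree.

```python
import numpy as np

def relaxed_mask(logits, temperature):
    """Map unconstrained real parameters to coefficients in (0, 1).

    Equivalent to a sigmoid of logits / temperature, written with tanh
    so that it does not overflow at small temperatures.
    """
    return 0.5 * (1.0 + np.tanh(logits / (2.0 * temperature)))

logits = np.array([-2.0, -0.3, 0.4, 1.5])  # hypothetical per-link parameters
for temperature in (1.0, 0.3, 0.01):
    print(temperature, np.round(relaxed_mask(logits, temperature), 3))
```

At high temperature the coefficients vary smoothly with the underlying parameters, so gradients are informative; lowering the temperature pushes each coefficient toward 0 or 1.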

Why It Matters

Physicists have long known that gauge choice affects statistical noise in their calculations, but nobody had a principled way to search for better schemes. This framework changes that.

The timing is good. Recent work has shown that gauge-variant quantities can dramatically reduce statistical noise through contour deformations (a technique for improving the signal-to-noise ratio in Monte Carlo calculations). With this new framework, researchers can directly optimize their gauge scheme to minimize noise rather than relying on educated guesses.

What makes this paper interesting beyond the specific application is the methodological bet it makes. Differentiable programming, gradient-based optimization, automatic differentiation: these are the standard tools of modern machine learning, and here they’re being applied to the constrained, discrete problems at the heart of lattice field theory. The adjoint state method has been a workhorse in fluid dynamics and engineering optimization for years. Its appearance in lattice QCD connects two communities that rarely overlap.

Future extensions could incorporate richer parameterizations such as maximal Abelian gauges, or couple gauge optimization directly with normalizing-flow samplers now being developed for lattice QCD. Whether learned gauge schemes generalize across different lattice spacings and physical parameters is an open question, one that echoes familiar generalization challenges in deep learning.

Bottom Line: By making gauge-fixing differentiable, this work turns an old convention into a learnable design choice, giving lattice QCD practitioners a new knob to turn in the quest for cleaner, cheaper calculations of fundamental physics.

IAIFI Research Highlights

This work imports adjoint state methods from optimal control into lattice gauge theory, unifying disparate gauge-fixing schemes under a single differentiable parameterization and enabling gradient-based optimization over them.

The approach shows how differentiable programming and gradient-based optimization, core tools of modern ML, apply to discrete, constrained physics problems through continuous relaxation and stable adjoint-state gradients.

The framework gives lattice QCD practitioners a principled method to optimize gauge-fixing schemes for specific tasks, including noise reduction via contour deformations and precision RI-MOM renormalization.

Future work could extend this approach to richer gauge functionals and integrate learned schemes with normalizing-flow samplers. This preprint (MIT-CTP/5786) was presented at LATTICE2024 in Liverpool. [[arXiv:2410.03602](https://arxiv.org/abs/2410.03602)]

Original Paper Details

Exploring gauge-fixing conditions with gradient-based optimization

[arXiv:2410.03602](https://arxiv.org/abs/2410.03602)

William Detmold, Gurtej Kanwar, Yin Lin, Phiala E. Shanahan, Michael L. Wagman
