FeatUp: A Model-Agnostic Framework for Features at Any Resolution

Authors

Stephanie Fu, Mark Hamilton, Laura Brandt, Axel Feldman, Zhoutong Zhang, William T. Freeman

Abstract

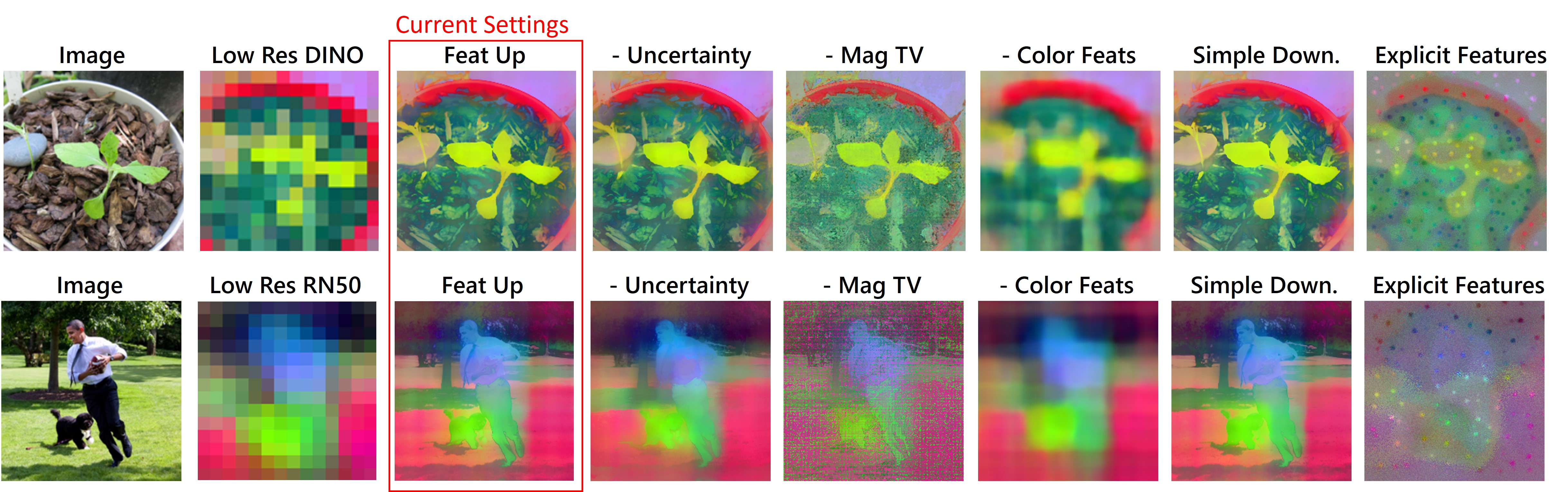

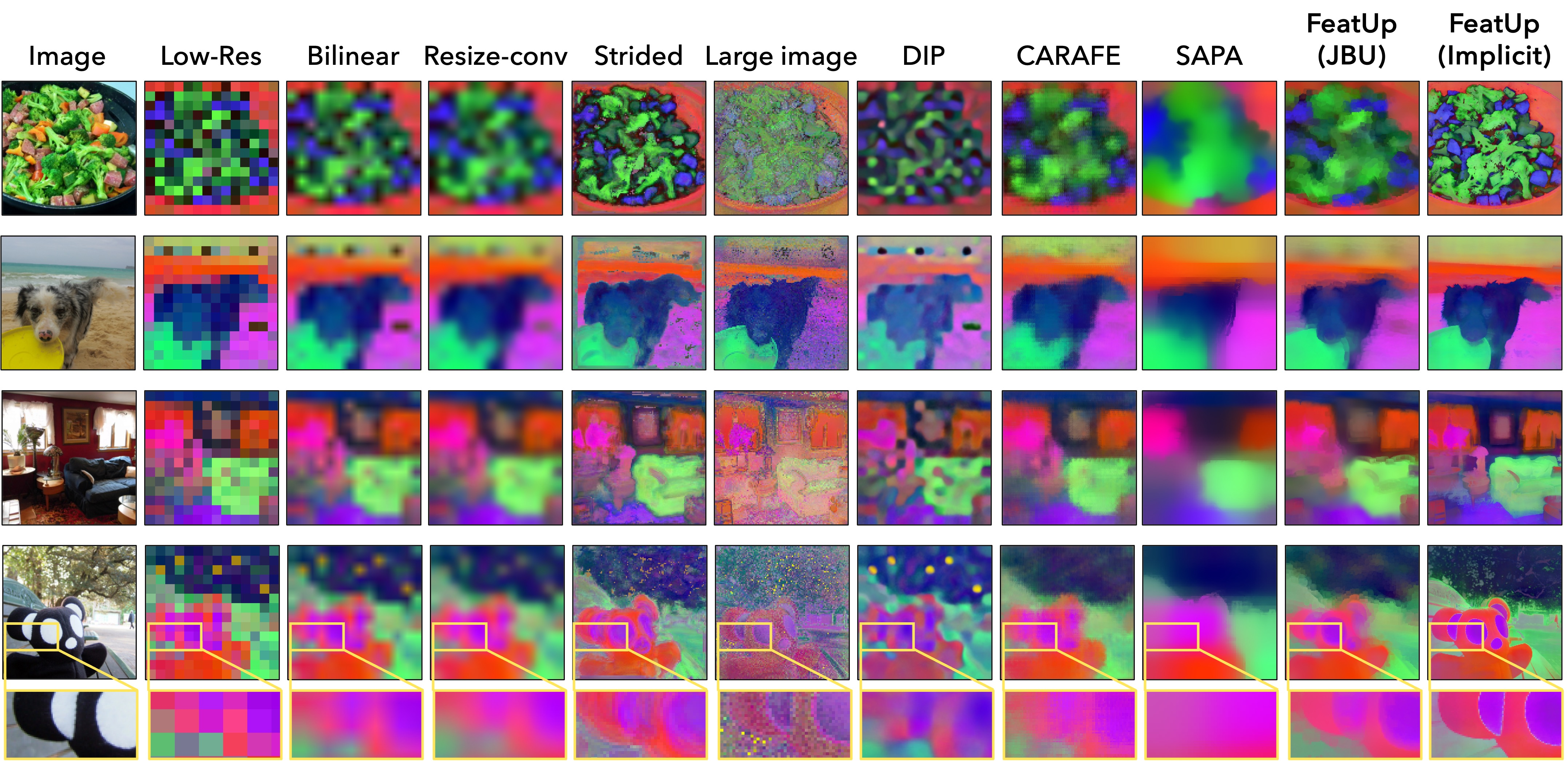

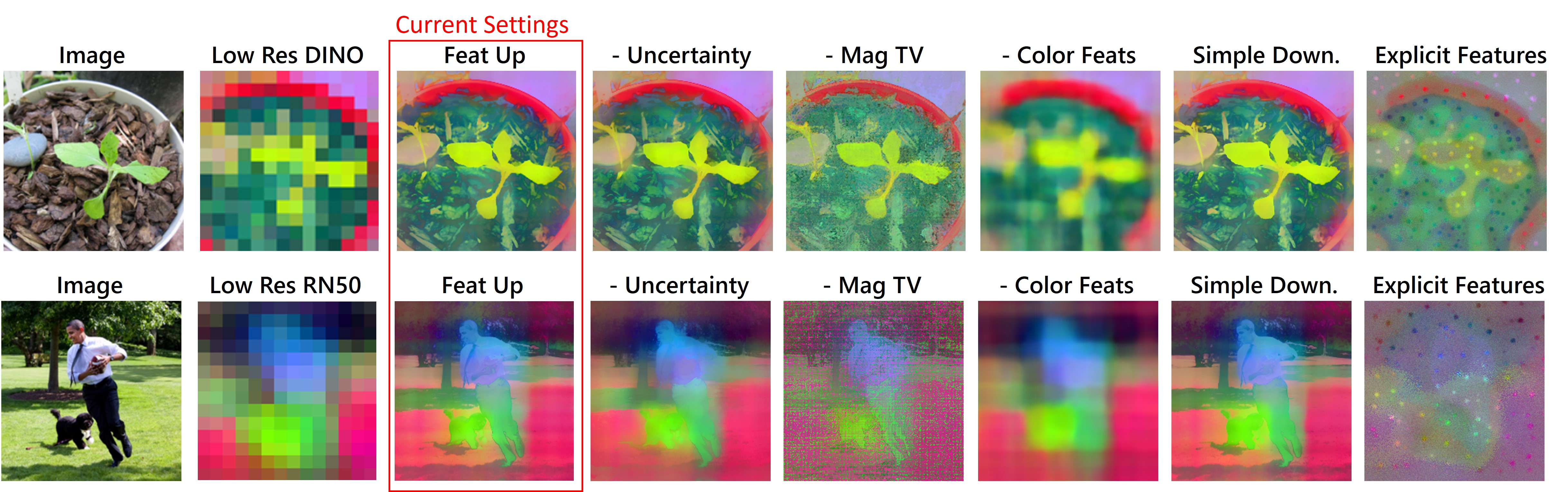

Deep features are a cornerstone of computer vision research, capturing image semantics and enabling the community to solve downstream tasks even in the zero- or few-shot regime. However, these features often lack the spatial resolution to directly perform dense prediction tasks like segmentation and depth prediction because models aggressively pool information over large areas. In this work, we introduce FeatUp, a task- and model-agnostic framework to restore lost spatial information in deep features. We introduce two variants of FeatUp: one that guides features with high-resolution signal in a single forward pass, and one that fits an implicit model to a single image to reconstruct features at any resolution. Both approaches use a multi-view consistency loss with deep analogies to NeRFs. Our features retain their original semantics and can be swapped into existing applications to yield resolution and performance gains even without re-training. We show that FeatUp significantly outperforms other feature upsampling and image super-resolution approaches in class activation map generation, transfer learning for segmentation and depth prediction, and end-to-end training for semantic segmentation.

Concepts

The Big Picture

Imagine hiring the world’s best detective to analyze a crime scene, then handing them a photo so blurry you can barely make out the furniture. The detective’s expertise is intact, but the resolution is too coarse to catch the details that matter. This is roughly the situation facing modern AI vision models when asked to do precise spatial tasks like outlining objects or estimating distances.

Today’s leading image-recognition systems (ResNet, Vision Transformers, the engines powering photo search and self-driving cars) are very good at understanding what is in an image. But to get there, they aggressively compress location information during processing. ResNet-50 squashes a 224×224 pixel image down to an internal grid just 7×7 cells across, a 32-fold reduction. Think of redrawing a detailed city map as a rough sketch: you keep the neighborhoods, but lose the street addresses.

When you then ask the model to draw a precise boundary around a dog, or estimate how far away a fire hydrant is, the fine location details have already been discarded. The model knows what is there but has largely forgotten where.

A team from MIT, UC Berkeley, Microsoft, Adobe Research, and Google has introduced FeatUp, a framework that restores this lost spatial detail to a model’s internal representations without changing what those representations mean and without retraining for each new task.

Key Insight: FeatUp borrows a core idea from 3D scene reconstruction: just as NeRF reconstructs a 3D scene from multiple 2D views, FeatUp reconstructs high-resolution features from multiple low-resolution “views” generated by slightly jittering the input image.

How It Works

Apply small transformations to an image (a slight crop, a horizontal flip, a bit of padding) and a vision model produces slightly different low-resolution feature maps for each version. Each is a different “view” of the same scene, carrying slightly different location information depending on how the transformation interacted with the model’s compression step.

FeatUp learns to combine these views into a single high-resolution feature map using a multi-view consistency loss: the sharp map being reconstructed, if compressed back down under each transformation, should match what the model originally produced. It works backwards from many compressed-view constraints to recover the underlying sharp structure.

This is the same logic that lets Neural Radiance Fields (NeRF) reconstruct a full 3D scene from 2D photos taken at different angles. You know what each camera angle should produce, so you work backwards to find the underlying structure that explains all the views simultaneously.

The team built two architectures around this idea:

-

The feedforward upsampler is a fast, general-purpose network trained once and applied to any image. At its core is a Joint Bilateral Upsampling (JBU) filter, a classical technique that sharpens a low-resolution signal by consulting a high-resolution guide (here, the original image). The authors implemented this as specialized GPU code, making it orders of magnitude faster than a naive implementation. It runs in a single forward pass and plugs directly into existing architectures.

-

The implicit upsampler is a small neural network fitted to a single image at test time, drawing direct inspiration from NeRF. Rather than generalizing across images, it memorizes the high-resolution structure of one scene and can produce feature maps at any target resolution.

Both approaches include two learned downsampler variants: a simple blur and an attention-based downsampler that weights spatial locations by content, mimicking how transformers compress information.

The feedforward approach is built for production: fast, general, a drop-in module. The implicit approach is built for analysis: maximum fidelity when a single image demands close scrutiny.

Why It Matters

The payoff is measurable. FeatUp’s upsampled features significantly outperform bilinear interpolation, nearest-neighbor, bicubic, and image super-resolution baselines on class activation map generation, segmentation transfer learning, and depth prediction, all without retraining the underlying backbone. On end-to-end semantic segmentation, plugging in FeatUp features yields gains across multiple backbone architectures.

But the bigger story is about modularity. A persistent friction in applying foundation models to real-world tasks is the mismatch between what these models represent and the spatial granularity those tasks require. FeatUp offers a clean solution: treat spatial resolution as a separable concern, recoverable after the fact. The enormous investment in self-supervised pretraining (DINO, CLIP, DINOv2, and their successors) becomes more directly useful for dense prediction without architectural surgery.

The NeRF analogy is more than decorative. It suggests a broader direction: using consistency constraints across multiple views or perturbations as a supervision signal when ground-truth labels are unavailable. FeatUp applies this to 2D features. The same principle could extend to video, 3D point clouds, or multimodal representations where spatial alignment is costly to supervise directly.

Bottom Line: FeatUp gives any vision model’s features a sharp spatial upgrade with no retraining required. By treating high-resolution reconstruction as a multi-view consistency problem, it turns a longstanding bottleneck in dense prediction into a modular, pluggable component.

IAIFI Research Highlights

FeatUp imports a core concept from physics-inspired neural rendering (NeRF's multi-view consistency) and applies it to recover spatial structure in 2D feature representations, showing how geometric reasoning principles transfer across domains.

FeatUp is a model-agnostic, task-agnostic drop-in module that improves dense prediction performance across segmentation, depth estimation, and explainability without modifying or retraining the underlying backbone.

By enabling finer-grained feature maps from pretrained vision encoders, FeatUp has potential relevance to scientific imaging tasks such as particle physics event reconstruction or astrophysical image analysis, where spatial precision directly affects measurement accuracy.

Future work could extend FeatUp's multi-view consistency framework to video, 3D representations, and scientific foundation models. The paper appeared at ICLR 2024 and is available as [arXiv:2403.10516](https://arxiv.org/abs/2403.10516).