Gauge Equivariant Neural Networks for 2+1D U(1) Gauge Theory Simulations in Hamiltonian Formulation

Authors

Di Luo, Shunyue Yuan, James Stokes, Bryan K. Clark

Abstract

Gauge Theory plays a crucial role in many areas in science, including high energy physics, condensed matter physics and quantum information science. In quantum simulations of lattice gauge theory, an important step is to construct a wave function that obeys gauge symmetry. In this paper, we have developed gauge equivariant neural network wave function techniques for simulating continuous-variable quantum lattice gauge theories in the Hamiltonian formulation. We have applied the gauge equivariant neural network approach to find the ground state of 2+1-dimensional lattice gauge theory with U(1) gauge group using variational Monte Carlo. We have benchmarked our approach against the state-of-the-art complex Gaussian wave functions, demonstrating improved performance in the strong coupling regime and comparable results in the weak coupling regime.

Concepts

The Big Picture

Imagine trying to describe the behavior of water by tracking every single water molecule, billions upon billions of them, each interacting with its neighbors. Now scale that problem up by orders of magnitude, and replace those molecules with the force-carrying fields that underlie electricity, magnetism, and the nuclear forces, fields that must obey strict mathematical constraints at every point in space. You start to glimpse the challenge facing physicists who want to simulate the fundamental forces of nature on a computer.

These constraints come from gauge theories, the mathematical framework underlying electromagnetism, the strong nuclear force, and the physics of materials. Gauge theories are notoriously resistant to direct simulation. The problem isn’t just computing power; it’s that any faithful representation of the system must respect the theory’s deep symmetry structure, or the whole calculation falls apart.

The specific culprit is gauge symmetry: the idea that you can independently change how quantities are measured at every point in space and time, as long as the physical predictions stay the same. A wave function that violates this symmetry doesn’t just give wrong answers. It’s physically meaningless. Building that constraint into a flexible, learnable representation has been one of the grand challenges at the intersection of machine learning and quantum physics.

Researchers from MIT, the University of Illinois, and collaborating institutions have now developed a new class of gauge equivariant neural network wave functions capable of simulating gauge theories where fields can take any value along a continuous range, a significant step beyond what previous neural network approaches could handle.

Key Insight: By baking gauge symmetry directly into the neural network architecture, rather than enforcing it as a post-hoc constraint, this approach produces wave functions that are physically exact by construction and more accurate than the previous state-of-the-art in the hardest simulation regime.

How It Works

The team focused on the Kogut-Susskind model, a standard testing ground for lattice gauge theories, set in 2+1 dimensions with the U(1) gauge group. U(1) is the simplest continuous gauge group, describing rotations on a circle. This is the lattice version of compact electrodynamics, and it’s a natural first target before tackling more complex theories like quantum chromodynamics (QCD), the theory of quarks and the strong nuclear force.

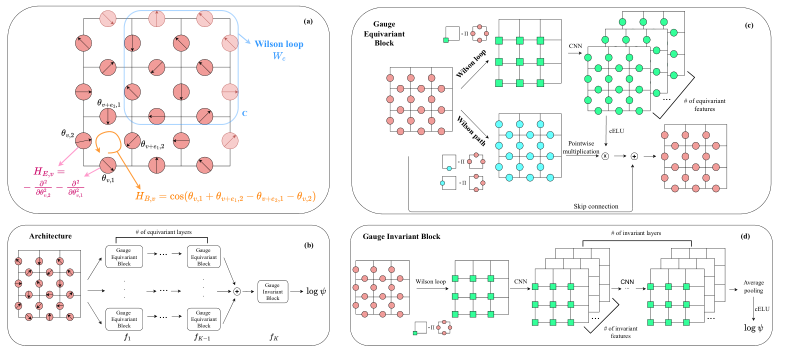

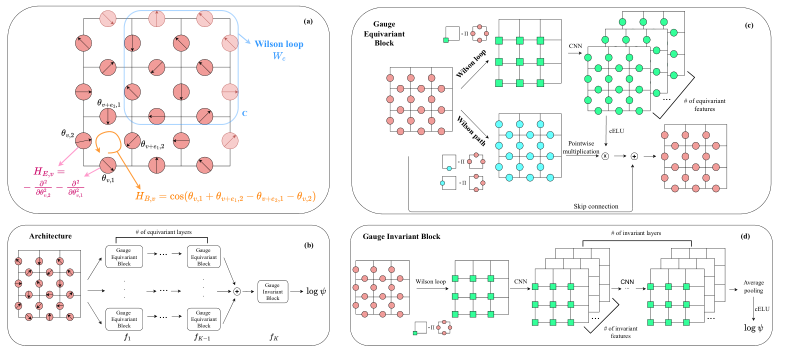

The lattice is a grid of vertices connected by edges. The gauge field lives on each edge as an angular variable θ ∈ [0, 2π), represented as the complex exponential e^(iθ). Two competing terms govern the system’s energy: a kinetic term that wants the field to fluctuate, and a magnetic term that wants neighboring plaquettes (the square loops formed by four edges) to align.

The core architectural challenge was building a neural network that transforms equivariantly under gauge transformations: when you apply a gauge transformation to the input field configuration, the hidden representations should transform in a consistent, predictable way, and the final output wave function should remain invariant. The team achieved this through a chain of nonlinear mappings:

- Equivariant blocks process the gauge field while preserving transformation properties at each layer, like convolutional filters that “know” how the gauge field transforms.

- Gauge invariant blocks pool the equivariant features into scalar quantities unchanged under any gauge transformation.

- The full architecture stacks these blocks like a standard CNN, with every operation designed to respect the underlying symmetry group.

This is a continuous-variable architecture, and that distinction matters. Previous neural network approaches to lattice gauge theory handled discrete gauge groups (like Z_N, the integers modulo N). The U(1) group is continuous: the angular variable can take any value in [0, 2π). That demands a fundamentally different treatment using complex-valued representations and Fourier-mode decompositions, a technique for breaking continuous signals into their component frequencies.

The wave function is optimized using variational Monte Carlo (VMC): network parameters are tuned to minimize the expected energy by sampling field configurations from the probability distribution |ψ|². Because gauge symmetry is built into the architecture, every sampled configuration and every computed gradient is automatically consistent with the physical constraints.

Why It Matters

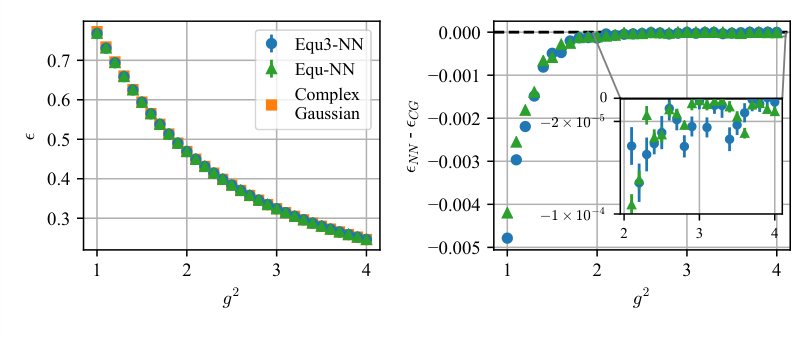

The benchmarks speak for themselves. In the strong coupling regime (large coupling constant g), where quantum fluctuations dominate and the field is wildly disordered, the gauge equivariant neural network outperforms the previous best approach (complex Gaussian wave functions) by a meaningful margin. In the weak coupling regime, where the field tends toward smooth configurations, performance is comparable. This asymmetry makes physical sense: the strong coupling regime demands the most expressive wave function, and the neural network’s nonlinear depth delivers exactly there.

U(1) gauge theory in 2+1D is a stepping stone. The same architectural principles, equivariant features, gauge-respecting convolutions, invariant readout, should generalize to non-abelian gauge groups like SU(2) and SU(3). These are groups where the order of transformations matters (unlike simple rotations on a circle), and they govern the weak and strong nuclear forces respectively.

Quantum hardware is another frontier. As quantum computers mature, they’ll need efficient classical descriptions of gauge-invariant states to initialize and benchmark quantum simulations. Neural network wave functions that respect gauge symmetry by construction are natural candidates for that role.

There’s also a pure machine learning angle. The techniques developed here, building equivariant architectures for continuous symmetry groups acting on structured graph data, feed into the growing field of geometric deep learning, where symmetry principles guide network design rather than being treated as an afterthought.

Bottom Line: By embedding gauge symmetry directly into a neural network architecture, this work achieves more accurate ground state simulations of a foundational quantum field theory model and opens the door to tackling the continuous gauge groups that govern the actual forces of nature.

IAIFI Research Highlights

This work applies geometric deep learning (gauge equivariant convolutional architectures) to a core problem in theoretical physics: finding the ground state of a continuous-variable quantum lattice gauge theory. Symmetry-respecting network design turns out to be a practical computational advantage, not just a physics-inspired metaphor.

The paper advances equivariant neural networks for continuous Lie groups acting on structured lattice data, contributing new architectural building blocks for geometric deep learning applicable to any domain with local symmetry constraints.

By achieving improved accuracy over state-of-the-art wave functions for the U(1) Kogut-Susskind model in the strong coupling regime, this work brings neural quantum states closer to viability as simulation tools for the gauge theories underpinning the Standard Model.

Future directions include extending the approach to non-abelian gauge groups (SU(2), SU(3)) and coupling gauge fields to matter, which would make the method applicable to QCD-like theories. The paper appeared at the NeurIPS 2022 AI for Science Workshop ([arXiv:2211.03198](https://arxiv.org/abs/2211.03198)).

Original Paper Details

Gauge Equivariant Neural Networks for 2+1D U(1) Gauge Theory Simulations in Hamiltonian Formulation

[2211.03198](https://arxiv.org/abs/2211.03198)

Di Luo, Shunyue Yuan, James Stokes, Bryan K. Clark

Gauge Theory plays a crucial role in many areas in science, including high energy physics, condensed matter physics and quantum information science. In quantum simulations of lattice gauge theory, an important step is to construct a wave function that obeys gauge symmetry. In this paper, we have developed gauge equivariant neural network wave function techniques for simulating continuous-variable quantum lattice gauge theories in the Hamiltonian formulation. We have applied the gauge equivariant neural network approach to find the ground state of 2+1-dimensional lattice gauge theory with U(1) gauge group using variational Monte Carlo. We have benchmarked our approach against the state-of-the-art complex Gaussian wave functions, demonstrating improved performance in the strong coupling regime and comparable results in the weak coupling regime.