GW-YOLO: Multi-transient segmentation in LIGO using computer vision

Authors

Siddharth Soni, Nikhil Mukund, Erik Katsavounidis

Abstract

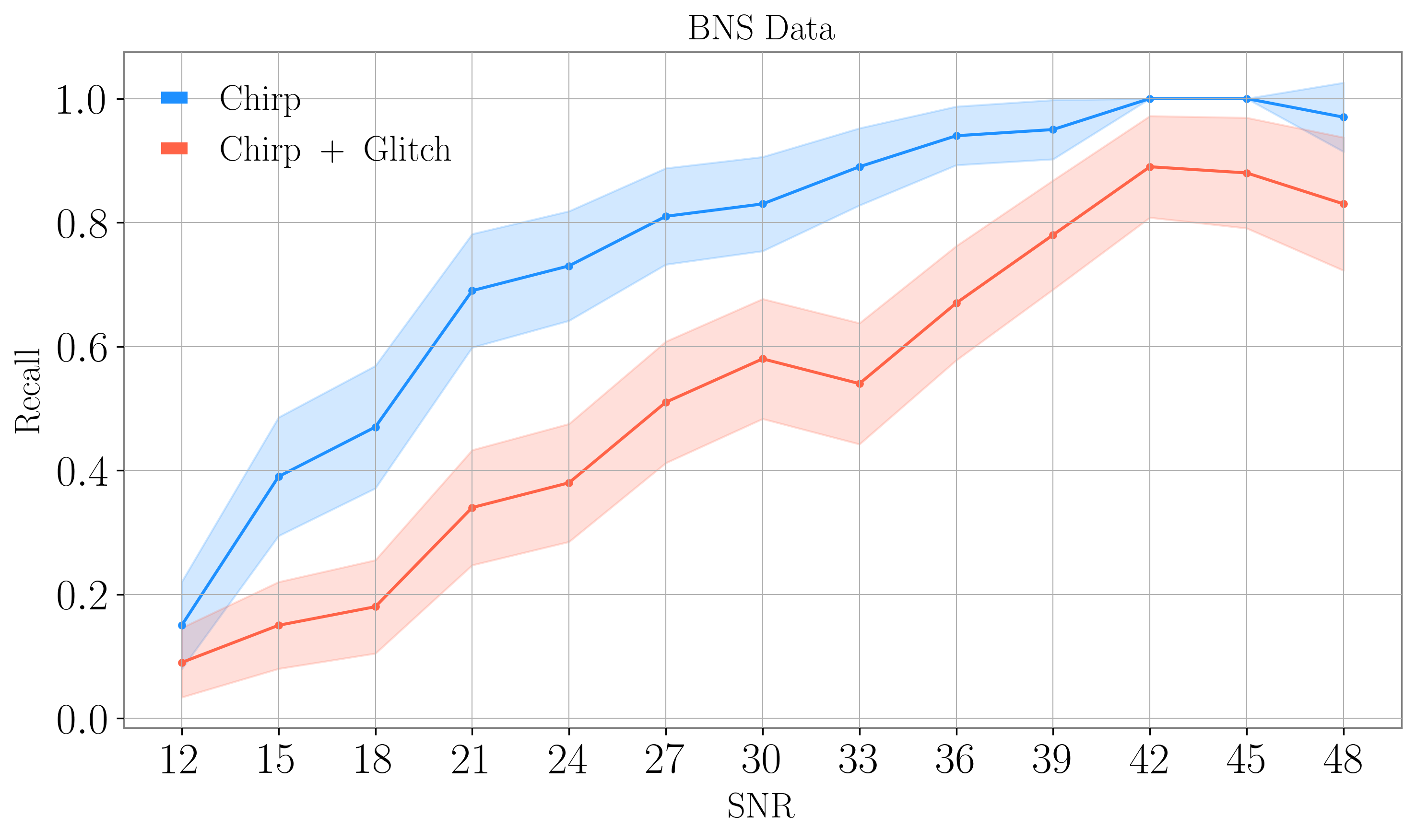

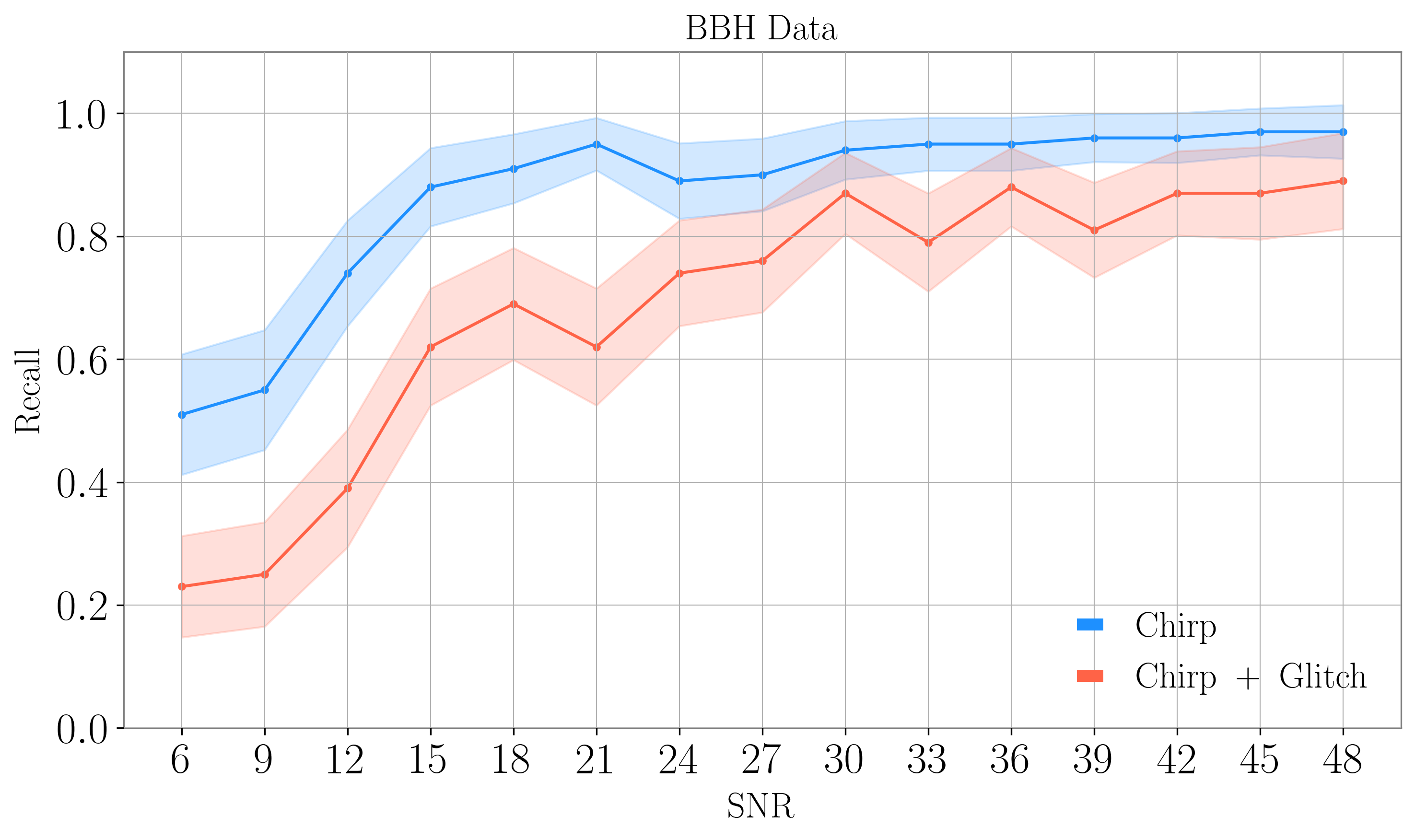

Time series data and their time-frequency representation from gravitational-wave interferometers present multiple opportunities for the use of artificial intelligence methods associated with signal and image processing. Closely connected with this is the real-time aspect associated with gravitational-wave interferometers and the astrophysical observations they perform; the discovery potential of these instruments can be significantly enhanced when data processing can be achieved in O(1s) timescales. In this work, we introduce a novel signal and noise identification tool based on the YOLO (You Only Look Once) object detection framework. For its application into gravitational waves, we will refer to it as GW-YOLO. This tool can provide scene identification capabilities and essential information regarding whether an observed transient is any combination of noise and signal. Additionally, it supplies detailed time-frequency coordinates of the detected objects in the form of pixel masks, an essential property that can be used to understand and characterize astrophysical sources, as well as instrumental noise. The simultaneous identification of noise and signal, combined with precise pixel-level localization, represents a significant advancement in gravitational-wave data analysis. Our approach yields a 50\% detection efficiency for binary black hole signals at a signal-to-noise ratio (SNR) of 15 when such signals overlap with transient noise artifacts. When noise artifacts overlap with binary neutron star signals, our algorithm attains 50\% detection efficiency at an SNR of 30. This presents the first quantitative assessment of the ability to detect astrophysical events overlapping with realistic, instrument noise present in gravitational-wave interferometers.

Concepts

The Big Picture

Imagine trying to identify a faint whisper in a crowded stadium while someone nearby blows an air horn. That’s roughly the challenge facing scientists at LIGO every time a gravitational wave from a black hole merger arrives at the same moment as a nearby “glitch,” an instrumental noise spike. It happened famously with GW170817, the historic first detection of colliding neutron stars in 2017. A loud glitch at the LIGO Livingston detector forced scientists to remove the noise by hand before they could properly analyze the event. That process took days.

Now, researchers at MIT have built something that might make that laborious manual cleanup a relic of the past. Their tool, GW-YOLO, borrows a computer vision algorithm originally designed to spot objects in photographs and repurposes it to scan the time-frequency “pictures” that LIGO’s data naturally produces, identifying gravitational wave signals and noise glitches simultaneously, in about a second.

It is the first system to quantitatively measure how well we can detect cosmic merger events when they overlap with realistic instrument noise. As next-generation detectors come online, that problem is only going to get worse.

Key Insight: GW-YOLO treats gravitational wave data like a photograph, using real-time object detection to simultaneously find and outline both astrophysical signals and noise glitches in LIGO’s time-frequency images — even when they overlap.

How It Works

LIGO’s raw measurement, strain data, records how much space-time is stretched or squeezed by a passing gravitational wave. That signal gets transformed into visual Q-scans: time-frequency spectrograms that look like colorful heat maps. Bright blobs and streaks reveal where energy concentrates across different frequencies and times. An experienced physicist can glance at one and immediately recognize the characteristic chirp of a binary merger or the blotchy smear of a common glitch. GW-YOLO learns to do the same thing, automatically.

The backbone of the system is YOLO (You Only Look Once), a deep neural network architecture famous in computer vision for its speed. Earlier detection methods scan an image in multiple passes. YOLO processes the entire image in a single forward pass, one sweep through the network’s layers, enabling real-time performance. The team adapted this framework for gravitational-wave data, training it to recognize four classes of objects in Q-scan images:

- Binary black hole (BBH) signals

- Binary neutron star (BNS) signals

- Blip glitches (the most common noise transient type)

- Koi Fish glitches (a longer, more complex noise morphology)

GW-YOLO doesn’t just draw a box around what it finds. It produces pixel-level segmentation masks, precise outlines of each detected object in time-frequency space. Knowing exactly which pixels belong to a glitch versus a real signal is the first step toward automated noise subtraction, the kind of operation that took days of expert labor during GW170817.

Training required constructing a realistic synthetic dataset. The team injected simulated BBH and BNS waveforms (the predicted time-frequency shapes of gravitational wave signals) into real LIGO noise across a range of signal-to-noise ratios (SNR), then overlaid those injections with real glitches drawn from LIGO’s own archives. The model learned not just what signals look like in isolation, but what they look like tangled together with noise. That’s the scenario that actually matters.

The performance benchmarks are honest about the difficulty. For binary black hole signals overlapping with glitches, GW-YOLO achieves 50% detection efficiency at SNR 15, reliably spotting relatively loud events. For binary neutron star signals, which sweep through more frequencies over longer durations, the 50% threshold rises to SNR 30. BNS signals are inherently harder to disentangle from an overlapping glitch, and the numbers reflect that physical reality.

Why It Matters

LIGO and its partners are already planning the next generation of gravitational-wave detectors: the Cosmic Explorer in the United States and the Einstein Telescope in Europe. These instruments will be so sensitive they could detect hundreds of binary neutron star mergers every day. At those rates, glitch overlap stops being a rare annoyance and becomes a routine problem.

The current approach, expert humans with specialized software working case by case, simply won’t scale.

GW-YOLO points toward a different kind of pipeline: one that ingests raw LIGO data, produces Q-scans, runs them through the neural network in under a second, and outputs labeled masks telling downstream tools exactly what’s signal and what’s noise. Those pixel masks can feed directly into noise-subtraction algorithms, enabling automated glitch removal that today still requires human oversight. The precise time-frequency coordinates could also help pin down the physical properties of merging objects, since a signal’s shape encodes information about masses, spins, and distances.

Open questions remain. The current system handles two glitch classes and two signal types; real LIGO data contains dozens of distinct glitch morphologies. Extending GW-YOLO’s vocabulary will require more training data and careful validation. Very low-SNR signals, exactly the regime where third-generation detectors will push us, also remain challenging. But as a proof of concept and a first quantitative baseline, the groundwork is laid.

Bottom Line: A computer vision algorithm running in real time can simultaneously identify gravitational wave signals and noise glitches in LIGO data, even when they overlap. GW-YOLO provides the first quantitative benchmark for this capability and a blueprint for the automated pipelines that next-generation detectors will need.

IAIFI Research Highlights

GW-YOLO brings modern computer vision (the YOLO object detection framework) into experimental gravitational-wave physics, applying image segmentation to one of LIGO's most pressing real-world data analysis challenges.

The work extends instance segmentation to a new scientific domain, showing that pixel-level object detection generalizes to physics-derived time-frequency images with strong multi-class discrimination under overlapping, low-SNR conditions.

Automated, real-time separation of astrophysical signals from instrumental noise directly improves LIGO's ability to characterize merger events, particularly in the overlap scenarios that compromised the landmark GW170817 binary neutron star detection.

As third-generation detectors like Cosmic Explorer and Einstein Telescope approach, scalable automated glitch handling becomes essential. GW-YOLO establishes the quantitative baseline and architecture that future pipelines can build on.

Original Paper Details

GW-YOLO: Multi-transient segmentation in LIGO using computer vision

[arXiv:2508.17399](https://arxiv.org/abs/2508.17399)

Siddharth Soni, Nikhil Mukund, Erik Katsavounidis