Hierarchical Neural Simulation-Based Inference Over Event Ensembles

Authors

Lukas Heinrich, Siddharth Mishra-Sharma, Chris Pollard, Philipp Windischhofer

Abstract

When analyzing real-world data it is common to work with event ensembles, which comprise sets of observations that collectively constrain the parameters of an underlying model of interest. Such models often have a hierarchical structure, where "local" parameters impact individual events and "global" parameters influence the entire dataset. We introduce practical approaches for frequentist and Bayesian dataset-wide probabilistic inference in cases where the likelihood is intractable, but simulations can be realized via a hierarchical forward model. We construct neural estimators for the likelihood(-ratio) or posterior and show that explicitly accounting for the model's hierarchical structure can lead to significantly tighter parameter constraints. We ground our discussion using case studies from the physical sciences, focusing on examples from particle physics and cosmology.

Concepts

The Big Picture

Imagine you’re a detective trying to identify a suspect, but you’re only allowed to interview witnesses one at a time, and you can’t compare notes. You’d get clues, but you’d miss the pattern that emerges when all the testimony is viewed together: the consistency that confirms a story, or the contradiction that blows it apart. Now swap the crime scene for a particle collider, the witnesses for individual collision events, and the suspect for a fundamental parameter of the universe. A new set of machine learning methods is finally letting scientists compare notes.

Physicists analyzing collider data or astronomical observations routinely work with event ensembles: large collections of individual measurements that, taken together, constrain some underlying physical model. These models have a layered structure. Some parameters are “global,” shaping every event in a dataset, while others are “local,” relevant only to a single measurement. Traditional approaches often treat each event in isolation before combining results, a shortcut that can leave much of the full dataset’s constraining power unused.

There’s a second problem. The likelihood functions physicists use to calculate how probable a dataset is under a given theory are often impossible to compute exactly for complex simulations. You can run the simulation and observe the output, but you can’t write down its probability in closed form.

Researchers from TU Munich, MIT, IAIFI, and the University of Chicago have built a framework that tackles both problems at once, using neural networks that explicitly respect the model’s layered structure to extract much tighter parameter constraints.

Key Insight: By training neural networks on entire event ensembles while respecting the hierarchical structure of the underlying physics model, the authors achieve substantially tighter parameter constraints than methods that analyze events one by one.

How It Works

The core challenge is simulation-based inference (SBI): learning about a model when you can run simulations but can’t compute exact probabilities. Traditional SBI trains a neural network to estimate likelihoods from single observations. But real physics datasets don’t come as isolated points. They come as ensembles of many events, jointly constrained by the same global parameters.

The authors formalize the layered structure mathematically. Every dataset is governed by global parameters θ (such as a particle interaction cross-section or dark matter mass) and per-event local parameters z_i, each specific to one measurement. A naive approach handles local parameters one event at a time, then multiplies the per-event results. The authors show that this is valid only under specific conditions, and that violating those conditions leads to systematically biased or miscalibrated results.
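The global/local split can be made concrete with a toy forward simulator. This is a minimal sketch under assumed distributions, not a model from the paper: the function name `simulate_ensemble`, the Gaussian choices, and the widths 1.0 and 0.5 are all illustrative assumptions.

```python
import numpy as np

def simulate_ensemble(theta, n_events, rng):
    """Toy hierarchical forward model (illustrative assumption, not the paper's)."""
    # Local parameters: one latent z_i per event, drawn conditionally on the global theta.
    z = rng.normal(loc=theta, scale=1.0, size=n_events)
    # Observations: each event x_i depends only on its own local parameter z_i.
    x = rng.normal(loc=z, scale=0.5, size=n_events)
    return z, x

rng = np.random.default_rng(0)
z, x = simulate_ensemble(theta=2.0, n_events=1000, rng=rng)
```

Only `x` would be observed in practice. The local parameters `z` are latent, which is why the likelihood of `x` given `theta` involves an integral over every `z_i` and is generally intractable for realistic simulators.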

Their solution comes in two flavors:

- Frequentist approach: They build a neural network that directly estimates the profile likelihood ratio across an entire ensemble at once. The profile likelihood ratio is a score comparing how well two competing hypotheses explain the full dataset after optimizing over unknown nuisance parameters. This is the first end-to-end frequentist SBI method for hierarchical set-valued data.

- Bayesian approach: They train networks to estimate the full posterior distribution over global parameters, given a whole set of events simultaneously. The posterior is a probability map showing which parameter values are most consistent with the observed data. The network sees sets of observations as input, not individual points.
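To make the profile likelihood ratio from the frequentist approach concrete, here is a minimal sketch on a case where it can be computed in closed form: a Gaussian dataset whose mean is the parameter of interest and whose variance is a nuisance parameter. This tractable toy is an assumption chosen for illustration. It is not the paper's neural estimator, which targets the same kind of quantity when no closed form exists.

```python
import numpy as np

def neg2_log_profile_lr(x, mu):
    """-2 log profile likelihood ratio for a Gaussian mean, variance profiled out."""
    n = x.size
    var_at_mu = np.mean((x - mu) ** 2)        # best-fit variance with mu held fixed
    var_global = np.mean((x - x.mean()) ** 2)  # best-fit variance at the best-fit mean
    return n * np.log(var_at_mu / var_global)

rng = np.random.default_rng(1)
x = rng.normal(loc=0.3, scale=2.0, size=500)

# Scan the test statistic over candidate values of the mean.
mu_grid = np.linspace(-1.0, 1.5, 251)
t = np.array([neg2_log_profile_lr(x, mu) for mu in mu_grid])

# Approximate 68% confidence interval: t <= 1 (asymptotic, via Wilks' theorem).
interval = mu_grid[t <= 1.0]
```

The statistic uses the whole dataset at once: the nuisance parameter is re-optimized for every tested value of `mu`, which is the step that a per-event treatment cannot reproduce.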

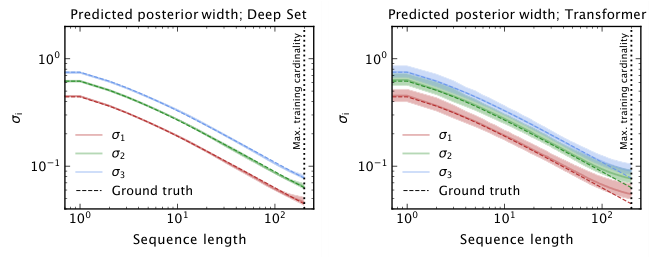

To handle sets of variable size, the architecture uses permutation-invariant networks: models that produce the same output regardless of how you shuffle the inputs. The network aggregates information across events using learned summary statistics, then maps that aggregate to a parameter estimate.
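The embed-then-pool pattern described above can be sketched in a few lines. This is a minimal illustration with untrained random weights, assuming two features per event and a 16-dimensional embedding. The names `embed` and `set_network` are hypothetical, and the paper's actual architecture may differ.

```python
import numpy as np

rng = np.random.default_rng(0)
# Untrained random weights stand in for learned parameters (hypothetical sizes).
W_phi = rng.normal(size=(2, 16))   # per-event embedding
W_rho = rng.normal(size=(16, 1))   # aggregate-to-output map

def embed(events):
    """Apply the same embedding to every event: (n_events, 2) -> (n_events, 16)."""
    return np.tanh(events @ W_phi)

def set_network(events):
    """Permutation-invariant map from a set of events to a single output."""
    summary = embed(events).mean(axis=0)  # pooling discards event order
    return summary @ W_rho

events = rng.normal(size=(50, 2))
shuffled = events[rng.permutation(len(events))]

out_original = set_network(events)
out_shuffled = set_network(shuffled)              # same set, different order
out_small = set_network(rng.normal(size=(7, 2)))  # a set of different size
```

Mean pooling also discards the number of events. When the event count itself carries information, as in a Poisson process, one option is to use sum pooling or to pass the count as an extra input.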

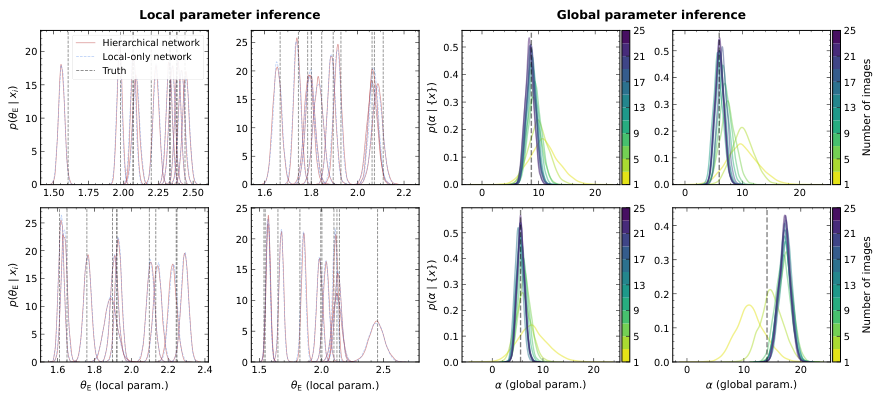

The team validates these methods across three progressively complex case studies. First, a toy Gaussian mixture model where the correct answer is known analytically. Second, a particle physics scenario involving a marked Poisson process, a collider model where the number of events itself carries information about a signal rate. Third and hardest: strong gravitational lensing, where telescope images of galaxies distorted by a foreground mass must be analyzed to infer properties of that mass.
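The second case study's structure, in which both the event count and the per-event measurements carry information, can be sketched with a toy simulator. The signal and background shapes, the expected yields, and the name `simulate_marked_poisson` are assumptions for illustration rather than the paper's setup.

```python
import numpy as np

def simulate_marked_poisson(mu, rng, n_sig_expected=20.0, n_bkg_expected=100.0):
    """Toy marked Poisson process: a random number of events, each with one mark."""
    rate = mu * n_sig_expected + n_bkg_expected
    # The number of events is itself random and depends on the signal strength mu.
    n_events = rng.poisson(rate)
    # Each event is signal with probability equal to the signal fraction of the rate.
    is_signal = rng.random(n_events) < mu * n_sig_expected / rate
    # Marks: assumed Gaussian peak for signal, falling exponential for background.
    marks = np.where(
        is_signal,
        rng.normal(1.0, 0.3, n_events),
        rng.exponential(1.0, n_events),
    )
    return marks

rng = np.random.default_rng(2)
marks = simulate_marked_poisson(mu=1.0, rng=rng)
```

Repeated calls return arrays of different lengths, which is why the inference network has to accept variable-size sets.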

In all three cases, the pattern is the same: hierarchy-aware inference produces tighter, better-calibrated constraints than naive event-by-event combination.

In the gravitational lensing application, the hierarchical approach yields meaningfully narrower posterior distributions, translating directly into better science per photon collected. The method is also much faster than Markov Chain Monte Carlo (MCMC) sampling, a standard but computationally intensive technique for exploring complex probability spaces, and this speed advantage holds even when the likelihood is tractable. It further enables streaming inference, where the posterior updates in real time as new observations arrive without reanalyzing old data.
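Streaming updates work whenever each event's contribution adds to a running total. The sketch below assumes events that are independent given θ and uses an analytic Gaussian log-likelihood on a parameter grid as a stand-in for a learned per-event term. The class `StreamingPosterior` and the variance 1.25 are hypothetical choices, not the paper's implementation.

```python
import numpy as np

class StreamingPosterior:
    """Grid posterior over a global parameter, updated one batch of events at a time."""

    def __init__(self, theta_grid, log_prior):
        self.theta_grid = theta_grid
        self.log_post = log_prior.copy()  # unnormalized log posterior on the grid

    def update(self, x_new, var=1.25):
        x_new = np.atleast_1d(x_new)
        # Add the log-likelihood of the new events only; old events are never revisited.
        resid = x_new[:, None] - self.theta_grid[None, :]
        self.log_post += np.sum(-0.5 * resid**2 / var, axis=0)

    def posterior_mean(self):
        w = np.exp(self.log_post - self.log_post.max())  # stabilized weights
        return np.sum(w * self.theta_grid) / np.sum(w)

rng = np.random.default_rng(3)
x = rng.normal(2.0, np.sqrt(1.25), size=200)
grid = np.linspace(0.0, 4.0, 401)

# Feed events one at a time, as they would arrive from an experiment.
stream = StreamingPosterior(grid, np.zeros_like(grid))
for xi in x:
    stream.update(xi)

# Processing the full dataset in one pass gives the same posterior.
batch = StreamingPosterior(grid, np.zeros_like(grid))
batch.update(x)
```

The streamed and batch results agree because the update is a sum over events, so only the running total needs to be stored between updates.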

Why It Matters

Physics has always demanded rigorous statistical inference under complex models. Machine learning has recently provided powerful tools for approximating intractable functions. But the real gains come from combining these two properly: not just attaching a neural network to an existing pipeline, but redesigning inference to respect the model’s actual structure.

The approach applies well beyond particle physics and cosmology. Any domain with layered data-generating processes (population genetics, epidemiology, exoplanet surveys, gravitational wave astronomy) could use the same techniques. As simulation-based inference matures, the principle here will likely become standard practice: learn from ensembles as ensembles, not as collections of individuals.

Open questions remain around scaling to truly enormous datasets and handling cases where the layered structure is itself uncertain or misspecified. But the foundation is solid.

Bottom Line: By building neural estimators that treat entire event ensembles as first-class inputs and explicitly exploit hierarchical model structure, this work delivers significantly tighter physics constraints, faster than MCMC and without requiring a tractable likelihood.

IAIFI Research Highlights

This work connects modern machine learning (permutation-invariant neural networks, amortized inference) with the statistical needs of large-scale physics experiments, showing what becomes possible when AI tools are designed with physics structure in mind.

The paper introduces the first end-to-end frequentist simulation-based inference method for hierarchical set-valued data, advancing the SBI literature with architectures that generalize to variable-cardinality inputs and enable real-time streaming posterior updates.

By enabling tighter, properly calibrated parameter constraints from particle collider data and gravitational lensing images, the methods improve the sensitivity of searches for new physics and dark matter signatures.

Future directions include scaling to higher-dimensional parameter spaces and datasets from next-generation experiments like the Vera C. Rubin Observatory. The full methodology is available at [arXiv:2306.12584](https://arxiv.org/abs/2306.12584).

Original Paper Details

Hierarchical Neural Simulation-Based Inference Over Event Ensembles

arXiv:2306.12584

Lukas Heinrich, Siddharth Mishra-Sharma, Chris Pollard, Philipp Windischhofer
