Infinite Variance in Monte Carlo Sampling of Lattice Field Theories

Authors

Cagin Yunus, William Detmold

Abstract

In Monte Carlo calculations of expectation values in lattice quantum field theories, the stochastic variance of the sampling procedure that is used defines the precision of the calculation for a fixed number of samples. If the variance of an estimator of a particular quantity is formally infinite, or in practice very large compared to the square of the mean, then that quantity can not be reliably estimated using the given sampling procedure. There are multiple scenarios in which this occurs, including in Lattice Quantum Chromodynamics, and a particularly simple example is given by the Gross-Neveu model where Monte Carlo calculations involve the introduction of auxiliary bosonic variables through a Hubbard-Stratonovich (HS) transformation. Here, it is shown that the variances of HS estimators for classes of operators involving fermion fields are divergent in this model and an even simpler zero-dimensional analogue. To correctly estimate these observables, two alternative sampling methods are proposed and numerically investigated.

Concepts

The Big Picture

Imagine measuring the average height of people in a room, but every so often, a giant walks in, someone ten thousand feet tall, destroying your running average. You could take a million measurements and still never get a reliable answer. This is the statistical nightmare physicists face when calculating certain properties of the subatomic world using Monte Carlo sampling, a method that uses random numbers to numerically estimate quantities too complex to solve analytically.

In quantum field theory, the mathematical framework describing particles and forces at their most fundamental level, physicists can’t just solve equations on a whiteboard. Instead, they chop spacetime into a discrete grid called a lattice and use Monte Carlo sampling to estimate physical quantities numerically.

It works beautifully in many cases. But sometimes the very structure of the theory causes the variance of the estimator (the spread in your answers) to be formally infinite. When that happens, the usual error bars stop meaning anything, and no realistic amount of computing power gives a reliable result. You can keep running your simulation and never see the estimate settle down.

Cagin Yunus and William Detmold at MIT’s Center for Theoretical Physics have pinpointed exactly why this infinite-variance problem arises in specific theories, particularly the Gross-Neveu model and, more broadly, Lattice QCD, and proposed two concrete fixes.

Key Insight: In certain lattice field theories, standard Monte Carlo estimators for fermion-field operators have formally infinite variance, not because of numerical errors, but because of the mathematical structure of the sampling procedure itself. Error bars computed from any finite number of samples cannot be trusted.

How It Works

The problem starts with how physicists handle fermions, particles like quarks and electrons governed by the Pauli exclusion principle, which forbids two fermions from occupying the same quantum state. Fermions are notoriously difficult to simulate directly. The standard workaround is the Hubbard-Stratonovich (HS) transformation: introduce an auxiliary bosonic field, a fictional particle type without the Pauli restriction, that replaces complicated four-fermion interaction terms with simpler terms that are only quadratic in the fermion fields. The transformation is mathematically exact, but it changes what you’re sampling over.
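To make the transformation concrete, here is the Gaussian identity it rests on, written for a single commuting quantity A. Reading A as a coupling times a fermion bilinear at one lattice site, and the particular normalization and sign conventions, are assumptions made for illustration and may differ from the paper’s.

```latex
% Gaussian (Hubbard-Stratonovich) identity for a commuting quantity A,
% e.g. A = g \bar\psi\psi at one lattice site (normalization assumed):
e^{A^{2}/2} \;=\; \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} d\sigma \;
    e^{-\sigma^{2}/2 \,+\, \sigma A}
% The left side is quartic in the fermion fields; the right side is only
% quadratic in them, at the cost of an integral over the auxiliary field \sigma.
```

Once the fermions appear only quadratically they can be integrated out exactly, leaving a determinant of a σ-dependent Dirac operator in the sampling weight and its inverse in the fermion propagators.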

The Gross-Neveu model is a 2D quantum field theory that shares key structural features with QCD (the theory of the strong nuclear force) and is widely used as a theoretical testbed. In this model, the HS transformation introduces a continuous auxiliary field, and Monte Carlo then samples configurations of that field.

Here’s the trouble. When the Dirac operator (the matrix governing fermion behavior) has eigenvalues close to zero, so-called “exceptional configurations,” the estimator for fermion propagators blows up. Yunus and Detmold prove this rigorously: for operators built from fermion fields, the HS estimators have divergent second moments, meaning variance is not merely large but formally infinite. The fallout:

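- The Central Limit Theorem no longer applies in its usual form, so sample averages lose their 1/√N convergence and Gaussian error bars are no longer justified

- Sample variance never stabilizes: it can look calm for long stretches, then jump when a rare near-zero eigenvalue is sampled

- No finite number of Monte Carlo samples yields a statistically reliable uncertainty estimate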
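The symptoms listed above can be reproduced with a deliberately simple stand-in. The sketch below is not the Gross-Neveu estimator: it assumes a hypothetical observable O(x) = x^(-0.6) with x drawn uniformly from (0, 1], chosen only because its mean is finite (exactly 2.5) while its second moment diverges. The names `ALPHA`, `truncated_second_moment`, and `sample_stats` are illustrative.

```python
import random

ALPHA = 0.6  # hypothetical tail exponent; any value in (0.5, 1) gives finite mean, infinite variance


def exact_mean():
    # E[x**-ALPHA] for x uniform on (0, 1]
    return 1.0 / (1.0 - ALPHA)


def truncated_second_moment(eps):
    # Integral of x**(-2*ALPHA) over [eps, 1]; it grows without bound as eps -> 0,
    # which is what "formally infinite variance" means for this toy observable.
    p = 2.0 * ALPHA - 1.0
    return (eps ** (-p) - 1.0) / p


def sample_stats(n, seed=0):
    # Naive Monte Carlo: sample mean and sample variance from n draws.
    rng = random.Random(seed)
    values = [(1.0 - rng.random()) ** (-ALPHA) for _ in range(n)]
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / (n - 1)
    return mean, var


if __name__ == "__main__":
    for n in (10**3, 10**4, 10**5):
        mean, var = sample_stats(n)
        print(n, round(mean, 3), round(var, 1))
```

Running it with different seeds shows the sample variance depending strongly on whether a very small x happened to be drawn, so the reported error bar says little about the true uncertainty.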

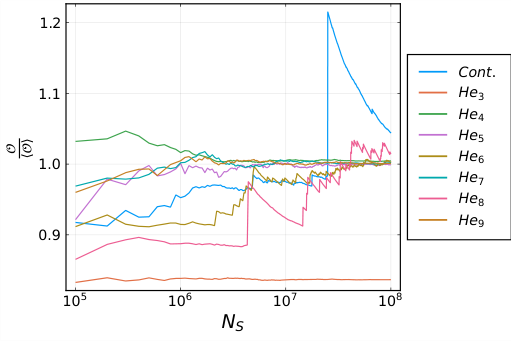

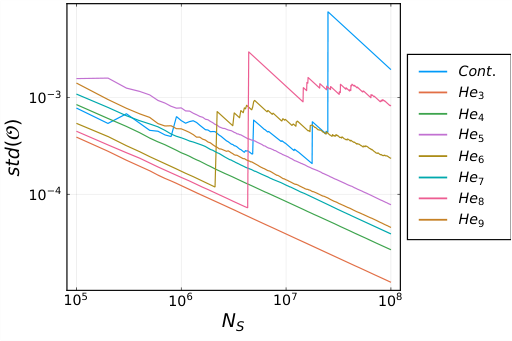

The authors first study a zero-dimensional toy model, stripping away spacetime entirely and keeping only the algebraic structure. Because the toy model is analytically tractable, they can verify their results against exact calculations before testing two proposed solutions on the full 2D theory.

Solution 1: Discrete Hubbard-Stratonovich. Replace the continuous auxiliary field with one taking only a finite set of values. Variance becomes finite by construction since no single sample can produce an infinite contribution. The tradeoff is that the number of configurations grows exponentially with system size, limiting scalability. For small systems, though, it works well.
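One way to see why a discrete auxiliary field can still be exact: on a finite lattice, powers of a fermion bilinear vanish beyond a finite order, so only finitely many Gaussian moments of the auxiliary field ever matter. The sketch below checks that a three-point rule reproduces the first few moments of a standard normal. The nodes and weights are the textbook three-point Gauss-Hermite rule, used here as an illustrative assumption and not taken from the paper.

```python
import math


def gaussian_moment(k):
    # k-th moment of a standard normal: 0 for odd k, (k-1)!! for even k
    if k % 2 == 1:
        return 0.0
    result = 1.0
    for j in range(k - 1, 0, -2):
        result *= j
    return result


# Three-point Gauss-Hermite rule for the standard normal weight (illustrative choice)
NODES = (-math.sqrt(3.0), 0.0, math.sqrt(3.0))
WEIGHTS = (1.0 / 6.0, 2.0 / 3.0, 1.0 / 6.0)


def discrete_moment(k):
    # k-th moment of the discrete auxiliary variable
    return sum(w * s ** k for s, w in zip(NODES, WEIGHTS))


if __name__ == "__main__":
    for k in range(8):
        print(k, gaussian_moment(k), round(discrete_moment(k), 6))
```

The moments agree through order 5 and first differ at order 6, so this three-valued field would be an exact replacement only when the bilinear’s sixth power vanishes. More nodes per site are needed otherwise.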

Solution 2: Sequential Reweighting. For physical quantities that are always zero or positive (covering many observables in these theories), write the mean of a high-variance quantity as a product of means of lower-variance quantities. Instead of estimating one wildly fluctuating number directly, you decompose it into a chain: estimate the first factor, use those results to estimate the second conditioned on the first, and so on. Each factor has finite variance, even when the direct estimate does not.
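A minimal sketch of the product-of-means idea, on the same kind of toy problem and not the lattice theory. It assumes a hypothetical non-negative observable O(x) = x^(-0.6) with x uniform on (0, 1], and one possible decomposition: a ladder of K intermediate distributions p_k proportional to p·O^(k/K), so that the mean of O equals the product over k of the mean of O^(1/K) under p_k. The paper’s construction may differ in detail. The exact inverse-CDF sampling used here is a toy convenience; in a lattice calculation each stage would need its own Markov chain.

```python
import random

ALPHA = 0.6  # hypothetical exponent: O(x) = x**-ALPHA, x uniform on (0, 1]
K = 4        # number of stages (assumption); requires ALPHA * (K + 1) / K < 1


def sample_stage(k, rng):
    # Draw x from p_k(x) proportional to x**(-ALPHA * k / K) on (0, 1]
    # by inverting its CDF, F(x) = x**(1 - a_k).
    a_k = ALPHA * k / K
    u = 1.0 - rng.random()  # u in (0, 1], avoids an exact zero
    return u ** (1.0 / (1.0 - a_k))


def sequential_estimate(n_per_stage, seed=0):
    # Estimate E[O] as a product of K means, each of O**(1/K) under p_k.
    rng = random.Random(seed)
    product = 1.0
    for k in range(K):
        total = 0.0
        for _ in range(n_per_stage):
            x = sample_stage(k, rng)
            total += x ** (-ALPHA / K)
        product *= total / n_per_stage
    return product


if __name__ == "__main__":
    print("sequential estimate:", sequential_estimate(20000))
    print("exact mean:", 1.0 / (1.0 - ALPHA))
```

With these values every factor has a finite second moment, so each stage obeys the ordinary Central Limit Theorem even though the direct estimator of O does not.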

In numerical tests, sequential reweighting correctly recovers exact answers where the standard HS estimator fails completely. What makes the failure especially treacherous is that the standard estimator’s sample variance can appear well-behaved, hiding the underlying divergence.

Why It Matters

This isn’t abstract. Lattice QCD, the numerical framework for the theory of the strong nuclear force that binds protons and neutrons, faces related infinite-variance problems from zero-modes of the Dirac operator. Nuclear correlation functions built from large numbers of quark fields are essential for understanding atomic nuclei from first principles, and they hit exactly this wall. The more quarks involved, the worse the problem gets.

The root cause is structural. Whenever you use a continuous HS transformation to handle fermionic interactions, you potentially introduce infinite-variance estimators. This spans condensed matter physics (the Hubbard model, central to theories of high-temperature superconductivity) and particle physics alike. Sequential reweighting is general enough to apply well beyond the Gross-Neveu model, offering a practical path forward where discrete HS transformations become computationally infeasible.

Open questions remain. The discrete HS approach needs a way to scale to larger volumes, perhaps through importance sampling within the discrete space. Both methods also need testing in full 4D lattice QCD, where the computational stakes are highest.

Bottom Line: Yunus and Detmold have identified a fundamental statistical failure mode in Monte Carlo simulations of lattice field theories and offered two workable remedies. Their sequential reweighting method opens a path toward reliable first-principles calculations of fermion observables that were previously out of reach.

IAIFI Research Highlights

This work connects rigorous statistical theory with lattice quantum field theory, using tools from probability and stochastic analysis to diagnose and fix a fundamental computational problem in nuclear and particle physics.

The sequential reweighting framework offers a general strategy for taming infinite-variance estimators in Monte Carlo methods, with potential applications wherever importance sampling and stochastic estimation face similar breakdown modes, including in machine learning.

By characterizing and resolving infinite-variance failures in the Gross-Neveu model and outlining the path to Lattice QCD, this work improves prospects for reliable first-principles calculations of hadronic and nuclear observables from the strong force.

Future work will extend these methods to larger spacetime volumes and full 4D lattice QCD; the paper is available at [arXiv:2205.01001](https://arxiv.org/abs/2205.01001).