Learning the Simplicity of Scattering Amplitudes

Authors

Clifford Cheung, Aurélien Dersy, Matthew D. Schwartz

Abstract

The simplification and reorganization of complex expressions lies at the core of scientific progress, particularly in theoretical high-energy physics. This work explores the application of machine learning to a particular facet of this challenge: the task of simplifying scattering amplitudes expressed in terms of spinor-helicity variables. We demonstrate that an encoder-decoder transformer architecture achieves impressive simplification capabilities for expressions composed of handfuls of terms. Lengthier expressions are implemented in an additional embedding network, trained using contrastive learning, which isolates subexpressions that are more likely to simplify. The resulting framework is capable of reducing expressions with hundreds of terms - a regular occurrence in quantum field theory calculations - to vastly simpler equivalent expressions. Starting from lengthy input expressions, our networks can generate the Parke-Taylor formula for five-point gluon scattering, as well as new compact expressions for five-point amplitudes involving scalars and gravitons. An interactive demonstration can be found at https://spinorhelicity.streamlit.app.

Concepts

The Big Picture

Imagine writing a proof that fills fifty pages, only to discover it fits on a napkin. That’s essentially what happens in theoretical particle physics, routinely.

When physicists calculate how particles scatter off each other, they use Feynman diagrams, pictorial rules that translate each possible interaction into a mathematical term. The diagrams are powerful, but the intermediate algebra can sprawl into hundreds or thousands of terms. Yet the final answer is often shockingly compact.

One famous example: the collision of five gluons (the particles that carry the strong force binding quarks inside protons) produces, via Feynman diagrams, a calculation hundreds of lines long. The actual answer, discovered by Parke and Taylor in 1986, is a single elegant fraction. The mess was never real. It was an artifact of how we were looking at the problem.

Finding these hidden simplifications matters beyond aesthetics. When physicists have spotted them in the past, they’ve unlocked entirely new frameworks, from string theory connections to the “amplituhedron,” a geometric object encoding particle interactions without any reference to space or time.

The obstacle: getting from a complicated expression to its simplest form requires searching through astronomically many possible rearrangements. No known algorithm reliably finds the shortest form. You either get lucky, or you spend years staring at equations.

A team from Caltech, Harvard, and IAIFI (Clifford Cheung, Aurélien Dersy, and Matthew D. Schwartz) decided to teach a machine to do the staring. The result: a machine learning framework that takes particle physics expressions containing hundreds of terms and compresses them into their simplest known forms, rediscovering the Parke-Taylor formula along the way.

Key Insight: A transformer network trained to recognize mathematical simplicity can compress particle physics expressions spanning hundreds of terms down to single compact formulas, revealing hidden structure that took human physicists decades to find.

How It Works

The mathematical language here is the spinor-helicity formalism, a compact notation where each particle’s state is encoded as a pair of complex numbers called spinors. The building blocks are angle brackets ⟨ij⟩ and square brackets [ij], representing relationships between pairs of particles. Amplitudes, the quantities that determine how likely different collision outcomes are, look elegant in this notation once simplified but bewilderingly messy before.
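For concreteness, the brackets and the Parke-Taylor result can be written out as below. This is a sketch in one common convention: index placement and overall signs differ between references, and the coupling constant and the momentum-conserving delta function are left out of the amplitude.

```latex
% Angle and square brackets built from two-component spinors
% \lambda_i and \tilde\lambda_i; both are antisymmetric.
\langle ij \rangle = \epsilon_{\alpha\beta}\,\lambda_i^{\alpha}\lambda_j^{\beta},
\qquad
[ij] = \epsilon_{\dot\alpha\dot\beta}\,\tilde\lambda_i^{\dot\alpha}\tilde\lambda_j^{\dot\beta},
\qquad
\langle ij \rangle = -\langle ji \rangle, \quad [ij] = -[ji].

% Parke-Taylor formula for five gluons (color-ordered),
% with gluons 1 and 2 carrying negative helicity:
A(1^-, 2^-, 3^+, 4^+, 5^+) =
\frac{\langle 12 \rangle^{4}}
     {\langle 12 \rangle \langle 23 \rangle \langle 34 \rangle \langle 45 \rangle \langle 51 \rangle}
```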

The same physical quantity can be expressed in countless equivalent ways through identities such as momentum conservation and the Schouten identity. The latter expresses the fact that any three two-component spinors are linearly dependent, so a product of brackets can always be traded for a sum of other products. This creates an astronomically large algebraic search space with no obvious path to simplicity.
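In bracket form, the Schouten identity reads ⟨12⟩⟨34⟩ + ⟨13⟩⟨42⟩ + ⟨14⟩⟨23⟩ = 0. The sketch below checks it numerically at a random point, treating each angle bracket as the 2×2 determinant of two spinors. The helper names (`angle`, `random_spinor`, `schouten_residual`) and the sign convention are assumptions made for illustration; they are not taken from the paper's code.

```python
import random

def angle(a, b):
    """Angle bracket <ab> as the 2x2 determinant of two spinors (one common sign convention)."""
    return a[0] * b[1] - a[1] * b[0]

def random_spinor(rng):
    """A random two-component complex spinor; purely illustrative kinematics."""
    return (complex(rng.uniform(-1, 1), rng.uniform(-1, 1)),
            complex(rng.uniform(-1, 1), rng.uniform(-1, 1)))

def schouten_residual(s1, s2, s3, s4):
    """Left-hand side of <12><34> + <13><42> + <14><23> = 0."""
    return (angle(s1, s2) * angle(s3, s4)
            + angle(s1, s3) * angle(s4, s2)
            + angle(s1, s4) * angle(s2, s3))

rng = random.Random(0)
spinors = [random_spinor(rng) for _ in range(4)]
residual = schouten_residual(*spinors)
print(abs(residual) < 1e-12)  # the identity holds up to floating-point rounding
```

Read as a rewriting rule, the identity says that the two-term expression ⟨12⟩⟨34⟩ + ⟨14⟩⟨23⟩ equals the single term ⟨13⟩⟨24⟩. Applied repeatedly and in different orders, such rewrites generate the large space of equivalent forms described above.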

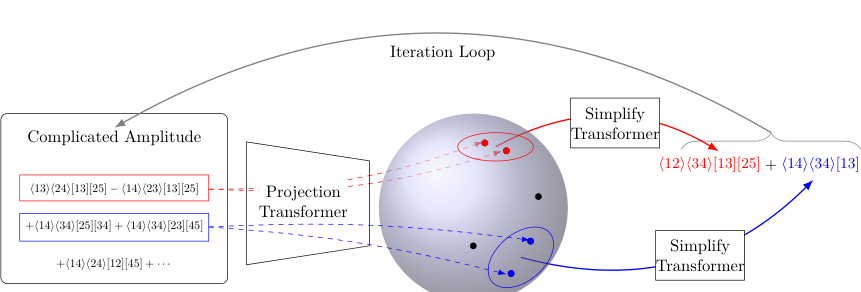

The researchers built their system in two layers, each tackling a different scale of complexity.

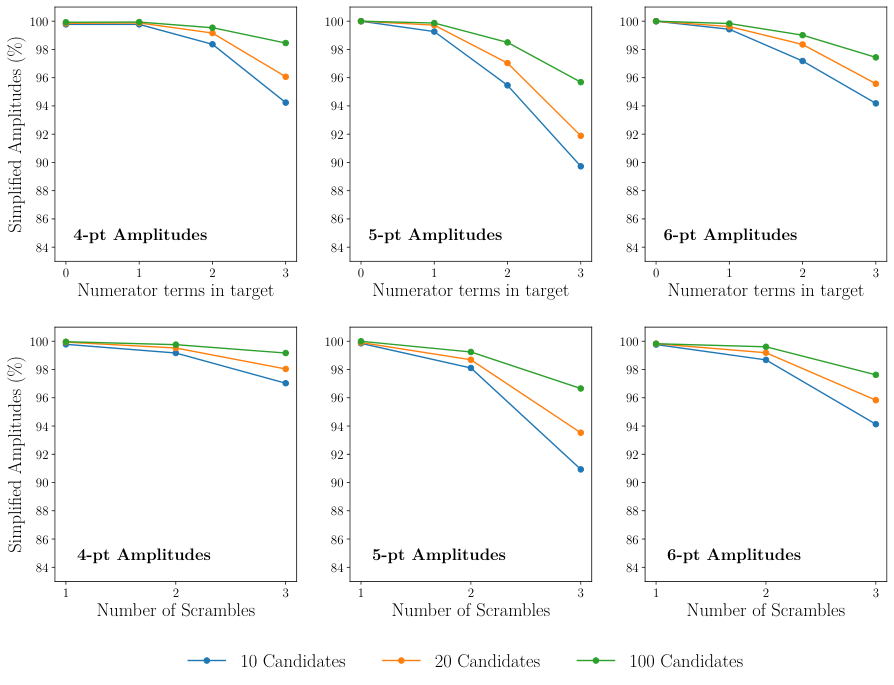

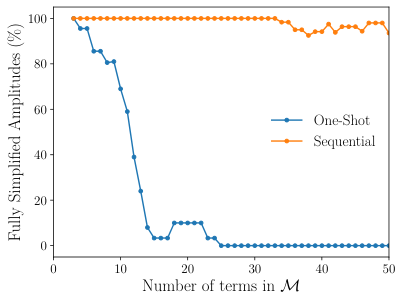

The first layer is a standard encoder-decoder transformer (the same architectural family behind modern language models) trained on pairs of messy input expressions and their known simplified outputs. For expressions up to around a dozen terms, this “one-shot” network reads the full expression and outputs the simplified version directly, token by token. On moderate-length expressions, it achieves impressive simplification rates.

Expressions with hundreds of terms are harder. Feeding the entire expression to a transformer is impractical because the cost of self-attention grows quadratically with sequence length. The researchers’ solution was to mimic how a human expert would approach the problem:

- Scan the expression and identify which terms are likely to combine

- Group those terms into a smaller subset

- Simplify the subset using the trained transformer

- Repeat until nothing remains to simplify

The first two steps rely on an embedding network trained with contrastive learning, a technique widely used in computer vision in which similar items are pulled together in a shared embedding space and dissimilar items are pushed apart. Here, “similar” means “likely to cancel or combine under spinor-helicity identities.” The network learns to place terms close together if they tend to simplify when grouped, and far apart if they don’t. Clustering in this learned space then determines which terms are fed into the simplification transformer at each step.
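To make the "pull together, push apart" idea concrete, here is a minimal sketch of a generic InfoNCE-style contrastive loss on toy two-dimensional embeddings. It is not necessarily the loss used in the paper: the function names, the cosine similarity, the temperature value, and the example vectors are all assumptions chosen for illustration.

```python
import math

def cosine(u, v):
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm

def contrastive_loss(anchor, positive, negatives, temperature=0.1):
    """InfoNCE-style loss: small when the anchor is closer to the positive than to the negatives."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))

# Toy embeddings, made up for illustration.
anchor = [1.0, 0.0]
partner = [0.9, 0.1]                      # a term that simplifies with the anchor
unrelated = [[0.0, 1.0], [-1.0, 0.2]]     # terms that do not

good = contrastive_loss(anchor, partner, unrelated)
bad = contrastive_loss(anchor, unrelated[0], [partner, unrelated[1]])
print(good < bad)  # the loss is lower when the true partner is the nearby one
```

Minimizing a loss of this kind over many examples is what drives terms that simplify together toward nearby points in the embedding space.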

This sequential pipeline (embed, cluster, simplify, repeat) lets the system tackle full-scale expressions from real quantum field theory calculations.
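The control flow of that loop can be sketched as follows. Every component here is a deliberately trivial stand-in: `embed` returns a term's monomial label instead of a learned vector, `cluster` groups identical labels instead of clustering in embedding space, and `simplify_group` only collects like terms instead of calling a trained transformer. All names and the term representation are hypothetical; only the embed, cluster, simplify, repeat structure reflects the description above.

```python
from collections import defaultdict

def embed(term):
    """Stand-in for the learned embedding network: here just the term's monomial label."""
    _coeff, monomial = term
    return monomial

def cluster(terms):
    """Stand-in for clustering in embedding space: group terms with identical embeddings."""
    groups = defaultdict(list)
    for term in terms:
        groups[embed(term)].append(term)
    return list(groups.values())

def simplify_group(group):
    """Stand-in for the simplification transformer: combine like terms, drop zeros."""
    total = sum(coeff for coeff, _ in group)
    monomial = group[0][1]
    return [(total, monomial)] if total != 0 else []

def simplify_expression(terms, max_rounds=10):
    """Repeat embed -> cluster -> simplify until a round no longer shortens the expression."""
    for _ in range(max_rounds):
        new_terms = [t for group in cluster(terms) for t in simplify_group(group)]
        if len(new_terms) == len(terms):  # nothing simplified this round
            return new_terms
        terms = new_terms
    return terms

# Each term is a (coefficient, monomial) pair; the monomials are illustrative strings.
messy = [(2, "<12>[34]"), (-1, "<12>[34]"), (3, "<13>[24]"),
         (-3, "<13>[24]"), (1, "<14>[23]")]
print(simplify_expression(messy))
```

In the actual framework the interesting simplifications come from nontrivial identities rather than like-term collection, which is why the grouping and the rewriting both have to be learned.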

Why It Matters

Starting from Feynman-diagram outputs containing hundreds of terms, the system independently derives the Parke-Taylor formula for five-point gluon scattering. It also produces new compact expressions for five-point amplitudes involving scalars and gravitons, cases where no simple closed form was previously known. These aren’t numerical approximations; the outputs are exact symbolic expressions, verifiable by direct calculation.

But the real payoff is what compact forms make possible. Every time physicists have found a simple amplitude, they’ve eventually understood why it’s simple, and that understanding has revealed new theoretical structure. The amplituhedron, celestial holography, the double-copy relationship between gravity and gauge theory: all emerged from staring at compact expressions and asking what principle they encoded.

If machine learning can now systematically produce those compact forms, it becomes a tool not just for calculation but for discovery.

The contrastive learning approach generalizes, too. The same strategy for learning which subexpressions simplify together could apply to loop-level amplitudes, string theory calculations, and other domains where symbolic simplification matters.

Bottom Line: By combining transformer-based symbolic translation with contrastive-learning-guided search, this framework compresses particle physics calculations by orders of magnitude and may help physicists discover the hidden structures that make the universe computable.

IAIFI Research Highlights

This work applies modern deep learning architectures (transformers and contrastive learning) to a central technical challenge in theoretical high-energy physics, showing that ML can function as a tool for symbolic mathematical discovery, not just numerical approximation.

The contrastive learning strategy for guiding symbolic simplification (learning which subexpressions are likely to simplify together) offers a broadly applicable template for using learned representations to navigate combinatorially complex algebraic search spaces.

The system independently rediscovers the Parke-Taylor formula for five-point gluon amplitudes and produces new compact expressions for scalar and graviton amplitudes, creating a path toward systematically finding hidden simplicity in quantum field theory at higher multiplicity and loop order.

Future directions include extending the approach to loop-level amplitudes and more complex theories; an interactive demonstration is available at [spinorhelicity.streamlit.app](https://spinorhelicity.streamlit.app). The paper is available as [arXiv:2408.04720](https://arxiv.org/abs/2408.04720).

Original Paper Details

Learning the Simplicity of Scattering Amplitudes

2408.04720

Clifford Cheung, Aurélien Dersy, Matthew D. Schwartz
