Not So Flat Metrics

Authors

Kit Fraser-Taliente, Thomas R. Harvey, Manki Kim

Abstract

In order to be in control of the $α'$ derivative expansion, geometric string compactifications are understood in the context of a large volume approximation. In this letter, we consider the reduction of these higher derivative terms, and propose an improved estimate on the large volume approximation using numerical Calabi-Yau metrics obtained via machine learning methods. Further to this, we consider the $α'^3$ corrections to numerical Calabi-Yau metrics in the context of IIB string theory. This correction represents one of several important contributions for realistic string compactifications -- alongside, for example, the backreaction of fluxes and local sources -- all of which have important consequences for string phenomenology. As a simple application of the corrected metric, we compute the change to the spectrum of the scalar Laplacian.

Concepts

The Big Picture

Imagine trying to describe a crumpled piece of paper by measuring only its total area. You’d know something, but you’d miss every wrinkle. For decades, string theorists have been doing something analogous: characterizing the hidden extra dimensions of space using a single global number, the total volume. Now, thanks to machine learning, they can finally look at the wrinkles.

String theory proposes that the universe has six extra spatial dimensions, curled up so tightly we can’t see them. These dimensions take the form of Calabi-Yau manifolds, highly constrained geometric shapes whose precise form determines the particles that exist, their masses, and how they interact. Getting the geometry right isn’t some side project. It is the entire program of connecting string theory to the real world.

The standard approach uses what’s called the large volume approximation: if the extra dimensions are large enough relative to the fundamental string length, corrections to the geometry become negligibly small. But this is a statement about averages. It says nothing about whether any particular region of the space is well-behaved. Fraser-Taliente, Harvey, and Kim ask a sharper question: is the approximation valid at every point across the manifold?

Using neural networks to compute precise numerical descriptions of the geometry, they find the answer is more nuanced than assumed. They also go further, computing the leading correction to the geometry directly.

Key Insight: Global volume conditions are too coarse to guarantee control of the α’ expansion. Machine-learned Calabi-Yau metrics enable pointwise checks and, for the first time, a direct numerical computation of the leading string-theoretic correction to the geometry itself.

How It Works

The physics starts with the α’ expansion, a perturbative series where α’ is proportional to the square of the string length. Think of it as an infinite recipe for the geometry: gravity first, then increasingly complicated correction terms involving higher powers of the curvature. Truncating this series is only valid when those higher terms are small.

The conventional check multiplies the total volume by the appropriate power of α’ and demands the result be large. But this treats curvature as uniform across the manifold, which is exactly the assumption the authors set out to test.

They attack the problem in two stages. First, they train a projective neural network (depth-4, width-64), an architecture that operates in the curved mathematical space where Calabi-Yau manifolds naturally live, on the Fermat quintic: the simplest non-trivial Calabi-Yau, defined by a degree-5 polynomial in four complex dimensions. Using the open-source cymetric framework with one million training points, they reach a Monge-Ampère loss of 8×10⁻⁴ in minutes.

The Monge-Ampère equation is the differential equation whose solution gives the Ricci-flat metric; the loss measures how well the neural network satisfies it. After training, the Ricci scalar, a local curvature measure that should equal exactly zero everywhere for the geometry to be physically valid, is distributed nearly normally around zero with standard deviation σ = 0.5. That confirms the metric’s accuracy.

With the numerical metric in hand, they compute two higher-curvature scalars pointwise across the manifold. The Kretschmann scalar Kr (the leading correction in Heterotic string theory) and the scalar Q (relevant for type IIB) both vary substantially from point to point. Some regions produce values large enough to potentially violate the α’ expansion even when the global volume condition is satisfied. This is where the traditional check breaks down: a manifold can pass the volume test and still harbor dangerous local curvature concentrations.

The second stage tackles the harder question. Instead of just diagnosing where things go wrong, the authors compute the α’³ correction to the Calabi-Yau metric, the first numerical result showing how the geometry deforms when leading string-theoretic effects are included. The leading correction to the 10-dimensional effective action involves a specific combination of four Riemann tensors, the J₀ term. Reducing this to the six-dimensional compact space generates a source term for the metric correction.

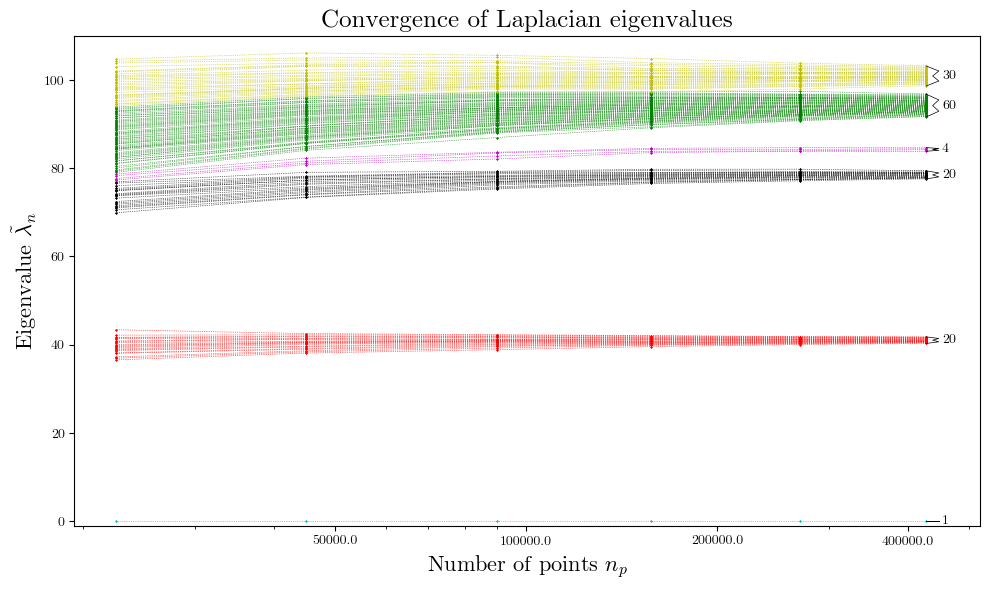

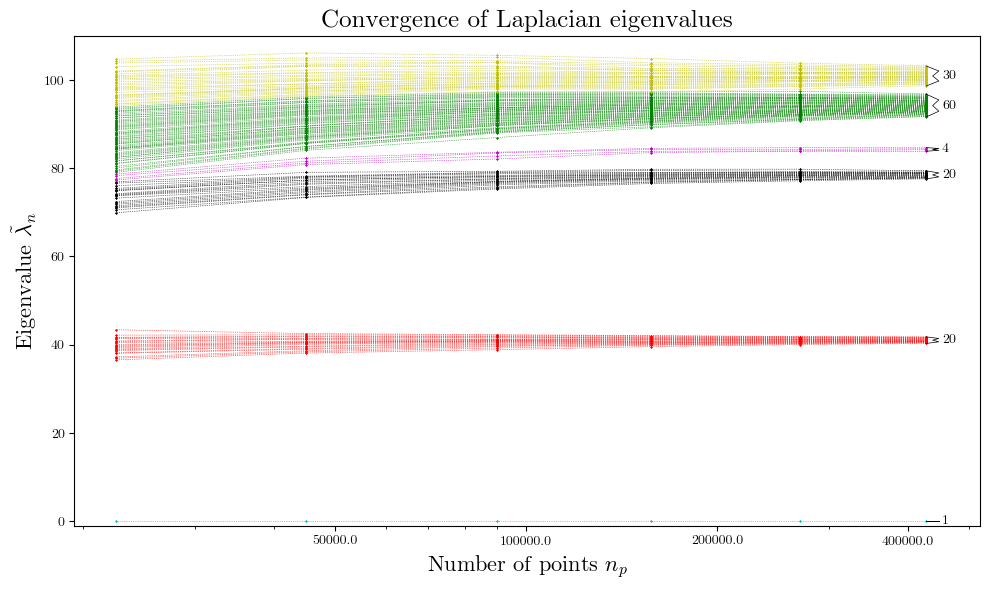

This source is not constant: it inherits all the pointwise variation of the curvature. The authors solve for the correction numerically by expanding in a basis of harmonic functions and inverting the scalar Laplacian, made tractable precisely because they have the numerical metric. The procedure:

- Compute the numerical Ricci-flat metric via neural network

- Evaluate the J₀ curvature source term pointwise

- Decompose into harmonic modes on the Calabi-Yau

- Invert the scalar Laplacian to find the metric perturbation

- Apply the corrected metric to compute observable shifts

As a concrete application, they calculate the shift to the spectrum of the scalar Laplacian: the set of allowed vibrational modes of the geometry, which govern scalar particle masses in the four-dimensional theory we observe. The correction is small (the expansion is controlled), but finite, calculable, and previously out of reach.

Why It Matters

String phenomenology, the field that tries to match string theory’s predictions to observable physics, has long relied on approximate methods because exact Calabi-Yau geometry was inaccessible. Machine learning has changed that. Numerical metrics, once exotic and slow to compute, are now routinely available.

This paper is one of the first to use those metrics not just for leading-order observables, but to push beyond leading order, into the regime where corrections matter. The pointwise validity check works as a general diagnostic: any string compactification claiming to describe realistic physics should pass it locally, not just globally.

The α’³ metric correction computed here is one piece of a larger puzzle. The full story requires including flux backreaction and local source contributions. But it is a piece that can now be handled numerically, and as computing power and ML architectures improve, extending this to more complex Calabi-Yau geometries becomes practical. The wrinkles are just beginning to be mapped.

Bottom Line: By combining machine-learned Calabi-Yau metrics with string-theoretic perturbation theory, this work replaces a decades-old global approximation with a rigorous local one and delivers the first numerical computation of the leading string correction to Calabi-Yau geometry in IIB theory.

IAIFI Research Highlights

This work applies neural network solutions to differential geometry problems in string theory, showing that ML-computed metrics are accurate enough to carry quantitative physics beyond the leading approximation.

The paper advances physics-informed neural networks for constrained geometric problems. Trained metrics reach the precision needed for perturbative calculations requiring sub-percent accuracy.

Computing pointwise curvature invariants and the first numerical α'³ correction to a Calabi-Yau metric in IIB string theory opens a concrete route toward more reliable string compactification phenomenology.

Future work will incorporate flux backreaction and local source contributions alongside the metric correction computed here, building toward fully consistent numerical string vacua. The paper is available as [arXiv:2411.00962](https://arxiv.org/abs/2411.00962).