PFGM++: Unlocking the Potential of Physics-Inspired Generative Models

Authors

Yilun Xu, Ziming Liu, Yonglong Tian, Shangyuan Tong, Max Tegmark, Tommi Jaakkola

Abstract

We introduce a new family of physics-inspired generative models termed PFGM++ that unifies diffusion models and Poisson Flow Generative Models (PFGM). These models realize generative trajectories for $N$ dimensional data by embedding paths in $N{+}D$ dimensional space while still controlling the progression with a simple scalar norm of the $D$ additional variables. The new models reduce to PFGM when $D{=}1$ and to diffusion models when $D{\to}\infty$. The flexibility of choosing $D$ allows us to trade off robustness against rigidity as increasing $D$ results in more concentrated coupling between the data and the additional variable norms. We dispense with the biased large batch field targets used in PFGM and instead provide an unbiased perturbation-based objective similar to diffusion models. To explore different choices of $D$, we provide a direct alignment method for transferring well-tuned hyperparameters from diffusion models ($D{\to} \infty$) to any finite $D$ values. Our experiments show that models with finite $D$ can be superior to previous state-of-the-art diffusion models on CIFAR-10/FFHQ $64{\times}64$ datasets, with FID scores of $1.91/2.43$ when $D{=}2048/128$. In class-conditional setting, $D{=}2048$ yields current state-of-the-art FID of $1.74$ on CIFAR-10. In addition, we demonstrate that models with smaller $D$ exhibit improved robustness against modeling errors. Code is available at https://github.com/Newbeeer/pfgmpp

Concepts

The Big Picture

Imagine sculpting a cloud of static into a photograph. You can’t snap your fingers; you need a path, a way to coax random noise into structured beauty one step at a time. That is the core problem of generative AI: learning to reverse chaos and produce coherent images, audio, proteins, or galaxies from pure randomness.

Two of the most powerful tools for this task are diffusion models (the technology behind image generators like DALL-E and Stable Diffusion) and a newer approach called Poisson Flow Generative Models (PFGM), which borrows its logic from classical electrostatics. In the PFGM picture, data points act like electric charges, and the generator follows the invisible paths traced by electric fields, traveling from a cloud of random noise back to structured data. Both approaches work well, but they’ve always been treated as separate inventions with different strengths and weaknesses.

Researchers at MIT have now shown that diffusion models and PFGMs are actually two endpoints of a single, deeper family. Their framework, PFGM++, gives scientists a new dial to turn, unlocking models that outperform the best diffusion models while also tolerating mistakes more gracefully.

Key Insight: PFGM++ introduces a single tunable parameter D that continuously interpolates between Poisson Flow Generative Models and diffusion models, letting practitioners find the sweet spot between resilience and peak performance for any given task.

How It Works

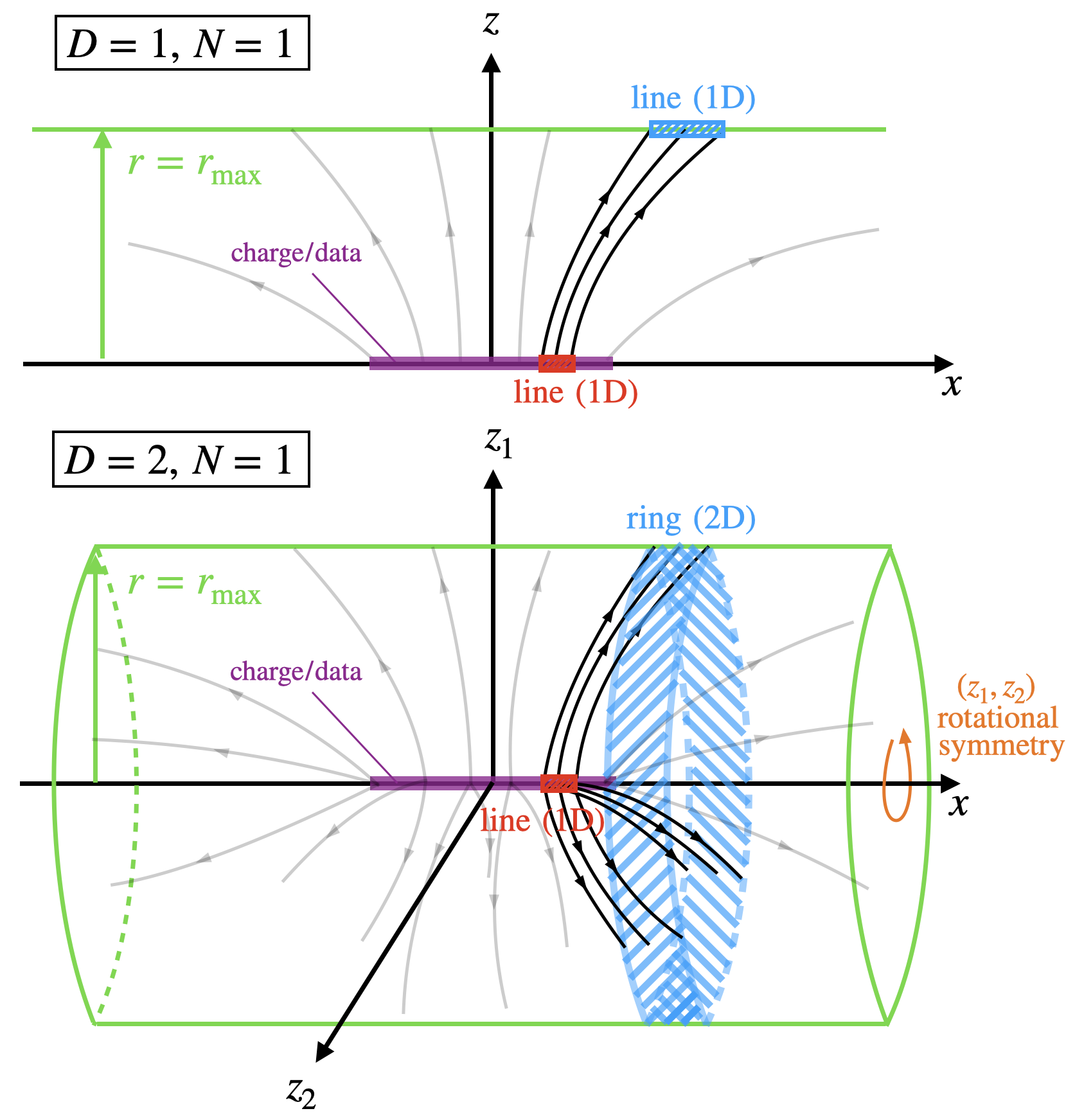

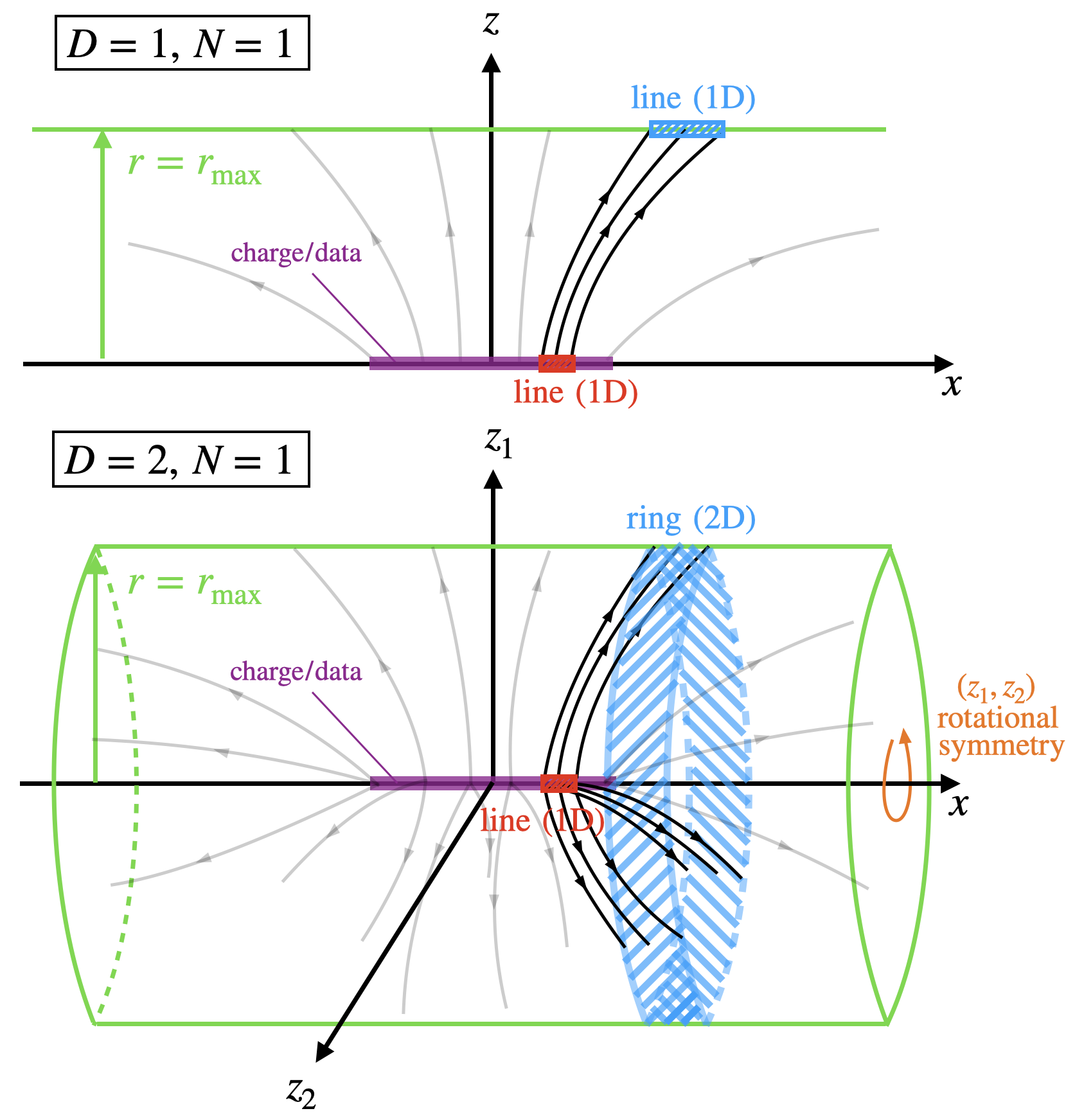

The trick lies in dimensionality. In standard PFGM, you take your N-dimensional data (say, a 64×64 image with thousands of pixel values) and embed it in N+1 dimensions by adding one extra coordinate z. Data points sit on the z=0 flat boundary like charges on a plate, and the electric field lines they generate trace paths from a random starting cloud back to the data.

PFGM++ generalizes this by adding not one, but D extra dimensions. Every data point receives a D-dimensional augmentation vector: a list of D extra numbers appended to its coordinates.

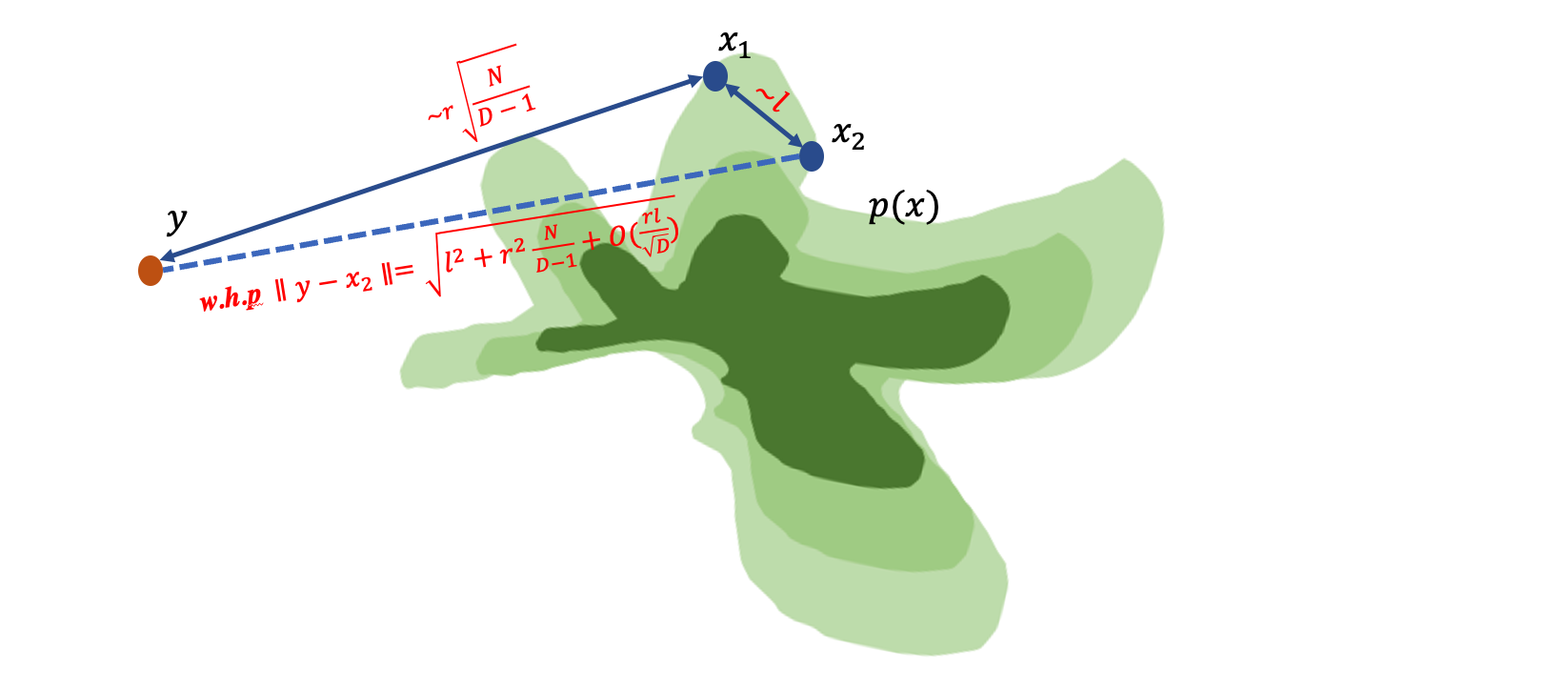

A key symmetry makes this tractable. The physics is invariant under rotations in the augmented space, so you don’t need to track all D extra numbers independently. Everything that matters collapses to a single number: the norm r = ‖z‖, the total length of the augmentation vector. This reduction transforms a potentially intractable high-dimensional problem back into a manageable one.

The result is a family of models parameterized entirely by D:

- D = 1: Recovers the original PFGM, with heavy-tailed distributions (spread-out, wide-ranging noise profiles) that tolerate errors well but are harder to learn.

- D → ∞: Recovers standard diffusion models, with tightly concentrated Gaussian (bell-curve-shaped) behavior that’s easy to optimize but fragile.

- Finite D (e.g., D = 128, 2048): A genuine middle ground that neither framework could previously reach.

The team also replaced PFGM’s original training objective with a cleaner one. The original required estimating electric field targets using very large batches, which introduced bias and computational overhead. PFGM++ instead derives a perturbation-based training objective: it teaches the model by asking it to “undo” small, controlled corruptions added to training examples, mathematically analogous to denoising score matching in diffusion models. The result is unbiased, efficient, and naturally compatible with conditional generation.

To make exploring different values of D practical, the researchers developed a direct alignment method. This procedure automatically transfers well-tuned hyperparameters (the configuration knobs governing how training runs) from a trained diffusion model (D→∞) to any finite D. Practitioners don’t have to start from scratch. They can borrow the hard-won intuitions from years of diffusion model tuning.

Why It Matters

On CIFAR-10, a standard image generation benchmark, PFGM++ with D=2048 achieves an FID score of 1.74 in the class-conditional setting. FID measures image quality; lower is better. On the FFHQ 64×64 face dataset, D=128 reaches an FID of 2.43. Both results beat the previous best diffusion models at their own game.

Raw performance isn’t the whole story, though. Smaller D values produce models that degrade more gracefully when things go wrong. The researchers tested three distinct failure modes: injecting controlled noise into network outputs during sampling, using large step sizes that accumulate rounding errors, and applying post-training quantization (compressing model weights to save memory at the cost of some precision). In every case, models with smaller D held up better. The reason is baked into the physics: a small D widens the distribution of noisy training sample norms, giving the model a broader tolerance band for imperfect predictions.

This unification opens up a real new axis of design. Rather than choosing between two fixed frameworks, researchers can now navigate a continuous spectrum, selecting the D that best matches their task difficulty, architecture quality, and tolerance for error. It also raises worthwhile theoretical questions: why does intermediate D outperform both extremes? What is it about finite-dimensional augmentation that unlocks extra generative capacity?

Bottom Line: PFGM++ doesn’t just improve state-of-the-art image generation. It reveals that diffusion models and Poisson flow models were always two faces of a single physical principle, and that the unexplored middle ground between them holds real scientific value.

IAIFI Research Highlights

PFGM++ unifies two physically-motivated generative frameworks, one rooted in thermodynamics and the other in electrostatics, by generalizing the augmentation dimension *D*. The result exposes a deeper mathematical structure connecting both approaches.

The framework achieves state-of-the-art FID scores of 1.74 on CIFAR-10 and 2.43 on FFHQ 64×64 while providing a principled, tunable tradeoff between resilience and performance that practitioners can exploit across diverse generation tasks.

Symmetry principles from classical field theory (rotational invariance of electric fields in augmented dimensions) directly simplify and power the machine learning here, showing how physics intuition can actively guide AI architecture design.

Future directions include applying PFGM++ to scientific domains like protein structure and cosmological field generation, where tolerance to model error is especially valuable. The paper is available as [arXiv:2302.04265](https://arxiv.org/abs/2302.04265), with code at github.com/Newbeeer/pfgmpp.