Physics-Informed Neural Networks for Modeling Galactic Gravitational Potentials

Authors

Charlotte Myers, Nathaniel Starkman, Lina Necib

Abstract

We introduce a physics-informed neural framework for modeling static and time-dependent galactic gravitational potentials. The method combines data-driven learning with embedded physical constraints to capture complex, small-scale features while preserving global physical consistency. We quantify predictive uncertainty through a Bayesian framework, and model time evolution using a neural ODE approach. Applied to mock systems of varying complexity, the model achieves reconstruction errors at the sub-percent level ($0.14\%$ mean acceleration error) and improves dynamical consistency compared to analytic baselines. This method complements existing analytic methods, enabling physics-informed baseline potentials to be combined with neural residual fields to achieve both interpretable and accurate potential models.

The Big Picture

Imagine trying to reconstruct the invisible skeleton of an entire galaxy, the vast scaffolding of dark matter that holds everything together, using only the motions of distant stars as clues. It’s like mapping a city’s underground infrastructure by watching how traffic flows on the surface. Reconstructing that skeleton remains one of the central unsolved challenges in astrophysics.

The gravitational field of a galaxy (a mathematical map of how strongly gravity pulls at every point in space) reveals where mass lives, including the dark matter we can’t see directly. Get it right, and you can trace the shape of the dark matter cloud surrounding the galaxy, understand how galaxies evolve, and probe fundamental physics. Get it wrong, and every downstream calculation inherits your errors.

Traditional approaches either use clean mathematical formulas that are simple and interpretable but too rigid to capture the full picture, or build up the gravity map as a weighted sum of overlapping mathematical shapes, which is flexible but computationally expensive and prone to mistaking noise for signal.

Charlotte Myers, Nathaniel Starkman, and Lina Necib at MIT have developed a new approach that combines the best of both worlds: a physics-informed neural network, built from the ground up to obey the laws of physics, that learns the galaxy’s gravitational field directly from data. The result achieves sub-percent accuracy while remaining physically interpretable. Its outputs correspond to real, recognizable physical quantities rather than opaque numbers.

Key Insight: By embedding physical constraints directly into the neural network’s training objective, rather than applying them as post-hoc corrections, the model learns galactic gravitational potentials that are both highly accurate (0.14% mean acceleration error) and physically self-consistent.

How It Works

The core constraint governing any gravitational model is a = −∇ϕ: a star’s acceleration must equal the negative gradient of the gravitational potential. A star is always pulled in the direction in which the potential falls off most steeply, so the force field and the potential must stay precisely in step. The researchers built this constraint into their model’s loss function. Every training step penalizes violations of this force-potential relation, so the network doesn’t just fit data; it is pushed toward solutions that respect the underlying physics.
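As a rough illustration of what penalizing the force-potential relation can look like, the sketch below computes a = −∇ϕ from a potential and measures its mismatch against target accelerations. It is a toy under stated assumptions, not the paper's implementation: a Plummer potential stands in for the network's output, central finite differences stand in for automatic differentiation, and the function names and unit choice (G = 1) are illustrative.

```python
import math

G = 1.0  # gravitational constant in arbitrary units (assumption for this toy)


def plummer_potential(pos, mass=1.0, scale=1.0):
    """Plummer potential, used only as a stand-in for a learned phi(x)."""
    x, y, z = pos
    return -G * mass / math.sqrt(x * x + y * y + z * z + scale * scale)


def plummer_acceleration(pos, mass=1.0, scale=1.0):
    """Closed-form a = -grad(phi) for the Plummer model (plays the role of data)."""
    r2 = sum(c * c for c in pos)
    factor = -G * mass / (r2 + scale * scale) ** 1.5
    return tuple(factor * c for c in pos)


def acceleration_from_potential(phi, pos, h=1e-5):
    """a = -grad(phi); central differences stand in for autodiff here."""
    acc = []
    for i in range(3):
        plus, minus = list(pos), list(pos)
        plus[i] += h
        minus[i] -= h
        acc.append(-(phi(plus) - phi(minus)) / (2.0 * h))
    return tuple(acc)


def acceleration_loss(phi, positions, target_accelerations):
    """Mean squared mismatch between -grad(phi) and the target accelerations."""
    total = 0.0
    for pos, target in zip(positions, target_accelerations):
        pred = acceleration_from_potential(phi, pos)
        total += sum((p - t) ** 2 for p, t in zip(pred, target))
    return total / len(positions)


positions = [(1.0, 0.0, 0.0), (0.5, -2.0, 1.0), (3.0, 4.0, 0.0)]
targets = [plummer_acceleration(p) for p in positions]

# A potential consistent with the targets gives a loss near zero ...
loss_consistent = acceleration_loss(plummer_potential, positions, targets)
# ... while a potential with 20% too much mass is penalized.
loss_mismatched = acceleration_loss(
    lambda p: plummer_potential(p, mass=1.2), positions, targets
)
```

In a real training loop the gradient of ϕ would come from automatic differentiation and the loss would be minimized over network weights; the finite-difference version is only meant to make the penalized quantity concrete.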

The architecture stacks several design choices on top of this physics-first foundation:

- Coordinate compactification: A compactified spherical coordinate system maps the effectively infinite extent of space onto a finite numerical range the network can handle cleanly, preventing precision loss at large radii where accelerations decay toward zero.

- Radial scaling: Rather than predicting the full potential directly, the network predicts a scaled residual, the small difference left over after a simple large-scale trend has been removed. This compresses the dynamic range and forces the network to focus on what actually needs learning.

- Analytic fusing: The model combines an analytic baseline, such as an NFW (Navarro-Frenk-White) profile (a standard description of dark matter distribution around a galaxy), with a neural residual field. The network doesn’t waste capacity relearning what we already understand. It focuses on what analytic models miss.
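The three design choices above can be sketched together in a few lines. Everything specific here is an assumption made for illustration: the compactification u = r / (r + r_s), the multiplicative form of the radial scaling, and the hand-written `toy_residual` that stands in for the trained network are not taken from the paper. Only the NFW potential formula itself is standard.

```python
import math

G = 1.0  # arbitrary units (assumption for this toy)


def compactify_radius(r, r_scale=1.0):
    """Map r in [0, inf) to u in [0, 1); one possible compactification."""
    return r / (r + r_scale)


def nfw_potential(r, m_scale=1.0, r_scale=1.0):
    """NFW potential phi(r) = -G M_s ln(1 + r/r_s) / r, M_s = 4 pi rho_0 r_s^3."""
    if r == 0.0:
        return -G * m_scale / r_scale  # finite central limit of the formula
    return -G * m_scale * math.log1p(r / r_scale) / r


def toy_residual(u, cos_theta, azimuth):
    """Hypothetical stand-in for the network: a small, bounded correction
    expressed in compactified spherical coordinates."""
    return 0.05 * u * (1.0 - u) * cos_theta * math.cos(azimuth)


def fused_potential(x, y, z, r_scale=1.0):
    """Analytic baseline plus a radially scaled residual (illustrative form)."""
    r = math.sqrt(x * x + y * y + z * z)
    u = compactify_radius(r, r_scale)
    cos_theta = z / r if r > 0.0 else 0.0
    azimuth = math.atan2(y, x)
    baseline = nfw_potential(r, r_scale=r_scale)
    # Radial scaling (assumed form): the residual is a fractional correction
    # to the baseline, so the network output stays of order unity at all radii.
    return baseline * (1.0 + toy_residual(u, cos_theta, azimuth))


point = (3.0, 4.0, 12.0)  # r = 13 in units of r_scale
baseline_value = nfw_potential(13.0)
fused_value = fused_potential(*point)
fractional_correction = fused_value / baseline_value - 1.0
```

With this form the residual can never change the potential by more than 1.25% of the baseline, which mirrors the idea that the network only needs to learn what the analytic profile misses.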

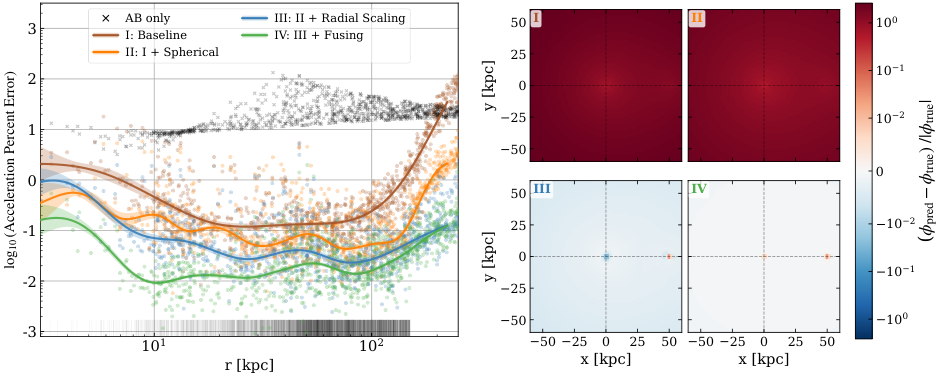

Figure 1 shows these design choices in action: each addition (spherical coordinates, radial scaling, analytic fusing) progressively reduces reconstruction error on the Milky Way–Large Magellanic Cloud system. The full model reaches below 1% acceleration error across the entire spatial range, where the analytic-only baseline struggles in the outer regions dominated by the LMC’s perturbations.

The team also tackled two problems standard neural networks can’t handle alone: uncertainty and time.

For uncertainty, they implemented a Bayesian Neural Network framework using NumPyro, treating network weights as probability distributions rather than fixed numbers. The model reports not just a best-guess potential but a full posterior distribution, which is what downstream scientific inference actually requires. Training proceeds in two stages: tight initial priors keep the analytic baseline dominant, then looser priors let the neural residual capture finer structure. This ensures the two components learn complementary roles.
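The effect of tightening and then loosening a prior can be seen in a one-parameter toy with a closed-form posterior. This is not the NumPyro model from the paper: it assumes a single residual amplitude `w`, a Gaussian prior on it, Gaussian noise, and made-up data, purely to show how the prior width controls how much the residual is allowed to explain.

```python
def posterior_residual_amplitude(features, residual_data, noise_sigma, prior_sigma):
    """Posterior mean and std of w for the toy model
        residual = w * feature + Gaussian noise,   w ~ Normal(0, prior_sigma^2),
    where `residual_data` is (observation - analytic baseline)."""
    precision = 1.0 / prior_sigma ** 2 + sum(f * f for f in features) / noise_sigma ** 2
    weighted_sum = sum(f * y for f, y in zip(features, residual_data)) / noise_sigma ** 2
    return weighted_sum / precision, precision ** -0.5


# Made-up data: the true residual amplitude is 0.3 (hypothetical values).
features = [0.2, 0.5, 1.0, 1.5]
residual_data = [0.3 * f for f in features]
noise_sigma = 0.1

# Stage 1 (assumed tight prior): w is held near zero, so the baseline dominates.
stage1_mean, stage1_std = posterior_residual_amplitude(
    features, residual_data, noise_sigma, prior_sigma=0.01
)

# Stage 2 (assumed looser prior): w is free to absorb the leftover structure.
stage2_mean, stage2_std = posterior_residual_amplitude(
    features, residual_data, noise_sigma, prior_sigma=1.0
)
```

In this toy the tight prior shrinks the amplitude to roughly 0.01 while the loose prior recovers a value close to 0.3, with a correspondingly wider posterior. A full Bayesian neural network has no closed form and would rely on sampling or variational inference instead.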

For time evolution, a neural ODE formulation encodes the gravitational field’s changes as a smooth, continuous trajectory. Rather than treating each snapshot independently, the model enforces physically smooth evolution, preventing unphysical jumps or discontinuities.
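A minimal sketch of the neural ODE idea follows, assuming a two-component latent state that parameterizes the time-dependent field and a tiny tanh network with fixed, hand-picked weights in place of a trained one. The weights, the state dimension, and the way time enters the network are all hypothetical; the fixed-step Runge-Kutta integrator is a generic choice, not necessarily the solver used in the paper.

```python
import math

# Fixed weights standing in for a trained network (hypothetical values).
W1 = [[0.5, -0.3], [0.2, 0.4], [-0.6, 0.1]]  # 3 hidden units x 2 state components
B1 = [0.0, 0.1, -0.1]
W2 = [[0.3, -0.2, 0.5], [-0.4, 0.6, 0.1]]    # 2 outputs x 3 hidden units


def vector_field(state, t):
    """dz/dt = f_theta(z, t): a tiny tanh MLP; time enters as a bias shift."""
    hidden = [
        math.tanh(sum(w * s for w, s in zip(row, state)) + b + 0.1 * t)
        for row, b in zip(W1, B1)
    ]
    return [sum(w * h for w, h in zip(row, hidden)) for row in W2]


def rk4_step(f, state, t, dt):
    """One classical fourth-order Runge-Kutta step."""
    k1 = f(state, t)
    k2 = f([s + 0.5 * dt * k for s, k in zip(state, k1)], t + 0.5 * dt)
    k3 = f([s + 0.5 * dt * k for s, k in zip(state, k2)], t + 0.5 * dt)
    k4 = f([s + dt * k for s, k in zip(state, k3)], t + dt)
    return [
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    ]


def integrate(f, state0, t0, t1, n_steps):
    """Return the trajectory [(t, state), ...] on a uniform time grid."""
    state, t = list(state0), t0
    dt = (t1 - t0) / n_steps
    trajectory = [(t, state)]
    for _ in range(n_steps):
        state = rk4_step(f, state, t, dt)
        t += dt
        trajectory.append((t, state))
    return trajectory


initial_state = [1.0, -0.5]
coarse = integrate(vector_field, initial_state, 0.0, 2.0, n_steps=50)
fine = integrate(vector_field, initial_state, 0.0, 2.0, n_steps=400)
```

Because the state at any time is obtained by integrating a bounded vector field, neighboring snapshots cannot differ by more than the step size times the largest possible rate of change, which is the sense in which the trajectory is smooth by design.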

Why It Matters

Galactic dynamics is flush with data. The Gaia mission has mapped positions and velocities of over a billion stars; surveys like H3 and APOGEE are adding chemical abundances. The bottleneck is no longer data. It’s modeling.

Analytic potentials are too rigid to capture the LMC’s tidal distortion of the Milky Way, substructure from ancient mergers, or the time-varying response of the dark matter halo to ongoing accretion. This framework targets that gap directly.

The implications reach beyond galactic astrophysics. Physics-informed machine learning, where domain knowledge is woven into architecture and training rather than applied as a patch, is gaining traction across physics. By showing that this approach works for galaxies, with their enormous spatial extent, complex multi-component structure, and sparse observations, Myers and colleagues expand the range of problems where physics-informed AI can make a real difference.

Open questions remain. How well does the neural ODE formulation scale to longer timescales and more complex merger histories? Can the Bayesian uncertainty estimates be reliably calibrated against real data, where the true potential is unknown?

Bottom Line: By marrying physical constraints with neural network flexibility and Bayesian uncertainty quantification, this framework achieves sub-percent accuracy on galactic potential reconstruction, providing a practical, interpretable tool for mapping dark matter with the next generation of stellar surveys.

IAIFI Research Highlights

This work sits squarely at the intersection of machine learning and gravitational astrophysics, embedding conservation laws and force-potential physics into neural network architectures to model galactic gravitational fields.

The paper introduces several design features for physics-informed neural networks, including coordinate compactification, radial scaling, analytic fusing, and two-stage Bayesian training, that improve both accuracy and calibration for scientific regression tasks with known physical structure.

Accurate, uncertainty-aware reconstruction of galactic gravitational potentials, including time-dependent perturbations from satellite galaxies like the LMC, sharpens one of the primary observational probes of dark matter distribution in the Milky Way.

Future work will apply this framework to real stellar survey data and extend the neural ODE formulation to longer-timescale dynamical evolution; the paper appeared at the Machine Learning and the Physical Sciences Workshop, NeurIPS 2025.

Original Paper Details

Physics-Informed Neural Networks for Modeling Galactic Gravitational Potentials

[arXiv:2602.01806](https://arxiv.org/abs/2602.01806)

Charlotte Myers, Nathaniel Starkman, Lina Necib
