Product Manifold Machine Learning for Physics

Authors

Nathaniel S. Woodward, Sang Eon Park, Gaia Grosso, Jeffrey Krupa, Philip Harris

Abstract

Physical data are representations of the fundamental laws governing the Universe, hiding complex compositional structures often well captured by hierarchical graphs. Hyperbolic spaces are endowed with a non-Euclidean geometry that naturally embeds those structures. To leverage the benefits of non-Euclidean geometries in representing natural data, we develop machine learning on $\mathcal P \mathcal M$ spaces, Cartesian products of constant curvature Riemannian manifolds. As a use case, we consider the classification of "jets", sprays of hadrons and other subatomic particles produced by the hadronization of quarks and gluons in collider experiments. We compare the performance of $\mathcal P \mathcal M$-MLP and $\mathcal P \mathcal M$-Transformer models across several possible representations. Our experiments show that $\mathcal P \mathcal M$ representations generally perform equal to or better than fully Euclidean models of similar size, with the most significant gains found for highly hierarchical jets and small models. We discover a significant correlation between the degree of hierarchical structure at a per-jet level and classification performance with the $\mathcal P \mathcal M$-Transformer in top tagging benchmarks. This is a promising result highlighting a potential direction for further improving machine learning model performance through tailoring geometric representation at a per-sample level in hierarchical datasets. These results reinforce the view of geometric representation as a key parameter in maximizing both performance and efficiency of machine learning on natural data.

The Big Picture

Imagine trying to draw a family tree on a flat piece of paper. The further back you go, the more ancestors you have, and branches multiply exponentially. Sooner or later, the page runs out of room. This is essentially the problem physicists face when teaching machines to understand particle jets: the geometry of the data doesn’t fit the geometry of the math.

When a quark or gluon is knocked loose in a collider like the Large Hadron Collider, it doesn’t travel alone. It breaks apart in a chain reaction, splitting into daughter particles, which split again and again, producing a tightly focused spray of hundreds of particles called a jet.

This process is deeply hierarchical, like a family tree written in subatomic ink. Standard machine learning operates in flat, Euclidean space (the ordinary geometry of straight lines and right angles), which struggles to represent branching, tree-like data. There’s a fundamental mismatch between the shape of the physics and the shape of the math.

A team from MIT’s Laboratory for Nuclear Science and IAIFI decided to fix that mismatch at its root. Rather than forcing jet data into flat geometry, they built models that operate on curved spaces tailored to the data’s natural shape, and found that matching geometry to structure makes a measurable difference.

Key Insight: By embedding jet data in curved, non-Euclidean spaces that accommodate hierarchical structure, this work achieves better classification performance with smaller models, especially for the most complex, deeply branching jets.

How It Works

The central concept is the product manifold (PM) space: a combination of several constant-curvature geometric spaces, mixed and matched to represent different aspects of the data. Think of it as blending a saddle-shaped surface (hyperbolic space, which expands exponentially and fits tree-like data), a flat plane (Euclidean space, efficient for local structure), and a sphere (positive curvature, suited to cyclic relationships). Each component handles different features of the jet.
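As a rough illustration of how such a combination can be measured, the sketch below computes a distance on a product of one hyperbolic, one Euclidean, and one spherical factor. It assumes the Poincaré ball model for the hyperbolic factor, unit vectors for the spherical factor, and the usual product-metric rule (square root of the summed squared factor distances). The function names and the three-factor layout are illustrative choices, not taken from the paper.

```python
import math

def euclidean_dist(x, y):
    """Ordinary straight-line distance (zero curvature)."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))

def poincare_dist(x, y, c=1.0):
    """Geodesic distance in the Poincare ball with curvature -c (c > 0)."""
    x2 = sum(a * a for a in x)
    y2 = sum(b * b for b in y)
    diff2 = sum((a - b) ** 2 for a, b in zip(x, y))
    arg = 1.0 + 2.0 * c * diff2 / ((1.0 - c * x2) * (1.0 - c * y2))
    return math.acosh(arg) / math.sqrt(c)

def sphere_dist(x, y, radius=1.0):
    """Great-circle distance between unit vectors x and y, scaled by the radius."""
    cos_angle = sum(a * b for a, b in zip(x, y))
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding error
    return radius * math.acos(cos_angle)

def product_dist(p, q):
    """Distance between points given as (hyperbolic, euclidean, spherical) parts."""
    d_h = poincare_dist(p[0], q[0])
    d_e = euclidean_dist(p[1], q[1])
    d_s = sphere_dist(p[2], q[2])
    # Product-metric rule: factor distances combine like Pythagoras.
    return math.sqrt(d_h ** 2 + d_e ** 2 + d_s ** 2)
```

Each factor keeps its own notion of distance, so a model can let the hyperbolic part carry the branching structure while the flat and spherical parts carry whatever fits them better.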

Hyperbolic space is the crucial piece. In this non-Euclidean geometry, available “room” grows exponentially with distance from any point. That mirrors how a branching tree grows: at each level, the number of branches multiplies. Fitting a deep tree into flat space requires enormous distortion. Hyperbolic space accommodates it almost for free.
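One way to make "room grows exponentially" concrete is to compare the circumference of a circle of radius $r$ in the two geometries. The comparison below assumes the hyperbolic plane of curvature $-1$, a choice made here purely for illustration:

$$
C_{\mathbb{E}}(r) = 2\pi r, \qquad C_{\mathbb{H}}(r) = 2\pi \sinh r \approx \pi e^{r} \quad \text{for } r \gg 1 .
$$

A binary tree of depth $n$ has $2^n$ leaves. Placing them at roughly unit spacing on a circle needs a radius that grows like $2^n$ in the Euclidean plane, but only about $n \ln 2$ in the hyperbolic plane, which is linear in the depth of the tree.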

The researchers formalize this using Gromov-δ hyperbolicity, a mathematical measure of how tree-like a data structure actually is. Small δ means highly tree-like; large δ means more tangled and complex.
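A minimal sketch of one common definition, the four-point condition, is shown below. It takes a matrix of pairwise distances and returns the largest violation over all quadruples of points. How the paper builds the distance matrix for a jet, and whether it normalizes δ, is not stated here, so the input format and the function name `gromov_delta` are assumptions.

```python
from itertools import combinations

def gromov_delta(dist):
    """Four-point Gromov delta of a finite metric space.

    dist[i][j] is the distance between points i and j. For each quadruple,
    form the three pairwise-sum pairings; delta is half the gap between
    the two largest sums, maximized over all quadruples.
    """
    delta = 0.0
    for x, y, z, w in combinations(range(len(dist)), 4):
        sums = sorted([
            dist[x][y] + dist[z][w],
            dist[x][z] + dist[y][w],
            dist[x][w] + dist[y][z],
        ])
        delta = max(delta, (sums[2] - sums[1]) / 2.0)
    return delta

# A path graph is a tree, so its shortest-path metric has delta = 0.
path = [[abs(i - j) for j in range(4)] for i in range(4)]
# A 4-cycle is the smallest non-tree example here.
cycle = [[0, 1, 2, 1], [1, 0, 1, 2], [2, 1, 0, 1], [1, 2, 1, 0]]

print(gromov_delta(path))   # 0.0
print(gromov_delta(cycle))  # 1.0
```

The brute-force loop scales as the fourth power of the number of points, which is fine for a toy example but would likely need sampling or a faster algorithm for large inputs.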

The team built two architectures that operate natively in curved spaces:

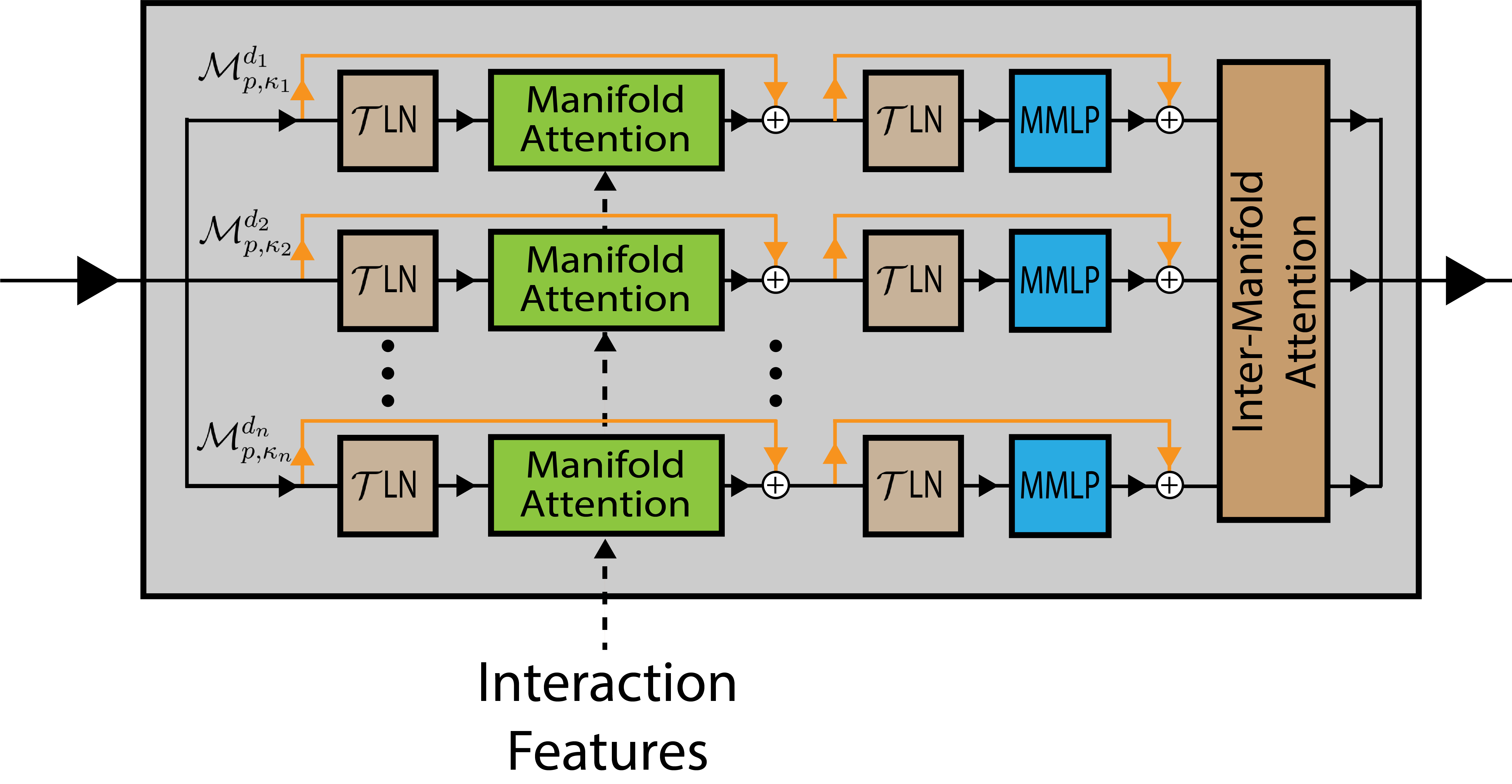

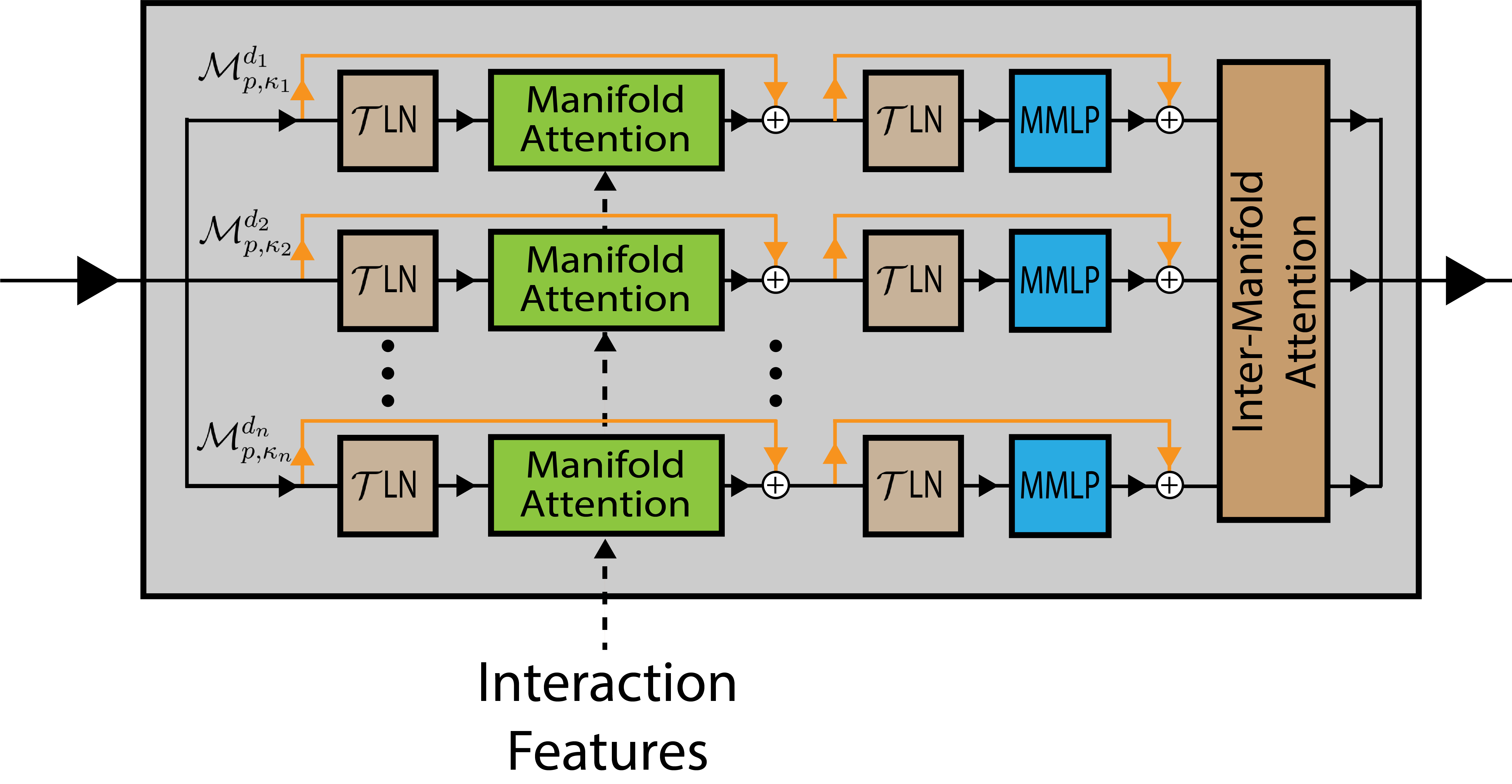

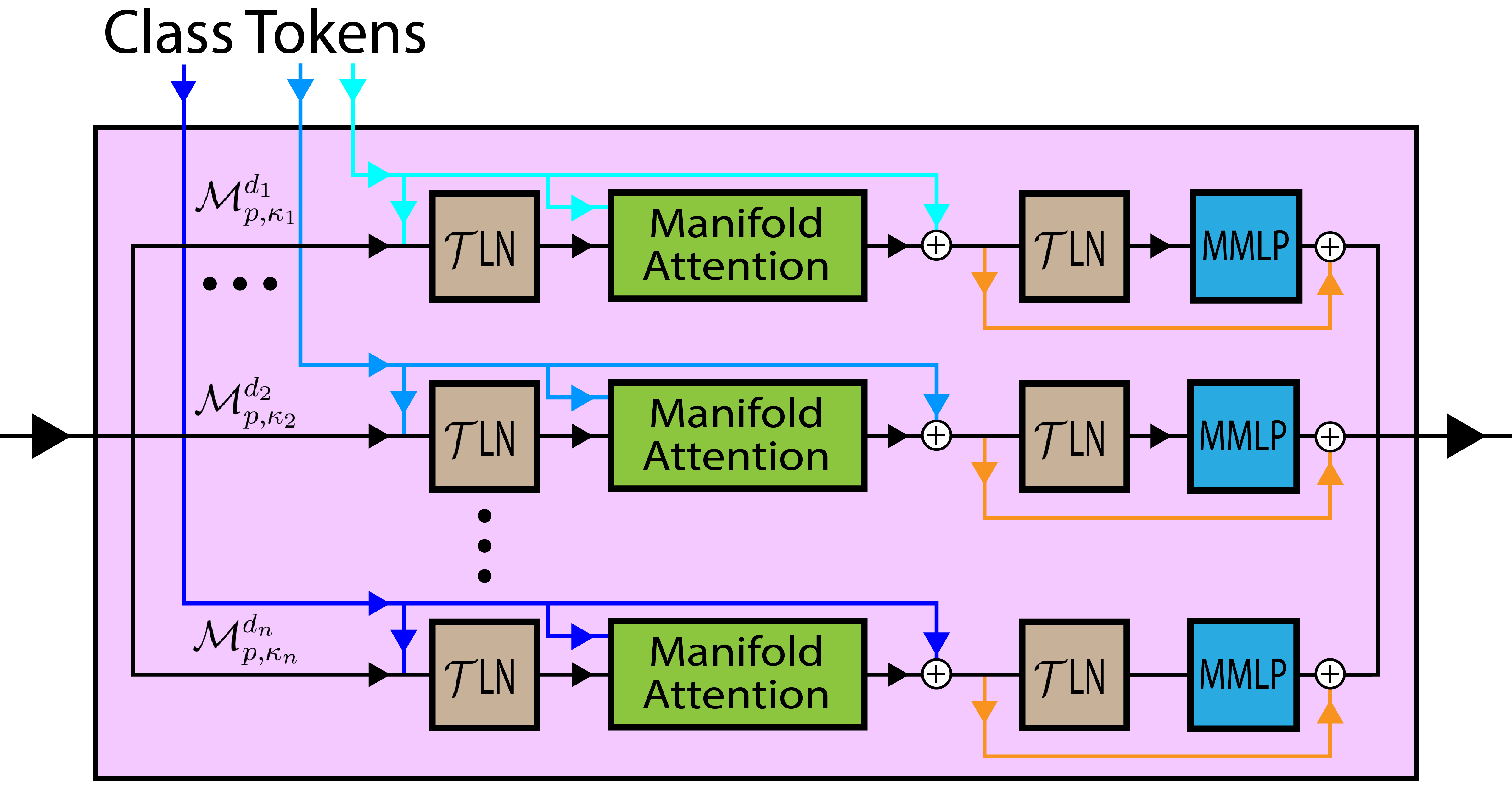

- PM-MLP: A multilayer perceptron where each layer’s operations are generalized to product manifolds, using non-Euclidean analogs of addition, distance, and aggregation.

- PM-Transformer: A transformer architecture (the same family behind large language models) extended to operate on product manifold representations. It processes each particle individually, then aggregates into a jet-level representation.

Both were benchmarked on JetClass, a large-scale dataset of simulated jets spanning ten classes: top quarks, Higgs bosons decaying to quark pairs, simple quark/gluon jets, and more. The experiments systematically varied PM combinations, from pure Euclidean to pure hyperbolic to mixed, to find which geometry worked best for which jet type.

PM representations matched or outperformed Euclidean models of similar parameter count, with the largest gains appearing for the most hierarchical jet types and for small models. Under computational constraints, curved space does more work with fewer parameters.

Why It Matters

The real payoff isn’t that PM spaces help on average. It’s knowing when they help. The researchers measured the Gromov-δ hyperbolicity of individual jets and found, in top tagging benchmarks, a significant correlation between a jet’s degree of hierarchical structure and PM-Transformer classification performance: jets with lower δ (more tree-like, more hierarchical) tend to be classified more accurately. Where the geometry of the model matches the geometry of the data, the model does better.
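A per-jet analysis of this kind could be organized as in the sketch below, which groups jets by their δ value and reports the classification accuracy in each group. The binning scheme, the function name, and the input format are assumptions made for illustration. The paper's actual statistical procedure is not described here.

```python
def accuracy_by_delta_bin(deltas, correct, edges):
    """Classification accuracy per Gromov-delta bin.

    deltas:  per-jet delta values
    correct: per-jet booleans (True if the jet was classified correctly)
    edges:   ascending bin edges; bin i covers [edges[i], edges[i + 1])
    Returns one accuracy per bin, or None for an empty bin.
    """
    n_bins = len(edges) - 1
    hits = [0] * n_bins
    totals = [0] * n_bins
    for delta, ok in zip(deltas, correct):
        for i in range(n_bins):
            if edges[i] <= delta < edges[i + 1]:
                totals[i] += 1
                hits[i] += int(ok)
                break  # each jet falls in at most one bin
    return [h / t if t else None for h, t in zip(hits, totals)]
```

A downward trend in accuracy from the low-δ bins to the high-δ bins would be the kind of pattern the text describes.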

This opens a provocative door. If the benefit of non-Euclidean geometry tracks the actual hierarchical structure of individual data points, future models might adapt their geometry on the fly, choosing or weighting different manifold components based on how tree-like each jet is. That would be a fundamentally new kind of inductive bias: not architecture or training data, but the shape of the mathematical space itself as a tunable parameter per input.

The idea generalizes far beyond particle physics. Biological data (protein interaction networks, evolutionary trees, neural connectomes) is hierarchical too, as are social networks and language structures. Any domain where data branches and stratifies could benefit from this geometric matching approach. Jets just happen to be a particularly clean test case.

Bottom Line: Matching the geometry of a machine learning model to the hierarchical geometry of physical data isn’t just mathematically elegant. It measurably improves performance, and the improvement is largest exactly where it matters most: deeply branching jets and tight computational budgets.

IAIFI Research Highlights

This work connects differential geometry and Riemannian manifold theory to deep learning for experimental particle physics, turning abstract mathematical structure into concrete performance gains on one of collider physics' central classification challenges.

The PM-Transformer shows that transformer architectures can be rigorously extended to non-Euclidean product manifolds without losing generality, providing a framework applicable to any hierarchical dataset, from particle jets to biological networks.

Better jet classification directly improves sensitivity in searches for new physics at the LHC, including Higgs boson measurements and dark matter production signals, by more accurately distinguishing signal jets from QCD background.

The per-sample correlation between classification accuracy and Gromov-δ hyperbolicity motivates future adaptive-geometry models that tune their manifold representation per input. The full paper is available at [arXiv:2412.07033](https://arxiv.org/abs/2412.07033).