QCD Theory meets Information Theory

Authors

Benoît Assi, Stefan Höche, Kyle Lee, Jesse Thaler

Abstract

We present a novel technique to incorporate precision calculations from quantum chromodynamics into fully differential particle-level Monte-Carlo simulations. By minimizing an information-theoretic quantity subject to constraints, our reweighted Monte Carlo incorporates systematic uncertainties absent in individual Monte Carlo predictions, achieving consistency with the theory input in precision and its estimated systematic uncertainties. Our method can be applied to arbitrary observables known from precision calculations, including multiple observables simultaneously. It generates strictly positive weights, thus offering a clear path to statistically powerful and theoretically precise computations for current and future collider experiments. As a proof of concept, we apply our technique to event-shape observables at electron-positron colliders, leveraging existing precision calculations of thrust. Our analysis highlights the importance of logarithmic moments of event shapes, which have not been previously studied in the collider physics literature.

Concepts

The Big Picture

Imagine trying to predict the weather using two different tools: a supercomputer that gives precise forecasts for specific locations, and a full atmospheric simulation that tracks every cloud and raindrop but runs on cruder physics. Neither alone is sufficient. Combining them without introducing artifacts is surprisingly hard. Particle physicists face exactly this problem at colliders like the LHC.

High-energy collider physics relies on two theoretical tools that don’t easily speak to each other. Monte Carlo event generators (named for the casino, because they work by running millions of randomized simulations) recreate the full chaotic particle spray from a collision in rich spatial detail. But comprehensiveness costs precision. Analytic QCD calculations, on the other hand, achieve extraordinary accuracy for specific measurable collision properties, like how “round” or “jet-like” the particle spray looks, but can’t capture the full particle-level picture that experimenters actually record.

Stitching these tools together has been a central challenge of collider theory for decades. Standard “matching and merging” algorithms produce a persistent artifact: events with negative statistical weights, where individual data points subtract from totals rather than add. They’re computationally wasteful, physically awkward, and hard to interpret.

A team from Fermilab and MIT’s Center for Theoretical Physics has found a cleaner path. Their technique borrows a concept from information theory (the mathematics behind data compression and machine learning) to embed precision QCD calculations directly into Monte Carlo simulations. No negative weights, no phase-space slicing, and uncertainty propagation comes built in.

Key Insight: By minimizing the information-theoretic distance between a Monte Carlo simulation and a precision QCD calculation, subject to carefully chosen constraints, the researchers produce reweighted events that are both fully differential and theoretically precise. Strictly positive weights are guaranteed by construction.

How It Works

The mathematical core of the method is the Kullback-Leibler (KL) divergence, a standard measure from information theory of how much one probability distribution differs from another. Given a Monte Carlo prior q and a precision QCD target r, the team asks: find the distribution p closest to q while satisfying constraints derived from the precision calculation.

This constrained optimization has an elegant closed-form solution. Each Monte Carlo event receives a weight:

w(Φ) = exp(−Σ λⱼ gⱼ(v(Φ)))

The λⱼ are Lagrange multipliers found numerically using the Adam optimizer (the same algorithm that trains modern neural networks), and the gⱼ are basis functions encoding what the precision calculation knows. Because the weights are exponentials, they are always positive. The negative-weight problem vanishes by construction.

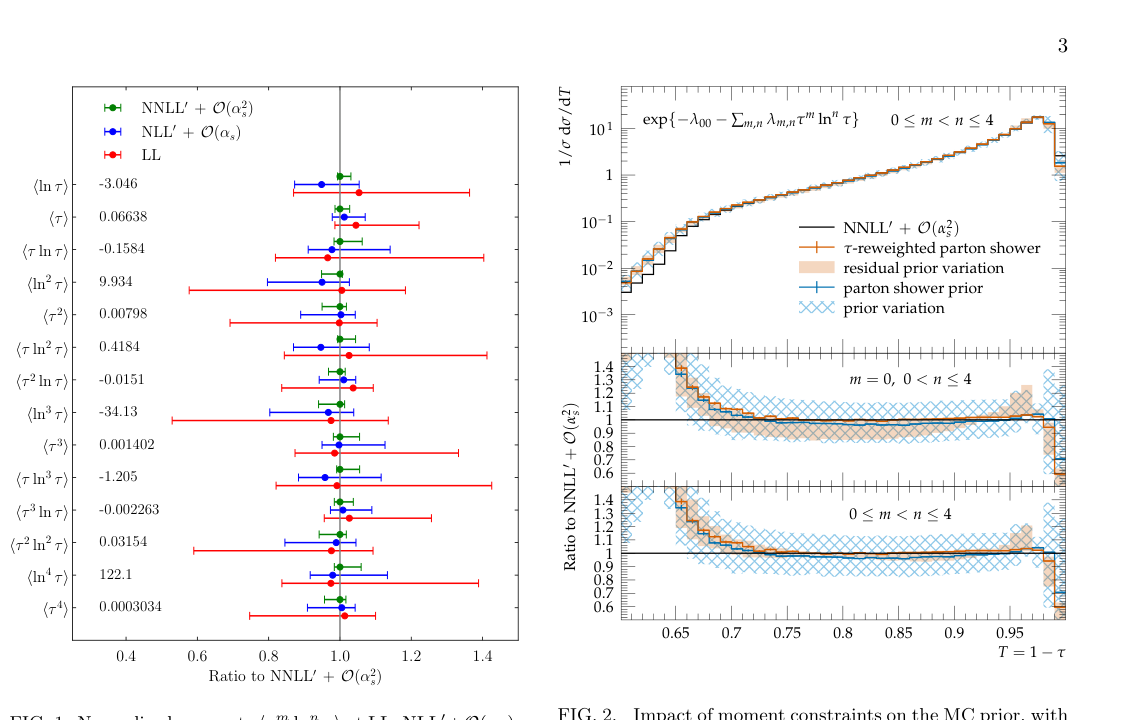

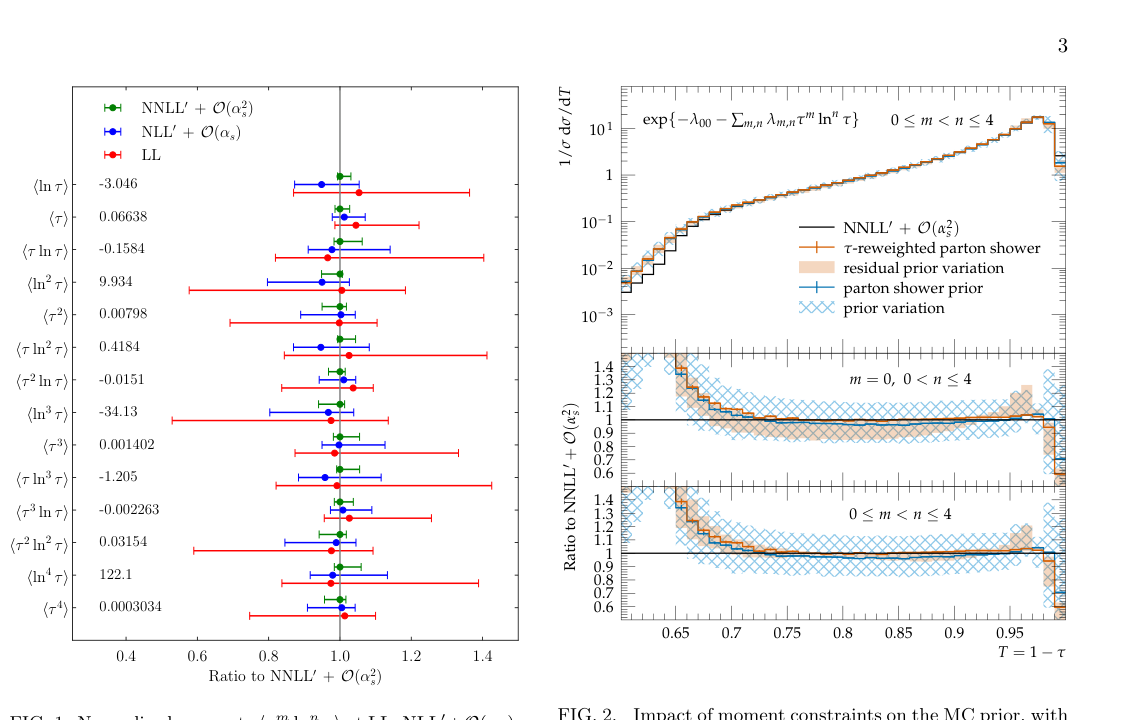

Choosing the right basis functions is where physics intuition meets information theory. Precision QCD calculations for event shapes take a characteristic log-exponentiated form, dominated by “Sudakov logarithms”: towers of logarithms that arise from quantum interference when particles radiate at low energies. This structure, familiar from analytic resummation theory, suggests a natural set of constraints:

- Use logarithmic moments (powers of ln(v)) as basis functions

- If a precision calculation says the mean of ln²(thrust) equals some number, the reweighting enforces exactly that

- Multiple observables can be constrained simultaneously within a single optimization, without contradiction

The authors point out that logarithmic moments of event shapes have not been previously studied in the collider physics literature, despite being directly computable from existing precision calculations.

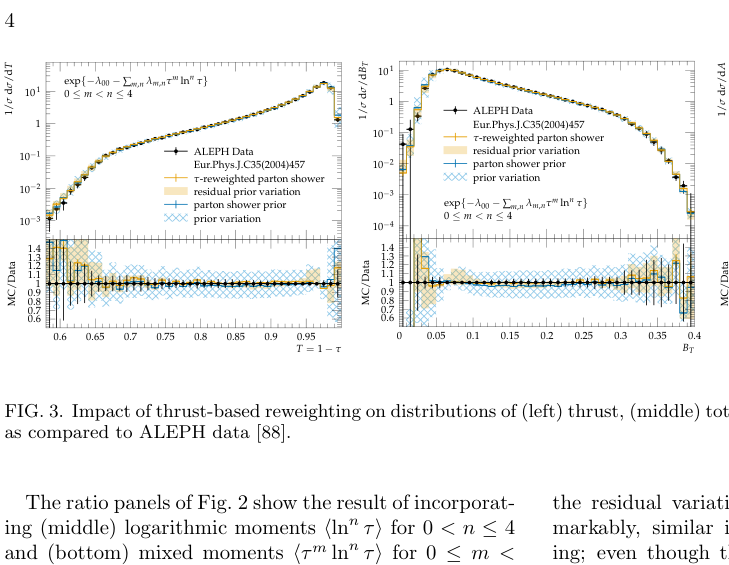

The reweighted MC sample encodes everything the precision calculations know about each observable (thrust, jet broadening, and others) simultaneously, in a single consistent event set.

Why It Matters

The practical stakes are real. At the LHC’s high-luminosity phase and proposed future colliders, theoretical systematic uncertainties are becoming the primary bottleneck. Today’s matching schemes generate significant fractions of negative-weight events, which are computationally expensive to cancel and corrosive to statistical power. Strictly positive weights mean every simulated event contributes constructively.

The conceptual payoff is just as important. By framing the problem as information-theoretic optimization, the method naturally propagates systematic uncertainties from the precision calculation into the Monte Carlo. Varying the input moments and their uncertainties directly changes the output weights and their spread. Theorists know exactly what they are assuming and where their uncertainties come from.

The proof-of-concept focuses on thrust in electron-positron collisions, a clean setting where precision QCD calculations are mature and well-tested. But the framework is general: any observable computable analytically or numerically can become a constraint. Future applications could target LHC processes, incorporate electroweak corrections, or constrain dozens of observables at once.

Bottom Line: This work recasts a decades-old problem in collider physics as an information-theoretic optimization, delivering positive-weight Monte Carlo events that genuinely reflect the precision of state-of-the-art QCD calculations. It’s a combination the field has wanted for a long time.

IAIFI Research Highlights

This work merges advanced QCD calculations with information-theoretic optimization from machine learning. The mathematical language of AI (KL divergence, moment matching, gradient-based optimization) turns out to solve a longstanding problem in fundamental physics simulation.

The method applies the Adam optimizer and principles from maximum entropy inference in a physics context, showing that AI training techniques can work as precision scientific tools, not just data-fitting engines.

By enabling strictly positive-weight Monte Carlo simulations matched to the precision of analytic QCD calculations, this technique addresses one of the central bottlenecks limiting theoretical predictions for current and future collider experiments.

Future extensions will target multi-observable constraints, LHC processes, and electroweak corrections. The full methodology is detailed in [arXiv:2501.17219](https://arxiv.org/abs/2501.17219).