Sampling QCD field configurations with gauge-equivariant flow models

Authors

Ryan Abbott, Michael S. Albergo, Aleksandar Botev, Denis Boyda, Kyle Cranmer, Daniel C. Hackett, Gurtej Kanwar, Alexander G. D. G. Matthews, Sébastien Racanière, Ali Razavi, Danilo J. Rezende, Fernando Romero-López, Phiala E. Shanahan, Julian M. Urban

Abstract

Machine learning methods based on normalizing flows have been shown to address important challenges, such as critical slowing-down and topological freezing, in the sampling of gauge field configurations in simple lattice field theories. A critical question is whether this success will translate to studies of QCD. This Proceedings presents a status update on advances in this area. In particular, it is illustrated how recently developed algorithmic components may be combined to construct flow-based sampling algorithms for QCD in four dimensions. The prospects and challenges for future use of this approach in at-scale applications are summarized.

Concepts

The Big Picture

Imagine trying to understand a crowd by sampling random people on the street. If the crowd moves freely, you get a representative sample quickly. But if it’s packed into tight clusters, mosh pits, and nobody moves between them, you could stand in one cluster all day and never learn about the others. This is one of the central headaches in studying the strong nuclear force.

Quantum Chromodynamics (QCD), the theory of quarks and gluons that holds atomic nuclei together, is the most computationally demanding arena in fundamental physics. To extract predictions from QCD, physicists simulate it on a grid of spacetime points called a lattice, sampling from an enormous collection of possible field states called “configurations.” Traditional sampling algorithms suffer from two crippling problems.

The first is critical slowing-down: as the lattice grows, the sampler must take increasingly tiny steps, making it agonizingly slow. The second is topological freezing: the simulation gets permanently stuck in one region of configuration space, that single mosh pit, and never escapes. A collaboration between MIT, DeepMind, NYU, and other institutions has now shown, for the first time, that machine learning can construct a practical sampling algorithm for full four-dimensional QCD.

Key Insight: By training neural networks that respect the fundamental symmetries of QCD, researchers have built a flow-based sampler that can generate lattice QCD configurations without getting trapped, targeting two of the worst bottlenecks in first-principles nuclear and particle physics calculations.

How It Works

The core idea comes from normalizing flows, a class of machine learning algorithms that learn to transform a simple, easy-to-sample distribution into a complicated target distribution. Think of it like a GPS that warps a flat map so that each uniform grid square corresponds to an equal-probability region of real, mountainous terrain. You draw from the flat map (easy), and the transformation gives you a sample from the terrain (hard).

In lattice QCD, the “terrain” is the QCD probability distribution, exponentially complex, with sharp peaks and topological barriers. The flow model learns a transformation that maps simple random noise into field configurations distributed approximately according to the QCD action. These proposed configurations then feed into a Metropolis accept-reject step, a standard statistical technique that corrects any remaining approximation error by randomly accepting or rejecting each proposal, which guarantees exact, unbiased results.

The real engineering challenge is gauge symmetry, a mathematical rule stating that certain field transformations must leave all physical predictions unchanged. A naive neural network would break this symmetry and produce garbage. The team’s solution: gauge-equivariant coupling layers, network building blocks that transform field configurations in a way that automatically obeys gauge symmetry. The architecture stacks 48 such layers, each parametrized by convolutional networks with four layers and 32 channels.

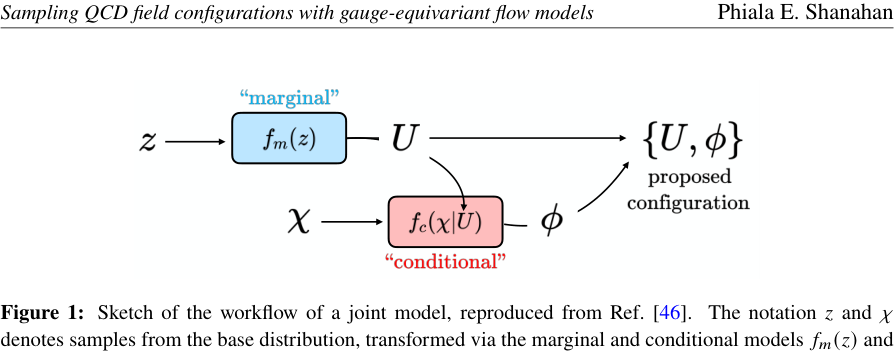

QCD also involves fermions (quarks), which add another layer of complexity. The team handled this with a two-part architecture:

- A marginal flow model that generates gauge field (gluon) configurations from a simple uniform random starting distribution

- A conditional flow model that generates pseudofermion fields, auxiliary mathematical variables encoding quark quantum effects, conditioned on those gauge configurations

Drawing multiple pseudofermion samples for each fixed gauge background and averaging the resulting weights yields increasingly precise estimates of the Dirac operator determinant. This quantity encodes quark-gluon interactions and is notoriously expensive to compute.

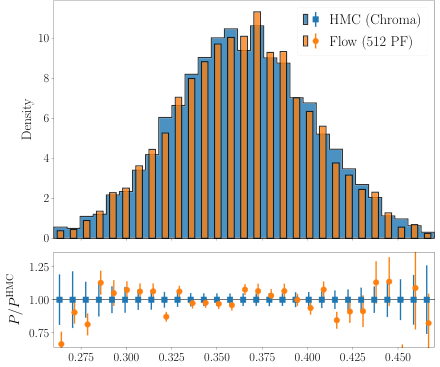

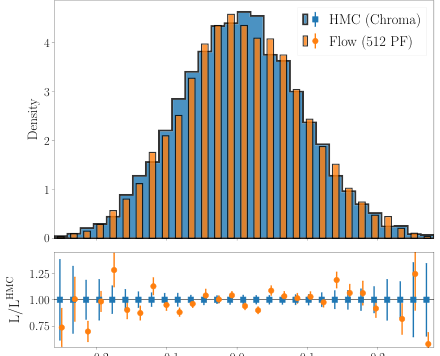

The first numerical demonstration used a modest 4⁴ lattice (four sites in each of four spacetime dimensions) with two fermion flavors and parameters far from the physically realistic regime. The goal was proof-of-principle: show that all the algorithmic pieces fit together and the sampler actually works.

Why It Matters

The two diseases this approach targets are not academic nuisances. As lattice volumes grow toward the physically relevant regime, with larger lattices, finer spacings, and lighter quark masses, traditional algorithms scale catastrophically. Topological freezing in particular can invalidate results for quantities sensitive to the global topology of the gauge field, and that includes many physically important observables.

Flow-based methods offer a fundamentally different scaling behavior. Because the flow generates independent samples rather than evolving through configuration space step by step, it sidesteps the random-walk dynamics that cause critical slowing-down. A well-trained flow can, in principle, move probability weight between topological sectors directly, escaping freezing entirely.

The challenge now is scaling. Moving from a tiny 4⁴ proof-of-principle to the large lattices (32⁴ or bigger) needed for precision physics will require more expressive architectures and significant computational resources. But the roadmap exists, and the pieces work.

Bottom Line: This work assembles gauge-equivariant and fermionic flow components into the first complete, working flow-based sampler for four-dimensional lattice QCD, laying the groundwork for a new generation of nuclear and particle physics calculations.

IAIFI Research Highlights

This work brings normalizing flow architectures from machine learning together with the symmetry constraints of quantum field theory, showing that physics-aware AI can crack problems inaccessible to either field on its own.

Gauge-equivariant coupling layers and joint marginal-conditional flow architectures are a concrete step forward in building neural networks that respect continuous non-Abelian symmetry groups, with applications likely extending well beyond lattice QCD.

By building a flow-based sampler for four-dimensional QCD with dynamical fermions, this work opens a path toward eliminating critical slowing-down and topological freezing, two of the most severe bottlenecks in lattice field theory.

Scaling these methods to physically realistic lattice volumes and parameters is the next major hurdle. The paper, presented at LATTICE2022 and available as [arXiv:2208.03832](https://arxiv.org/abs/2208.03832), lays out both the prospects and the remaining challenges.