Simulation-based Inference for Exoplanet Atmospheric Retrieval: Insights from winning the Ariel Data Challenge 2023 using Normalizing Flows

Authors

Mayeul Aubin, Carolina Cuesta-Lazaro, Ethan Tregidga, Javier Viaña, Cecilia Garraffo, Iouli E. Gordon, Mercedes López-Morales, Robert J. Hargreaves, Vladimir Yu. Makhnev, Jeremy J. Drake, Douglas P. Finkbeiner, Phillip Cargile

Abstract

Advancements in space telescopes have opened new avenues for gathering vast amounts of data on exoplanet atmosphere spectra. However, accurately extracting chemical and physical properties from these spectra poses significant challenges due to the non-linear nature of the underlying physics. This paper presents novel machine learning models developed by the AstroAI team for the Ariel Data Challenge 2023, where one of the models secured the top position among 293 competitors. Leveraging Normalizing Flows, our models predict the posterior probability distribution of atmospheric parameters under different atmospheric assumptions. Moreover, we introduce an alternative model that exhibits higher performance potential than the winning model, despite scoring lower in the challenge. These findings highlight the need to reevaluate the evaluation metric and prompt further exploration of more efficient and accurate approaches for exoplanet atmosphere spectra analysis. Finally, we present recommendations to enhance the challenge and models, providing valuable insights for future applications on real observational data. These advancements pave the way for more effective and timely analysis of exoplanet atmospheric properties, advancing our understanding of these distant worlds.

Concepts

The Big Picture

Imagine trying to figure out the ingredients of a recipe by smelling the exhaust from a restaurant’s kitchen, from across the street, in a noisy city, without ever tasting the food. That’s roughly what astronomers face when analyzing exoplanet atmospheres. When a planet crosses its star, a sliver of starlight filters through its atmosphere, picking up the chemical fingerprints of water vapor, carbon dioxide, methane, and more. The resulting spectrum is faint, noisy, and molecular signals overlap in ways that make them hard to untangle.

The traditional approach, atmospheric retrieval, relies on statistical algorithms that test millions of possible atmospheric configurations and use probability calculations to zero in on the most likely answer. These methods work, but they’re slow. Achingly slow when the European Space Agency’s Ariel mission is expected to beam back spectra from roughly 1,000 exoplanets starting in 2029.

A team from the AstroAI group at the Harvard & Smithsonian Center for Astrophysics, with collaborators at MIT and beyond, built a faster solution and proved it works by winning a global machine learning competition against 293 teams.

Key Insight: By training a neural network called a Normalizing Flow to mimic the output of slow Bayesian algorithms, the AstroAI team achieved state-of-the-art exoplanet atmospheric retrieval in a fraction of the time, making it feasible to analyze thousands of planetary atmospheres at scale.

How It Works

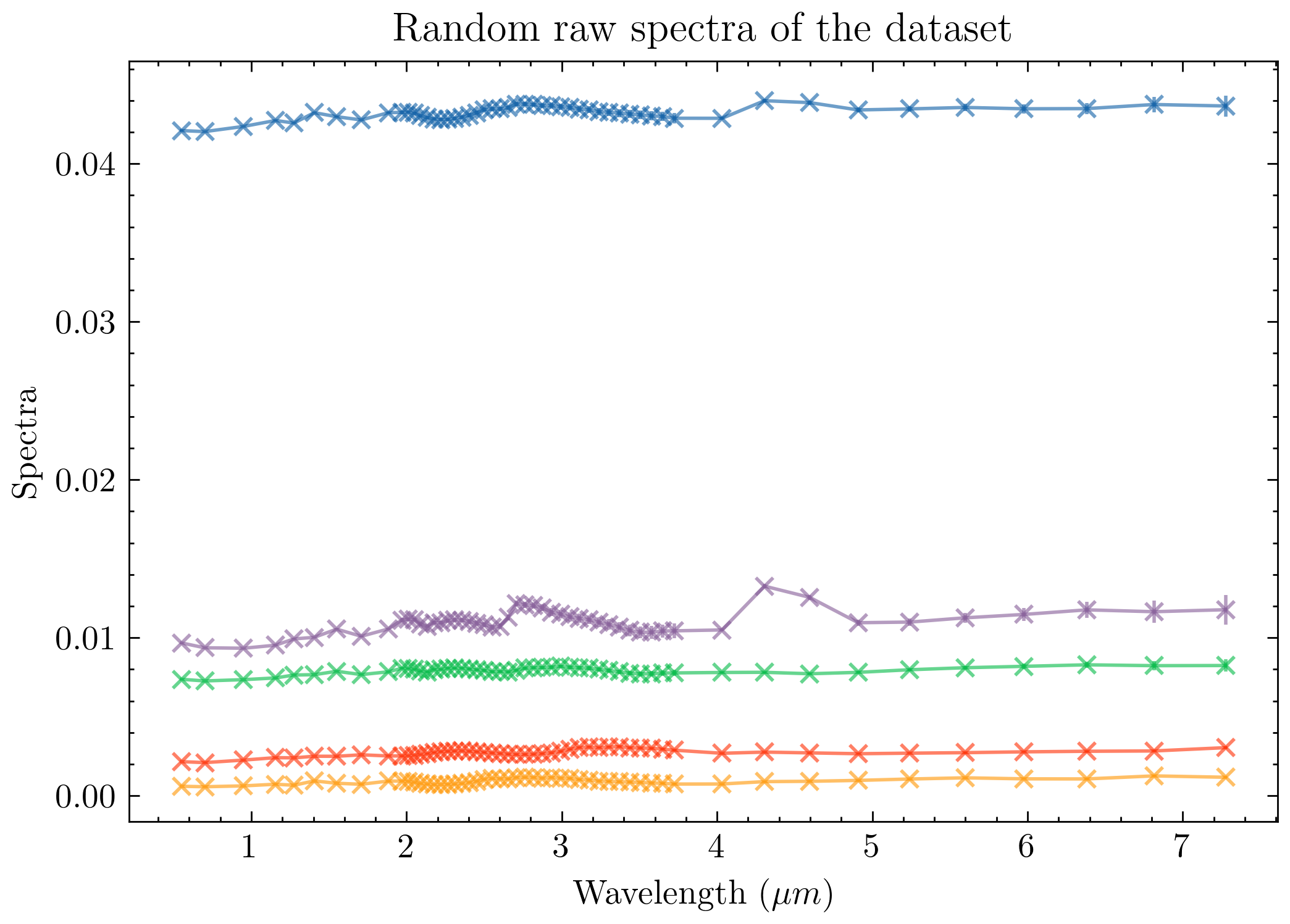

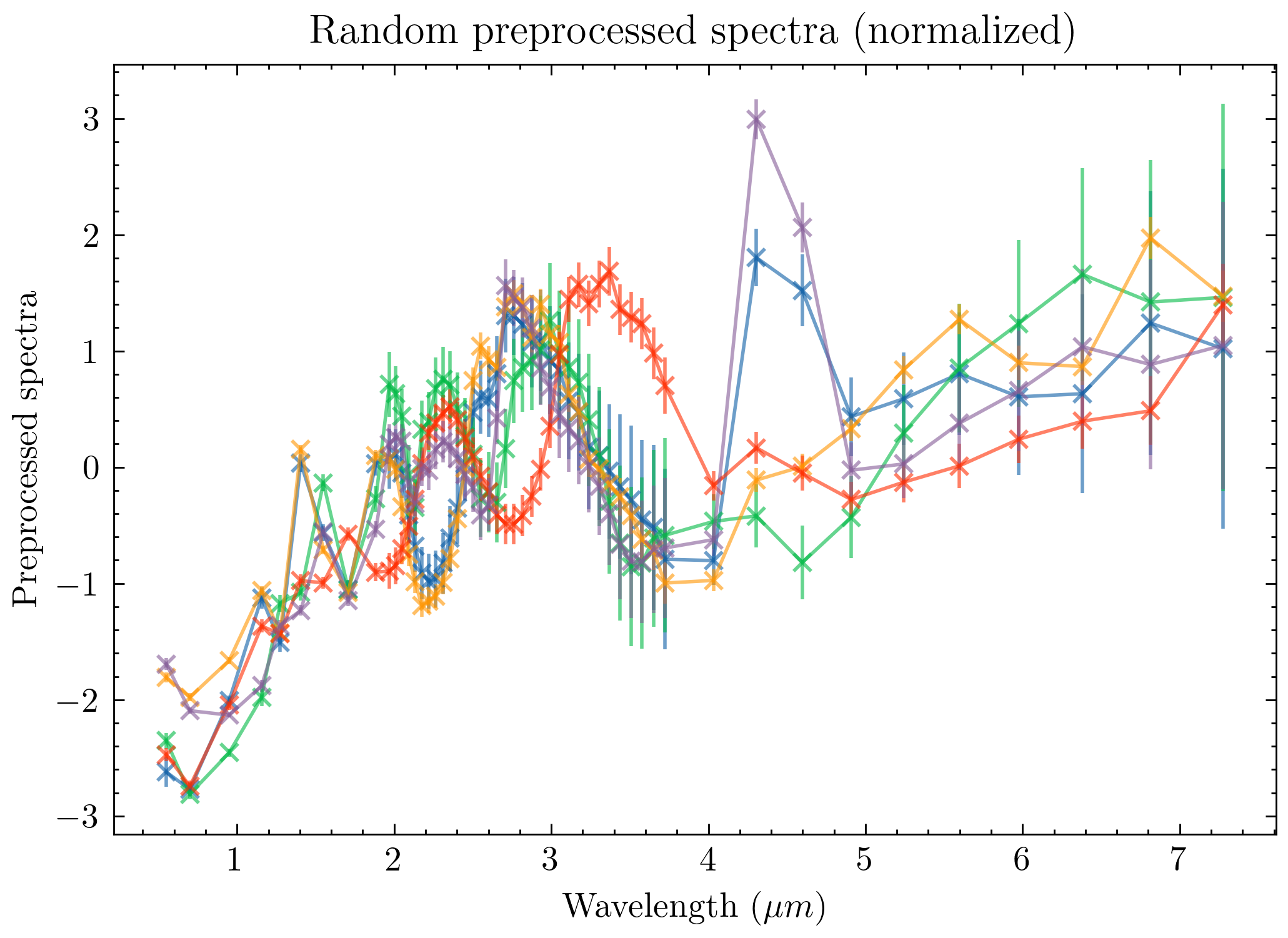

The team’s starting point was the Ariel Data Challenge 2023, a machine learning competition built around simulated exoplanet data. The dataset was generated using TauREx3, a physics-based forward model that takes atmospheric conditions as input and computes the resulting spectrum, running the science forward from cause to effect. For each of 41,423 simulated planets, the challenge provided the spectrum plus supporting measurements: stellar mass, radius, temperature, and distance, along with the planet’s mass, orbital period, and surface gravity.

The goal wasn’t simply to predict the best-fit atmospheric properties. It was to predict the full posterior probability distribution: the complete picture of which combinations of planet radius, temperature, and five molecular abundances (H₂O, CO₂, CO, CH₄, and NH₃) are consistent with the data. A single best-guess answer ignores uncertainty; a posterior captures it honestly.

This is where Normalizing Flows come in. A normalizing flow is a type of neural network that learns to transform a simple distribution (like a bell curve) into a complex, arbitrarily shaped one. The network learns the mapping from spectrum plus supporting measurements to a posterior distribution over seven atmospheric parameters.

The alternative is Nested Sampling, a traditional algorithm that systematically explores the probability landscape by sampling billions of configurations. It’s reliable, but can take hours per planet. A trained normalizing flow produces equivalent results in milliseconds.

The team built and compared three model variants:

- The winning model: trained directly on input parameters from the forward model

- An NS-trained model: trained on samples from Nested Sampling runs (available for only 6,766 of the 41,423 spectra)

- A noised-spectra model: trained on noise-added spectra to better match real telescope observations

The winning model topped the leaderboard, but the paper makes an intriguing confession. The NS-trained model, despite ranking lower, may be the more scientifically valuable approach. The competition weighted 80% of its score on a Kolmogorov-Smirnov test, a measure of how similar two distributions look in shape. That metric rewards matching Nested Sampling posteriors closely, including their quirks and biases. The NS-trained model targets those posteriors directly, making it potentially better aligned with ground truth even if it scores worse on an imperfect metric.

Why It Matters

The James Webb Space Telescope (JWST) has already more than tripled our catalog of well-characterized exoplanet atmospheres, and Ariel will multiply that again by roughly tenfold. The bottleneck is no longer data collection; it’s analysis.

Statistical retrievals that take hours per planet become infeasible at the scale of 1,000 worlds. Machine learning models trained to approximate those retrievals can run in seconds.

Beyond raw speed, the paper raises a deeper methodological question: how do we evaluate AI models for science? The AstroAI team shows that optimizing for a competition metric can diverge from optimizing for scientific accuracy. Their recommendation to rethink the evaluation criteria reflects a broader lesson. When AI enters scientific workflows, the benchmarks need to be as carefully designed as the models themselves.

The team also trained entirely on synthetic spectra from a physics simulator, with no real observational data, and produced a model that generalizes to unseen test cases. This simulation-based inference approach is gaining traction across astrophysics, particle physics, and other fields where forward models are well understood but inverse problems are expensive to solve.

Bottom Line: The AstroAI team won a global machine learning challenge by using Normalizing Flows to replace slow Bayesian atmospheric retrievals with fast, uncertainty-aware neural inference, a critical capability as Ariel prepares to survey 1,000 exoplanet atmospheres starting in 2029.

IAIFI Research Highlights

This work fuses deep generative modeling with radiative transfer physics and Bayesian inference to solve a core problem in observational astrophysics, combining AI and fundamental science in a way that strengthens both.

The paper shows that normalizing flows can accurately approximate complex, high-dimensional posteriors from physical simulations, and it critically examines how benchmark metrics shape model development in simulation-based inference.

Faster, scalable atmospheric retrieval enables systematic characterization of exoplanet chemistry at population scale, with implications for our understanding of planetary formation, habitability, and the diversity of worlds beyond our solar system.

Future work will apply these models to real Ariel observations and improve generalization to realistic noise conditions; the full study is available at [arXiv:2309.09337](https://arxiv.org/abs/2309.09337) from the AstroAI group at the Harvard & Smithsonian Center for Astrophysics.