Smoothness Errors in Dynamics Models and How to Avoid Them

Authors

Edward Berman, Luisa Li, Jung Yeon Park, Robin Walters

Abstract

Modern neural networks have shown promise for solving partial differential equations over surfaces, often by discretizing the surface as a mesh and learning with a mesh-aware graph neural network. However, graph neural networks suffer from oversmoothing, where a node's features become increasingly similar to those of its neighbors. Unitary graph convolutions, which are mathematically constrained to preserve smoothness, have been proposed to address this issue. Despite this, in many physical systems, such as diffusion processes, smoothness naturally increases and unitarity may be overconstraining. In this paper, we systematically study the smoothing effects of different GNNs for dynamics modeling and prove that unitary convolutions hurt performance for such tasks. We propose relaxed unitary convolutions that balance smoothness preservation with the natural smoothing required for physical systems. We also generalize unitary and relaxed unitary convolutions from graphs to meshes. In experiments on PDEs such as the heat and wave equations over complex meshes and on weather forecasting, we find that our method outperforms several strong baselines, including mesh-aware transformers and equivariant neural networks.

Concepts

The Big Picture

Imagine teaching a child to paint by telling them: “Never let colors blur together.” That rule works great for crisp geometric art. But hand them a watercolor brush and ask them to paint a sunset, and suddenly the rule becomes a straitjacket. The colors need to bleed into one another.

Researchers at Northeastern University’s Geometric Learning Lab ran into exactly this mismatch when using neural networks to simulate physical systems. The networks in question are graph neural networks (GNNs), models that treat physical simulations as graphs: collections of points connected by edges.

When you want to model how heat spreads across a metal plate, or how wind patterns evolve across a planet, GNNs are powerful tools. But they carry a troublesome habit called oversmoothing: after repeated rounds of information-sharing between neighboring points, every location starts to look identical to its neighbors. Distinct features blur into a gray average, and your carefully initialized simulation melts into mush.

The previously proposed fix was unitary convolutions, which exactly preserve a signal's smoothness so its features can never blur together. It turned out to be its own kind of straitjacket. Edward Berman, Luisa Li, Jung Yeon Park, and Robin Walters proved that when the physics itself requires smoothing (think heat diffusion, where hot spots naturally spread and even out), forcing the network never to smooth anything leads to systematic errors in the other direction. They call this undersmoothing.

Their solution: a new class of relaxed unitary convolutions that let the network breathe, smoothing exactly as much as the physics demands and no more.

Key Insight: Too much smoothing (oversmoothing) and too little smoothing (undersmoothing) are both failure modes for neural network PDE solvers. The same mathematical constraint meant to fix the first actively causes the second.

How It Works

The researchers start from a precise mathematical measurement of smoothness: the Rayleigh quotient, a formula that captures how much a signal varies between neighboring points. A high Rayleigh quotient means the signal is rough and spiky; a low one means it’s smooth and uniform. Previous work showed that standard GNNs drive the Rayleigh quotient toward zero (oversmoothing), while unitary convolutions keep it frozen in place.
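Here is a minimal NumPy sketch that makes the Rayleigh quotient concrete. It assumes the symmetric normalized graph Laplacian L = I - D^(-1/2) A D^(-1/2) and defines R(X) = tr(X^H L X) / ||X||_F^2. It then compares repeated lazy neighbor averaging (I - L/2, a stand-in for a standard GNN layer) with repeated application of U = exp(i t (I - L)), one common form of unitary graph convolution. The cycle graph, layer count, and helper names are illustrative choices, not the paper's setup.

```python
import numpy as np

def normalized_laplacian(adj: np.ndarray) -> np.ndarray:
    """Symmetric normalized Laplacian L = I - D^{-1/2} A D^{-1/2}."""
    d_inv_sqrt = 1.0 / np.sqrt(adj.sum(axis=1))
    return np.eye(len(adj)) - d_inv_sqrt[:, None] * adj * d_inv_sqrt[None, :]

def rayleigh_quotient(x: np.ndarray, lap: np.ndarray) -> float:
    """R(X) = tr(X^H L X) / ||X||_F^2: near 0 for smooth signals, larger for rough ones."""
    return float(np.trace(x.conj().T @ lap @ x).real / np.linalg.norm(x) ** 2)

def cycle_adjacency(n: int) -> np.ndarray:
    """Adjacency matrix of an n-node cycle graph (n >= 3)."""
    adj = np.zeros((n, n))
    for i in range(n):
        adj[i, (i + 1) % n] = adj[(i + 1) % n, i] = 1.0
    return adj

n, layers, t = 12, 50, 1.0
lap = normalized_laplacian(cycle_adjacency(n))
x = np.random.default_rng(0).standard_normal((n, 3))  # rough random node features

# Standard-GNN stand-in: lazy neighbor averaging P = I - L/2 (eigenvalues in [0, 1]).
p = np.eye(n) - 0.5 * lap
x_gcn = np.linalg.matrix_power(p, layers) @ x

# Unitary convolution U = exp(i t (I - L)), built from the eigendecomposition of L.
evals, evecs = np.linalg.eigh(lap)
u = evecs @ np.diag(np.exp(1j * t * (1.0 - evals))) @ evecs.T
x_uni = np.linalg.matrix_power(u, layers) @ x

rq_raw, rq_gcn, rq_uni = (rayleigh_quotient(v, lap) for v in (x, x_gcn, x_uni))
print(f"input {rq_raw:.3f}  averaged {rq_gcn:.5f}  unitary {rq_uni:.3f}")
```

Because U is unitary and commutes with L, R(UX) equals R(X) exactly, which is the "frozen" behavior described above. The averaging operator damps rough eigenmodes fastest, so its Rayleigh quotient sinks toward zero.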

The team's first theoretical contribution is a lower bound on the approximation error of unitary functions. They proved that if your physical system changes the smoothness of its state over time, then a strictly unitary network cannot represent that behavior exactly, no matter how well it is trained. Heat diffusion is one such system: total heat is conserved, but the signal's roughness, as measured by its Rayleigh quotient, steadily drops as hot spots spread out and cool. Unitarity isn't just suboptimal; its error on these tasks is provably bounded away from zero.
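Here is a minimal sketch of why heat diffusion defeats a strictly unitary model. It assumes a 12-node cycle graph with combinatorial Laplacian L = D - A and an explicit Euler discretization of the heat equation dx/dt = -Lx. Total heat (the sum of node values) stays constant, while the Rayleigh quotient never rises from one step to the next. A unitary map that commutes with L leaves the Rayleigh quotient at its initial value, so it cannot land on the diffused state. This illustrates the mechanism only; it is not the paper's theorem or its bound.

```python
import numpy as np

def cycle_laplacian(n: int) -> np.ndarray:
    """Combinatorial Laplacian L = D - A of an n-node cycle graph (n >= 3)."""
    lap = 2.0 * np.eye(n)
    for i in range(n):
        lap[i, (i + 1) % n] = lap[(i + 1) % n, i] = -1.0
    return lap

def rayleigh_quotient(x: np.ndarray, lap: np.ndarray) -> float:
    """x^H L x / x^H x for a single (possibly complex) node signal x."""
    return float(np.vdot(x, lap @ x).real / np.vdot(x, x).real)

n, dt, steps = 12, 0.1, 20
lap = cycle_laplacian(n)
x0 = np.zeros(n)
x0[0] = 1.0  # one hot spot, all other nodes cold

# Explicit Euler for dx/dt = -L x. dt * lambda_max(L) = 0.4 < 1 keeps every
# per-mode factor (1 - dt * lambda) positive, so each eigenmode decays monotonically.
heat_step = np.eye(n) - dt * lap
x = x0.copy()
total_heat, roughness = [x.sum()], [rayleigh_quotient(x, lap)]
for _ in range(steps):
    x = heat_step @ x
    total_heat.append(x.sum())
    roughness.append(rayleigh_quotient(x, lap))

# A unitary map that commutes with L: U = exp(i L), via the eigendecomposition of L.
evals, evecs = np.linalg.eigh(lap)
u = evecs @ np.diag(np.exp(1j * evals)) @ evecs.T
rq_unitary = rayleigh_quotient(u @ x0, lap)

print("total heat:", total_heat[0], "->", round(total_heat[-1], 12))
print("Rayleigh quotient:", roughness[0], "->", round(roughness[-1], 4))
print("unitary output Rayleigh quotient:", round(rq_unitary, 4))
```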

Their solution introduces relaxed unitary convolutions in two steps:

- Relax the constraint: Instead of forcing the network’s internal parameters to be exactly unitary, the network learns a unitary component plus a small correction term. This lets the Rayleigh quotient decrease when the physics calls for it, while still resisting the runaway smoothing that plagues standard GNNs.

- Generalize to meshes: Real surfaces (an armadillo figurine, a weather simulation grid) aren’t simple graphs. The team extended the entire framework from abstract graphs to triangular meshes, surface representations where a shape’s exterior is tiled by a connected patchwork of tiny triangles, accounting for the varying geometry of each face.
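The first step can be illustrated with a deliberately simple parametrization. The sketch below is hypothetical and is not the R-UniMesh layer. It takes the unitary convolution U = exp(i t (I - L)) and adds the correction term -eps * U L, so the layer computes U (I - eps L) x. Setting eps = 0 recovers a strictly unitary layer. A small positive eps lets the Rayleigh quotient drop. In a trained model, eps (or a richer correction) would be learned. The 6-node graph and eps values are arbitrary.

```python
import numpy as np

def normalized_laplacian(adj: np.ndarray) -> np.ndarray:
    """Symmetric normalized Laplacian L = I - D^{-1/2} A D^{-1/2}."""
    d_inv_sqrt = 1.0 / np.sqrt(adj.sum(axis=1))
    return np.eye(len(adj)) - d_inv_sqrt[:, None] * adj * d_inv_sqrt[None, :]

def rayleigh_quotient(x: np.ndarray, lap: np.ndarray) -> float:
    """R(X) = tr(X^H L X) / ||X||_F^2."""
    return float(np.trace(x.conj().T @ lap @ x).real / np.linalg.norm(x) ** 2)

def relaxed_unitary_layer(x, lap, t=1.0, eps=0.0):
    """Hypothetical relaxed layer: y = U x - eps * U L x = U (I - eps L) x.

    U = exp(i t (I - L)) is unitary and commutes with L. With eps = 0 the layer
    preserves the Rayleigh quotient exactly; for 0 < eps < 1 / lambda_max(L) it
    damps rough eigenmodes more than smooth ones, so the quotient never rises.
    """
    evals, evecs = np.linalg.eigh(lap)
    u = evecs @ np.diag(np.exp(1j * t * (1.0 - evals))) @ evecs.T
    return u @ x - eps * (u @ (lap @ x))

# Hypothetical 6-node graph: a hexagon with one chord (0-3).
edges = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0), (0, 3)]
adj = np.zeros((6, 6))
for i, j in edges:
    adj[i, j] = adj[j, i] = 1.0
lap = normalized_laplacian(adj)
x = np.random.default_rng(1).standard_normal((6, 2))

eps_values = (0.0, 0.1, 0.3)  # all below 1 / lambda_max(L), since lambda_max <= 2
rqs = [rayleigh_quotient(relaxed_unitary_layer(x, lap, eps=e), lap) for e in eps_values]
print("input:", round(rayleigh_quotient(x, lap), 4), "after layer:", [round(r, 4) for r in rqs])
```

The design choice here is that the correction is the only source of smoothing. The unitary part can still rotate phases and mix information without dissipating it, which is what resists runaway smoothing when eps stays small.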

The resulting architecture, R-UniMesh (Relaxed Unitary Mesh convolutions), threads the needle between the two failure modes.
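The mesh generalization in the second bullet needs an operator that sees face geometry, not just connectivity. The text does not say which operator R-UniMesh uses, so treat this as an assumption. A standard choice in geometry processing is the cotangent Laplacian, which weights each edge (i, j) by half the sum of the cotangents of the angles opposite it. This minimal sketch uses dense storage and omits the mass matrix:

```python
import numpy as np

def cotangent_laplacian(verts: np.ndarray, faces: list[tuple[int, int, int]]) -> np.ndarray:
    """Dense cotangent Laplacian L = D - W of a triangle mesh.

    W[i, j] = (cot(alpha_ij) + cot(beta_ij)) / 2, where alpha_ij and beta_ij are the
    angles opposite edge (i, j) in its one or two adjacent triangles.
    """
    n = len(verts)
    w = np.zeros((n, n))
    for tri in faces:
        for k in range(3):
            # o is the corner of this triangle opposite the edge (i, j).
            o, i, j = tri[k], tri[(k + 1) % 3], tri[(k + 2) % 3]
            a, b = verts[i] - verts[o], verts[j] - verts[o]
            cot = np.dot(a, b) / np.linalg.norm(np.cross(a, b))  # cos / sin of the angle at o
            w[i, j] += 0.5 * cot
            w[j, i] += 0.5 * cot
    return np.diag(w.sum(axis=1)) - w

# Unit square in the plane, split along the diagonal 0-2 into two triangles.
verts = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [1.0, 1.0, 0.0], [0.0, 1.0, 0.0]])
faces = [(0, 1, 2), (0, 2, 3)]
lap = cotangent_laplacian(verts, faces)
print(lap)
```

On this square, the diagonal edge gets weight 0 because both of its opposite angles are right angles. A plain graph Laplacian would count that edge fully. On obtuse triangles the weights can even turn negative, which is one reason mesh operators need more care than their graph counterparts.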

The figure above tells the story visually. On the heat equation benchmark over the armadillo mesh at timestep 190, the competing EMAN model has blurred the temperature field into a featureless smear (classic oversmoothing). The Hermes model swings the other way, preserving sharp artificial gradients that shouldn’t exist (undersmoothing). R-UniMesh tracks the ground truth closely, capturing both the smooth spreading of heat and the finer structure along the mesh’s ridges.

Why It Matters

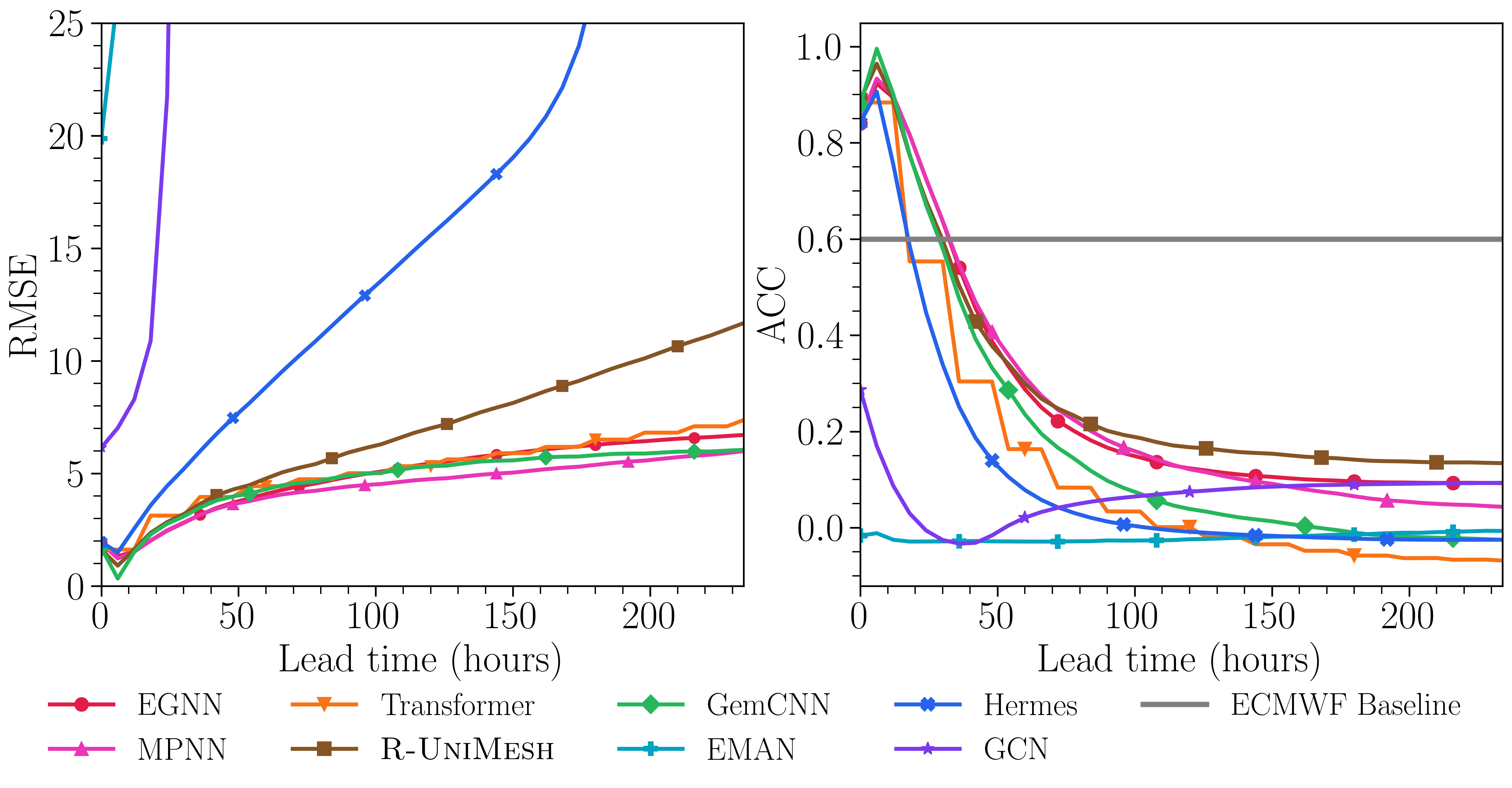

The immediate practical stakes are weather forecasting and climate modeling. The team tests R-UniMesh on real-world atmospheric data and finds it outperforms strong baselines, including mesh-aware transformers and equivariant neural networks (architectures that bake in physical symmetries such as rotation equivariance). The results suggest that controlling smoothness can matter as much as building in geometric symmetry, and sometimes more.

The deeper implication reaches across scientific computing. Anywhere researchers use neural networks to simulate PDEs (partial differential equations, the equations describing how physical quantities like heat, pressure, or velocity change continuously through space and time) on irregular geometries, the same smoothness mismatch problem lurks. Blood flow through arteries. Electromagnetic fields around antenna structures. Seismic waves through geological strata.

The Rayleigh quotient framework gives future researchers a diagnostic tool. Measure how your network changes the smoothness of its inputs, then compare that with how the true physics changes smoothness. The comparison gives a direct signal of whether your architecture oversmooths or undersmooths. That's a transferable lens, not just a one-off fix.
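The comparison can be written in a few lines. This sketch assumes a 16-node cycle graph with combinatorial Laplacian. It uses five explicit Euler heat steps as the "true physics" and compares them against two toy models: a unitary map, which cannot change smoothness, and aggressive neighbor averaging. The helper names `smoothing_ratio` and `diagnose` and the 5% tolerance are hypothetical choices, not part of the paper's code.

```python
import numpy as np

def cycle_laplacian(n: int) -> np.ndarray:
    """Combinatorial Laplacian L = D - A of an n-node cycle graph (n >= 3)."""
    lap = 2.0 * np.eye(n)
    for i in range(n):
        lap[i, (i + 1) % n] = lap[(i + 1) % n, i] = -1.0
    return lap

def rayleigh_quotient(x: np.ndarray, lap: np.ndarray) -> float:
    return float(np.vdot(x, lap @ x).real / np.vdot(x, x).real)

def smoothing_ratio(op: np.ndarray, x: np.ndarray, lap: np.ndarray) -> float:
    """R(op x) / R(x): below 1 the operator smooths, at 1 it preserves smoothness."""
    return rayleigh_quotient(op @ x, lap) / rayleigh_quotient(x, lap)

def diagnose(model_ratio: float, physics_ratio: float, tol: float = 0.05) -> str:
    """Hypothetical rule of thumb comparing a model's smoothing to the physics'."""
    if model_ratio < physics_ratio * (1 - tol):
        return "oversmoothing"
    if model_ratio > physics_ratio * (1 + tol):
        return "undersmoothing"
    return "matched"

n = 16
lap = cycle_laplacian(n)
x = np.zeros(n)
x[0] = 1.0  # hot-spot initial condition

physics = np.linalg.matrix_power(np.eye(n) - 0.1 * lap, 5)     # 5 heat steps, dt = 0.1
evals, evecs = np.linalg.eigh(lap)
unitary = evecs @ np.diag(np.exp(1j * evals)) @ evecs.T         # preserves R exactly
averaging = np.linalg.matrix_power(np.eye(n) - 0.25 * lap, 50)  # aggressive smoothing

target = smoothing_ratio(physics, x, lap)
for name, op in [("physics", physics), ("unitary", unitary), ("averaging", averaging)]:
    ratio = smoothing_ratio(op, x, lap)
    print(name, round(ratio, 4), diagnose(ratio, target))
```

In this toy setup, the unitary model is flagged as undersmoothing and the averaging model as oversmoothing. In practice the "physics" ratio would come from consecutive ground-truth frames rather than from a known operator.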

Open questions remain. The relaxation parameter that controls how much smoothing is allowed currently requires per-task tuning. One obvious next step is to find principled, automatic ways to set it, perhaps by estimating the physics' natural smoothing rate from data. Another frontier is extending the framework beyond triangular meshes to the other element types used in finite element analysis.

Bottom Line: By proving that "never smooth" is just as broken as "always smooth," this work gives neural PDE solvers a mathematically grounded middle path. It backs that up on benchmarks ranging from heat equations on complex meshes to real atmospheric forecasting.

IAIFI Research Highlights

This work sits at the intersection of differential geometry and deep learning, formalizing physical smoothing behavior (a classical PDE concept) as a trainable property of neural network architectures deployed on real scientific meshes.

Relaxed unitary convolutions offer a new architectural primitive for graph and mesh neural networks that provably avoids both oversmoothing and undersmoothing, outperforming mesh-aware transformers and equivariant networks on dynamics benchmarks.

By enabling more accurate neural surrogates for PDEs on complex surfaces, this research accelerates data-driven simulation of physical systems (from heat and wave propagation to atmospheric dynamics) central to fundamental physics modeling.

Future work will explore automatic tuning of the relaxation parameter and extension to non-triangular mesh discretizations; see the paper ([arXiv:2602.05352](https://arxiv.org/abs/2602.05352)) and code at github.com/EdwardBerman/rayleigh_analysis.