Stable Object Reorientation using Contact Plane Registration

Authors

Richard Li, Carlos Esteves, Ameesh Makadia, Pulkit Agrawal

Abstract

We present a system for accurately predicting stable orientations for diverse rigid objects. We propose to overcome the critical issue of modelling multimodality in the space of rotations by using a conditional generative model to accurately classify contact surfaces. Our system is capable of operating from noisy and partially-observed pointcloud observations captured by real world depth cameras. Our method substantially outperforms the current state-of-the-art systems on a simulated stacking task requiring highly accurate rotations, and demonstrates strong sim2real zero-shot transfer results across a variety of unseen objects on a real world reorientation task. Project website: https://richardrl.github.io/stable-reorientation/

Concepts

The Big Picture

Imagine unpacking a box of oddly shaped items (a ceramic mug, a wrench, a toy dinosaur) and stacking them neatly. Without thinking, your brain computes how each object should sit: which face goes down, which edge provides stability. You don’t memorize every object; you read its geometry and make a judgment call. For a robot, this same task is a surprisingly hard mathematical problem.

The challenge isn’t just picking up an object. It’s predicting the right orientation, the rotation in three-dimensional space that places an object stably on a surface. Robots operating in warehouses, kitchens, or laboratories need to do this constantly.

The mathematics of 3D rotation is treacherous. Small errors cause wildly wrong answers. Many objects have several equally correct orientations. Standard mathematical tools for describing rotation break down at certain edge cases. A robot that fumbles here can’t reliably stack, sort, or hand off anything.

Researchers at MIT’s Improbable AI Lab and Google Research have developed a new system that reframes the rotation problem entirely, achieving large improvements in both accuracy and real-world generalization.

Key Insight: Instead of directly predicting a 3D rotation, the system asks a simpler question: which surface of this object should touch the table? Answering that well turns out to be sufficient to recover a precise, stable orientation.

How It Works

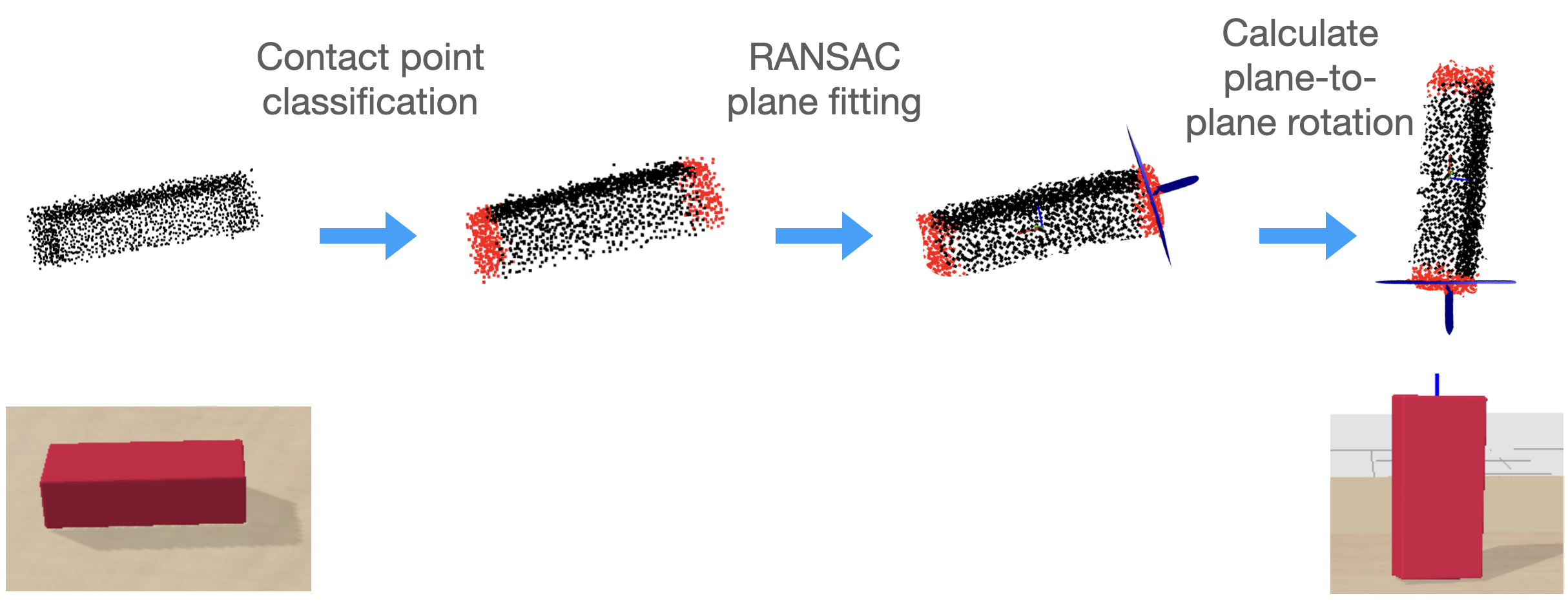

Finding a stable resting orientation is really a two-step process: identify the contact plane (the flat face that should sit on the table), then compute the rotation needed to align it with gravity. This reformulation sidesteps the notorious difficulties of directly predicting rotations.

End to end, the pipeline that carries out these two steps runs in four stages:

- Point cloud input. A depth camera captures a point cloud, a set of noisy, incomplete 3D coordinate measurements of the object’s surface.

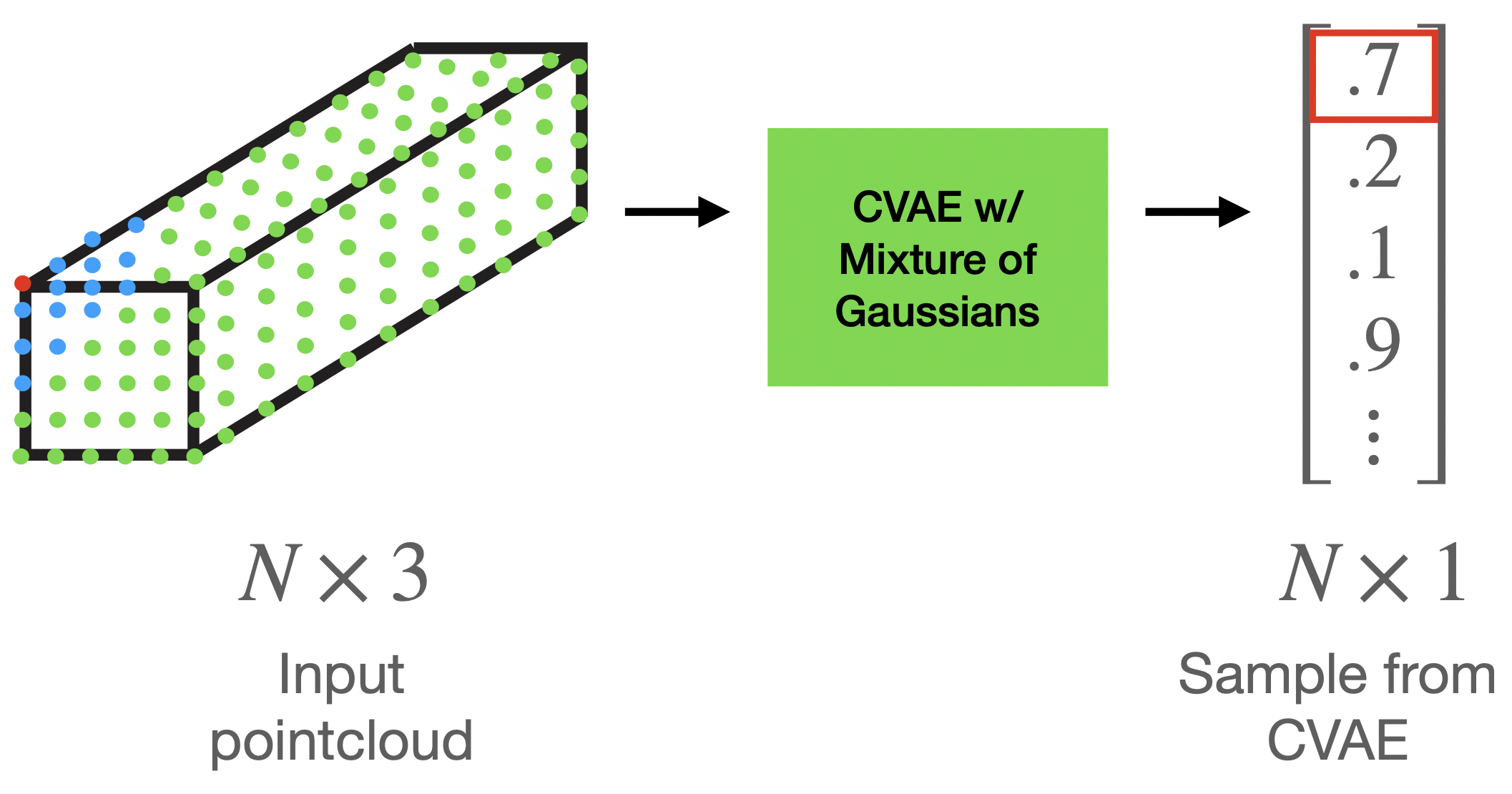

- Contact point prediction. A neural network scores each point: how likely is it to belong to the contact surface, the part of the object that should end up resting on the table once the object has been reoriented?

- Plane fitting with RANSAC. RANSAC (Random Sample Consensus) is a technique for finding the best-fitting geometric shape in noisy data while automatically ignoring outliers. Here it fits a plane to the highest-density cluster of predicted contact points.

- Rotation computation. Rodrigues’ rotation formula gives the exact 3D rotation that aligns the fitted plane’s normal vector (the direction perpendicular to the face) with the direction of gravity.
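
The last two stages can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the parameters `inlier_tol` and `prob_threshold`, the use of a simple probability threshold in place of selecting the highest-density cluster, and the centroid-based rule for choosing the sign of the normal are all hypothetical choices made for this example.

```python
import numpy as np

def fit_plane_ransac(points, n_iters=200, inlier_tol=0.005, rng=None):
    """Basic RANSAC plane fit. Returns (unit normal, point on plane, inlier mask)."""
    rng = np.random.default_rng(rng)
    best = None
    for _ in range(n_iters):
        # Three random points define a candidate plane.
        sample = points[rng.choice(len(points), size=3, replace=False)]
        normal = np.cross(sample[1] - sample[0], sample[2] - sample[0])
        norm = np.linalg.norm(normal)
        if norm < 1e-12:  # collinear sample, no unique plane
            continue
        normal = normal / norm
        # Inliers are points within inlier_tol of the candidate plane.
        mask = np.abs((points - sample[0]) @ normal) < inlier_tol
        if best is None or mask.sum() > best[2].sum():
            best = (normal, sample[0], mask)
    if best is None:
        raise ValueError("no non-degenerate sample found")
    return best

def rotation_aligning(a, b):
    """Rotation matrix R with R @ a = b for nonzero vectors a, b (Rodrigues' formula)."""
    a = a / np.linalg.norm(a)
    b = b / np.linalg.norm(b)
    v = np.cross(a, b)
    s = np.linalg.norm(v)  # sin(theta)
    c = float(a @ b)       # cos(theta)
    if s < 1e-12:
        if c > 0:
            return np.eye(3)  # already aligned
        # Antiparallel: rotate by pi about any axis perpendicular to a.
        helper = np.eye(3)[np.argmin(np.abs(a))]
        axis = np.cross(a, helper)
        axis = axis / np.linalg.norm(axis)
        return 2.0 * np.outer(axis, axis) - np.eye(3)
    k = v / s  # unit rotation axis
    K = np.array([[0.0, -k[2], k[1]],
                  [k[2], 0.0, -k[0]],
                  [-k[1], k[0], 0.0]])
    return np.eye(3) + s * K + (1.0 - c) * (K @ K)

def stable_rotation(points, contact_prob, prob_threshold=0.5,
                    down=(0.0, 0.0, -1.0)):
    """Rotation that turns the predicted contact face toward `down`."""
    candidates = points[contact_prob > prob_threshold]
    if len(candidates) < 3:
        raise ValueError("need at least three candidate contact points")
    normal, origin, _ = fit_plane_ransac(candidates, rng=0)
    # Assumed heuristic: the outward normal points away from the centroid.
    if (points.mean(axis=0) - origin) @ normal > 0:
        normal = -normal
    return rotation_aligning(normal, np.asarray(down, dtype=float))
```

Given an `(N, 3)` array `points` and per-point scores `contact_prob`, `points @ stable_rotation(points, contact_prob).T` yields the reoriented cloud, with the fitted contact face at the bottom.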

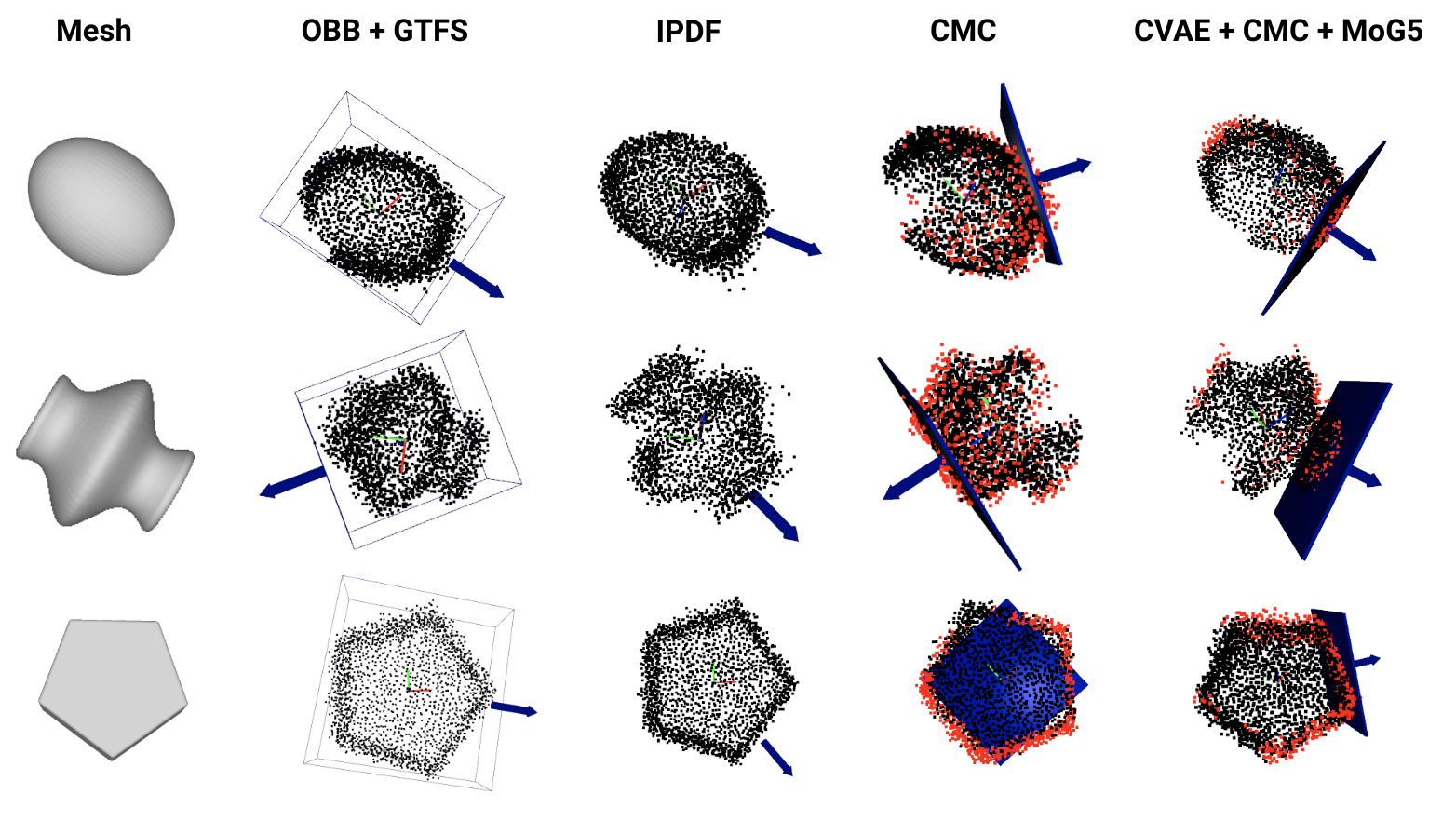

This per-point classification approach generalizes well because contact point probabilities depend mainly on local geometry. Flat regions near the base of an object look geometrically similar regardless of whether you’ve seen that exact object before. Traditional approaches predict rotations directly using compact encodings such as quaternions or Euler angles, which become ambiguous or ill-conditioned in certain cases: Euler angles lose a degree of freedom at gimbal lock, and a quaternion and its negation describe the same rotation. The network avoids those issues entirely: it votes on which surface points are in contact, not on what rotation to apply.
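
One concrete ambiguity is easy to check numerically: a quaternion and its negation encode the same rotation, so a network regressing quaternions can face two distant targets for one correct answer. The snippet below is a small illustrative sketch; `quat_to_matrix` is a helper written for this example and assumes the `(w, x, y, z)` component order.

```python
import numpy as np

def quat_to_matrix(q):
    """Convert a quaternion (w, x, y, z) to a 3x3 rotation matrix."""
    w, x, y, z = q / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])

# A 45-degree rotation about the z axis.
q = np.array([np.cos(np.pi / 8), 0.0, 0.0, np.sin(np.pi / 8)])

# q and -q produce the same rotation matrix...
same_rotation = np.allclose(quat_to_matrix(q), quat_to_matrix(-q))

# ...yet as regression targets they sit at opposite points of the unit sphere.
target_gap = np.linalg.norm(q - (-q))

print(same_rotation, target_gap)
```

Per-point contact labels have no such redundancy, since each point either lies on the contact surface or does not.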

Objects can have multiple valid contact surfaces. A cube can rest stably on any of six faces. This is the multimodality problem: a single input can have several, very different correct outputs. The system handles it with a CVAE (Conditional Variational Autoencoder), a generative model that samples plausible hypotheses rather than averaging over all of them. Even when predicted probabilities spread across several valid contact regions, RANSAC selects the dominant cluster and ignores the rest.
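
A toy calculation shows why averaging fails here. For a cube, the six valid contact normals average to the zero vector, which is not a direction at all, whereas any single sampled normal is a valid answer. The uniform random draw below is only a stand-in for sampling a hypothesis from a generative model; it is not a CVAE, and the variable names are illustrative.

```python
import numpy as np

# Outward normals of the six faces of an axis-aligned cube.
face_normals = np.array([
    [1, 0, 0], [-1, 0, 0],
    [0, 1, 0], [0, -1, 0],
    [0, 0, 1], [0, 0, -1],
], dtype=float)

# Averaging all valid answers collapses to the zero vector.
mean_normal = face_normals.mean(axis=0)

# Sampling one hypothesis instead returns a valid unit normal.
rng = np.random.default_rng(0)
sampled_normal = face_normals[rng.integers(len(face_normals))]

print(np.linalg.norm(mean_normal), np.linalg.norm(sampled_normal))
```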

On a simulated block-stacking benchmark requiring high rotational precision, the method substantially outperforms previous state-of-the-art approaches. It also transfers directly from simulation to the real world zero-shot, with no real-world training data at all, successfully reorienting a diverse set of never-before-seen objects using only noisy point clouds from commodity RealSense depth cameras.

Why It Matters

Object reorientation might sound narrow, but it is a linchpin of robotic manipulation. Virtually every downstream task (pick-and-place, assembly, tool use, surgical robotics) requires a robot to reason about how objects sit in space.

Systems that generalize poorly to new object shapes are effectively useless outside a controlled lab. The sim-to-real gap, the tendency for robots trained in simulation to fail in the messier real world, remains one of the biggest open problems in robotics. This work attacks both issues simultaneously.

The deeper contribution is methodological. Decomposing a hard rotation-prediction problem into a more tractable contact-classification problem shows that clever reformulation can outperform simply scaling up model capacity. Local geometric reasoning, the kind that transfers across shapes, is exactly the right prior for manipulation tasks. Open questions remain around non-planar contact surfaces, dynamics during contact, and deformable objects where stable orientations are harder to define.

Bottom Line: By teaching a robot to ask “which face goes down?” instead of “what rotation is needed?”, this system achieves accurate, generalizable object reorientation from real-world depth cameras, transferring from simulation to physical robots without any real-world training data.

IAIFI Research Highlights

This work connects geometric deep learning and robotic manipulation, using physical reasoning about contact and gravity to structure a machine learning problem, reflecting IAIFI's AI-physics integration approach.

The contact plane reformulation offers a principled solution to the multimodality problem in SO(3) rotation prediction, combining conditional generative modeling with geometric estimation for stable, generalizable inference.

By grounding the learning problem in physical constraints (stable equilibria, contact mechanics, gravitational alignment), the method shows how physics priors can be embedded directly into neural architectures to improve generalization across object geometries.

Future extensions may address non-planar contact surfaces and dynamic manipulation; the sim-to-real framework also provides a template for training robotic systems without costly real-world data collection. Full paper by Li, Esteves, Makadia, and Agrawal: [arXiv:2208.08962](https://arxiv.org/abs/2208.08962).