Surrogate- and invariance-boosted contrastive learning for data-scarce applications in science

Authors

Charlotte Loh, Thomas Christensen, Rumen Dangovski, Samuel Kim, Marin Soljacic

Abstract

Deep learning techniques have been increasingly applied to the natural sciences, e.g., for property prediction and optimization or material discovery. A fundamental ingredient of such approaches is the vast quantity of labelled data needed to train the model; this poses severe challenges in data-scarce settings where obtaining labels requires substantial computational or labor resources. Here, we introduce surrogate- and invariance-boosted contrastive learning (SIB-CL), a deep learning framework which incorporates three ``inexpensive'' and easily obtainable auxiliary information sources to overcome data scarcity. Specifically, these are: 1)~abundant unlabeled data, 2)~prior knowledge of symmetries or invariances and 3)~surrogate data obtained at near-zero cost. We demonstrate SIB-CL's effectiveness and generality on various scientific problems, e.g., predicting the density-of-states of 2D photonic crystals and solving the 3D time-independent Schrodinger equation. SIB-CL consistently results in orders of magnitude reduction in the number of labels needed to achieve the same network accuracies.

Concepts

The Big Picture

Imagine trying to teach a child to recognize dogs with only three photographs. Three examples won’t cut it; the child needs hundreds before the concept clicks. Now scale that problem to physics: you want a neural network to predict the quantum properties of a material, but each calculation takes days of supercomputer time. You can afford maybe 50 labeled examples. That’s it.

This is the brutal reality facing researchers who apply deep learning to fundamental science. Unlike computer vision, where millions of labeled images can be crowdsourced from the internet, generating labeled data in physics means running expensive simulations or conducting painstaking experiments. Every data point costs real time and money.

A team at MIT has developed SIB-CL (Surrogate- and Invariance-Boosted Contrastive Learning), a framework that slashes the number of labeled examples needed to train accurate models by orders of magnitude.

Key Insight: SIB-CL exploits three cheap, readily available information sources — unlabeled data, physical symmetries, and approximate “surrogate” calculations — to teach neural networks about physical systems using a tiny fraction of the labeled data otherwise required.

How It Works

The core idea is that even when precise labels are scarce, science problems are rarely information-starved. They come packaged with rich structural knowledge that conventional supervised learning ignores entirely. SIB-CL consumes all of it.

The framework draws on three auxiliary information sources:

- Unlabeled data — raw inputs (say, the geometry of a photonic crystal) that haven’t been paired with expensive computed outputs. These are essentially free to generate.

- Invariances and symmetries — prior knowledge that certain transformations leave the label unchanged. If rotating a crystal by 90° doesn’t change its optical properties, the model should know that upfront rather than learning it from scratch across thousands of examples.

- Surrogate data — approximate labels computed using faster, cheaper methods. Not perfectly accurate, but they capture the essential structure at a fraction of the cost.

SIB-CL fuses these sources through a two-stage training pipeline. In the first stage, the network undergoes contrastive pre-training, a self-supervised technique where the model learns useful internal representations without any labeled answers. The specific method used is SimCLR.

The model sees two differently-transformed versions of the same input and learns to place them close together in a shared representational space, while pushing representations of different inputs far apart. The transformations aren’t arbitrary. They’re chosen to reflect the actual physical symmetries of the problem. A rotation that leaves a crystal’s properties invariant becomes a principled training signal. The physics does the work.

Interleaved with this contrastive step is surrogate transfer learning: the model pre-trains on the cheaper approximate dataset before fine-tuning on the small, precious set of high-fidelity labels. This gives the network’s encoder, the component that transforms raw inputs into a compact internal representation, a meaningful head start. It arrives at fine-tuning already understanding the structure of the problem, even if the surrogate’s answers aren’t exact.

The final stage is conventional fine-tuning. A prediction head is attached to the pre-trained encoder and trained on the available accurate labels. Because the encoder already carries rich, physics-aware representations, it needs far fewer expensive examples to converge.

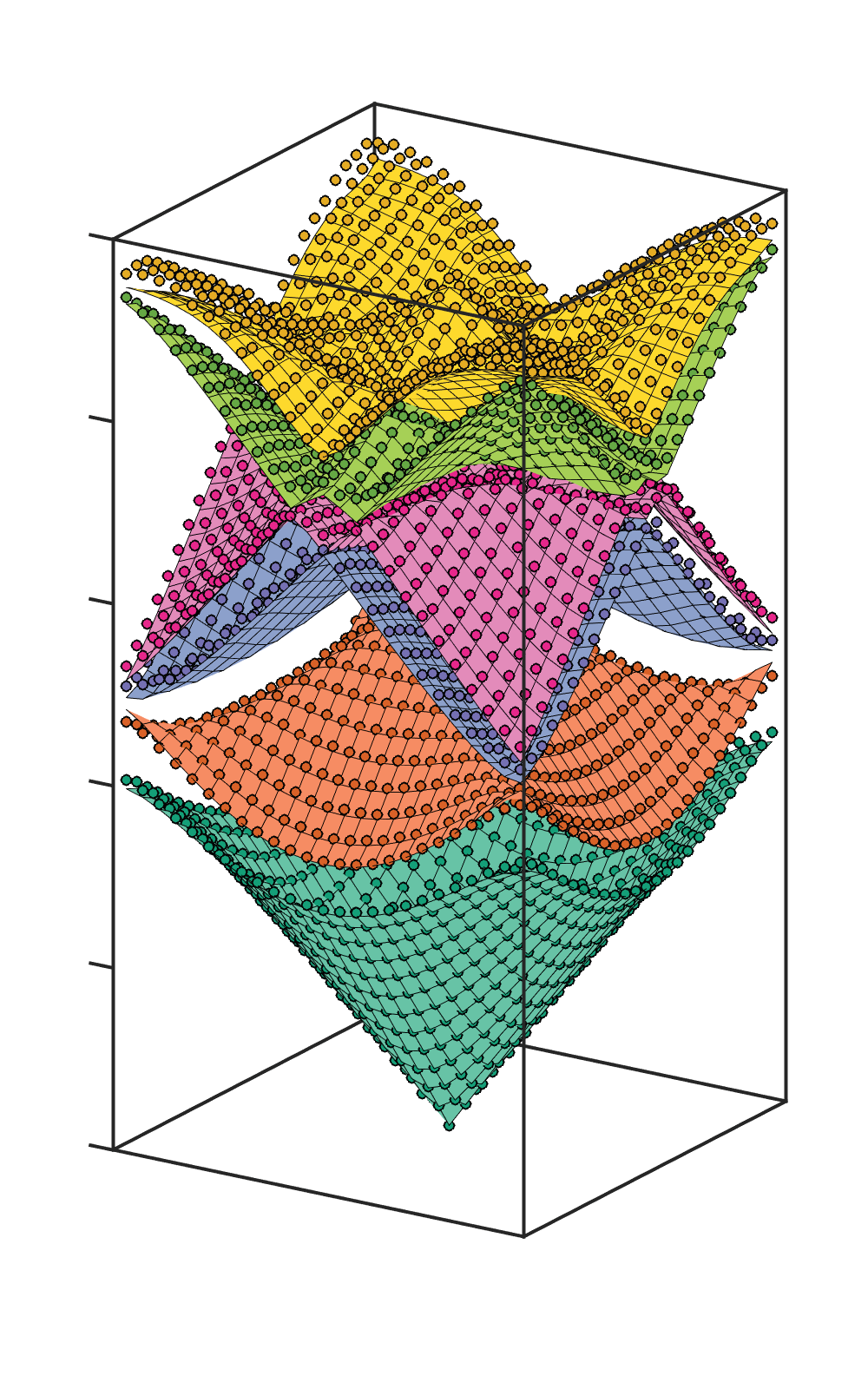

The team validated SIB-CL on two demanding test cases. The first: predicting the density-of-states (DOS) of 2D photonic crystals, materials that control light propagation through periodic structure, with applications in lasers and optical computing. The input is the geometry of the crystal’s unit cell (its smallest repeating structural building block); the output is a complex spectral function requiring full electromagnetic simulation to compute accurately.

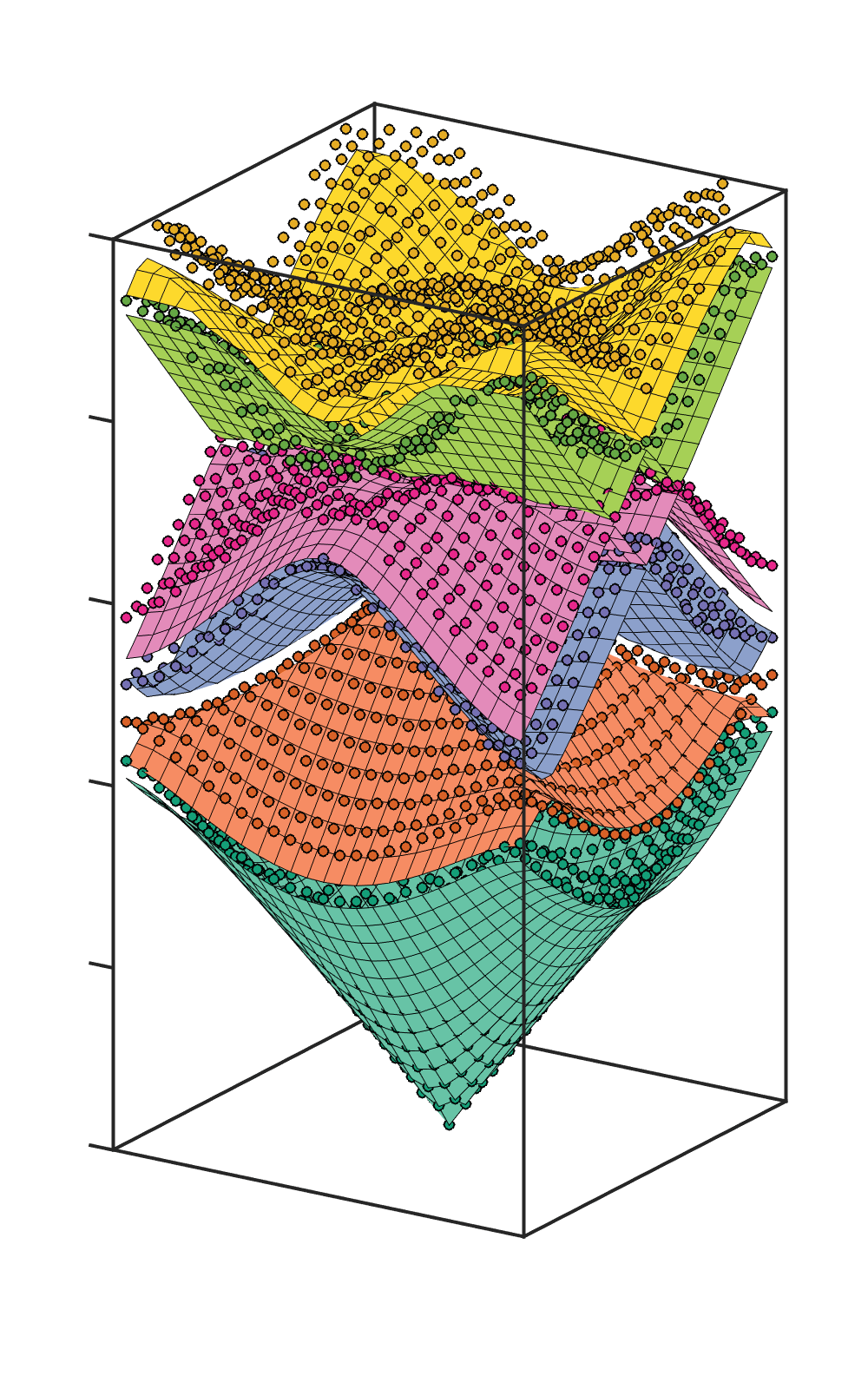

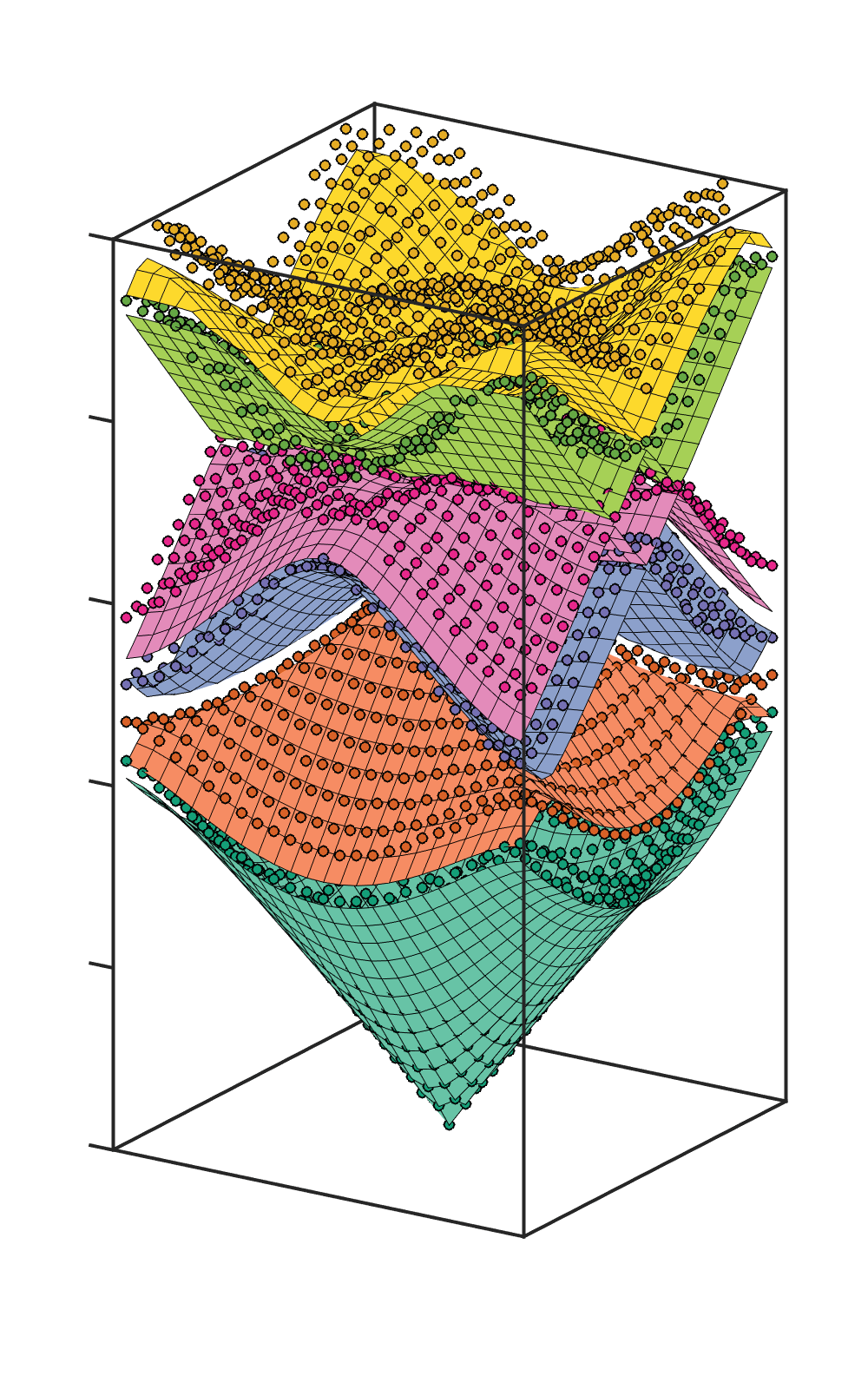

The second: solving the 3D time-independent Schrödinger equation for quantum systems, a central problem in quantum chemistry and materials design. In both cases, SIB-CL matched the accuracy of standard supervised learning while using orders of magnitude fewer labeled examples.

Why It Matters

The practical payoff is immediate. Tasks that would require thousands of expensive simulations might now be feasible with dozens. That’s the difference between a project taking months versus days, between being accessible only to well-funded groups versus a broader research community.

But there’s a conceptual point worth dwelling on. SIB-CL puts into practice a principle that physicists have always relied on but machine learning has historically ignored: science problems are not naked datasets. They come embedded in a web of known structure, from symmetries and conservation laws to approximate solutions and limiting cases.

Every physics student learns that when you can’t solve a hard problem, you solve an easier one first and work outward. SIB-CL formalizes exactly that strategy for neural networks. As AI becomes central to material discovery, drug design, and quantum simulation, frameworks like this one point toward a future where labeled-data scarcity no longer determines which scientific questions are computationally tractable.

Open questions remain. The current demonstrations focus on well-defined problems where symmetries are known analytically. Extending the approach to messier domains, such as experimental data from telescopes or particle detectors, will require creative thinking about how to define useful invariances when the underlying symmetry group isn’t clean. SIB-CL also inherits contrastive learning’s sensitivity to batch size and augmentation strategy.

Bottom Line: The rich structural knowledge embedded in physics problems (symmetries, approximate models, unlabeled structure) can be systematically harvested to reduce labeled-data requirements by orders of magnitude, making deep learning viable for some of science’s most expensive computational problems.

IAIFI Research Highlights

This work sits at the AI-physics interface, translating principles from contrastive self-supervised learning into a framework that explicitly encodes physical symmetries and exploits the hierarchical structure of approximate versus exact scientific calculations.

SIB-CL advances the self-supervised learning toolkit by showing that domain-specific invariances can substitute for generic image augmentations, yielding dramatically more data-efficient pre-training when those invariances are physically grounded.

By making accurate property prediction tractable with far fewer labeled simulations, the framework speeds up research in photonics and quantum mechanics, domains where simulation cost has long constrained what questions researchers can ask computationally.

Future work will likely extend SIB-CL to experimental datasets and to problems where invariances must be discovered rather than specified. The full paper is available at [arXiv:2110.08406](https://arxiv.org/abs/2110.08406).