The DNA of nuclear models: How AI predicts nuclear masses

Authors

Kate A. Richardson, Sokratis Trifinopoulos, Mike Williams

Abstract

Obtaining high-precision predictions of nuclear masses, or equivalently nuclear binding energies, $E_b$, remains an important goal in nuclear-physics research. Recently, many AI-based tools have shown promising results on this task, some achieving precision that surpasses the best physics models. However, the utility of these AI models remains in question given that predictions are only useful where measurements do not exist, which inherently requires extrapolation away from the training (and testing) samples. Since AI models are largely black boxes, the reliability of such an extrapolation is difficult to assess. We present an AI model that not only achieves cutting-edge precision for $E_b$, but does so in an interpretable manner. For example, we find that (and explain why) the most important dimensions of its internal representation form a double helix, where the analog of the hydrogen bonds in DNA here link the number of protons and neutrons found in the most stable nucleus of each isotopic chain. Furthermore, we show that the AI prediction of $E_b$ can be factorized and ordered hierarchically, with the most important terms corresponding to well-known symbolic models (such as the famous liquid drop). Remarkably, the improvement of the AI model over symbolic ones can almost entirely be attributed to an observation made by Jaffe in 1969 based on the structure of most known nuclear ground states. The end result is a fully interpretable data-driven model of nuclear masses based on physics deduced by AI.

Concepts

The Big Picture

Imagine trying to predict the weight of every possible molecule. Not just the ones chemists have measured, but the exotic, unstable ones that exist only for fractions of a second in stellar explosions or particle accelerators. That’s roughly the challenge nuclear physicists face with nuclear binding energies: the precise amount of energy holding each nucleus together. We’ve measured thousands of nuclei, but the universe needs us to predict thousands more.

The problem runs deep. Many fundamental questions depend on knowing the masses of nuclei we can’t easily measure: how heavy elements form in neutron star mergers, how far the map of all possible nuclei extends into unstable territory. The best physics models achieve impressive precision, but not quite enough. AI models have recently leapfrogged them on accuracy, but with a catch: nobody knows why they work, which makes trusting their predictions beyond training data dangerous.

Researchers at MIT and CERN have now built an AI model that does both. It achieves record precision while also opening its own hood, revealing internal structure as recognizable as a strand of DNA.

Key Insight: When forced to explain itself, an AI trained on nuclear data rediscovered the same physics structures humans have been building for nearly a century, then pointed to a 1969 insight that explains almost all of its remaining advantage.

How It Works

The model takes only two inputs: the number of protons (Z) and neutrons (N) in a nucleus. No hand-engineered physics features, no pre-baked formulas. It learns purely from patterns in measured binding energy data.

The first surprise came when the researchers examined the AI’s internal representation, the mathematical space the network uses to organize information about each nucleus before producing a prediction. The two most important dimensions of this space, when plotted together, trace out a double helix.

This isn’t metaphor. The structure geometrically resembles DNA, with the “hydrogen bonds” connecting the proton count and neutron count of the most stable nucleus for each element. The AI, with no knowledge of chemistry or molecular biology, invented an organizational scheme that mirrors life’s information storage molecule. That structure is a direct consequence of which nuclei are most tightly bound.

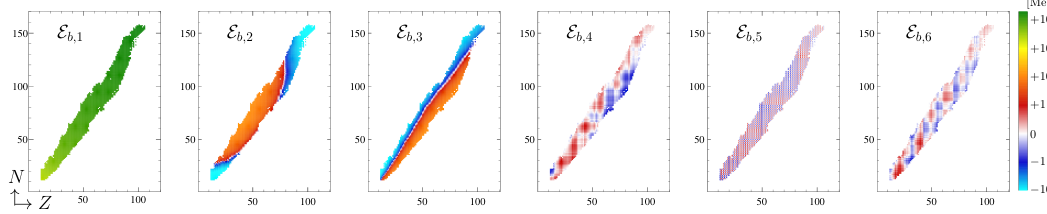

The second finding came from factorization: breaking the AI’s prediction into a ranked series of components, from most important to least.

- The dominant term closely matches the liquid drop model, a nearly century-old formula treating the nucleus like a dense incompressible fluid

- Successive terms layer on corrections, each corresponding to progressively finer physical effects

- Precision improves step by step: from ~2.7 MeV (basic liquid drop) down to ~0.5 MeV (best symbolic models), and finally to 0.13 MeV for the full AI model

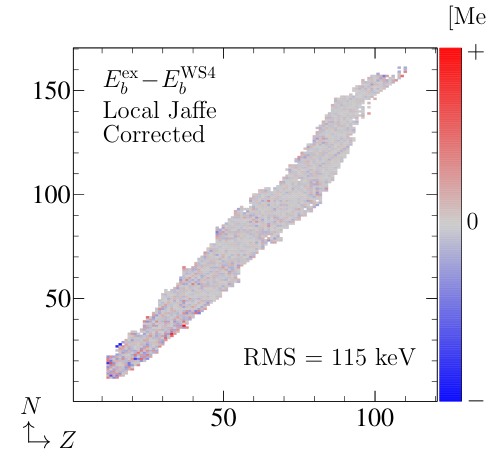

That final leap, from the best human-crafted symbolic model to the AI, has a very specific origin. Almost all of the improvement traces back to a 1969 observation by physicist Jaffe: a structural property of nuclei in their lowest-energy configurations, noted but never fully exploited in mass formulas. The AI didn’t know about Jaffe’s work. It rediscovered the same physical insight from data alone.

For comparison, the best non-AI model (WS4) achieves an RMS error of about 0.28 MeV. This AI model cuts that in half, reaching 0.13 MeV, a mean relative precision of roughly one part in ten thousand. Unlike black-box AI models, every component of that prediction now has a physical interpretation.

Why It Matters

Precision matters here in a practical sense. The r-process, the rapid neutron-capture chain responsible for forging gold, platinum, and most heavy elements in neutron star collisions, requires binding energies for thousands of nuclei that have never been measured. Feed it wrong numbers and your astrophysical simulations go wrong in ways that are hard to diagnose. An interpretable AI model is far more trustworthy for extrapolation: if you understand why it works where you can check it, you have principled reasons to trust it where you can’t.

The implications go beyond nuclear physics. Rather than treating AI as an oracle, the researchers used it as an automated theorist, one that sifts through data, extracts structure, and points back to real physics. The model rediscovered both the liquid drop model and a 1969 result without any prior knowledge. That kind of interpretable AI can genuinely advance physical understanding, not just curve-fit data.

Bottom Line: An AI trained on nuclear masses spontaneously developed a DNA-like internal structure, rediscovered the liquid drop model, and traced its remaining advantage to a half-century-old observation. Interpretable AI and physics precision are not in conflict; they reinforce each other.

IAIFI Research Highlights

This work connects nuclear physics and machine learning by building an AI that achieves top-tier binding energy predictions while remaining fully interpretable, showing that AI can deduce physics rather than merely fit data.

High-dimensional neural network representations can spontaneously organize into physically meaningful low-dimensional structures (a double helix), providing a concrete case study in AI interpretability for scientific applications.

By achieving 0.13 MeV precision on nuclear masses and tracing each component to known physics, this model directly improves the reliability of extrapolations needed for *r*-process nucleosynthesis and nuclear chart exploration.

Future work may apply this factorization and interpretability framework to other nuclear observables and to nuclei far from stability. The full paper is available at [arXiv:2508.08370](https://arxiv.org/abs/2508.08370).