Towards Designing and Exploiting Generative Networks for Neutrino Physics Experiments using Liquid Argon Time Projection Chambers

Authors

Paul Lutkus, Taritree Wongjirad, Shuchin Aeron

Abstract

In this paper, we show that a hybrid approach to generative modeling via combining the decoder from an autoencoder together with an explicit generative model for the latent space is a promising method for producing images of particle trajectories in a liquid argon time projection chamber (LArTPC). LArTPCs are a type of particle physics detector used by several current and future experiments focused on studies of the neutrino. We implement a Vector-Quantized Variational Autoencoder (VQ-VAE) and PixelCNN which produces images with LArTPC-like features and introduce a method to evaluate the quality of the images using a semantic segmentation that identifies important physics-based features.

The Big Picture

Imagine trying to photograph a ghost. Neutrinos, the most abundant matter particles in the universe, pass through virtually everything without leaving a trace. To catch them in the act, physicists have built some of the most extraordinary detection machines ever conceived: giant tanks of ultrapure liquid argon, chilled to −186°C.

On the rare occasions when a neutrino does collide with an argon nucleus, the collision produces charged particles that ionize the argon along their paths. The freed electrons drift through the liquid to sensitive electronics, which record the trails as images: ghostly sketches of particle paths that reveal the neutrino’s energy, type, and behavior. These detectors are called LArTPCs, or Liquid Argon Time Projection Chambers.

The problem? Generating realistic simulated images of particle trajectories is painfully slow. Traditional methods model every step of how particles travel through matter, and experiments like DUNE, which will use a 40,000-ton liquid argon detector, will demand more simulated data than those methods can economically produce.

Researchers at Tufts University, working with IAIFI, investigated whether generative models, AI systems that learn to produce new data resembling their training examples, can step in and produce convincing synthetic detector images fast enough to meet that demand (arXiv:2204.02496).

Key Insight: By combining two complementary AI architectures into a hybrid system, the researchers produced LArTPC detector images that capture the distinctive physics features of real particle trajectories, an important step toward AI-powered simulation for neutrino physics.

How It Works

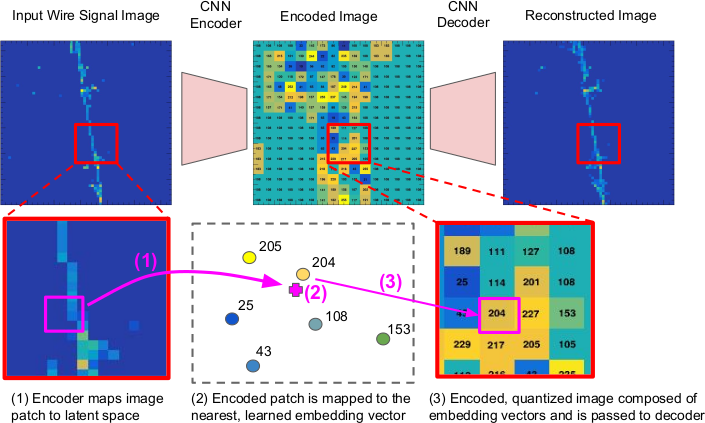

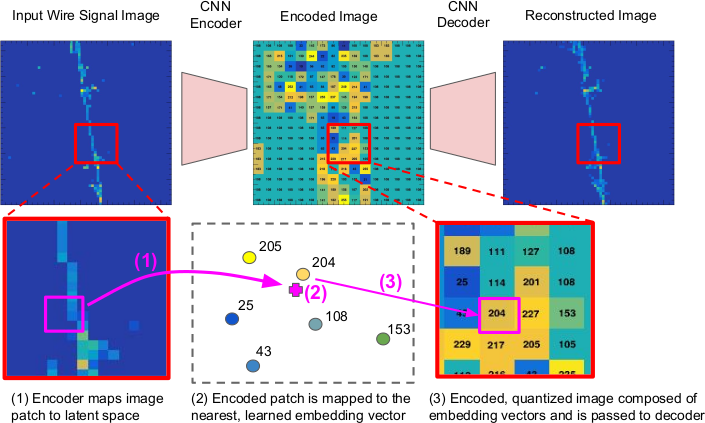

The approach is a two-stage pipeline that splits the problem into two manageable pieces. A Vector-Quantized Variational Autoencoder (VQ-VAE) compresses detector images into a compact “codebook” of discrete symbols. A PixelCNN then generates new sequences of those symbols from scratch.

Think of it as a two-part language system. The VQ-VAE acts like a translator, converting complex detector images into a simplified alphabet of visual codes, where each patch of the image gets assigned a code number from a fixed vocabulary. The PixelCNN acts like an author: it learns the grammar of how those codes typically appear together, then writes entirely new sequences to produce novel images.
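
The "code number from a fixed vocabulary" step can be illustrated with a nearest-neighbor lookup. This is a minimal sketch in plain Python, not the authors' implementation: the 2-D codebook, the encoder outputs, and the function names `quantize` and `lookup` are all hypothetical, chosen only to show how a continuous vector is snapped to its closest codebook entry.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(vectors, codebook):
    """Return, for each vector, the index of its nearest codebook entry."""
    indices = []
    for v in vectors:
        nearest = min(
            range(len(codebook)),
            key=lambda k: squared_distance(v, codebook[k]),
        )
        indices.append(nearest)
    return indices


def lookup(indices, codebook):
    """Replace each index by its codebook vector (the decoder's input)."""
    return [codebook[k] for k in indices]


# Hypothetical codebook with three 2-D entries (real codebooks are far larger).
codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]

# Hypothetical encoder outputs, one vector per image patch.
encoder_outputs = [(0.1, -0.2), (0.9, 0.1), (0.2, 0.8), (0.6, 0.1)]

codes = quantize(encoder_outputs, codebook)
quantized = lookup(codes, codebook)
print(codes)  # [0, 1, 2, 1]
```

The list `codes` plays the role of the simplified "alphabet" described above: it is what the second-stage model would be trained on, while `quantized` is what a decoder would receive.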

Training has two phases:

- Phase 1 — Train the VQ-VAE: The network learns to encode detector images into discrete latent codes and decode them back to pixel space with minimal loss. The quantization step forces the model to commit to discrete representations rather than blurry continuous ones, which preserves the sharp, track-like features of real particle trajectories.

- Phase 2 — Train the PixelCNN on the latent codes: The PixelCNN trains on the compressed code images produced by the encoder. It learns to predict each code token one by one, given all previous tokens, effectively learning a prior over the space of physically plausible trajectories.
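
The summary above does not state the training objectives. As a point of reference only, the standard formulations from the original VQ-VAE and PixelCNN literature are sketched below; whether the authors used exactly these forms is an assumption. Here $x$ is an input image, $z_e(x)$ the encoder output, $e$ the nearest codebook vector, $z_q(x)$ the quantized latent, $\mathrm{sg}[\cdot]$ the stop-gradient operator, $\beta$ a commitment weight, and $z_1, \dots, z_N$ the code indices in raster order.

```latex
% Phase 1: VQ-VAE objective (reconstruction + codebook + commitment terms)
\mathcal{L}_{\text{VQ-VAE}}
  = -\log p\bigl(x \mid z_q(x)\bigr)
  + \bigl\lVert \mathrm{sg}[z_e(x)] - e \bigr\rVert_2^2
  + \beta \,\bigl\lVert z_e(x) - \mathrm{sg}[e] \bigr\rVert_2^2

% Phase 2: autoregressive prior over the code indices
p(z) = \prod_{i=1}^{N} p\bigl(z_i \mid z_1, \dots, z_{i-1}\bigr),
\qquad
\mathcal{L}_{\text{prior}} = -\sum_{i=1}^{N} \log p\bigl(z_i \mid z_1, \dots, z_{i-1}\bigr)
```

The second and third terms of the first equation are what push the encoder to "commit" to discrete codes, and the product in the second equation is the formal version of predicting each token given all previous ones.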

At generation time, the PixelCNN samples a new code image from scratch, and the VQ-VAE decoder converts it into a full-resolution detector image.
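
The generation step can be sketched as a sampling loop followed by a decode. Everything here is a toy stand-in under stated assumptions: `toy_conditional` replaces the trained PixelCNN (and looks only at the most recent code, whereas a real PixelCNN conditions on the 2-D context above and to the left), `PATCHES` replaces the VQ-VAE decoder, and the grid and vocabulary sizes are hypothetical.

```python
import random

VOCAB_SIZE = 3          # hypothetical codebook size
GRID_H, GRID_W = 4, 4   # hypothetical shape of the latent code image

# Hypothetical decoder: each code expands to a fixed 2x2 pixel patch.
PATCHES = {
    0: [[0, 0], [0, 0]],  # empty region
    1: [[1, 1], [0, 0]],  # horizontal track fragment
    2: [[1, 0], [1, 0]],  # vertical track fragment
}


def toy_conditional(previous):
    """Stand-in for the PixelCNN: probabilities for the next code."""
    if not previous:
        return [1.0 / VOCAB_SIZE] * VOCAB_SIZE
    probs = [0.15] * VOCAB_SIZE
    probs[previous[-1]] = 0.70  # favor repeating the most recent code
    return probs


def sample_codes(rng):
    """Sample codes one at a time in raster order, then reshape to a grid."""
    flat = []
    for _ in range(GRID_H * GRID_W):
        probs = toy_conditional(flat)
        flat.append(rng.choices(range(VOCAB_SIZE), weights=probs)[0])
    return [flat[r * GRID_W:(r + 1) * GRID_W] for r in range(GRID_H)]


def decode(code_grid):
    """Expand each code into its pixel patch to build the full image."""
    image = []
    for row in code_grid:
        for patch_row in range(2):
            line = []
            for code in row:
                line.extend(PATCHES[code][patch_row])
            image.append(line)
    return image


rng = random.Random(0)        # fixed seed so the sketch is repeatable
code_grid = sample_codes(rng)
image = decode(code_grid)
for line in image:
    print("".join("#" if p else "." for p in line))
```

The point of the structure is that the expensive sequential loop runs over the 4x4 code grid rather than the 8x8 pixel image; at realistic sizes that gap is what makes sampling in the compressed space tractable.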

Why go hybrid? GANs (Generative Adversarial Networks) produce samples by transforming random noise through a deep network. They’re fast at sampling and flexible, but notoriously hard to train, and they provide no explicit probability model of the data. A standard PixelCNN does provide one, but working directly on raw pixels it becomes too slow and memory-intensive for the large images LArTPC detectors produce. The hybrid approach keeps the strengths of both: a tractable autoregressive model working in the compressed code space, with a powerful decoder doing the heavy lifting of image reconstruction.

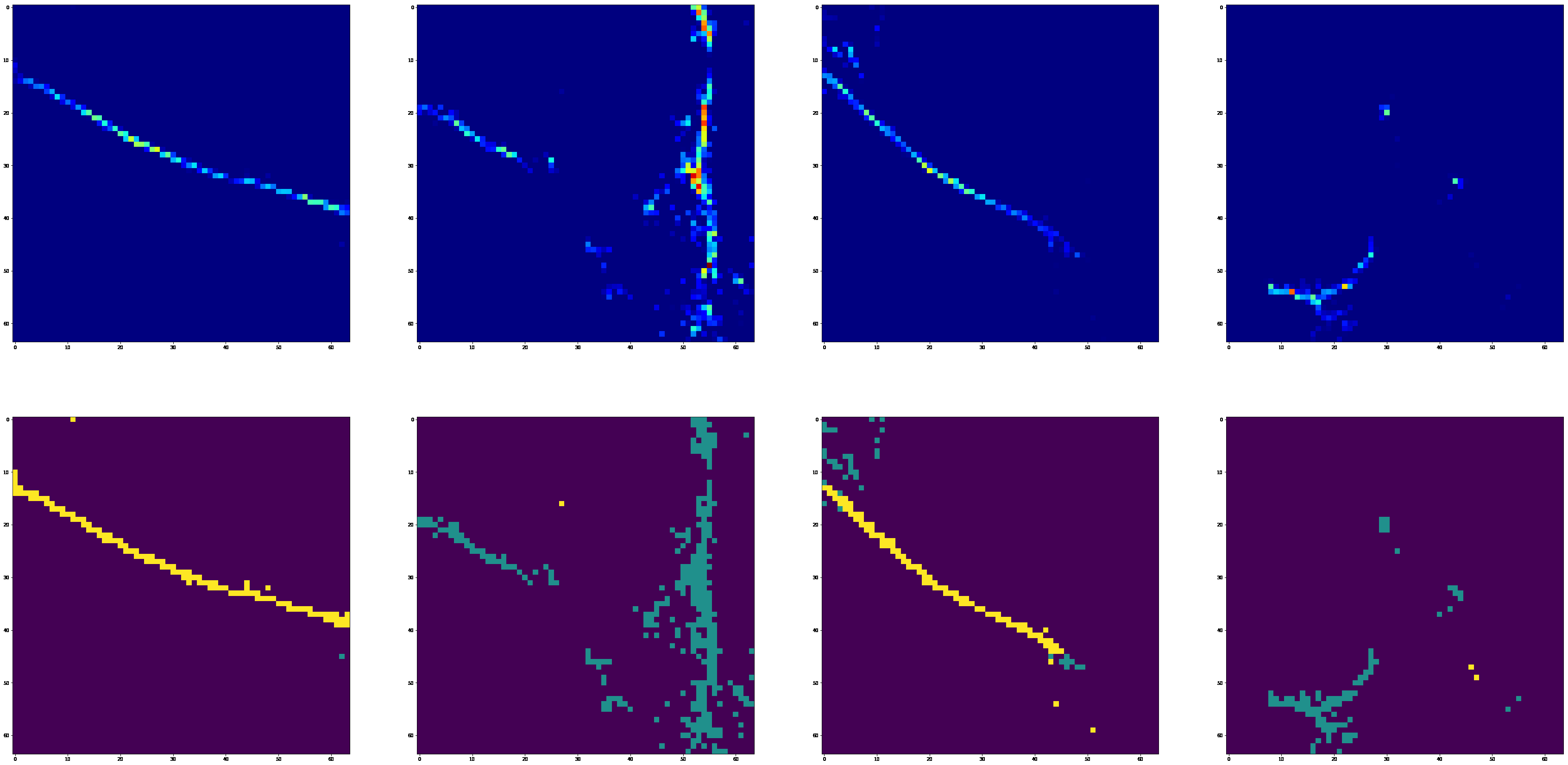

To check whether the generated images actually look like physics and not just visually plausible noise, the team ran a semantic segmentation network (trained to identify different types of particle trajectories) on both real and generated images and compared the resulting labels. This ties evaluation to physics-relevant structure rather than generic image statistics.
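
One simple way to compare segmentation labels across two image sets is to compare how often each label occurs. The sketch below is an illustration under assumptions, not the paper's metric: `toy_segment` is a hypothetical threshold rule standing in for the trained segmentation network, the label set is assumed, and total variation distance is just one reasonable choice of comparison.

```python
from collections import Counter

LABELS = ("background", "track", "shower")  # assumed label set


def toy_segment(image):
    """Stand-in for the segmentation network: label pixels by intensity."""
    def label(pixel):
        if pixel == 0:
            return "background"
        return "track" if pixel >= 5 else "shower"
    return [[label(p) for p in row] for row in image]


def label_fractions(images):
    """Fraction of all pixels assigned to each label across an image set."""
    counts = Counter()
    for image in images:
        for row in toy_segment(image):
            counts.update(row)
    total = sum(counts.values())
    return {name: counts[name] / total for name in LABELS}


def total_variation(p, q):
    """Total variation distance between two label distributions (0 = same)."""
    return 0.5 * sum(abs(p[k] - q[k]) for k in LABELS)


# Hypothetical 3x3 images: one "real", one "generated".
real_images = [[[0, 9, 0], [0, 9, 0], [2, 0, 0]]]
generated_images = [[[0, 9, 0], [0, 0, 0], [2, 2, 0]]]

real_fractions = label_fractions(real_images)
generated_fractions = label_fractions(generated_images)
distance = total_variation(real_fractions, generated_fractions)
print(real_fractions, generated_fractions, distance)
```

A distance near zero would indicate that the generated set contains track-like and shower-like pixels in roughly the same proportions as the real set; it says nothing about whether individual tracks are shaped correctly, so in practice it would be one check among several.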

Why It Matters

Neutrinos carry information about processes ranging from supernovae to the Big Bang, and their mysterious mass, which shouldn’t exist according to the original Standard Model, hints at physics beyond our current understanding. Experiments like MicroBooNE, SBND, and the upcoming DUNE are poised to produce petabytes of LArTPC data. The bottleneck isn’t collecting data. It’s having enough simulated data to train the reconstruction algorithms that turn raw images into physics measurements.

Generative models like the one described here could run orders of magnitude faster than traditional Monte Carlo simulations, which track particle interactions step by step, without sacrificing the physics content that makes the images useful.

The researchers also point to a more ambitious application: using generative models for inverse problems. Given a real detector image, you could search the model’s latent space (the compressed mathematical representation it learned) to find the particle parameters that best explain what was observed. That would turn the generator into a reconstruction tool, not just a data factory.
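
The inverse-problem idea can be made concrete with a toy search. This sketch assumes a tiny discrete latent space and a hypothetical lookup-table decoder, so exhaustive search is feasible; a realistic model would need a guided search or optimization instead, and the summary does not say which method the authors have in mind.

```python
from itertools import product

# Hypothetical decoder table: each code expands to a 2-pixel patch.
PATCHES = {0: (0, 0), 1: (1, 1), 2: (1, 0)}


def decode(codes):
    """Concatenate the patches for a sequence of codes into a 1-D image."""
    pixels = []
    for code in codes:
        pixels.extend(PATCHES[code])
    return pixels


def reconstruction_error(codes, observed):
    """Sum of squared pixel differences between decoded and observed image."""
    return sum((a - b) ** 2 for a, b in zip(decode(codes), observed))


def search_latent(observed, n_cells):
    """Try every code sequence and keep the one that best explains the data."""
    candidates = product(PATCHES, repeat=n_cells)
    return min(candidates, key=lambda codes: reconstruction_error(codes, observed))


# Hypothetical noisy observation of a 6-pixel image.
observed = [0.9, 1.1, 0.1, 0.0, 0.8, 0.2]
best_codes = search_latent(observed, n_cells=3)
print(best_codes, decode(best_codes))  # (1, 0, 2) [1, 1, 0, 0, 1, 0]
```

The recovered codes are the latent explanation of the observation. Linking them to particle parameters such as type or momentum would require a generator conditioned on those quantities, which this toy does not model.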

Bottom Line: A hybrid VQ-VAE + PixelCNN system can generate physically meaningful LArTPC detector images with the distinctive track and shower features of real neutrino interactions, opening a path toward AI-accelerated simulation and reconstruction in next-generation neutrino experiments.

IAIFI Research Highlights

This work connects deep generative modeling with the practical simulation needs of experimental neutrino physics, showing that modern AI architectures can capture detector-specific physical structure.

The paper introduces a physics-grounded evaluation framework, using semantic segmentation of physics features as a domain-specific alternative to generic generative model metrics. This offers a template for measuring generation quality in other scientific applications.

Viable AI-based image generation for LArTPC detectors expands the computational toolkit for neutrino experiments like DUNE, which aim to probe matter-antimatter asymmetry and the neutrino mass hierarchy.

Future work includes extending the approach to conditional generation for specific particle types and momenta. The paper is available as [arXiv:2204.02496](https://arxiv.org/abs/2204.02496), from the Tufts University groups of Wongjirad and Aeron.

Original Paper Details

Towards Designing and Exploiting Generative Networks for Neutrino Physics Experiments using Liquid Argon Time Projection Chambers

arXiv:2204.02496

Paul Lutkus, Taritree Wongjirad, Shuchin Aeron
