Towards Quantum Simulations in Particle Physics and Beyond on Noisy Intermediate-Scale Quantum Devices

Authors

Lena Funcke, Tobias Hartung, Karl Jansen, Stefan Kühn, Manuel Schneider, Paolo Stornati, Xiaoyang Wang

Abstract

We review two algorithmic advances that bring us closer to reliable quantum simulations of model systems in high energy physics and beyond on noisy intermediate-scale quantum (NISQ) devices. The first method is the dimensional expressivity analysis of quantum circuits, which allows for constructing minimal but maximally expressive quantum circuits. The second method is an efficient mitigation of readout errors on quantum devices. Both methods can lead to significant improvements in quantum simulations, e.g., when variational quantum eigensolvers are used.

Concepts

The Big Picture

Imagine trying to simulate a hurricane on a 1970s calculator. That’s roughly the situation physicists face when modeling quarks, gluons, and the strong force. Quantum chromodynamics, the theory governing these interactions, becomes mathematically intractable the moment you move beyond the simplest cases. Classical supercomputers choke on it.

The answers buried in those equations could explain one of physics’ deepest mysteries: why the universe contains any matter at all.

According to the Standard Model, matter and antimatter should have been created in equal amounts after the Big Bang and promptly annihilated each other. Yet here we are, made of matter. Something broke the symmetry. A necessary ingredient is CP violation, where particles and their antimatter counterparts don’t behave in exact mirror-image ways.

Understanding that “something” requires simulating real-time quantum processes that classical computers cannot handle. The obstacle is known as the sign problem: when complex oscillating phases appear in quantum field theory calculations, the statistical (Monte Carlo) sampling methods that normally tame these computations break down.

Quantum computers can sidestep the sign problem by simulating quantum systems with quantum hardware, bypassing those failing statistical methods. But today’s machines are noisy and error-prone, operating in what’s called the noisy intermediate-scale quantum (NISQ) era.

A team spanning MIT, DESY, the Cyprus Institute, and Peking University has developed two algorithmic advances that make NISQ-era quantum simulations noticeably more reliable: a systematic way to build smarter quantum circuits, and a method to correct for the errors those circuits inevitably produce.

Key Insight: By combining a new technique for designing minimal yet maximally expressive quantum circuits with an efficient readout-error correction scheme, this team has made quantum simulations of particle physics measurably more reliable on today’s noisy hardware.

How It Works

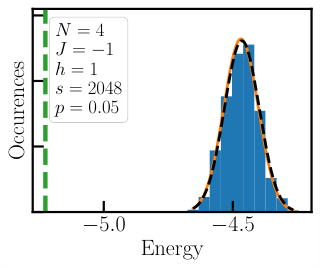

The workhorse for NISQ simulations is the variational quantum eigensolver (VQE), a hybrid algorithm that splits computational labor between quantum and classical computers. The quantum device evaluates a cost function measuring how close the current state is to the target. A classical optimizer then adjusts circuit parameters to minimize it. Think of it as teaching a quantum computer to tune itself: the circuit is a machine with knobs, and the classical computer keeps turning them until the lowest-energy state emerges.
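The loop described above can be sketched in a few lines. This is a minimal illustration, assuming a toy single-qubit Hamiltonian and a one-parameter ansatz that are not taken from the paper. The state is simulated exactly with NumPy rather than measured on hardware, and the names `ansatz_state`, `energy`, and `vqe` are hypothetical.

```python
import numpy as np

# Toy Hamiltonian (an assumption for illustration, not from the paper).
X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
H = Z + 0.5 * X

def ansatz_state(theta):
    """One-knob circuit: Ry(theta)|0> = (cos(theta/2), sin(theta/2))."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)], dtype=complex)

def energy(theta):
    """Cost function <psi|H|psi>. On a device this would be estimated from shots."""
    psi = ansatz_state(theta)
    return float(np.real(np.vdot(psi, H @ psi)))

def vqe(theta0=0.1, lr=0.4, steps=200):
    """Classical outer loop: gradient descent using the parameter-shift rule."""
    theta = theta0
    for _ in range(steps):
        # For a rotation exp(-i*theta*Y/2) the exact gradient is a difference
        # of two cost evaluations at theta +/- pi/2.
        grad = 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))
        theta -= lr * grad
    return theta, energy(theta)

theta_opt, e_opt = vqe()
e_exact = np.linalg.eigvalsh(H)[0]  # reference value from exact diagonalization
print(f"VQE energy: {e_opt:.6f}, exact ground energy: {e_exact:.6f}")
```

Plain gradient descent is used here only to keep the sketch short; any classical optimizer could play the same role.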

Two problems lurk beneath the surface. First, how do you design the circuit itself? Before this work, circuit design was largely guesswork, borrowed from other contexts or built from generic templates (ansätze), with no principled way to know whether a given circuit could even represent the target quantum state. Second, every measurement is contaminated by readout errors: the hardware sometimes misreports a qubit’s state, recording a 0 as a 1 or vice versa, often enough to bias the results.

The team’s first advance attacks circuit design with dimensional expressivity analysis. The idea is geometric: the set of all quantum states reachable by a parameterized circuit forms a mathematical surface. If some parameters can be changed without affecting the output state, the circuit is wasting resources.

Expressivity analysis detects these redundancies by examining the Jacobian of the circuit’s output, which measures how sensitively each output changes when each parameter is nudged. Redundant parameters get removed. What remains is a circuit that is simultaneously minimal (no unnecessary gates) and maximally expressive (it reaches every quantum state its architecture allows).
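One way to picture the Jacobian test is the sketch below. It assumes a toy single-qubit circuit with a deliberately duplicated rotation, a finite-difference Jacobian, and a simple sequential rank check. The circuit and the helper names (`circuit_state`, `real_jacobian`, `split_parameters`) are hypothetical and are not the authors' implementation.

```python
import numpy as np

def ry(t):
    c, s = np.cos(t / 2), np.sin(t / 2)
    return np.array([[c, -s], [s, c]], dtype=complex)

def rz(t):
    return np.array([[np.exp(-1j * t / 2), 0], [0, np.exp(1j * t / 2)]])

def circuit_state(theta):
    """Toy circuit Rz(theta[2]) Ry(theta[1]) Ry(theta[0]) |0>.
    The two Ry gates merge into one, so one of their parameters is redundant."""
    zero = np.array([1, 0], dtype=complex)
    return rz(theta[2]) @ ry(theta[1]) @ ry(theta[0]) @ zero

def real_jacobian(state_fn, theta, h=1e-6):
    """Central-difference Jacobian of the state, real and imaginary parts stacked."""
    theta = np.asarray(theta, dtype=float)
    cols = []
    for k in range(theta.size):
        step = np.zeros_like(theta)
        step[k] = h
        d = (state_fn(theta + step) - state_fn(theta - step)) / (2 * h)
        cols.append(np.concatenate([d.real, d.imag]))
    return np.column_stack(cols)

def split_parameters(state_fn, theta, tol=1e-6):
    """Keep a parameter only if its Jacobian column raises the rank."""
    jac = real_jacobian(state_fn, theta)
    independent, redundant = [], []
    for k in range(jac.shape[1]):
        trial = independent + [k]
        if np.linalg.matrix_rank(jac[:, trial], tol=tol) == len(trial):
            independent.append(k)
        else:
            redundant.append(k)
    return independent, redundant

independent, redundant = split_parameters(circuit_state, [0.3, 0.7, 1.1])
print("independent:", independent, "redundant:", redundant)
```

The explicit rank tolerance matters because finite differences leave small numerical noise in the Jacobian. The rank is evaluated at a single parameter point here, which is enough for this toy case.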

Fewer parameters generally mean fewer gates and a shallower circuit, which leaves less time for decoherence, the tendency of quantum states to degrade from environmental noise. Applied to gauge theories relevant to particle physics, including formulations of quantum electrodynamics in lower dimensions, this approach reduced circuit depth without sacrificing computational power.

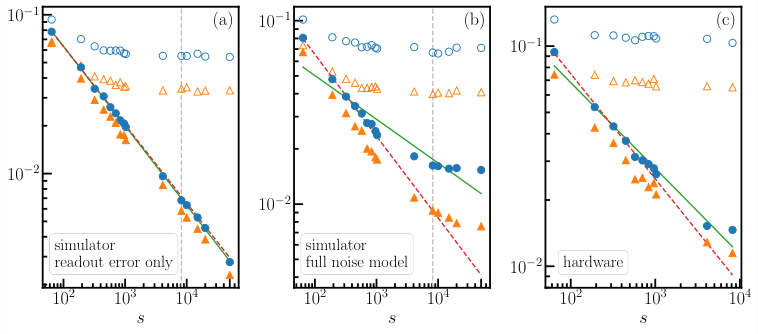

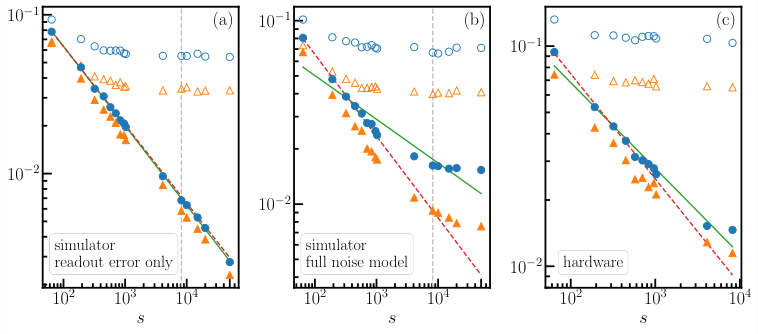

The second advance targets readout errors. Readout error mitigation works by characterizing a device’s error pattern: you build a map of how often hardware confuses each possible measurement outcome with another. This requires measuring the device on known states, then inverting the resulting error matrix to correct future measurements.

The computational catch: for n qubits, there are 2ⁿ possible outcomes, making the full error matrix exponentially large. The team’s solution exploits structure in real hardware. Readout errors on different qubits are often approximately independent, meaning each qubit’s confusion between 0 and 1 doesn’t strongly correlate with its neighbors’. By measuring this independence and grouping correlated qubits, they build an approximate error model that is tractable to invert and accurate enough to yield real improvements.
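In the idealized limit where readout errors are fully independent across qubits, the full error matrix factorizes into single-qubit pieces. The notation below is illustrative and not taken from the paper:

```latex
M = \bigotimes_{q=1}^{n} M_q,
\qquad
M_q = \begin{pmatrix} 1 - p_q(1|0) & p_q(0|1) \\ p_q(1|0) & 1 - p_q(0|1) \end{pmatrix},
\qquad
M^{-1} = \bigotimes_{q=1}^{n} M_q^{-1}.
```

Here $p_q(1|0)$ is the probability that qubit $q$ reads 1 when it was prepared in 0, and $p_q(0|1)$ is the reverse. Under this assumption the model is fixed by $2n$ measured numbers, and inverting it takes $n$ inversions of $2 \times 2$ matrices instead of one inversion of a $2^n \times 2^n$ matrix.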

The correction pipeline has four steps:

- Characterize: Measure the device on single-qubit basis states to extract per-qubit error rates

- Group: Identify qubits whose errors are correlated and handle them jointly

- Invert: Construct an approximate inverse of the error matrix for each group

- Correct: Apply this inverse to raw measurement outcomes to recover cleaner probability distributions
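The Invert and Correct steps can be sketched as follows, under the simplifying assumption that every qubit forms its own group (no correlated errors). The per-qubit error rates are made-up numbers standing in for the output of the Characterize step, and the function names are hypothetical.

```python
import numpy as np

def confusion_matrix(p01, p10):
    """Columns: prepared state, rows: observed outcome.
    p01 = P(read 1 | prepared 0), p10 = P(read 0 | prepared 1)."""
    return np.array([[1 - p01, p10], [p01, 1 - p10]])

def apply_readout_noise(true_probs, error_rates):
    """Simulate a noisy device by applying the tensor-product error matrix."""
    full = np.array([[1.0]])
    for p01, p10 in error_rates:
        full = np.kron(full, confusion_matrix(p01, p10))
    return full @ np.asarray(true_probs, dtype=float)

def mitigate(noisy_probs, error_rates):
    """Apply each qubit's 2x2 inverse along its own axis.
    The full 2^n x 2^n error matrix is never built or inverted."""
    n = len(error_rates)
    probs = np.asarray(noisy_probs, dtype=float).reshape([2] * n)
    for q, (p01, p10) in enumerate(error_rates):
        inv = np.linalg.inv(confusion_matrix(p01, p10))
        # Contract the inverse with axis q, then move the new axis back to slot q.
        probs = np.moveaxis(np.tensordot(inv, probs, axes=([1], [q])), 0, q)
    return probs.reshape(-1)

# Hypothetical calibration results for two qubits: (p01, p10) per qubit.
error_rates = [(0.02, 0.05), (0.03, 0.08)]
true_probs = np.array([0.5, 0.0, 0.0, 0.5])  # outcomes ordered 00, 01, 10, 11

noisy = apply_readout_noise(true_probs, error_rates)
mitigated = mitigate(noisy, error_rates)
print("noisy:    ", np.round(noisy, 4))
print("mitigated:", np.round(mitigated, 4))
```

With finite measurement statistics the corrected vector can contain small negative entries, so a real pipeline would need some way to handle them. Correlated qubits would be treated with a larger joint matrix per group instead of a 2×2 one.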

Combining leaner circuits with cleaner measurements produces results much closer to exact solutions. The team validated this against classical tensor network simulations, a well-established technique that provides ground truth for small quantum systems.

Why It Matters

Particle physics is the motivating application, but these techniques are general tools. Any VQE application, whether in quantum chemistry, condensed matter physics, or quantum optimization, benefits from circuits with no redundant parameters and measurements corrected for readout errors. The readout mitigation scheme is hardware-agnostic and applies to any gate-based quantum computer regardless of the underlying technology.

Simulating full quantum chromodynamics in 3+1 dimensions remains far beyond current hardware. But algorithmic improvements accumulate. Better circuit design, better error mitigation, better classical validation: the community is building infrastructure that will eventually make such simulations possible. These are concrete, benchmarked gains on real devices, not aspirational promises.

Bottom Line: Dimensional expressivity analysis and structured readout error mitigation measurably improve quantum simulations on today’s noisy hardware, moving particle physics phenomena one step closer to quantum tractability.

IAIFI Research Highlights

This work sits at the intersection of quantum information theory and high-energy physics, applying differential geometry tools to the practical challenge of simulating gauge field theories on quantum hardware.

Dimensional expressivity analysis provides a principled, general framework for optimizing parameterized quantum circuits, with applications in quantum machine learning and variational algorithms beyond physics.

More reliable quantum simulations of gauge theories open a computational path toward studying CP violation and matter-antimatter asymmetry in regimes inaccessible to classical Monte Carlo methods.

Future directions include scaling these techniques to larger systems and 3+1D gauge theories; full details appear in the preprint [arXiv:2110.03809](https://arxiv.org/abs/2110.03809) (MIT-CTP/5325).

Original Paper Details

Towards Quantum Simulations in Particle Physics and Beyond on Noisy Intermediate-Scale Quantum Devices

2110.03809

Lena Funcke, Tobias Hartung, Karl Jansen, Stefan Kühn, Manuel Schneider, Paolo Stornati, Xiaoyang Wang