Turbulence teaches equivariance to neural networks

Authors

Ryley McConkey, Julia Balla, Jeremiah Bailey, Ali Backour, Elyssa Hofgard, Tommi Jaakkola, Abigail Bodner, Tess Smidt

Abstract

We investigate how the rotational nature of turbulence affects learned mappings between quantities governed by the Navier-Stokes equations. By varying the degree of anisotropy in a turbulence dataset, we explore how statistical symmetry affects these mappings. To do this, we train super-resolution models at different wall-normal locations in a channel flow, where anisotropy varies naturally, and test their generalization. By evaluating the learned mappings on new coordinate frames and new flow conditions, we find that coordinate-frame generalization is a key part of the generalization problem. Turbulent flows naturally present a wide range of local orientations, so respecting the symmetries of the Navier-Stokes equations improves generalization to new flows. Importantly, turbulence's rotational structure can embed these symmetries into learned mappings -- an effect that strengthens with isotropy and dataset size. This is because a more isotropic dataset samples a wider range of orientations, more fully covering the rotational symmetries of the Navier-Stokes equations. The dependence on isotropy means equivariance error is also scale-dependent, consistent with Kolmogorov's hypothesis. Therefore, turbulence provides its own data augmentation (we term this implicit data augmentation). We expect this effect to apply broadly to learned mappings between tensorial flow quantities, making it relevant to most machine learning applications in turbulence.

Concepts

The Big Picture

Imagine teaching a child to recognize a chair. You show them chairs from many angles (facing left, facing right, tilted, rotated) until they understand that “chairness” doesn’t depend on orientation. Now imagine the training data automatically provided all those angles, without any effort on your part. That’s what turbulence does for AI models trying to learn fluid physics.

When engineers simulate turbulent flows (the chaotic motion in jet engines, ocean currents, or the atmosphere), they face an uncomfortable truth: high-fidelity simulations are extremely expensive. Machine learning offers a shortcut, but with a catch. A model trained on fluid data in one orientation may fail completely when the same flow is rotated. Physics doesn’t care which way you’re looking; AI models traditionally do.

A team of MIT researchers showed that turbulence itself solves this problem, and the more chaotic and uniformly mixed the flow, the better it works.

Key Insight: Turbulence’s natural rotational structure implicitly teaches neural networks to respect the symmetries of the Navier-Stokes equations, reducing the need for hand-crafted data augmentation strategies that have long been an engineering burden in physics-based machine learning.

How It Works

The central concept is equivariance, a property where a model’s output rotates consistently when its input is rotated. An equivariant model f satisfies f(g·x) = g·f(x), where g is a rotation. The Navier-Stokes equations have this property: rotate the flow, and the physics rotates with it. A model that lacks equivariance is fundamentally inconsistent with the underlying physics.
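For a velocity field, the action of a rotation has two parts: it moves the sample points and it rotates the vector components. One common way to write this is sketched below. The symbols here are notation introduced for illustration, not taken from the paper: $\mathbf{u}$ is the input velocity field, $\mathbf{r}$ a position, and $R$ the rotation matrix associated with $g$.

```latex
(g \cdot \mathbf{u})(\mathbf{r}) = R\,\mathbf{u}\!\left(R^{-1}\mathbf{r}\right),
\qquad
f(g \cdot \mathbf{u}) = g \cdot f(\mathbf{u}) \quad \text{for every rotation } g .
```

Under this convention, rotating only the grid or only the components is not a valid rotation of the field; both must change together.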

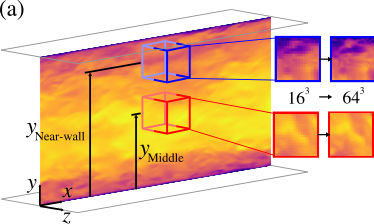

The researchers tested their ideas on super-resolution: reconstructing a high-resolution turbulent velocity field from a coarse input. This is practically important because expensive simulations can be run at lower resolution and then “upsampled,” saving significant compute. Standard convolutional neural networks make no guarantees about rotational equivariance.

Their setup exploited a natural feature of turbulent channel flow. Using the Johns Hopkins turbulent channel flow database at friction Reynolds number Re_τ = 1000, they focused on how anisotropy (the degree to which a flow has a preferred direction, rather than behaving the same in all directions) varies with distance from the wall:

- Near-wall region: Flow is highly anisotropic. Structures are stretched and flattened by the wall, sampling only a narrow range of orientations.

- Channel center: Flow is far less anisotropic. Structures spin more freely, sampling a much wider range of orientations.

By training models at these two locations separately, the team could study how the statistical symmetry of training data shapes what a model learns, without changing the architecture at all.
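The article does not say which anisotropy measure the authors used. One standard choice in turbulence modelling is the Reynolds-stress anisotropy tensor, b_ij = ⟨u_i′u_j′⟩ / (2k) − δ_ij / 3, which vanishes for isotropic turbulence. The sketch below computes it on synthetic Gaussian samples (an assumption made for illustration, not channel-flow data); the function name `anisotropy_tensor` is hypothetical.

```python
import numpy as np

def anisotropy_tensor(u):
    """Reynolds-stress anisotropy tensor from velocity samples of shape (n, 3)."""
    fluct = u - u.mean(axis=0)               # velocity fluctuations u'
    stress = fluct.T @ fluct / len(fluct)    # Reynolds stress <u_i' u_j'>
    twice_k = np.trace(stress)               # 2k, twice the turbulent kinetic energy
    return stress / twice_k - np.eye(3) / 3.0

rng = np.random.default_rng(0)
n = 200_000

# Synthetic stand-ins: equal variance in all directions vs. strongly stretched.
isotropic = rng.standard_normal((n, 3))
stretched = rng.standard_normal((n, 3)) * np.array([3.0, 1.0, 0.3])

b_iso = anisotropy_tensor(isotropic)
b_aniso = anisotropy_tensor(stretched)

# The Frobenius norm of b is one scalar summary of how anisotropic the samples are.
print("isotropic :", np.linalg.norm(b_iso))
print("stretched :", np.linalg.norm(b_aniso))
```

A value near zero indicates that no direction is preferred, which is the regime the article associates with the channel center.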

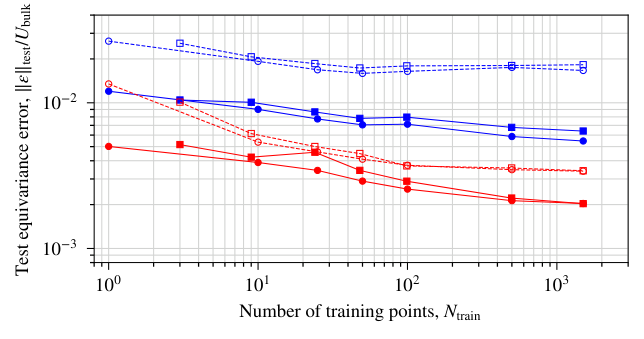

The results were clear. They measured each model’s equivariance error: how far the output for a rotated input departs from the rotated output for the original input. Then they tested generalization across three increasingly difficult scenarios: extrapolating in time, applying the model at a different wall-normal location with different anisotropy, and jumping to a higher Reynolds number (Re_τ = 5200). One pattern held across every configuration: lower equivariance error predicted better generalization, regardless of the task.
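A minimal sketch of how such an equivariance error could be computed, assuming a 2D vector field on a square periodic grid and restricting to 90-degree rotations so that no interpolation is needed. The paper's exact metric and rotation set are not given here; the relative L2 norm, the function names, and the two stand-in "models" are all assumptions made for illustration.

```python
import numpy as np

def rotate_vector_field(u, k=1):
    """Rotate a 2D vector field of shape (2, N, N) by k * 90 degrees.

    Assumed layout: u[0] and u[1] are the components along array axes 1 and 2.
    """
    out = np.asarray(u, dtype=float)
    for _ in range(k % 4):
        moved = np.rot90(out, k=1, axes=(1, 2))  # move the sample points
        out = np.stack([-moved[1], moved[0]])    # rotate the components
    return out

def equivariance_error(model, x, k=1, eps=1e-12):
    """Relative L2 mismatch between model(g.x) and g.model(x)."""
    rotate_then_predict = model(rotate_vector_field(x, k))
    predict_then_rotate = rotate_vector_field(model(x), k)
    diff = np.linalg.norm(rotate_then_predict - predict_then_rotate)
    return diff / (np.linalg.norm(predict_then_rotate) + eps)

# Two hypothetical stand-in "models" on a periodic grid.
def smooth(u):
    """Isotropic 5-point smoothing: treats both grid axes identically."""
    neighbours = sum(np.roll(u, s, axis=a) for a in (1, 2) for s in (-1, 1))
    return 0.5 * u + 0.125 * neighbours

def shift_along_axis(u):
    """Shifts the field along one grid axis, singling out a direction."""
    return np.roll(u, 1, axis=1)

rng = np.random.default_rng(0)
field = rng.standard_normal((2, 16, 16))
for name, model in [("smooth", smooth), ("shift", shift_along_axis)]:
    errors = [equivariance_error(model, field, k) for k in (1, 2, 3)]
    print(name, max(errors))
```

The smoothing operator commutes with the rotations, so its error sits at floating-point round-off, while the directional shift does not. Because the check needs only inputs and model outputs, it can be run on unlabeled fields; the same function applies to a super-resolution model whose output grid is finer than its input, provided both grids are square.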

Implicit equivariance also strengthens with both isotropy and dataset size. A larger, more isotropic dataset samples more orientations, more completely covering the rotational symmetries of the Navier-Stokes equations. The team calls this implicit data augmentation: turbulence providing, for free, what engineers usually have to build by hand. Equivariance error is also scale-dependent, consistent with Kolmogorov’s hypothesis that small-scale turbulent structures become increasingly direction-independent: smaller structures exhibit lower error than the large eddies that carry most of the flow’s kinetic energy.

Why It Matters

Equivariance is known to make models more physically consistent and more sample-efficient, but achieving it has required either specialized architectures built from group-theoretic principles, or explicit data augmentation (randomly rotating training pairs, which adds cost and complexity). Both demand deliberate engineering.
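For comparison, explicit augmentation of a super-resolution training pair might look like the sketch below. It assumes 2D vector fields, 90-degree rotations only, and a coarse input produced by block averaging; none of these choices is stated in the article, and `coarsen` and `augment_pair` are hypothetical helpers.

```python
import numpy as np

def rotate_vector_field(u, k=1):
    """Rotate a 2D vector field of shape (2, N, N) by k * 90 degrees."""
    out = np.asarray(u, dtype=float)
    for _ in range(k % 4):
        moved = np.rot90(out, k=1, axes=(1, 2))  # move the sample points
        out = np.stack([-moved[1], moved[0]])    # rotate the components
    return out

def coarsen(fine, factor=2):
    """Hypothetical coarse input: block-average each component."""
    c, n, _ = fine.shape
    m = n // factor
    return fine.reshape(c, m, factor, m, factor).mean(axis=(2, 4))

def augment_pair(coarse, fine, rng):
    """Apply the same random 90-degree rotation to input and target."""
    k = int(rng.integers(0, 4))
    return rotate_vector_field(coarse, k), rotate_vector_field(fine, k)

rng = np.random.default_rng(0)
fine = rng.standard_normal((2, 8, 8))
coarse = coarsen(fine)

aug_coarse, aug_fine = augment_pair(coarse, fine, rng)
print(aug_coarse.shape, aug_fine.shape)
```

The input and target must receive the same rotation, otherwise the pair no longer describes one flow. Arbitrary rotation angles would additionally require resampling the fields onto the grid, which is part of the cost and complexity the paragraph above refers to.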

In turbulence, the data itself does much of this work. The implication extends well beyond super-resolution. The same effect should apply to any learned mapping between tensorial flow quantities: turbulence closure models for Reynolds-averaged Navier-Stokes (RANS) simulations, subgrid-scale models for large eddy simulation, and reduced-order models for dynamics. Wherever rotating eddies appear in training data, implicit augmentation is at work.

Measuring equivariance error also gives practitioners a cheap, physics-motivated proxy for generalization. It’s a way to stress-test a model before deploying it on a new Reynolds number or flow geometry, without needing labeled data from the target domain.

Bottom Line: Turbulence doesn’t just challenge AI models; it trains them. By naturally presenting rotating structures across a wide range of orientations, isotropic turbulence implicitly teaches neural networks the rotational symmetries of the Navier-Stokes equations, and the resulting equivariance error proved a reliable predictor of out-of-distribution generalization.

IAIFI Research Highlights

This work connects Kolmogorov's classical turbulence theory with modern machine learning, showing that the statistical isotropy of turbulent flows governs how well neural networks learn physical symmetries.

The discovery of implicit data augmentation provides a physics-grounded explanation for why models trained on turbulence can generalize across coordinate frames, and establishes equivariance error as a practical, architecture-agnostic diagnostic for out-of-distribution robustness.

By demonstrating that the distributional symmetry of turbulent flows matches the covariance of the Navier-Stokes equations, this work yields new physical insight into the relationship between statistical isotropy and the symmetry structure of governing equations in fluid mechanics.

Future work could extend these findings to fully three-dimensional turbulence, broader symmetry groups, and other physics domains where governing equations possess known symmetries. The preprint is available at [arXiv:2602.04695](https://arxiv.org/abs/2602.04695) (McConkey et al., under consideration at *Journal of Fluid Mechanics*).