Universality of Neural Network Field Theory

Authors

Christian Ferko, James Halverson, Aaron Mutchler

Abstract

We prove that any quantum field theory, or more generally any probability distribution over tempered distributions in $\mathbb{R}^d$, admits a neural network description with a countable infinity of parameters. As an example, we realize the $2d$ Liouville theory as a neural network and numerically compute the three-point function of vertex operators, finding agreement with the DOZZ formula.

Concepts

The Big Picture

Imagine trying to describe every symphony ever composed or conceivable — not just those written so far, but every combination of notes that could ever exist. Now imagine a single mathematical framework could capture all of them. That’s the ambition behind a new result from IAIFI researchers at Northeastern University: proving that neural networks can represent any quantum field theory in existence.

Quantum field theory (QFT) is physics’ most powerful language. It underlies the Standard Model of particle physics, explains phase transitions like water freezing into ice, and appears in approaches to quantum gravity. Neural networks have recently emerged as a surprising tool for defining and simulating these theories, but until now, researchers only knew this worked for specific classes of QFTs or in simplified one-dimensional settings.

Christian Ferko, James Halverson, and Aaron Mutchler have now proven something much broader: every QFT, without exception, can be represented by a neural network, even one requiring infinitely many parameters. They then put the theorem to work, simulating a notoriously difficult theory called Liouville theory and recovering a famous exact formula to within a few percent.

Key Insight: Neural networks are not just useful approximators for some quantum field theories. They are a universal language for all of them, proven using measure theory (the mathematics of probability and integration) and functional analysis (the study of infinite-dimensional spaces).

How It Works

Earlier work on neural network quantum mechanics succeeded in one dimension, describing quantum particles evolving through time. The trick was representing a random path as an infinite sum:

x(t) = ⟨x(t)⟩ + θ₁f₁(t) + θ₂f₂(t) + …

where the θₖ are random parameters drawn from a probability distribution and the fₖ(t) are fixed basis functions. Draw the parameters, add up the terms, get a physically realistic quantum trajectory.

Moving to higher dimensions is qualitatively harder. In one dimension, quantum paths are rough but still function-like. In two or more spacetime dimensions, field configurations become Schwartz distributions, generalized objects like the Dirac delta function (an infinite spike at a single point with finite total area) that can’t be evaluated pointwise but can be integrated against test functions. This is what makes higher-dimensional QFTs so hard.

The proof handles this in three steps:

- Define a generalized quantum system (GQS): A precise framework for any random process whose outputs live in a well-behaved infinite-dimensional space.

- Apply the Borel isomorphism theorem: A classical result guaranteeing that any two such infinite-dimensional spaces can be mapped onto each other without losing their essential structure, allowing the randomness of any quantum field to be rerouted through a neural network’s parameter space.

- Construct the neural network: Show that any probability measure on the space of Schwartz distributions S′(ℝᵈ) can be realized by a network with countably many parameters, and that a single parameter drawn from the uniform distribution on [0,1] formally suffices.

Testing the theorem: Liouville theory. The authors chose 2D Liouville theory as their benchmark, a strongly interacting conformal field theory (a QFT that looks the same at every scale, like a fractal) defined on a sphere. Liouville theory appears in string theory, random geometry, and 2D quantum gravity, and it’s one of the rare QFTs where an exact analytic answer exists for certain observables.

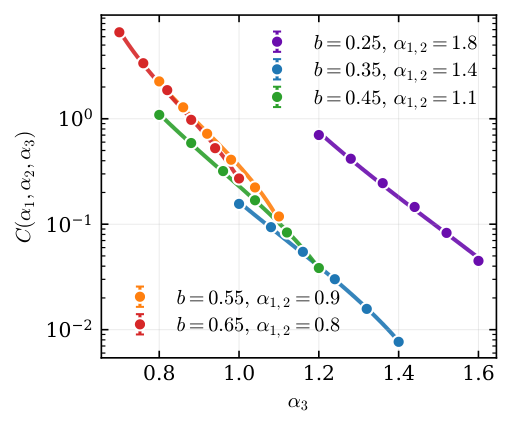

That answer is the DOZZ formula (named after Dorn, Otto, Zamolodchikov, and Zamolodchikov), which gives the three-point function, a number capturing how three field measurements at different locations are statistically correlated. Computing this numerically is a serious challenge.

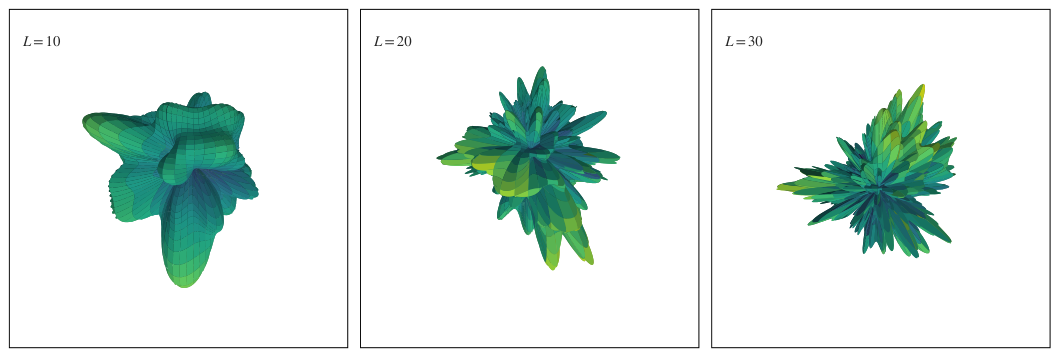

The team built a neural network representation of the Liouville field using spherical harmonics, wave-like building blocks on a sphere, analogous to how any sound can be decomposed into pure tones. They retained finitely many modes to make computation feasible, drew samples from the parameter distribution, computed the three-point function numerically, and compared results to the DOZZ formula.

The agreement is within a few percent. The residual error comes from truncating the infinite basis at finite order; it shrinks as more modes are included. The theorem produces working simulations, not just abstract mathematics.

Why It Matters

This result changes how we think about the relationship between neural networks and physics. The NN-FT correspondence was once a conjecture, then a result for special cases, and now a theorem of full generality. Every QFT lives inside the space of neural network models: free or interacting, conformal or not, in any number of dimensions.

For physicists, this amounts to a new computational strategy. Strongly coupled CFTs, theories on curved spacetimes, models with complex field configurations: these are all notoriously hard to simulate by traditional methods, and NN-based sampling grounded in the universality theorem could provide a way in.

The inverse question is just as interesting for machine learning researchers: what does it mean that the space of models a neural network can represent is rich enough to contain all of physics?

There’s also an unexpected connection to pure mathematics. The Borel isomorphism theorem, a result from descriptive set theory (the branch of mathematics that classifies infinite-dimensional spaces), is doing load-bearing work in the proof. Abstract classification theorems, it turns out, have direct use in building practical field theory simulators.

Bottom Line: Ferko, Halverson, and Mutchler have proven that neural networks are a universal representation for all quantum field theories, with immediate implications for simulating strongly coupled theories and for understanding the expressive power of neural architectures.

IAIFI Research Highlights

This work brings together rigorous measure theory (specifically the Borel isomorphism theorem) and neural network architecture design to prove a foundational theorem connecting AI and theoretical physics.

The universality result means neural networks are expressively complete for all of physics. What the full representational capacity of neural architectures implies for AI research and model design remains an open and compelling question.

Neural network simulations of strongly coupled theories like Liouville theory are now grounded in a general theorem, opening new approaches to quantum gravity, string theory, and other regimes where traditional methods break down.

Future work will focus on improving the efficiency of finite-mode approximations and extending NN-FT methods to other strongly coupled theories. The full paper by Ferko, Halverson, and Mutchler is available at [arXiv:2601.14453](https://arxiv.org/abs/2601.14453).