Variational diffusion transformers for conditional sampling of supernovae spectra

Authors

Yunyi Shen, Alexander T. Gagliano

Abstract

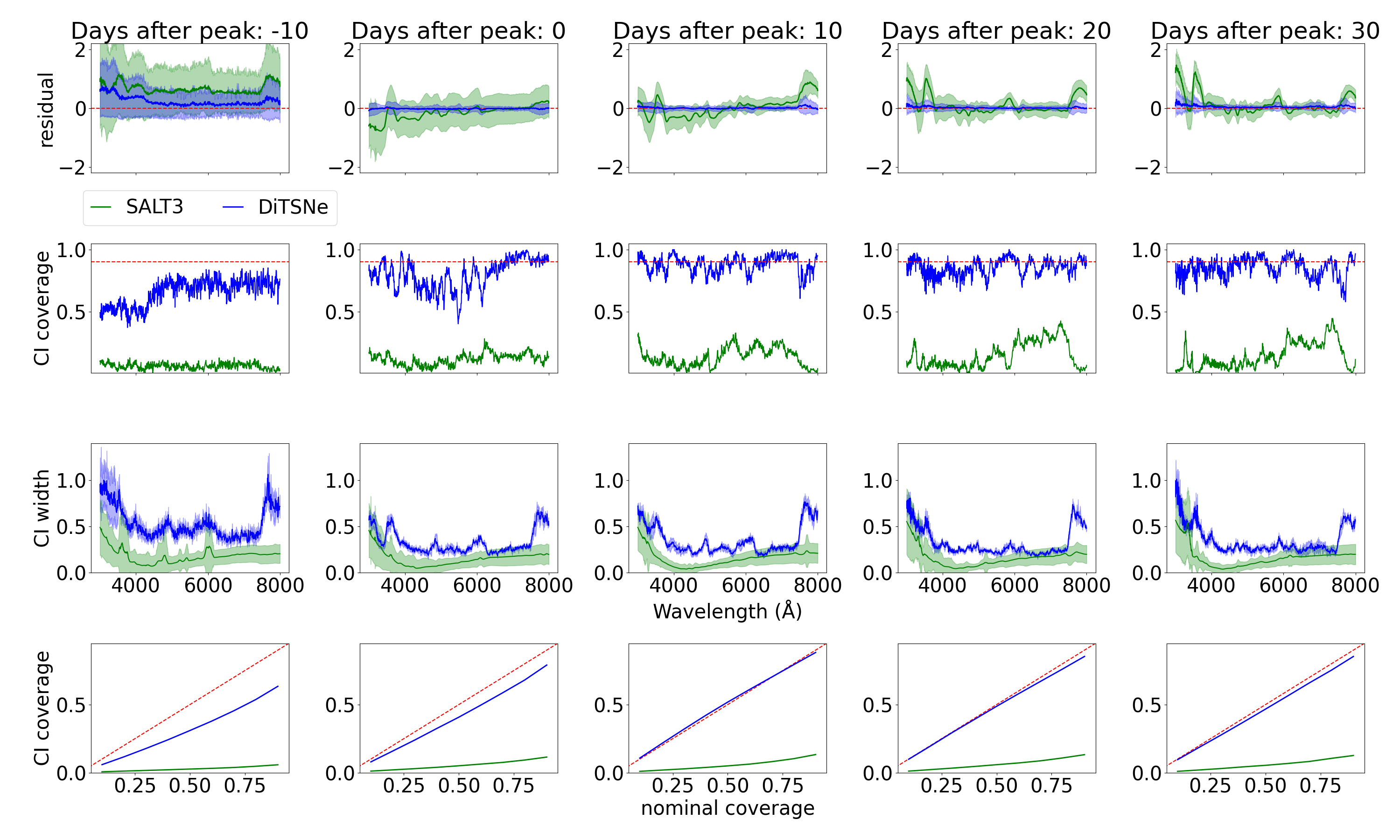

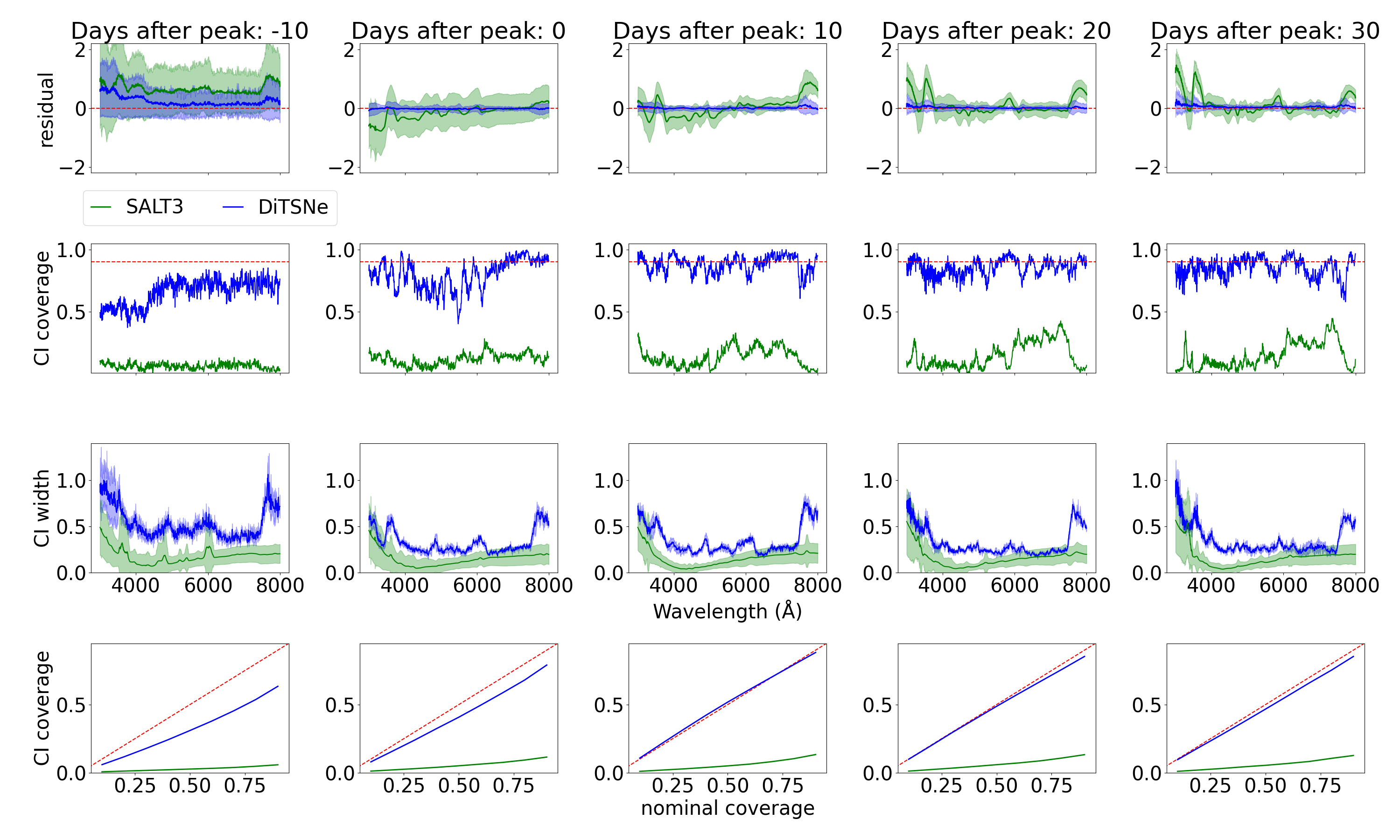

Type Ia Supernovae (SNe Ia) have become the most precise distance indicators in astrophysics due to their incredible observational homogeneity. Increasing discovery rates, however, have revealed multiple sub-populations with spectroscopic properties that are both diverse and difficult to interpret using existing physical models. These peculiar events are hard to identify from sparsely sampled observations and can introduce systematics in cosmological analyses if not flagged early; they are also of broader importance for building a cohesive understanding of thermonuclear explosions. In this work, we introduce DiTSNe-Ia, a variational diffusion-based generative model conditioned on light curve observations and trained to reproduce the observed spectral diversity of SNe Ia. In experiments with realistic light curves and spectra from radiative transfer simulations, DiTSNe-Ia achieves significantly more accurate reconstructions than the widely used SALT3 templates across a broad range of observation phases (from 10 days before peak light to 30 days after it). DiTSNe-Ia yields a mean squared error of 0.108 across all phases-five times lower than SALT3's 0.508-and an after-peak error of just 0.0191, an order of magnitude smaller than SALT3's 0.305. Additionally, our model produces well-calibrated credible intervals with near-nominal coverage, particularly at post-peak phases. DiTSNe-Ia is a powerful tool for rapidly inferring the spectral properties of SNe Ia and other transient astrophysical phenomena for which a physical description does not yet exist.

Concepts

The Big Picture

Imagine you’re a detective, but instead of witnesses, all you have is a shadow. That’s roughly the situation astronomers face with supernovae. When a white dwarf (a burned-out stellar remnant) detonates in a thermonuclear fireball, it produces two kinds of measurements: a light curve (how the explosion brightens and fades over time) and a spectrum (a rainbow-like snapshot showing the precise chemical fingerprint at a single moment). Light curves are easy to collect. Spectra, which carry the real physical insight, require expensive telescope time and usually arrive too late, if at all.

The problem is about to get dramatically worse. The Vera C. Rubin Observatory, now beginning its decade-long Legacy Survey of Space and Time (LSST), will discover roughly one million supernovae per year. Spectroscopic follow-up can cover at most a tenth of a percent of those events. Without a way to infer spectra from light curves alone, astronomers risk drowning in explosions they can’t decipher, including a menagerie of “peculiar” subtypes that can corrupt the distance measurements we use to study dark energy.

Yunyi Shen and Alexander Gagliano at MIT and IAIFI have built a model called DiTSNe-Ia that predicts full supernova spectra directly from light curve data, using a generative AI architecture to achieve accuracy far beyond existing templates.

Key Insight: DiTSNe-Ia achieves five times lower reconstruction error than the standard SALT3 template model, and at post-peak phases it’s an order of magnitude more accurate, all without requiring a single photon from a spectrograph at prediction time.

How It Works

The core challenge is a translation problem. Light curves and spectra both measure the same underlying explosion, but they slice the data differently: one through time, one through wavelength. DiTSNe-Ia learns to translate from the time-domain signal to the wavelength-domain signal, conditioned on the phase of observation.

The architecture combines two ideas from modern deep learning. First, a variational diffusion model gradually removes noise from a random starting point to reconstruct data. Instead of predicting a single spectrum, it samples from a probability distribution over plausible spectra, producing calibrated uncertainty estimates alongside each prediction.

Second, the engine inside that diffusion process is a Diffusion Transformer (DiT). Transformers are well suited to sequences that aren’t regularly spaced, which is exactly the situation with astronomical data: observations arrive at irregular times and wavelengths.

The model receives three inputs simultaneously:

- Light curve observations: brightness measurements through multiple color filters, each sensitive to a different wavelength range, tagged with filter type, phase, and magnitude

- Observation phase: how many days before or after maximum light the spectrum is being predicted

- Diffusion timestep: the internal noise level in the generative process

Rather than concatenating these signals, DiTSNe-Ia uses cross-attention, a mechanism that lets the spectrum being generated look up relevant information from the light curve at each network layer. Positional information (wavelength, time) gets encoded with sinusoidal embeddings, a technique for representing irregular positions borrowed from the original transformer paper, before entering the network.

The model was trained and evaluated on realistic simulations of light traveling through exploding stellar material, covering a phase window from 10 days before peak brightness to 30 days after. The results speak for themselves: DiTSNe-Ia achieves a mean squared error of 0.108 across all phases, compared to SALT3’s 0.508. At post-peak phases the gap widens to nearly an order of magnitude: 0.0191 versus 0.305. The model also produces well-calibrated uncertainty intervals, meaning its stated confidence levels reflect real predictive accuracy.

Why It Matters

The immediate payoff is operational. When LSST alerts start flooding in, astronomers will need to decide within hours which events deserve scarce spectroscopic follow-up. DiTSNe-Ia can flag the most unusual candidates (underluminous 1991bg-like events, overluminous 1991T-likes) from light curve data alone. Miss these outliers, and they silently bias the distance measurements behind our best constraints on dark energy.

The deeper significance is methodological. SALT3, the current standard, is a manually crafted template trained to encode known spectral correlations. It works well for typical supernovae but breaks down precisely where you most need it: on the diverse, physically interesting outliers.

DiTSNe-Ia makes no such assumptions. It learns the full distribution of spectral behaviors from data, including behaviors that existing physical models struggle to explain. That flexibility makes it a blueprint for any astrophysical transient (gamma-ray bursts, tidal disruption events, kilonovae) where a complete physical theory doesn’t yet exist. As the authors note, the approach generalizes naturally to phenomena “for which a physical description does not yet exist,” making generative AI a viable spectral inference tool for the survey astronomy era.

Bottom Line: Modern generative AI can reconstruct detailed physical spectra from sparse photometric data with far greater accuracy than hand-crafted templates, opening the door to rapid, unbiased classification of millions of supernovae in the LSST era.

IAIFI Research Highlights

This work connects transformer-based generative AI, originally developed for language and image generation, with stellar astrophysics, applying diffusion models to a fundamental observational gap in transient astronomy.

DiTSNe-Ia shows a practical architecture for conditional generation over irregularly sampled scientific sequences, combining variational diffusion with cross-attention conditioning in a framework transferable to any domain with sparse observational coverage.

By enabling rapid spectral inference from light curves alone, this model tackles a real bottleneck in the cosmological distance ladder, with implications for precision measurements of dark energy and the physics of thermonuclear explosions.

Future work will extend DiTSNe-Ia to observed supernova spectra and other transient classes; the paper is available at [arXiv:2505.03063](https://arxiv.org/abs/2505.03063) and represents a collaboration between MIT and IAIFI.

Original Paper Details

Variational diffusion transformers for conditional sampling of supernovae spectra

[arXiv:2505.03063](https://arxiv.org/abs/2505.03063)

["Yunyi Shen", "Alexander T. Gagliano"]

Type Ia Supernovae (SNe Ia) have become the most precise distance indicators in astrophysics due to their incredible observational homogeneity. Increasing discovery rates, however, have revealed multiple sub-populations with spectroscopic properties that are both diverse and difficult to interpret using existing physical models. These peculiar events are hard to identify from sparsely sampled observations and can introduce systematics in cosmological analyses if not flagged early; they are also of broader importance for building a cohesive understanding of thermonuclear explosions. In this work, we introduce DiTSNe-Ia, a variational diffusion-based generative model conditioned on light curve observations and trained to reproduce the observed spectral diversity of SNe Ia. In experiments with realistic light curves and spectra from radiative transfer simulations, DiTSNe-Ia achieves significantly more accurate reconstructions than the widely used SALT3 templates across a broad range of observation phases (from 10 days before peak light to 30 days after it). DiTSNe-Ia yields a mean squared error of 0.108 across all phases-five times lower than SALT3's 0.508-and an after-peak error of just 0.0191, an order of magnitude smaller than SALT3's 0.305. Additionally, our model produces well-calibrated credible intervals with near-nominal coverage, particularly at post-peak phases. DiTSNe-Ia is a powerful tool for rapidly inferring the spectral properties of SNe Ia and other transient astrophysical phenomena for which a physical description does not yet exist.